Clear Sky Science · en

Intelligent recognition of embroidered purse patterns: comparing YOLO series and RT-DETR

Why old embroidered purses matter today

Across China, small embroidered purses once carried herbs, charms, and wishes for good fortune. Today many survive only in museum drawers and private collections. Each tiny stitched flower or dragon encodes stories about beliefs, fashion, and daily life. Yet digitizing and cataloging these richly decorated objects by hand is painfully slow. This study explores how modern artificial intelligence can automatically recognize the patterns on these purses, helping museums and communities preserve an important strand of intangible cultural heritage in the digital age.

From hand and eye to smart recognition

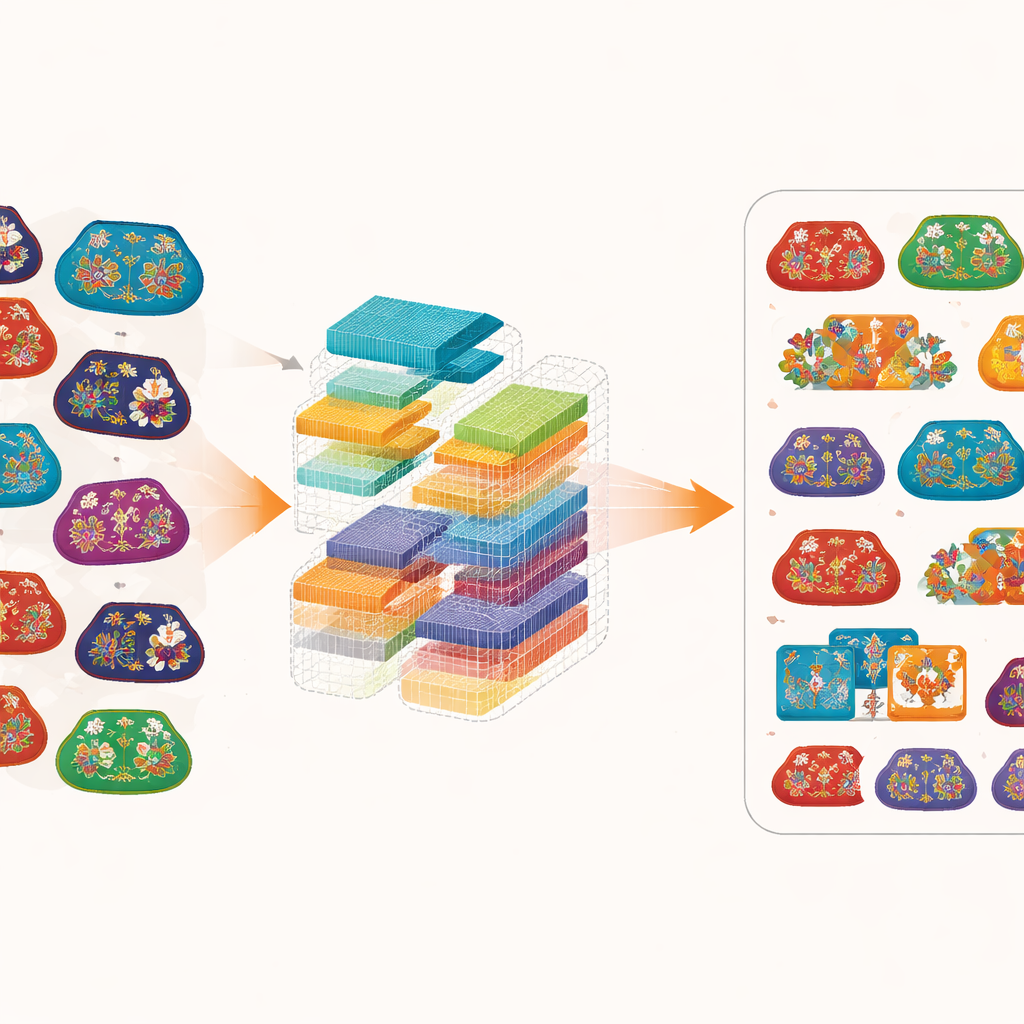

Traditionally, experts identified purse designs by closely inspecting photographs and consulting reference books. That approach does not scale to tens of thousands of items scattered across archives. The researchers instead assembled a specialized image collection of 783 embroidered purses drawn from books and a major museum’s digital archive. They defined eight common motif categories (plants and flowers, birds and beasts, insects and aquatic life, landscapes and buildings, symbols and characters, figures and stories, artifacts and antiques, and geometric patterns) and then drew a labeled bounding box around each pattern in every image. To compensate for the small size of the dataset, they digitally flipped, rotated, brightened, darkened, and blurred the images, expanding the training material more than fourfold, and they checked the labels with both software and cultural heritage experts.
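The augmentation step can be pictured with a small sketch. It is not the authors' pipeline: the function names, the tiny grayscale grid standing in for a photograph, and the brightness factors are all assumptions chosen for illustration, and blurring is left out for brevity. The point it shows is that geometric changes (flips, rotations) must move the bounding boxes along with the pixels, while brightness changes leave the boxes untouched.

```python
# Illustrative sketch only (hypothetical helper names, not the paper's code).
# An image is a list of rows of grayscale values 0..255.
# A box is (x_min, y_min, x_max, y_max) in pixel coordinates.

def hflip_image(image):
    """Mirror the image left to right."""
    return [list(reversed(row)) for row in image]

def hflip_box(box, width):
    """Mirror a box left to right inside an image of the given width."""
    x_min, y_min, x_max, y_max = box
    return (width - x_max, y_min, width - x_min, y_max)

def rotate90_image(image):
    """Rotate the image 90 degrees clockwise."""
    return [list(row) for row in zip(*image[::-1])]

def rotate90_box(box, height):
    """Rotate a box 90 degrees clockwise; height is the ORIGINAL image height."""
    x_min, y_min, x_max, y_max = box
    return (height - y_max, x_min, height - y_min, x_max)

def adjust_brightness(image, factor):
    """Scale pixel values and clamp to 0..255; boxes do not change."""
    return [[max(0, min(255, round(p * factor))) for p in row] for row in image]

def augment(image, boxes):
    """Return the original plus four augmented (image, boxes) pairs."""
    height, width = len(image), len(image[0])
    return [
        (image, boxes),
        (hflip_image(image), [hflip_box(b, width) for b in boxes]),
        (rotate90_image(image), [rotate90_box(b, height) for b in boxes]),
        (adjust_brightness(image, 1.5), boxes),   # brighter (assumed factor)
        (adjust_brightness(image, 0.5), boxes),   # darker (assumed factor)
    ]
```

In practice an image library would handle the pixel operations; the box bookkeeping shown here is the part that is easy to get wrong, which is one reason label checks after augmentation are worthwhile.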

Putting popular AI tools to the test

With this curated dataset in hand, the team compared two families of object-detection systems. One family, known as YOLO, is widely used for speed-critical tasks such as spotting pedestrians or cars in video. These models process the image in a single pass and rely heavily on local patches. The other, a newer design called RT-DETR, combines convolutional image filters with transformer-style attention, which can connect tiny stitches to the overall scene. The authors first tuned several YOLO variants and chose YOLOv5m as a strong baseline. That baseline performed reasonably well on some categories, especially complex narrative scenes grouped under “Figures and Stories,” but struggled when motifs were small, heavily overlapped, or faded into the background. In such cases, flowers could go undetected, geometric borders were misread, and portions of the image were incorrectly labeled as empty background.

How a hybrid transformer sees the stitches

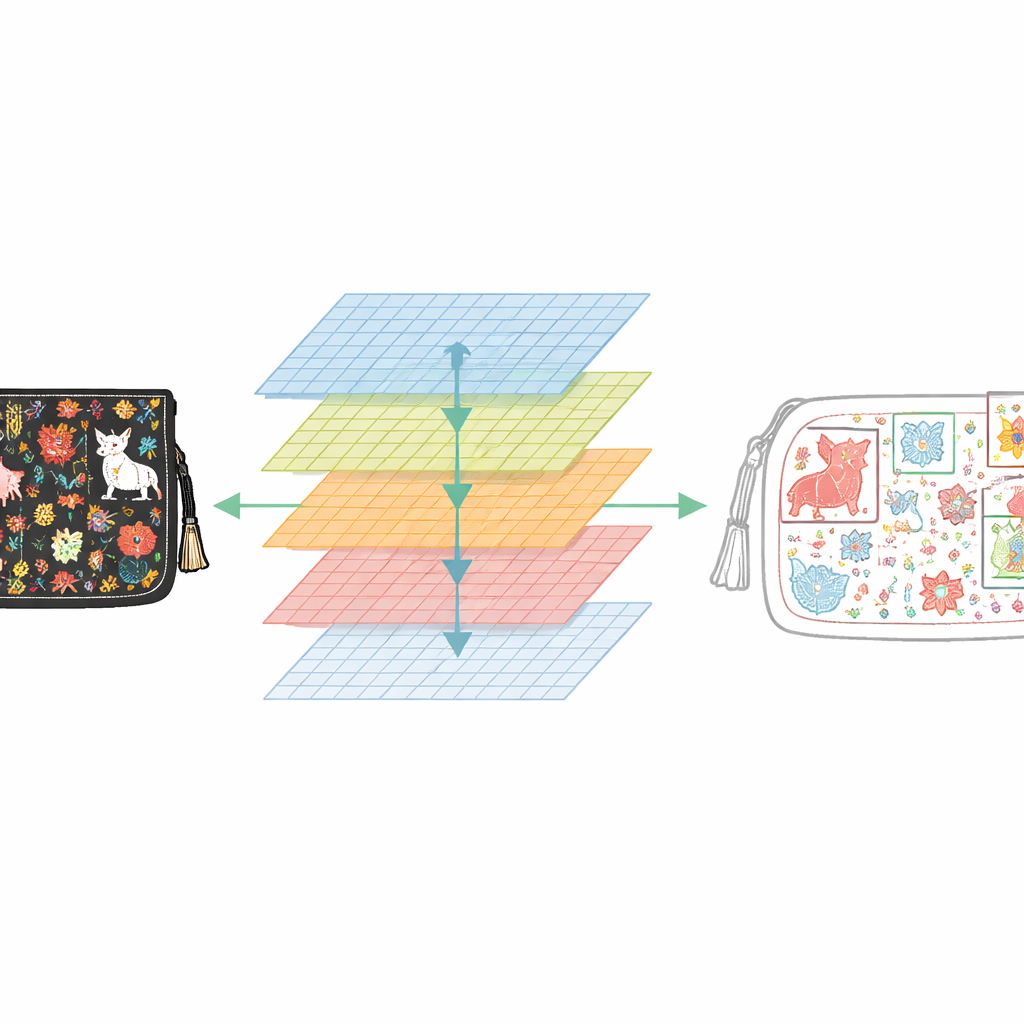

The researchers then focused on upgrading RT-DETR for this unusual visual challenge. They replaced its standard backbone with ConvNeXt-Large, a modern convolutional network designed to capture fine textures while still seeing the bigger picture. They also adopted a loss function called Focal Loss, which down-weights easy examples during training so that the model concentrates on difficult, easily confused ones instead of coasting on the rest. Inside RT-DETR, features from the purse image are extracted at several scales and fused, while an attention mechanism links distant yet related regions, such as matching pairs of animals or repeating borders. Through careful ablation studies and step‑by‑step tuning of learning schedules and regularization, the authors arrived at an optimized configuration that balances accuracy and stability over many training runs.
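To make the idea behind Focal Loss concrete, here is a minimal sketch of its standard binary form, FL(p_t) = -alpha_t * (1 - p_t)^gamma * log(p_t). The default values gamma = 2 and alpha = 0.25 are the commonly cited defaults from the original Focal Loss paper and are an assumption here; this summary does not state which settings the authors used, and a detector would apply the loss over many predictions at once rather than one at a time.

```python
import math

def cross_entropy(p, y):
    """Ordinary cross-entropy for one prediction.

    p: predicted probability of the positive class (0 < p < 1)
    y: true label, 1 or 0
    """
    p_t = p if y == 1 else 1.0 - p   # probability assigned to the true class
    return -math.log(p_t)

def focal_loss(p, y, gamma=2.0, alpha=0.25):
    """Binary focal loss for one prediction (gamma and alpha are assumed defaults)."""
    p_t = p if y == 1 else 1.0 - p
    alpha_t = alpha if y == 1 else 1.0 - alpha   # class-balancing weight
    # (1 - p_t) ** gamma shrinks the loss when the model is already confident.
    return -alpha_t * (1.0 - p_t) ** gamma * math.log(p_t)

# An easy example (true class predicted at 0.9) versus a hard one (0.1).
easy = focal_loss(0.9, 1)
hard = focal_loss(0.1, 1)
```

With gamma set to 0 the modulating factor disappears and the expression reduces to weighted cross-entropy; raising gamma widens the gap between the loss on hard and easy examples, which is the "pay attention to the difficult cases" effect described above.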

What the improved system actually achieves

Measured on standard object‑detection scores, the enhanced RT-DETR clearly surpassed the YOLO models. Its main accuracy metric, mAP@0.5, reached 0.5433, about a 33% improvement over the YOLOv5m baseline, with statistical tests indicating that this gain is unlikely to be due to chance. The system did especially well on intricate narrative scenes, achieving an average precision of 0.833 for “Figures and Stories,” and recovered many motifs that YOLO missed, particularly in sparse or underrepresented categories such as landscapes and geometric borders. It also proved more consistent across repeated experiments, indicating reliable behavior rather than fragile overfitting to a single train–test split. The trade‑off is size: the best RT-DETR model is much larger and heavier than its YOLO counterparts, which could limit deployment on lightweight devices.
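For readers unfamiliar with the metric, the "0.5" in mAP@0.5 refers to an overlap threshold: a predicted box counts as correct only if it carries the right label and its intersection over union (IoU) with a hand-drawn box is at least 0.5. Average precision is then computed per category and averaged across categories. The sketch below shows only the overlap test; the function names and the example boxes are illustrative assumptions, not taken from the study.

```python
def iou(box_a, box_b):
    """Intersection over union of two boxes given as (x_min, y_min, x_max, y_max)."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b
    # Width and height of the overlapping region (zero if the boxes are disjoint).
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    return inter / union if union > 0 else 0.0

def is_true_positive(pred_box, pred_label, gt_box, gt_label, threshold=0.5):
    """A detection counts as correct if the label matches and IoU >= threshold."""
    return pred_label == gt_label and iou(pred_box, gt_box) >= threshold

# Hypothetical example: a predicted flower box that covers most of the labeled one.
match = is_true_positive((0, 0, 10, 10), "plants and flowers",
                         (0, 0, 10, 8), "plants and flowers")
```

Small, crowded motifs make this test harder to pass, because a shift of a few pixels removes a large share of the overlap, which helps explain why dense embroidery is a demanding benchmark.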

What this means for cultural heritage

For non‑specialists, the key message is that computers are learning not just to find cars and faces, but to read the language of traditional craft. By showing that a transformer‑based detector, carefully adapted and trained, can pick out dense, overlapping embroidery motifs more accurately than popular real‑time models, this work establishes a benchmark for future tools. Museums and cultural institutions could eventually use such systems to search vast photo collections by motif, track how certain symbols evolved, or help artisans revive old designs. The authors emphasize that performance is still moderate and further refinements – including lighter models and the addition of cultural knowledge and text descriptions – are needed before large‑scale deployment. Even so, the study marks a significant step toward intelligent, respectful digital stewardship of embroidered purse heritage.

Citation: Yang, H., Sui, Q., Xie, H. et al. Intelligent recognition of embroidered purse patterns: comparing YOLO series and RT-DETR. npj Herit. Sci. 14, 251 (2026). https://doi.org/10.1038/s40494-026-02518-3

Keywords: embroidery pattern recognition, intangible cultural heritage, object detection, transformer-based vision, digital preservation