
Human–computer collaborative approach to the decipherment of oracle bone inscriptions with generative adversarial networks

Ancient Writing Meets Modern Machines

More than three thousand years ago, diviners in China carved questions about war, weather, and harvests onto turtle shells and animal bones. Today, these oracle bone inscriptions are a treasure trove for understanding how Chinese writing and early Chinese society took shape. But many of these tiny, weathered symbols remain undeciphered and are too numerous for a handful of human experts to tackle alone. This paper shows how artificial intelligence, working hand-in-hand with scholars, can help read these ancient scratches by turning them into modern-style Chinese characters.

From Pictures on Bone to Images for a Computer

Oracle bone script is unusually visual. Early scribes carved simplified pictures of tigers, suns, people, and tools directly into bone, so each character is almost like a tiny drawing. The authors take advantage of this by treating each character as an image rather than as a line of code or text. They collect over a thousand pairs of images: on one side a photographed oracle bone glyph, on the other its known modern Chinese counterpart. By resizing and cleaning these images, they build a standardized picture library that a computer can learn from, turning the age‑old problem of decipherment into a modern image‑to‑image translation task.
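To make the "resizing and cleaning" step concrete, here is a minimal sketch of how one glyph image might be standardized. The article does not say which steps or sizes the authors used, so the function name `standardize_glyph`, the 64-pixel output size, and the brightness threshold of 128 are all illustrative assumptions.

```python
import numpy as np

def standardize_glyph(gray, size=64, threshold=128):
    """Binarize a grayscale glyph, crop to its ink, pad to a square, resize.

    Hypothetical helper: the steps, size, and threshold are assumptions,
    not the authors' documented pipeline.
    """
    gray = np.asarray(gray)
    ink = gray < threshold                      # True where strokes are dark
    if not ink.any():                           # blank image: nothing to crop
        return np.zeros((size, size), dtype=np.uint8)

    # Crop to the bounding box of the strokes.
    rows = np.flatnonzero(ink.any(axis=1))
    cols = np.flatnonzero(ink.any(axis=0))
    crop = ink[rows[0]:rows[-1] + 1, cols[0]:cols[-1] + 1]

    # Centre the crop on a square canvas so the aspect ratio is preserved.
    h, w = crop.shape
    side = max(h, w)
    square = np.zeros((side, side), dtype=bool)
    top, left = (side - h) // 2, (side - w) // 2
    square[top:top + h, left:left + w] = crop

    # Nearest-neighbour resize by sampling source indices.
    idx = np.arange(size) * side // size
    return square[np.ix_(idx, idx)].astype(np.uint8)
```

Running the same function over both the oracle bone image and its modern counterpart would give pairs of equal size and contrast, which is the property a paired image-to-image model needs.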

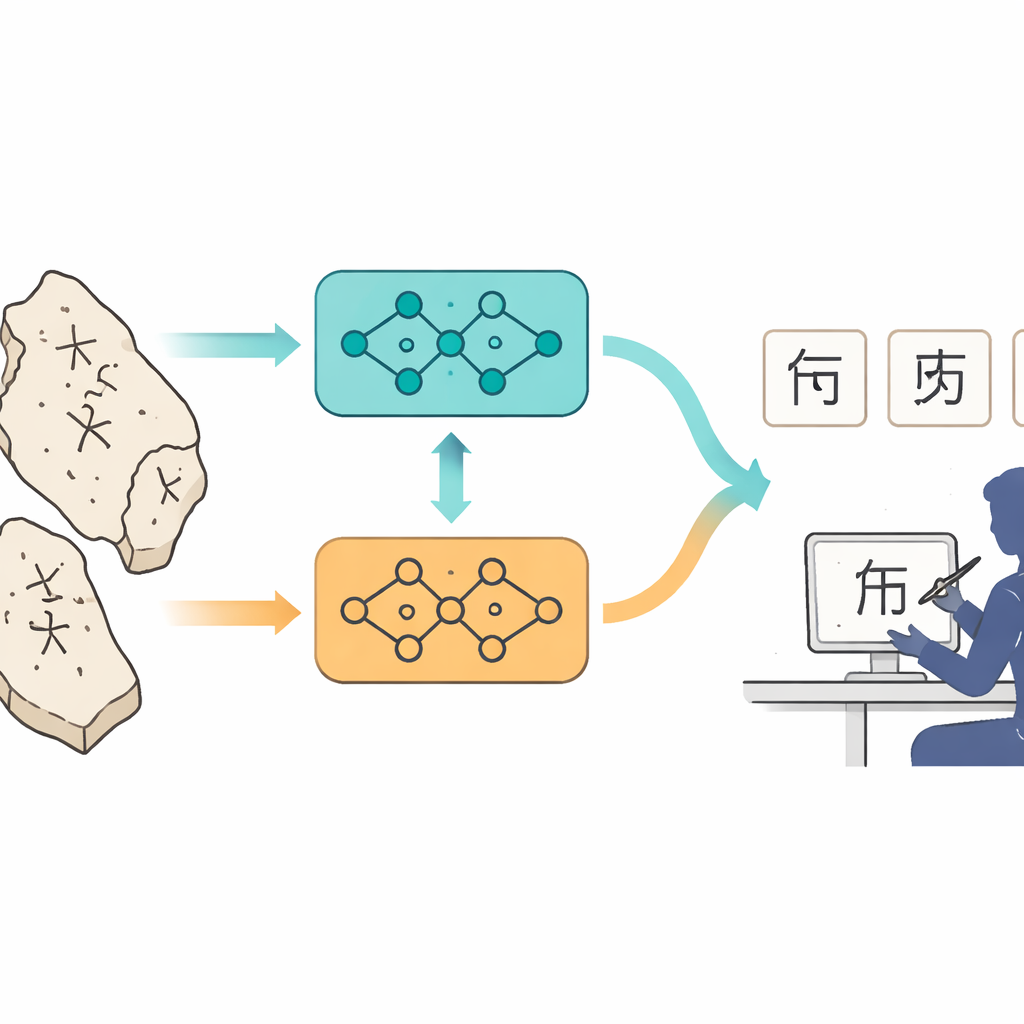

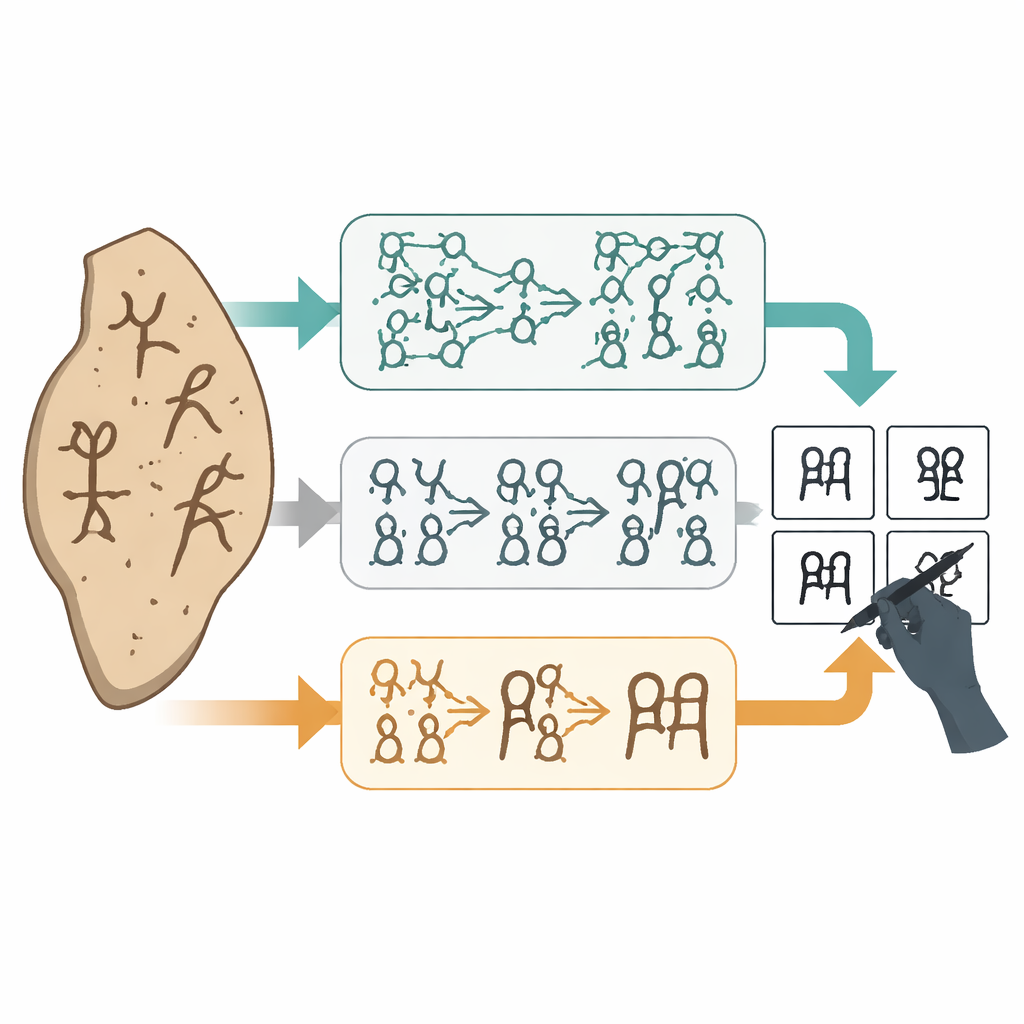

Two Digital Artists: Complementary AI Models

The team trains two related but different kinds of generative adversarial networks (GANs), which are AI models that learn to create realistic images by pitting a "generator" against a "critic." One model, based on a system called Pix2Pix, learns from paired examples: it sees a bone character and the exact modern character it should become. This model excels at producing crisp, well-formed modern-style glyphs that closely match the expected shapes. The second model, based on CycleGAN, is designed to work even when examples are not perfectly matched. It tends to preserve the original skeleton and style of the oracle script while gently regularizing it toward modern forms. Together, the two models offer different "takes" on what an old, damaged glyph might have looked like in a more regular, readable style.
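The difference between the two models shows up in what each one is penalized for. The sketch below follows the losses from the original Pix2Pix and CycleGAN designs, not necessarily the exact variants the authors trained; the function names are illustrative, and the weight `lam=100.0` is the default from the original Pix2Pix work rather than a value reported in this article.

```python
import numpy as np

def pix2pix_generator_loss(disc_prob_fake, generated, target, lam=100.0):
    """Adversarial term plus a weighted L1 distance to the paired target.

    disc_prob_fake: the critic's probability that each generated image is real.
    generated, target: arrays of the same shape, pixel values in [0, 1].
    """
    eps = 1e-12                                   # avoid log(0)
    adversarial = -np.mean(np.log(np.clip(disc_prob_fake, eps, 1.0)))
    l1 = np.mean(np.abs(generated - target))      # needs a paired example
    return adversarial + lam * l1

def cycle_consistency_loss(original, reconstructed):
    """L1 distance between an image and its round trip (oracle -> modern -> oracle).

    No paired target is needed, which is why this style of model tends to
    keep the original skeleton of the glyph.
    """
    return np.mean(np.abs(original - reconstructed))
```

The L1 term pulls the paired model toward the exact modern shape, while the cycle term only asks that the translation be reversible, which is consistent with the more conservative outputs described above.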

Testing the Machines and Comparing Alternatives

To check whether these systems are doing more than just drawing pretty pictures, the authors run a careful set of tests. They hold back 160 known oracle–modern pairs that the AI never sees during training, then ask each model to predict the modern characters from the oracle images. They compare the outputs to the true answers using standard measures of image quality and structure, and they also inspect the results visually. In a broader comparison, they pit their two GANs against other popular image methods, including diffusion models, transformers, and classic U‑Net style networks. The GAN pair stands out: Pix2Pix produces the clearest, most legible characters, while CycleGAN provides conservative, structure‑preserving alternatives. Other methods often blur strokes, break the character skeleton, or generate shapes that no longer resemble writing at all, making them poor tools for serious decipherment.
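The article does not name the "standard measures of image quality and structure." Two common choices for this kind of comparison are peak signal-to-noise ratio (PSNR) and the structural similarity index (SSIM), so the sketch below assumes those. The SSIM here is a simplified single-window version computed over the whole image; standard implementations average it over sliding local windows.

```python
import numpy as np

def psnr(pred, truth, max_val=255.0):
    """Peak signal-to-noise ratio in decibels; infinite for identical images."""
    pred = np.asarray(pred, dtype=np.float64)
    truth = np.asarray(truth, dtype=np.float64)
    mse = np.mean((pred - truth) ** 2)
    if mse == 0:
        return float("inf")
    return 10.0 * np.log10(max_val ** 2 / mse)

def global_ssim(pred, truth, max_val=255.0):
    """Single-window SSIM over the whole image (a simplification)."""
    x = np.asarray(pred, dtype=np.float64)
    y = np.asarray(truth, dtype=np.float64)
    c1, c2 = (0.01 * max_val) ** 2, (0.03 * max_val) ** 2   # stabilizers
    mx, my = x.mean(), y.mean()
    vx = np.mean((x - mx) ** 2)
    vy = np.mean((y - my) ** 2)
    cov = np.mean((x - mx) * (y - my))
    return ((2 * mx * my + c1) * (2 * cov + c2)) / (
        (mx ** 2 + my ** 2 + c1) * (vx + vy + c2)
    )
```

Scoring each held-out prediction against its true modern character with functions like these, then averaging over the test set, gives one number per model that can be compared across methods.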

Humans in the Loop: Collaboration, Not Replacement

The heart of the study is not AI alone but a structured partnership between machines and experts. For 150 inscriptions whose meanings were previously unknown, the models generate multiple candidate modern glyphs. Human specialists then select promising candidates and redraw missing or uncertain strokes by hand, guided both by the AI’s suggestions and by their knowledge of language history and the everyday life scenes reflected in the texts. In detailed case studies—such as proposing that one unknown glyph means "cave" or "den"—the authors combine AI‑suggested shapes, comparisons with related characters, and historical information about ancient housing and rituals. They also conduct controlled experiments on 160 known cases, comparing three modes: AI alone, humans drawing from scratch, and humans refining AI drafts. The combined approach consistently produces images that are closer to the true characters, judged both by numerical image scores and by expert panels, while also saving time and effort for the scholars.

Why This Matters for History and Heritage

For non‑specialists, the payoff is clear: each newly deciphered oracle bone character sharpens our picture of early Chinese history, from royal sacrifices to farming and disease. This work shows that AI can do more than recognize modern handwriting or generate art; it can help unlock the earliest layers of written culture when paired with careful human judgment. Rather than replacing experts, the system acts as an intelligent assistant that proposes shapes, speeds up tedious redrawing, and makes the whole process more transparent and measurable. The authors argue that this human–computer partnership could extend to other ancient scripts and damaged documents, turning fragile marks on bone, clay, or parchment into readable stories about how people once lived, believed, and recorded their world.

Citation: Zeng, S., Bai, J., Shi, J. et al. Human–computer collaborative approach to the decipherment of oracle bone inscriptions with generative adversarial networks. npj Herit. Sci. 14, 232 (2026). https://doi.org/10.1038/s40494-026-02509-4

Keywords: oracle bone script, ancient writing, cultural heritage AI, human–AI collaboration, Chinese character evolution