Clear Sky Science

Generative AI technologies and educational outcomes: a comprehensive meta-analysis comparing traditional and AI-driven approaches

Why this matters for students and teachers

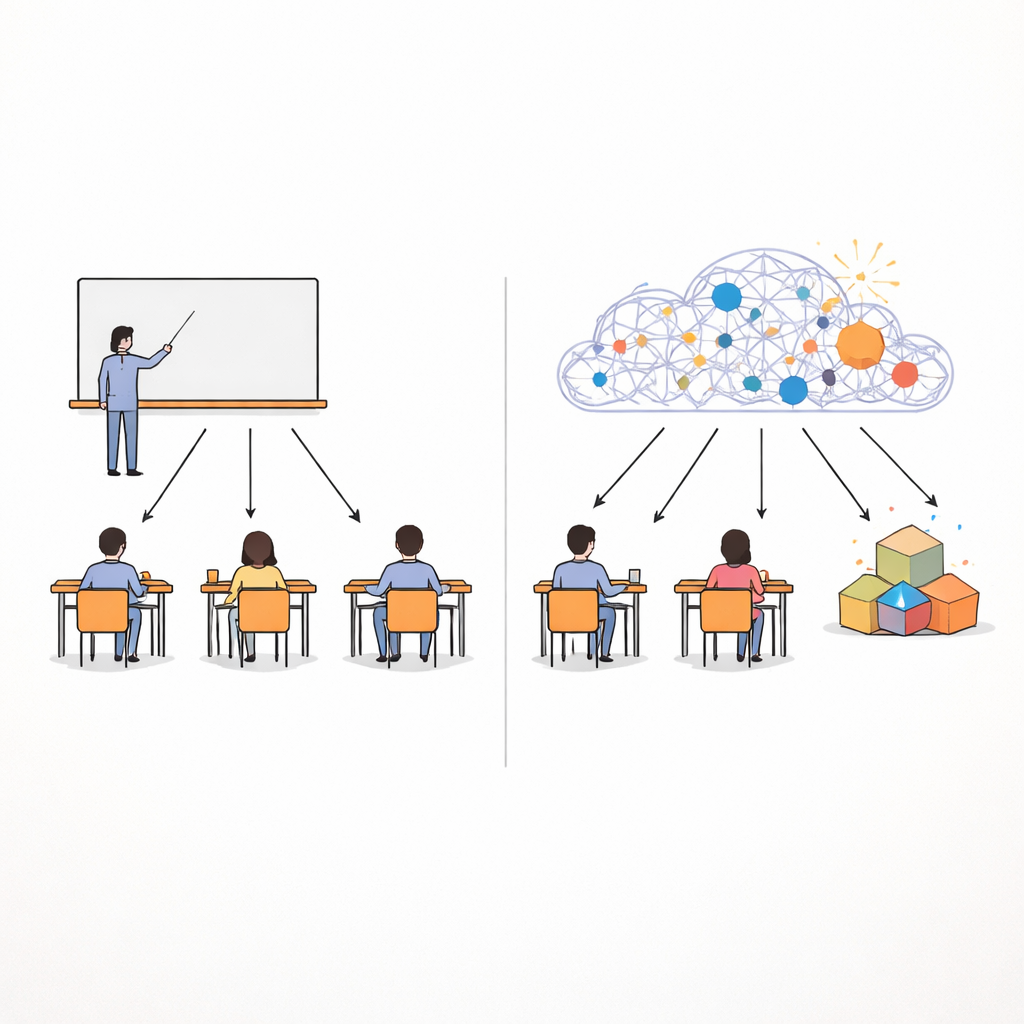

Generative artificial intelligence is rapidly finding its way into classrooms, homework tools, and learning platforms. Parents, teachers, and students alike are asking whether this new wave of “smart helpers” actually improves learning or instead makes students more passive and dependent. The study summarized here pulls together results from 53 studies around the world to give a clear, big-picture answer. It examines how generative AI affects test scores, critical thinking, and writing skills, and compares these outcomes with those of more traditional, non‑AI teaching methods.

Bringing many studies into one clear picture

Instead of focusing on a single classroom or app, the author combines 53 separate studies using a statistical approach known as meta-analysis. These studies span university and secondary school settings in several countries and cover tools ranging from chatbots and AI tutors to AI‑supported games. The author sifted through more than a thousand papers from major academic databases, applied strict quality checks, and kept only studies with solid designs and comparable data. This unified lens makes it possible to move beyond scattered success stories or cautionary tales and to estimate the overall impact of generative AI on education.

What generative AI seems to do best

The combined results suggest that generative AI, when used as a learning aid rather than a replacement for teachers, tends to outperform traditional approaches on several fronts. Students using these tools generally earn higher grades, show stronger higher‑order thinking (such as problem‑solving and critical analysis), and produce better writing, especially in organization, accuracy, and originality. The gains in advanced thinking skills and writing quality are particularly notable, with effect sizes in the moderate‑to‑large range. AI appears most effective when it provides tailored practice materials, explains complex ideas in different ways, and supplies examples that students can study and adapt, all of which support active rather than rote learning.

Feedback from machines: a quiet powerhouse

One of the most striking findings concerns feedback. When students receive AI‑generated comments on their work, such as suggestions on how to revise an essay or fix a programming solution, their learning outcomes improve more than when they receive only traditional feedback. Across studies, AI feedback shows a large effect, likely because it is immediate, detailed, and can be customized to each learner’s needs. It helps students spot patterns in their mistakes, reflect on their reasoning, and try again quickly. At the same time, some students report trusting human feedback more, especially for emotionally charged or highly personal tasks, a reminder that AI feedback works best when combined with human guidance rather than used alone.

Games, countries, and school levels: where results differ

The picture is more mixed when games enter the scene. Adding game elements to AI tools does not, on average, produce better learning than using AI tools without games. Well‑designed educational games can boost engagement and effort, but purely entertainment‑driven designs can distract students from the underlying concepts. Age and self‑discipline also matter: younger learners appear more likely to get lost in the game instead of the lesson.

The study also uncovers striking regional differences. In China and Pakistan, where high‑quality teaching is unevenly distributed, generative AI tends to raise educational outcomes by delivering rich resources and personalized support to more students. In contrast, studies from Korea and Turkey do not find clear benefits, possibly because long‑standing traditions of teacher‑centered instruction and existing curriculum structures do not mesh smoothly with AI‑driven approaches.

Generative AI shows positive effects at both university and secondary school levels, although university students’ greater digital skills may help them make better use of these tools.

What this means for the classroom of tomorrow

Overall, the article concludes that generative AI can be a strong ally for learning if it is treated as a “cognitive aid” that supports human thinking rather than replacing it. Used wisely, it can lift grades, deepen reasoning, and sharpen writing, with AI‑powered feedback standing out as especially helpful. Yet its success depends on thoughtful design: games must align with learning goals, feedback must be paired with human care and judgment, and classroom practices must fit local cultures and resources. The author calls for future work on how generative AI can support learners with disabilities and on its long‑term effects on motivation and independent thinking. For families, teachers, and policymakers, the message is neither hype nor doom: generative AI is not a magic tutor, but in the right hands it can become a powerful tool for more equitable and effective education.

Citation: Dong, Y. Generative AI technologies and educational outcomes: a comprehensive meta-analysis comparing traditional and AI-driven approaches. Humanit Soc Sci Commun 13, 559 (2026). https://doi.org/10.1057/s41599-026-06903-y

Keywords: generative AI in education, AI feedback, student learning outcomes, educational technology, higher-order thinking