Clear Sky Science

Exploring AI’s performance in literary autobiography translation: how closely do AI models match human translation

Why this matters to everyday readers

Most of us now rely on online translation tools, and some even use AI to read novels or memoirs written in other languages. But can these systems really capture the emotion, rhythm, and cultural depth of a life story? This study digs into how three popular AI systems and professional human translators handle a celebrated Chinese literary autobiography, revealing where machines shine, where they stumble, and what that means for readers who encounter world literature through a screen.

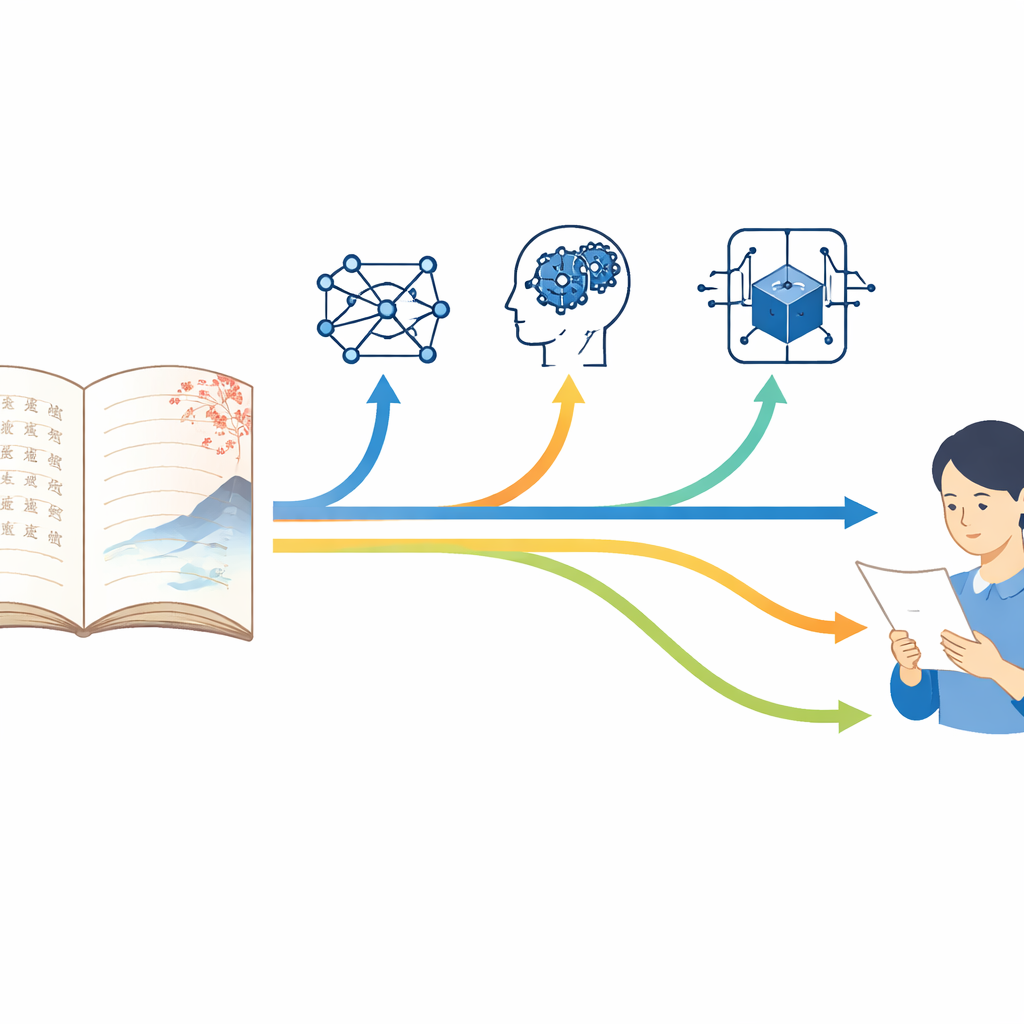

Stories crossing languages

The researchers focus on The Great Flowing River, a widely praised Chinese autobiography that blends personal memory with the turbulent history of wartime China and postwar Taiwan. Its English version was produced over several years by a team of expert translators who worked closely with the author to preserve both factual accuracy and a quiet, emotionally restrained style. This careful human translation serves as the benchmark. Against it, the authors compare the output of three AI systems: Google Translate’s neural machine translation system, a general-purpose large language model (ChatGPT-4o), and a newer reasoning-focused model (OpenAI-o1). All three were asked to translate the same chapters from Chinese into English using everyday default settings, much as a typical user would.

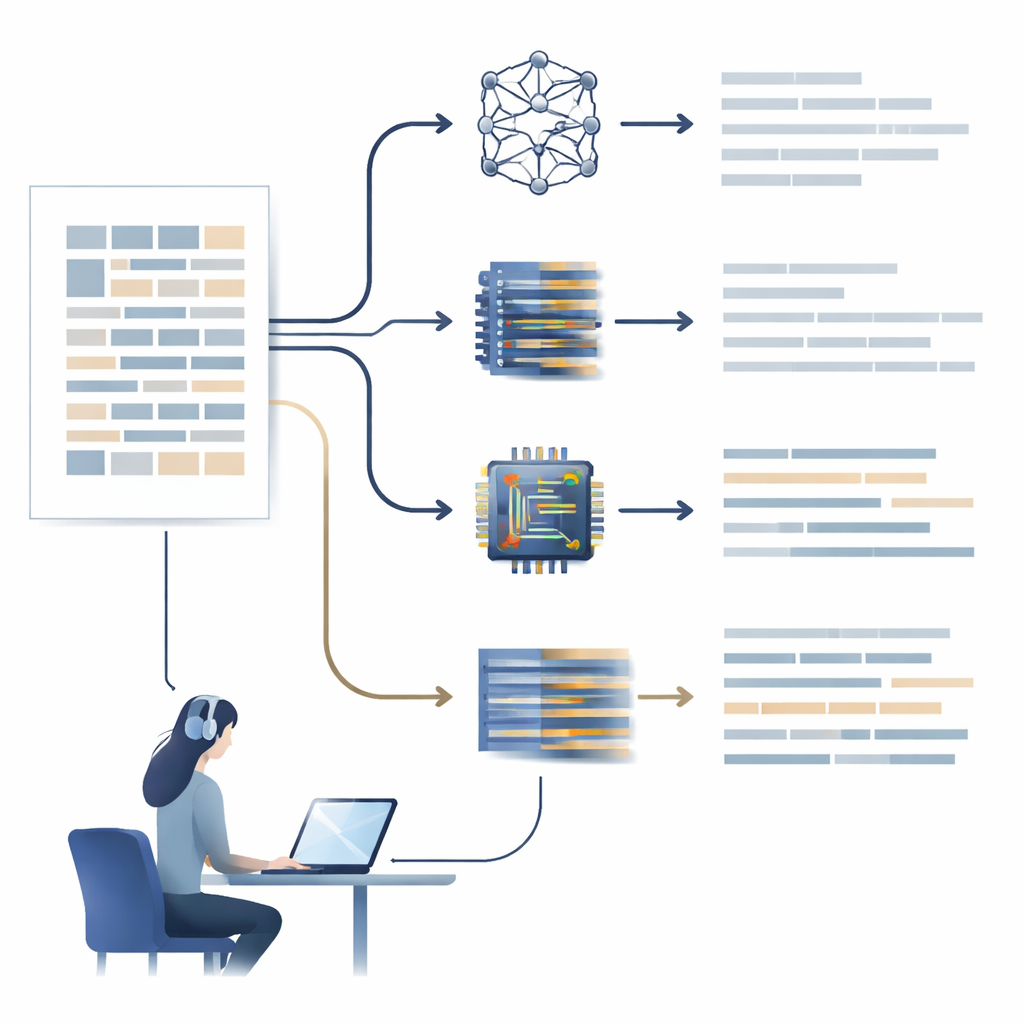

Peeking under the language hood

To move beyond gut feelings about “good” or “bad” translations, the study uses a tool called Coh-Metrix, which measures over a hundred features of English texts. These range from simple counts—like how many verbs or adjectives appear—to subtler properties such as how tightly sentences are linked, how concrete the wording is, and how easy a passage is to follow. The authors group these measures into six broad areas: word choice, sentence structure, explicit links between ideas, deeper conceptual connections, surface features like sentence length, and overall readability. By comparing scores across these dimensions, they can show, in quantitative terms, how closely each AI’s style and structure resemble the human translation.
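Coh-Metrix’s own indices and the authors’ statistical comparisons are not reproduced here. As a rough, hypothetical illustration of the underlying idea (turn each translation into a profile of numbers, then measure how far a machine profile sits from the human one), the Python sketch below computes three simplified measures: average sentence length, lexical diversity, and the rate of first-person plural pronouns. The feature names, the per-100-words scaling, the naive sentence splitter, and the distance function are all assumptions made for this example, not the study’s method.

```python
import re

# Simplified stand-ins for a few Coh-Metrix-style measures.
# Hypothetical formulas for illustration only; not Coh-Metrix's own indices.
FIRST_PERSON_PLURAL = {"we", "us", "our", "ours", "ourselves"}


def words(text: str) -> list[str]:
    """Lowercased word tokens made of letters and apostrophes."""
    return re.findall(r"[a-z']+", text.lower())


def sentences(text: str) -> list[str]:
    """Naive split on ., ! or ? followed by whitespace or the end of the text."""
    return [s for s in re.split(r"[.!?]+(?:\s+|$)", text.strip()) if s]


def profile(text: str) -> dict[str, float]:
    """Feature profile for one (non-empty) translation."""
    toks = words(text)
    n = len(toks)
    return {
        # Surface feature: average number of words per sentence.
        "mean_sentence_len": n / len(sentences(text)),
        # Word choice: distinct words divided by total words.
        "type_token_ratio": len(set(toks)) / n,
        # Word choice: first-person plural pronouns per 100 words.
        "we_per_100": 100 * sum(t in FIRST_PERSON_PLURAL for t in toks) / n,
    }


def distance(candidate: dict[str, float], reference: dict[str, float]) -> float:
    """Mean absolute relative difference from the reference profile (0 means identical)."""
    diffs = [
        abs(candidate[k] - reference[k]) / max(abs(reference[k]), 1e-9)
        for k in reference
    ]
    return sum(diffs) / len(diffs)


# Invented toy passages, not taken from the book or from any system's output.
human = "We left the city at dawn. Our family walked south together."
machine = "They left the city early in the morning. The family walked south."

human_profile = profile(human)
machine_profile = profile(machine)
print(human_profile)
print(machine_profile)
print(round(distance(machine_profile, human_profile), 3))
```

In this toy example the machine passage drops “we” and “our” entirely, so the pronoun feature dominates the distance, loosely echoing the article’s point that fewer first-person plural pronouns weaken the sense of shared experience. Coh-Metrix itself computes far more indices with more careful linguistic processing.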

How the different AIs behave

The three AI systems turn out to have distinct “personalities.” Google Translate tends to use more common vocabulary and relatively simple sentences, which makes its output easy to read but less rich and less tied to the narrator’s personal voice. It also uses fewer first-person plural pronouns such as “we” and fewer vivid verbs than the human translation, weakening the sense of shared experience that is central to autobiography. The two large language models, by contrast, favor more adjectives and adverbs and draw on a broader range of vocabulary. Their wording can feel more elaborate and dynamic, sometimes adding descriptive touches that the human translators did not emphasize. This can improve clarity in places, but it also risks disrupting the original’s understated tone, especially in passages where the book’s power comes from restraint rather than flourish.

Depth, coherence, and emotional undercurrents

When it comes to how ideas connect across sentences and paragraphs, none of the AI systems fully matches the human translation. The human version makes consistent use of repeated nouns, carefully chosen linking words, and clear cause-and-effect cues that help readers follow complex events and emotional shifts. The AI outputs rely less on such explicit signposts. At the same time, they sometimes overemphasize action and causality, using many causal and intentional verbs that make situations more straightforward but also more literal than in the original. The reasoning-oriented model, OpenAI-o1, is particularly prone to inferring extra details, such as spelling out a political leader’s full name or turning a “change in circumstances” into a “crisis.” These guesses can make the narrative feel more direct, but they also pull it away from what the author actually wrote.

Which AI feels most human-like

Across the many measurements, ChatGPT-4o comes closest to the human translators’ profile. It generally offers richer vocabulary and more context-aware phrasing than Google Translate, while avoiding some of the bolder interpretive leaps made by OpenAI-o1. Google Translate, while less nuanced, often remains more faithful to the surface wording and produces very readable text, particularly for non-specialist audiences. OpenAI-o1, despite being designed to “think harder,” aligns least well with the human translation overall. Its strengths in reasoning lead it to reframe or expand certain expressions in ways that may be stylistically off or culturally inaccurate for this kind of literary writing.

What this means for readers and translators

For a lay reader, the takeaway is that today’s AI can already produce translations of literary autobiography that are smooth and sometimes strikingly effective, but these translations still fall short of human experts in preserving voice, subtle emotion, and cultural nuance. Among the systems tested, ChatGPT-4o currently offers the closest approximation to professional work, with Google Translate not far behind in practical readability. The reasoning-focused model trails in this specific task. Human translators, however, remain crucial: their ability to weigh history, culture, and style allows them to build coherent, emotionally layered narratives that machines only partially replicate. Even as AI tools continue to improve, the study suggests they are best seen not as replacements for literary translators but as powerful aids that still need human judgment to carry life stories fully across languages.

Citation: Huang, Y., Cheung, A.K.F. Exploring AI’s performance in literary autobiography translation: how closely do AI models match human translation. Humanit Soc Sci Commun 13, 518 (2026). https://doi.org/10.1057/s41599-026-06630-4

Keywords: literary translation, machine translation, large language models, Chinese autobiography, AI vs human translators