Clear Sky Science

AI model predicts patient outcomes from surgical gestures and provides insights into explainability

Why Surgical Movements Matter

For men facing prostate cancer surgery, one of the biggest worries is whether they will regain normal sexual function afterward, and they often must wait a year or more to find out. This study explores how artificial intelligence (AI) can analyze how a prostate operation is performed, down to the surgeon’s individual hand movements, and use that information to forecast which patients are most likely to recover erectile function. By turning video of surgery into data, the researchers aim to give surgeons faster, clearer feedback so they can refine their techniques and improve patients’ quality of life.

Breaking Surgery Into Tiny Building Blocks

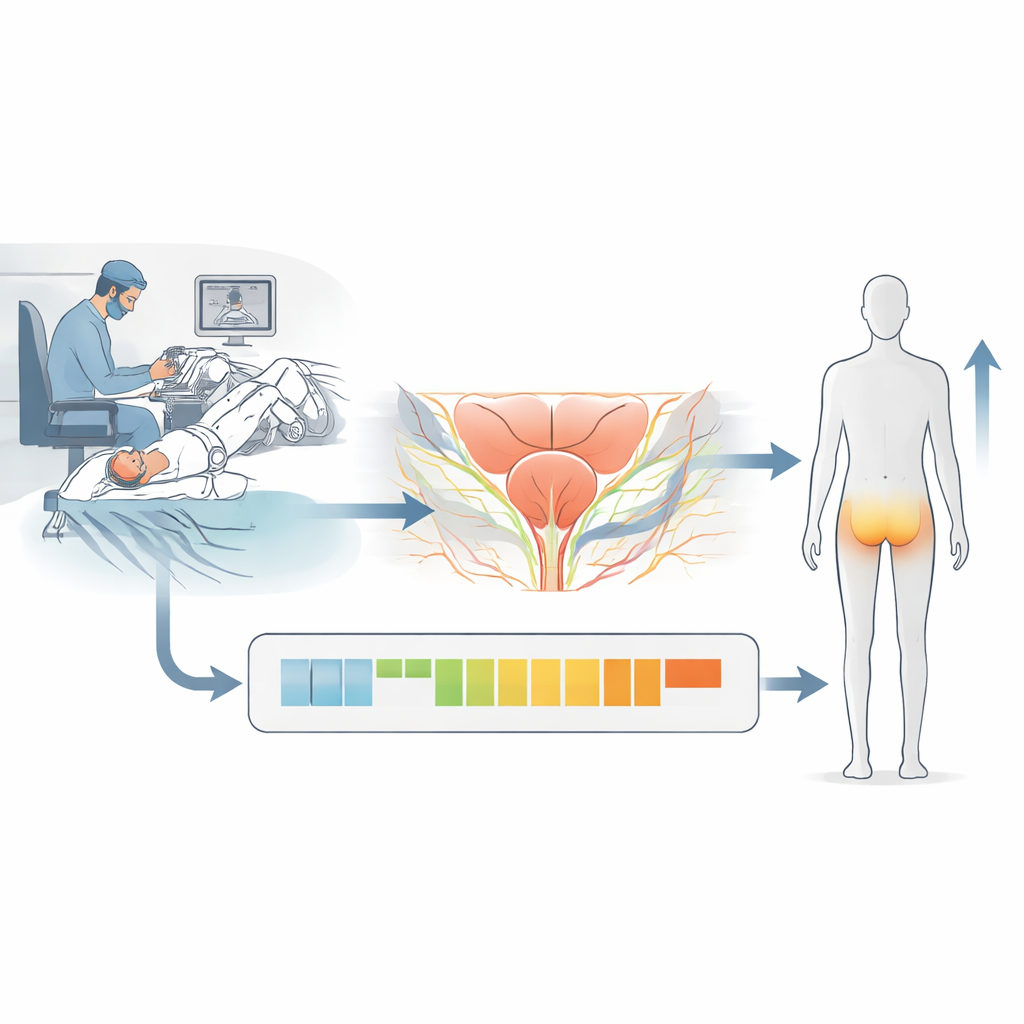

The team focused on robot-assisted radical prostatectomy, a common operation in which a surgeon uses robotic instruments to remove the prostate while trying to spare nearby nerves that are crucial for erections. They studied 147 men treated at five centers by 26 surgeons. Instead of judging performance by broad impressions, they broke the nerve-sparing step of each surgery into “gestures”—discrete actions such as pushing tissue aside, cutting, or retracting. Previous work showed that the sequence of these gestures could predict who would regain erectile function after a year, but it did not explain why certain patterns were better than others.

Adding the Where and Why to Each Move

To make the analysis more meaningful, the researchers added two extra layers of information for every gesture: where it happened in the body and what the surgeon was trying to achieve at that moment. For example, a gentle peeling motion might be used to free the nerve bundle along the side of the prostate, while a cut might extend a tissue plane behind the gland. By combining the action, the anatomical location, and the surgical purpose, they created “contextualized gestures” that more fully describe what is happening during the operation.
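
As a rough illustration of the idea, each gesture can be stored as a small record with three fields and then joined into one composite label. This is only a sketch: the class name `ContextualizedGesture`, the `token` method, and every label value are hypothetical, since the study's actual annotation vocabulary is not given here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ContextualizedGesture:
    """One annotated surgical gesture. All label values are illustrative."""
    action: str    # what was done, e.g. "peel" or "cut"
    location: str  # where in the anatomy it happened
    purpose: str   # what the surgeon was trying to achieve

    def token(self) -> str:
        # Join the three layers into a single composite label.
        return f"{self.action}|{self.location}|{self.purpose}"


# Hypothetical two-gesture excerpt, in time order.
sequence = [
    ContextualizedGesture("peel", "lateral_prostate", "free_nerve_bundle"),
    ContextualizedGesture("cut", "posterior_plane", "extend_tissue_plane"),
]

tokens = [g.token() for g in sequence]
print(tokens)
```

Keeping the three layers as separate fields means the same data can be analyzed with or without context, for example by comparing the bare `action` sequence against the full composite tokens.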

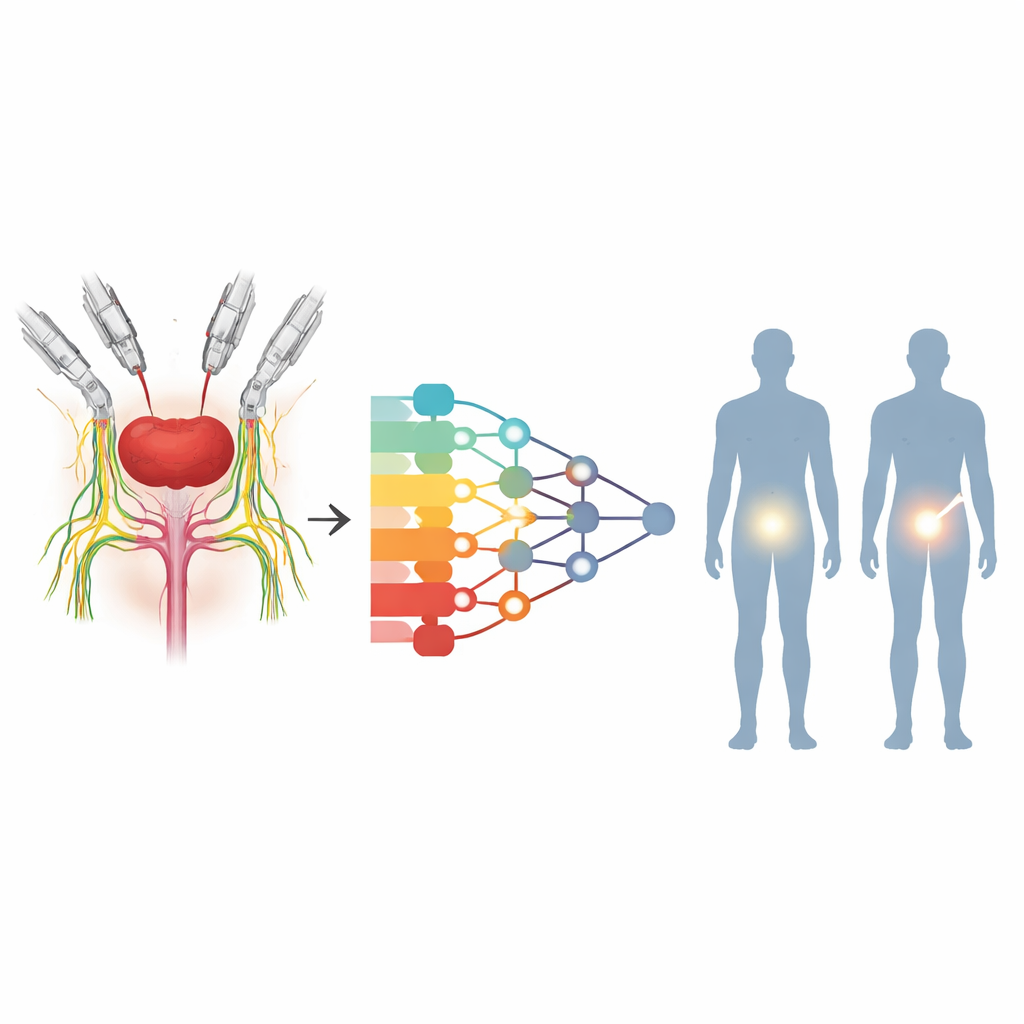

Teaching an AI to Read the Operation

These contextualized gestures, taken in time order, were fed into a type of AI model called a transformer, which is well suited to finding patterns in sequences. The model learned from tens of thousands of annotated gestures across all patients. When it looked only at the bare sequence of actions, it already did reasonably well at predicting who would have intact erectile function after one year. But when the same model was given the added context of where in the anatomy the gesture occurred and what function it served, its accuracy improved noticeably. Including standard patient characteristics, such as age or prostate size, did not further boost performance beyond what the rich description of the surgery itself already provided.
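
Sequence models such as transformers generally take integer ids rather than text labels, so a time-ordered list of gestures first has to be encoded. The following is a minimal sketch of that preprocessing step, not the study's code: the helper names `build_vocab` and `encode`, the padding scheme, and all gesture labels are assumptions made for illustration.

```python
def build_vocab(sequences):
    """Map each distinct token to an integer id; 0 is reserved for padding."""
    vocab = {"<pad>": 0}
    for seq in sequences:
        for tok in seq:
            if tok not in vocab:
                vocab[tok] = len(vocab)
    return vocab


def encode(seq, vocab, max_len):
    """Convert tokens to ids, truncating or right-padding to max_len."""
    ids = [vocab[tok] for tok in seq[:max_len]]
    return ids + [vocab["<pad>"]] * (max_len - len(ids))


# Hypothetical time-ordered sequences for two patients (labels are made up).
patients = [
    ["peel|lateral|free_nerve", "cut|posterior|extend_plane", "peel|lateral|free_nerve"],
    ["retract|lateral|expose", "cut|posterior|extend_plane"],
]

vocab = build_vocab(patients)
encoded = [encode(seq, vocab, max_len=4) for seq in patients]
print(encoded)
```

Padding every sequence to the same length lets operations of different durations be batched together; a real model would also need to be told to ignore the padded positions.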

What the AI Saw Inside the Operation

Because a transformer’s attention mechanism indicates which parts of a sequence weigh most in its decisions, the team could peer into the model and see which combinations of gestures, locations, and purposes were most tied to recovery or non-recovery. Helpful patterns included careful combinations of gentle spreading and precise cutting when freeing the nerve bundles, suggesting that balanced, delicate dissection protects these fragile structures. In contrast, patterns involving instrument retraction or movements by the bedside assistant, particularly when adjusting the camera or pulling on tissue near the nerves, were linked to poorer outcomes. This points to the importance not just of the lead surgeon’s technique, but also of how assistants handle tissue and maintain visibility during critical steps.
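
The general idea of reading off which sequence positions carry the most weight can be illustrated with scaled dot-product attention, the mechanism at the core of transformers. This is a toy sketch with made-up two-dimensional vectors and labels. It is not the study's model, and how the authors actually extracted their explanations is not detailed here.

```python
import math


def attention_weights(query, keys):
    """Scaled dot-product attention weights of one query over a sequence."""
    d = len(query)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


# Made-up vectors standing in for learned gesture representations.
labels = ["spread", "cut", "retract"]
keys = [[1.0, 0.0], [0.0, 1.0], [2.0, 2.0]]
query = [1.0, 1.0]

weights = attention_weights(query, keys)

# Rank sequence positions from most to least attended.
ranked = sorted(zip(labels, weights), key=lambda pair: pair[1], reverse=True)
print(ranked[0][0])
```

The weights form a probability distribution over the sequence, so positions with larger values contribute more to the model's internal summary. Such weights are a useful lens for inspection, though they do not by themselves establish that a gesture caused an outcome.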

What This Means for Future Patients

To a layperson, the message is that the fine details of how prostate surgery is performed, down to the order and style of each small movement, are closely linked to sexual function a year later, and that AI can help reveal these hidden links. While the study cannot yet prove cause and effect, it shows that encoding the “what,” “where,” and “why” of each surgical action allows computers to predict outcomes more accurately and highlight the moments that likely matter most. In the future, such systems could give surgeons near-real-time feedback on their technique, guide training based on data rather than intuition, and ultimately help more men emerge from prostate cancer surgery with their quality of life preserved.

Citation: Heard, J.R., Deo, A., Ghaffar, U. et al. AI model predicts patient outcomes from surgical gestures and provides insights into explainability. npj Digit. Surg. 1, 4 (2026). https://doi.org/10.1038/s44484-025-00006-y

Keywords: robotic prostate surgery, surgical gestures, artificial intelligence, erectile function recovery, surgical training