Clear Sky Science

EPEE: towards efficient and effective foundation models in biomedicine

Why faster-thinking AI matters in medicine

Modern artificial intelligence can read medical charts and scan images with impressive skill, but in real hospitals every second counts. Doctors in emergency rooms and intensive care units cannot wait while a huge model slowly “thinks” through dozens of steps, especially if those extra steps do not improve the answer. This study introduces a way to help large medical AI systems know when they have already seen enough to make a safe, confident decision, saving time and computer power without sacrificing accuracy.

The problem of slow and fussy AI

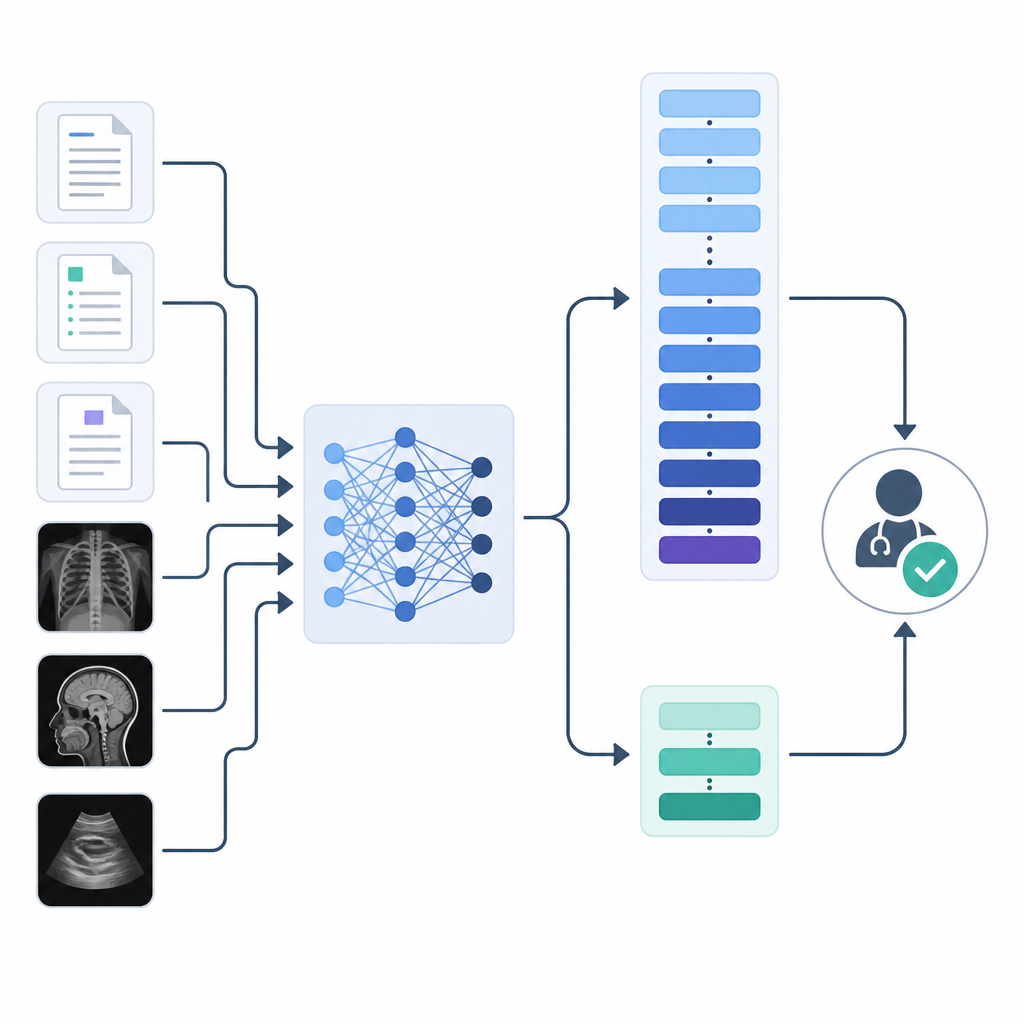

Large “foundation” models power many recent advances in health technology. Language models help sift through electronic health records and research papers, while vision models examine images such as X-rays and tissue slides. Yet these models are built with many stacked layers that process the same input again and again. In practice, the later layers often add little value and can even hurt accuracy, a problem the authors call overthinking. For a doctor waiting on a risk score or a flag for a dangerous drug interaction, this extra mental churn from the computer turns into real-world delays and higher computing costs.

Letting easy cases exit early

Previous research proposed “early exiting,” in which a model includes small checkpoints between layers. If a checkpoint is already very sure of its answer, the model can stop there rather than pushing the data through all remaining layers. One family of methods decides based on confidence: if the predicted probabilities are concentrated on one outcome (that is, the prediction has low entropy), the model exits. These approaches are simple and flexible but can lose accuracy when tuned for speed. Another family waits for several layers in a row to agree on the same answer, a “patience” rule that tends to protect accuracy but is sensitive to how many agreements are required, making it tricky to set for different clinical needs.
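To make the confidence rule concrete, here is a minimal sketch of how a checkpoint's prediction can be scored with Shannon entropy. The two probability lists and the threshold of 0.3 are illustrative assumptions, not values from the study.

```python
import math

def entropy(probs):
    """Shannon entropy (in nats) of a list of class probabilities."""
    return -sum(p * math.log(p) for p in probs if p > 0)

# Hypothetical outputs from an intermediate checkpoint (illustrative only).
focused = [0.97, 0.02, 0.01]  # probability mass concentrated on one class
spread = [0.40, 0.35, 0.25]   # checkpoint is still undecided

# Hypothetical cutoff; in practice this would be tuned per task.
threshold = 0.3

for name, probs in [("focused", focused), ("spread", spread)]:
    h = entropy(probs)
    decision = "exit early" if h < threshold else "keep going"
    print(f"{name}: entropy={h:.3f} -> {decision}")
```

A lower threshold makes the model more cautious (fewer early exits), while a higher one favors speed, which is the accuracy trade-off mentioned above.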

A hybrid early exit called EPEE

The authors present EPEE, short for Entropy- and Patience-based Early Exiting, which blends these two ideas. At every layer of a transformer model, EPEE attaches a lightweight classifier. The system checks two simple conditions: is the current prediction very confident, and have recent layers been consistently making the same call? If either condition is met, the model stops and returns the result. By adjusting how “confident” is defined and how many repeated agreements are required, users can tune both speed and caution. Importantly, the authors show that the old confidence-only and patience-only methods are just special cases of this more general strategy.
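The two-condition check can be sketched in a few lines of Python. This is an illustration of the idea as described here, not the authors' implementation: the function name `epee_exit`, the example probabilities, and the convention that `patience` counts consecutive layers predicting the same class are all assumptions.

```python
import math

def entropy(probs):
    """Shannon entropy (in nats) of a list of class probabilities."""
    return -sum(p * math.log(p) for p in probs if p > 0)

def epee_exit(layer_probs, entropy_threshold, patience):
    """Return (exit_layer, predicted_class) for one input.

    layer_probs holds the class probabilities from each layer's
    classifier, in order. The input exits at the first layer where the
    entropy falls below entropy_threshold OR the same class has been
    predicted at `patience` consecutive layers (assumed convention).
    """
    streak, previous = 0, None
    for layer, probs in enumerate(layer_probs, start=1):
        pred = max(range(len(probs)), key=probs.__getitem__)
        streak = streak + 1 if pred == previous else 1
        previous = pred
        if entropy(probs) < entropy_threshold or streak >= patience:
            return layer, pred
    # No condition fired: fall back to the final layer's prediction.
    return len(layer_probs), previous

# Hypothetical per-layer outputs for a four-layer model.
layers = [
    [0.50, 0.30, 0.20],
    [0.45, 0.40, 0.15],
    [0.55, 0.30, 0.15],
    [0.98, 0.01, 0.01],
]

print(epee_exit(layers, 0.3, 3))         # (3, 0): three layers agree
print(epee_exit(layers, 0.3, math.inf))  # (4, 0): confidence-only rule
print(epee_exit(layers, 0.0, 2))         # (2, 0): patience-only rule
```

The last two calls show how the older methods fall out as special cases in this sketch: an infinite patience disables the agreement check, and an entropy threshold of zero disables the confidence check.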

Tests on real medical text and images

To see whether EPEE works in practice, the team tested it on three kinds of biomedical tasks: classifying notes or reviews, finding relationships such as drug interactions, and extracting medical events from text. They used eight popular foundation models, including language models like BERT and GPT-2 and a vision transformer for medical images. Across twelve datasets drawn from intensive care records, patient reviews, medical literature, and image collections such as chest X-rays and blood cell slides, they compared EPEE with standard full-depth inference and with earlier early-exit methods. In many cases, the model reached its best or near-best accuracy at intermediate layers, meaning that forcing it to use all layers was unnecessary. EPEE took advantage of this by allowing simple cases to exit early while still letting harder ones pass through more layers.

Balancing speed and reliability in the clinic

When the researchers measured running time, EPEE consistently reduced inference latency compared with both ordinary full-depth models and prior early-exit techniques, while matching or slightly improving accuracy. The method required only a small extra cost during training and worked similarly well for both language and image models, including newer large biomedical models. Because its two thresholds (how confident a prediction must be and how many layers must agree) can be adjusted to target a chosen trade-off between speed and correctness, EPEE is well suited to environments like intensive care, where rapid answers are crucial but mistakes are costly.

What this means for future medical AI

In simple terms, this work teaches big medical AI systems to stop when they already know the answer, instead of endlessly rechecking their work. By combining two common exit rules into a flexible framework, EPEE shows that hospitals may not need even larger models to get better performance; they may just need models that use their existing brains more wisely. If adopted widely, this kind of early exit strategy could help bring powerful text and image models into real-time clinical workflows, supporting faster yet still dependable decisions at the bedside.

Citation: Zhan, Z., Zhou, S., Zhou, H. et al. EPEE: towards efficient and effective foundation models in biomedicine. npj Health Syst. 3, 30 (2026). https://doi.org/10.1038/s44401-026-00083-2

Keywords: early exiting, biomedical AI, foundation models, model efficiency, clinical decision support