Clear Sky Science

Symbiotic brain-machine drawing via visual brain-computer interfaces

Drawing With Your Mind

Imagine sketching a picture without moving a muscle—no mouse, no stylus, not even an eye movement—just by thinking about the shape you want to draw. This study shows an early but working version of exactly that: a simple, low-cost system that lets people “mind-draw” basic shapes and digits by partnering their brain activity with an adaptive computer program.

How Brain Signals Talk to a Screen

The researchers built a non-invasive brain–computer interface (BCI) using a basic headband with three electrodes, including one over the visual part of the brain. On a computer screen, ten white discs flicker at slightly different rates against a dark background. The person quietly imagines a simple shape—such as a letter, a geometric figure, or a handwritten digit—and is asked to look at the flickering disc that overlaps best with that imagined shape. Because each disc flickers at a unique rhythm, the brain’s electrical response to that rhythm can be picked up by the headband. By analysing these “steady-state visual evoked potentials,” the system can tell which disc the person is attending to and treat that disc as a small piece of the mental drawing.
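The frequency-tagging idea described above can be illustrated with a minimal sketch. It assumes a single EEG channel, a 250 Hz sampling rate, a 4-second window, and ten flicker frequencies spaced 0.5 Hz apart from 8 Hz; none of these values come from the study, and the EEG here is synthetic. The decoder simply compares spectral power at each candidate frequency, which is one common way to read out steady-state visual evoked potentials and not necessarily the method the authors used.

```python
import numpy as np

def detect_attended_disc(eeg, fs, flicker_hz):
    """Return (index, powers) for the candidate frequency with the most power."""
    eeg = np.asarray(eeg, dtype=float)
    eeg = eeg - eeg.mean()                       # remove the DC offset
    windowed = eeg * np.hanning(eeg.size)        # Hann window limits spectral leakage
    spectrum = np.abs(np.fft.rfft(windowed)) ** 2
    freqs = np.fft.rfftfreq(eeg.size, d=1.0 / fs)
    # Read the power at the FFT bin closest to each flicker frequency.
    bins = [int(np.argmin(np.abs(freqs - f))) for f in flicker_hz]
    powers = spectrum[bins]
    return int(np.argmax(powers)), powers

# Synthetic demonstration (all values below are illustrative assumptions).
fs = 250.0                                   # sampling rate in Hz
duration = 4.0                               # seconds of data per round
flicker_hz = 8.0 + 0.5 * np.arange(10)       # ten discs: 8.0, 8.5, ..., 12.5 Hz
t = np.arange(int(fs * duration)) / fs
rng = np.random.default_rng(0)
attended = 6                                 # the simulated user attends the 11.0 Hz disc
eeg = np.sin(2 * np.pi * flicker_hz[attended] * t) + rng.normal(0.0, 1.0, t.size)

best, powers = detect_attended_disc(eeg, fs, flicker_hz)
weight = powers[best] / powers.sum()         # hypothetical response-strength score in (0, 1]
print(best, round(float(flicker_hz[best]), 1))
```

Real recordings are far noisier than this toy signal, so practical decoders typically add filtering, harmonics, or multi-channel methods on top of the same principle.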

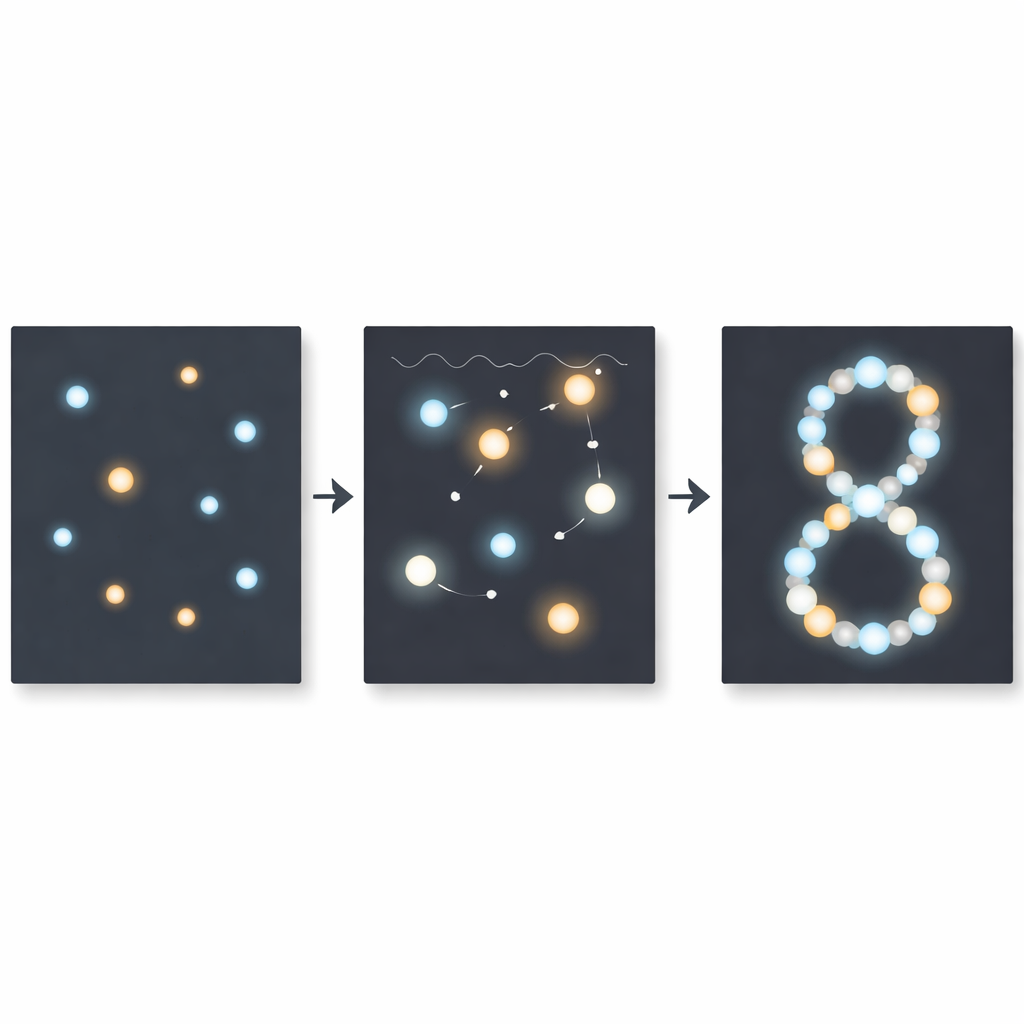

Building a Picture Step by Step

The drawing does not appear all at once. Instead, the process runs in short rounds lasting a few seconds each. In each round, the person selects the disc that best overlaps their imagined shape. The system records how strongly the brain responds and assigns a weight to that disc. Over 25 such rounds, these weighted disc locations are added together like dots on a canvas to form an image. After each round, a “policy” decides where to place the next set of discs, focusing sampling effort on the most promising parts of the screen. One version of this policy is inspired by how the early visual system detects edges and textures; another, faster one uses machine-learned building blocks derived from thousands of handwritten digits. In both cases, the computer adapts to the growing drawing, homing in on the user’s intent.
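The round-by-round loop can be sketched in a few lines. This is a toy model under loud assumptions: the canvas size, disc radius, and the `propose_centers` policy (sampling new disc centres partly at random and partly in proportion to what has already been drawn) are hypothetical stand-ins, not the edge-inspired or digit-trained policies from the study. The brain-signal step is replaced by a simulated user who picks the disc overlapping most with a known target shape.

```python
import numpy as np

def add_disc(canvas, center, radius, weight):
    """Add `weight` to every pixel within `radius` of `center` (in place)."""
    rows, cols = np.ogrid[:canvas.shape[0], :canvas.shape[1]]
    inside = (rows - center[0]) ** 2 + (cols - center[1]) ** 2 <= radius ** 2
    canvas[inside] += weight
    return canvas

def propose_centers(canvas, n_discs, rng, explore=0.5):
    """Hypothetical policy: mix uniform exploration with intensity-guided sampling."""
    flat = canvas.ravel()
    uniform = np.full(flat.size, 1.0 / flat.size)
    total = flat.sum()
    probs = uniform if total <= 0 else (1 - explore) * flat / total + explore * uniform
    picks = rng.choice(flat.size, size=n_discs, replace=False, p=probs)
    return [tuple(int(v) for v in np.unravel_index(i, canvas.shape)) for i in picks]

# Toy "imagined" shape: a vertical bar. A real user holds this in mind only.
target = np.zeros((64, 64), dtype=bool)
target[8:56, 30:34] = True
radius = 6                                   # assumed disc radius in pixels

def overlap(center):
    """Number of target pixels covered by a disc at `center`."""
    disc = add_disc(np.zeros(target.shape), center, radius, 1.0) > 0
    return int((disc & target).sum())

rng = np.random.default_rng(0)
canvas = np.zeros((64, 64))
for _ in range(25):                          # 25 rounds, as in the article
    centers = propose_centers(canvas, 10, rng)   # ten discs per round
    chosen = max(centers, key=overlap)       # stand-in for the BCI selection
    weight = 1.0                             # would come from the brain response strength
    add_disc(canvas, chosen, radius, weight)
```

The `explore` parameter keeps some discs landing in untouched regions; without it, a policy that only follows existing intensity would never leave the neighbourhood of the first selected disc.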

How Well Does Mind-Drawing Work?

Eight volunteers used the basic version of the system to draw three simple shapes each. The team compared the mind-drawn results to hand-drawn target images and found a good match on average: the reconstructed shapes captured the main structure of the intended letters and symbols, even if they were not pixel-perfect. Using information theory, the researchers then estimated how much usable information per second this process carries. The adaptive mind-drawing reached about 1.3 bits per second—already higher than what standard one-way BCIs are predicted to achieve with the same hardware. When they turned on the data-driven policy tailored to digits, the information rate jumped to over 4 bits per second, at the cost of being restricted to shapes similar to those in the training data.
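For a sense of scale, the information rate of a conventional selection-based BCI is often estimated with the Wolpaw formula, which gives bits per selection from the number of targets and the selection accuracy. The sketch below uses that formula as an assumed baseline; the article does not say which estimator the authors used, and the accuracy and timing values are illustrative rather than taken from the study.

```python
import math

def wolpaw_bits_per_selection(n_targets, accuracy):
    """Wolpaw information transfer per selection, in bits."""
    if n_targets < 2 or not 0.0 <= accuracy <= 1.0:
        raise ValueError("need n_targets >= 2 and accuracy in [0, 1]")
    p, n = accuracy, n_targets
    bits = math.log2(n)
    if p > 0.0:
        bits += p * math.log2(p)
    if p < 1.0:
        bits += (1.0 - p) * math.log2((1.0 - p) / (n - 1))
    return bits

def bits_per_second(n_targets, accuracy, seconds_per_selection):
    """Convert bits per selection into bits per second."""
    return wolpaw_bits_per_selection(n_targets, accuracy) / seconds_per_selection

# Illustrative only: ten targets, an assumed 4 seconds per selection.
perfect = bits_per_second(10, 1.0, 4.0)      # upper bound with flawless decoding
chance = bits_per_second(10, 0.1, 4.0)       # guessing at chance carries no information
print(round(perfect, 2), round(chance, 2))
```

Under these assumptions, even error-free selection among ten targets every 4 seconds tops out at about 0.83 bits per second, which helps put the reported 1.3 and 4 bits per second in context.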

From Rough Sketches to Rich Images

To explore what such rough brain-guided sketches could be used for, the team combined them with a modern image generator (Stable Diffusion). Here the system first produces the coarse mind-drawn shape, then feeds it—together with a text description—into the image generator, which fills in detail and style. For prompts like a robot, tree, lamp, or aircraft, two different mind-drawing sessions under the same prompt led to distinct but recognisably related final images. This shows how simple neural sketches might someday seed rich, personalised graphics for communication or creativity, while the heavy lifting of detail is done by artificial intelligence rather than by the brain interface alone.
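Before a coarse sketch can be handed to an image generator, it usually has to be turned into an ordinary image. The snippet below is one plausible preparation step, assuming the sketch is a small floating-point canvas and the generator expects a 512 by 512 RGB input; the function name, the sizes, and the choice of nearest-neighbour upscaling are assumptions, and the article does not describe how the authors conditioned the generator.

```python
import numpy as np

def canvas_to_init_image(canvas, size=512):
    """Scale a float canvas to an 8-bit RGB array of shape (size, size, 3)."""
    canvas = np.asarray(canvas, dtype=float)
    span = canvas.max() - canvas.min()
    # Normalise to [0, 1]; a flat canvas becomes all zeros.
    norm = (canvas - canvas.min()) / span if span > 0 else np.zeros_like(canvas)
    gray = np.round(norm * 255).astype(np.uint8)
    # Nearest-neighbour upscaling by looking up source rows and columns.
    rows = np.arange(size) * gray.shape[0] // size
    cols = np.arange(size) * gray.shape[1] // size
    big = gray[rows][:, cols]
    return np.stack([big, big, big], axis=-1)

# Toy canvas: a vertical bar with an arbitrary accumulated weight.
canvas = np.zeros((64, 64))
canvas[8:56, 30:34] = 2.5
init = canvas_to_init_image(canvas)
print(init.shape, init.dtype)
```

An array like `init` could then be passed, together with a text prompt, to an image-to-image generation pipeline, which would supply the detail and style.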

Why This Matters and What Comes Next

The work demonstrates that with only a single inexpensive brain sensor and a clever, feedback-driven design, people can guide a computer to reconstruct basic imagined shapes in about two minutes, and sometimes in under a minute for digits. The key advance is not just decoding brain signals, but creating a true partnership in which the computer repeatedly refines its guesses and the human simply chooses the best match. While still limited to simple shapes and relying on flickering probes, this approach hints at future tools for people who cannot speak or move easily, and for artists or designers who want to brainstorm visually at the speed of thought.

Citation: Wang, G., Huang, Y., Muckli, L. et al. Symbiotic brain-machine drawing via visual brain-computer interfaces. npj Biomed. Innov. 3, 31 (2026). https://doi.org/10.1038/s44385-026-00086-6

Keywords: brain-computer interface, mind drawing, EEG, visual imagination, assistive communication