Clear Sky Science

Sequential sensitivity analysis of multimodal large language models for rare orbital disease detection

Why faster answers for rare eye problems matter

Rare diseases that affect the eye socket—the bony cavity around the eye—can slowly steal sight and even threaten life, yet they are notoriously hard to diagnose. Many patients spend years going from doctor to doctor before receiving a clear answer. This study explores whether a new kind of artificial intelligence (AI), able to look at eye photographs and read basic clinical information, can help doctors spot these unusual orbital diseases earlier and more accurately.

Seeing rare disease in ordinary eye photos

The researchers focused on three important orbital problems: thyroid eye disease, orbital inflammation, and orbital tumors. All can change the way the eyes and surrounding tissues look from the outside. That makes simple external eye photographs a promising starting point for computer-based screening. The team assembled two large collections of such images from hospitals in China, Singapore, and Thailand, reflecting several racial groups. One dataset, with nearly seven thousand single-eye photos, mixed healthy eyes, orbital diseases, and other eye conditions. A second, smaller dataset contained only patients with confirmed orbital disease and included extra details such as age, sex, race, and symptoms.

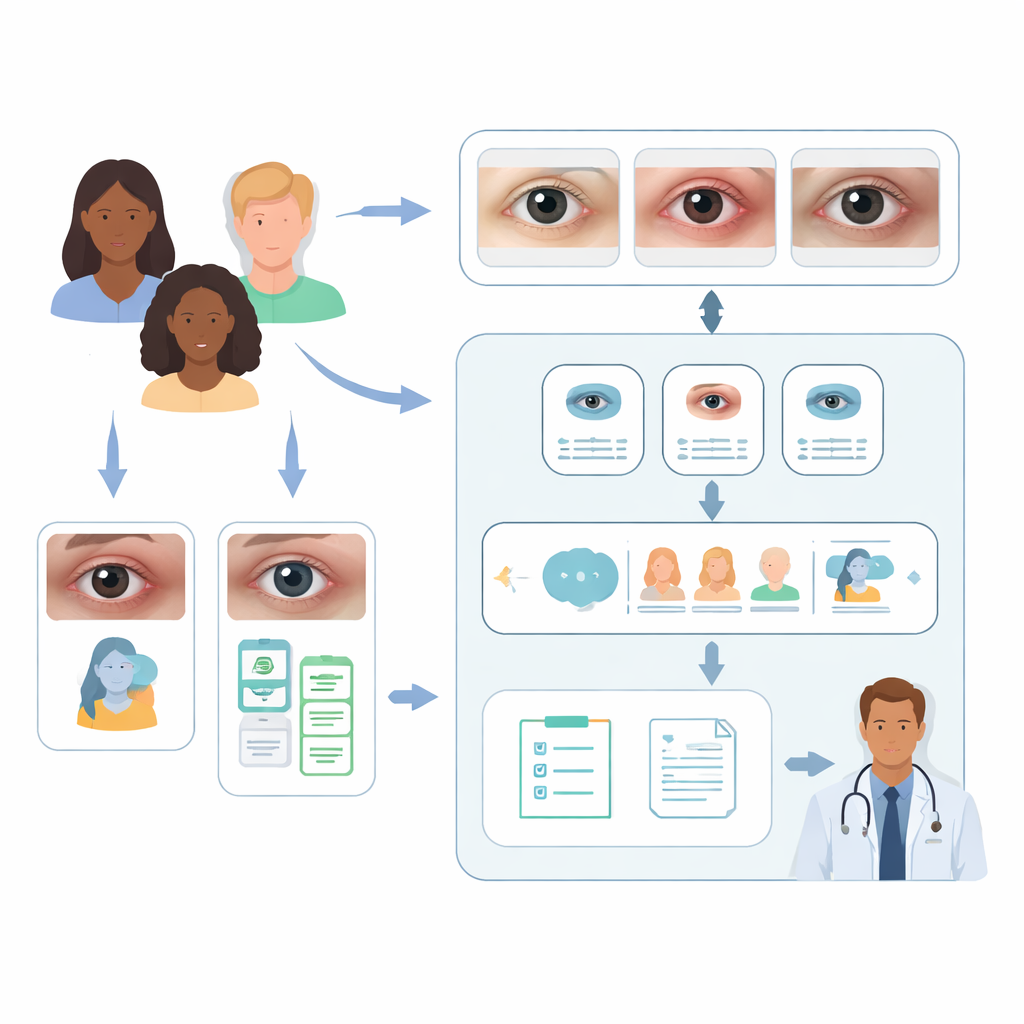

A two-step AI helper for doctors

In the first step, the team fine-tuned a vision-language model known as CLIP to act like a smart triage nurse. Given a single eye image, CLIP learned to sort it into three broad groups: healthy, orbital disease, or another eye problem. After training, this model correctly classified about nine out of ten images, clearly beating several widely used deep-learning image models and newer multimodal systems that had not been adapted to this task. This suggests that adapting a model specifically to orbital photographs improves performance substantially, and that even a lightweight model can work well when carefully tuned.
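As a rough illustration of how a CLIP-style model can sort an image into one of three groups, the sketch below compares an image embedding with one text embedding per label and picks the closest match. It is a minimal sketch under stated assumptions, not the study's implementation: the three-dimensional embeddings are toy values, the `triage` function and the `temperature` setting are hypothetical, and the fine-tuned model in the study may use a different classification head.

```python
import math

# The three triage groups described in the article.
LABELS = ["healthy", "orbital disease", "other eye condition"]


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def triage(image_embedding, label_embeddings, temperature=0.07):
    """Return the best-matching label and the per-label probabilities.

    `temperature` is an illustrative value, not one taken from the study.
    """
    sims = [cosine(image_embedding, label_embeddings[name]) for name in LABELS]
    probs = softmax([s / temperature for s in sims])
    best = max(range(len(LABELS)), key=lambda i: probs[i])
    return LABELS[best], dict(zip(LABELS, probs))


# Toy embeddings (invented for illustration; real CLIP vectors are much longer).
label_embeddings = {
    "healthy": [1.0, 0.0, 0.0],
    "orbital disease": [0.0, 1.0, 0.0],
    "other eye condition": [0.0, 0.0, 1.0],
}
image_embedding = [0.2, 0.9, 0.1]

label, probs = triage(image_embedding, label_embeddings)
print(label)
```

In a real system the embeddings would come from the model's image and text encoders; only the comparison step is shown here.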

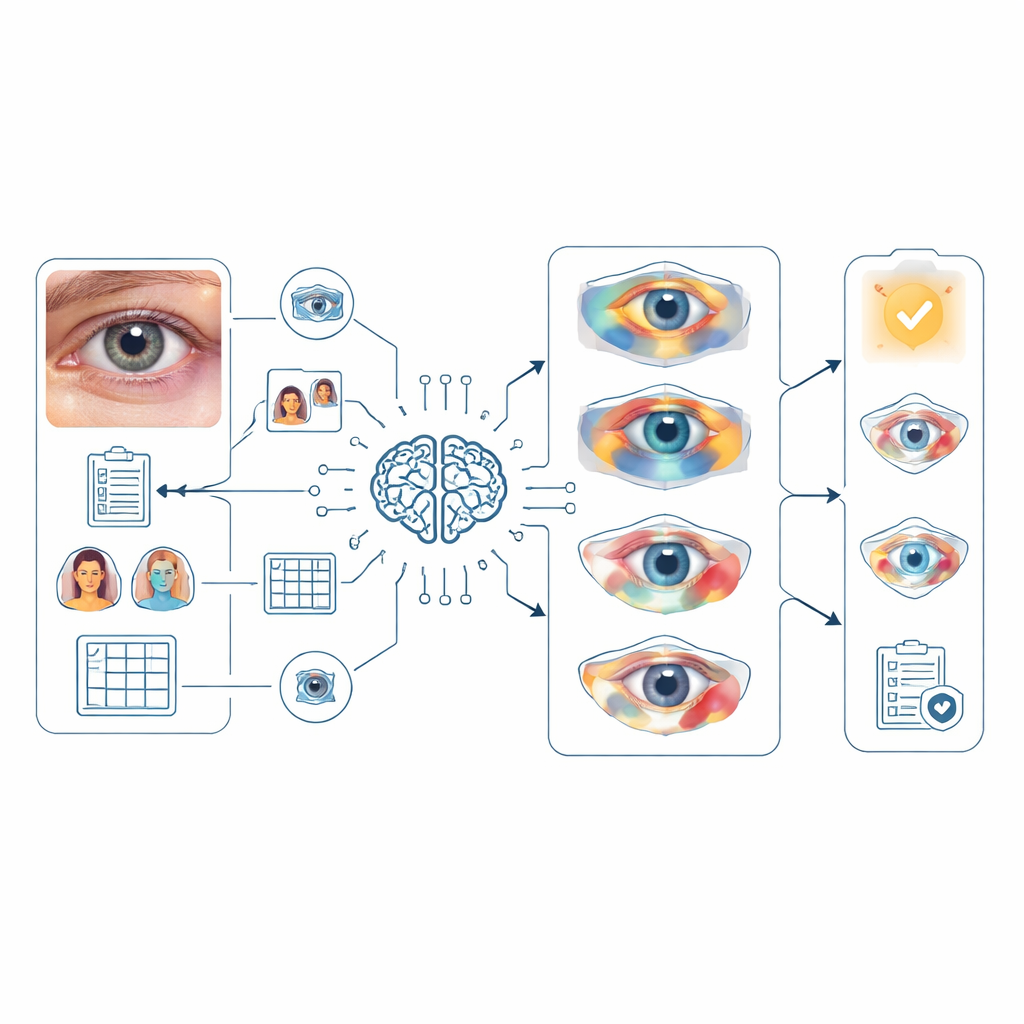

Layering information to sharpen diagnoses

The second step tested a multimodal large language model, GPT‑4o, as a virtual specialist trying to decide which of the three rare orbital diseases a patient has. Here, the researchers performed a “sequential sensitivity” experiment, gradually feeding the model more information to see how much each piece helped. When GPT‑4o saw only the eye photo, its top guess was correct for fewer than 14% of patients, and the right answer appeared among its top five candidate diagnoses only about a quarter of the time. Adding the patient’s main complaint (such as double vision, bulging eyes, or pain) caused a dramatic jump in accuracy, especially for thyroid eye disease and orbital tumors. Including racial background gave a smaller but meaningful boost for tumor cases, likely reflecting real-world differences in who tends to develop which conditions.
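The "top guess" and "top five" figures quoted above are top-1 and top-5 accuracy. The sketch below shows how such scores could be computed for each stage of a sequential experiment. It is illustrative only: the stage names, the `top_k_accuracy` helper, and every ranking in `toy_rankings` are invented for the example and are not results from the study.

```python
def top_k_accuracy(ranked_predictions, true_labels, k):
    """Fraction of cases whose true label appears in the first k ranked guesses."""
    hits = sum(
        1
        for ranked, truth in zip(ranked_predictions, true_labels)
        if truth in ranked[:k]
    )
    return hits / len(true_labels)


TED, TUMOR, INFLAM = "thyroid eye disease", "orbital tumor", "orbital inflammation"

# Confirmed diagnoses for four made-up patients.
truth = [TED, TUMOR, INFLAM, TED]

# Made-up ranked differential lists, one per patient, for two input stages.
toy_rankings = {
    "image only": [
        [TUMOR, TED, INFLAM],
        [INFLAM, TED],
        [TED, TUMOR],
        [TED, TUMOR, INFLAM],
    ],
    "image + chief complaint": [
        [TED, TUMOR],
        [TUMOR, INFLAM],
        [TED, INFLAM],
        [TED],
    ],
}

# Score each stage so the gain from each added piece of information is visible.
for stage, ranked in toy_rankings.items():
    top1 = top_k_accuracy(ranked, truth, k=1)
    top5 = top_k_accuracy(ranked, truth, k=5)
    print(f"{stage}: top-1 = {top1:.2f}, top-5 = {top5:.2f}")
```

Comparing the scores of consecutive stages is what shows how sensitive the model is to each added input.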

Teaching AI to think more like a clinician

The team then guided the model with a structured “reasoning prompt” that mimicked how an eye doctor examines a face: checking eye position, eyelids, the white of the eye, the cornea, the iris, the tear glands, the surrounding skin, and whether both sides match. For orbital inflammation in particular, this deliberate step-by-step description improved the model’s first-choice accuracy, suggesting that nudging AI to follow human-like examination routines can uncover subtle patterns. Finally, the researchers created an AI “agent” by feeding CLIP’s three-way triage result into GPT‑4o as an extra cue. This combination pushed the chance that the correct diagnosis appeared in the top five possibilities to about 85% overall and more than 97% for thyroid eye disease, though it offered less benefit and even some decline for orbital inflammation, where the data were more limited and varied.
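One way to picture the "agent" is as a prompt that combines the examination checklist, the clinical clues, and the first-stage triage result. The sketch below only assembles such a prompt as text. The wording, the `build_agent_prompt` function, and the field names are hypothetical; the study's actual prompts are not reproduced in this summary, and no model is called here.

```python
# Examination steps listed in the article, in the order given there.
EXAM_CHECKLIST = [
    "eye position",
    "eyelids",
    "white of the eye",
    "cornea",
    "iris",
    "tear glands",
    "surrounding skin",
    "symmetry between the two sides",
]


def build_agent_prompt(chief_complaint, race, triage_label):
    """Assemble a text prompt; all wording here is an illustrative assumption."""
    lines = [
        "You are assisting an ophthalmologist with a suspected orbital disease.",
        "Describe the attached external eye photograph step by step:",
    ]
    # Structured reasoning prompt: one numbered item per examination step.
    lines += [f"{i}. {item}" for i, item in enumerate(EXAM_CHECKLIST, start=1)]
    # Clinical clues layered on top of the image.
    lines.append(f"Chief complaint: {chief_complaint}")
    lines.append(f"Race: {race}")
    # Extra cue taken from the first-stage triage model.
    lines.append(f"Triage model output (first stage): {triage_label}")
    lines.append("List your five most likely diagnoses, most likely first.")
    return "\n".join(lines)


prompt = build_agent_prompt(
    chief_complaint="bulging eyes and double vision",
    race="(as recorded in the clinical notes)",
    triage_label="orbital disease",
)
print(prompt)
```

The resulting text would be sent to the language model together with the photograph; keeping the triage result as a separate, labeled line makes it easy to switch that cue on or off when measuring its effect.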

Helping doctors communicate and plan care

Beyond naming diseases, the researchers asked ophthalmologists to judge AI-generated medical reports and examination recommendations based on readability, completeness, accuracy, and safety. On average, the experts found the reports easy to understand, mostly complete, and largely correct, with only minor gaps in detail and few suggestions that might pose any risk. The recommended follow-up tests were clear and generally appropriate, although still not at the level where they could be used without human oversight. Together, these findings show that such models can already assist clinicians in explaining findings and outlining reasonable next steps.
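Averaging expert judgments across the four criteria could look like the sketch below. The 1-to-5 scale, the `summarize` helper, and all scores are assumptions made up for illustration; the summary does not state the rating scale or the individual scores used in the study.

```python
from statistics import mean

# The four criteria named in the article.
CRITERIA = ["readability", "completeness", "accuracy", "safety"]

# Invented scores from three hypothetical reviewers on an assumed 1-to-5 scale.
toy_ratings = {
    "readability": [5, 4, 5],
    "completeness": [4, 4, 3],
    "accuracy": [4, 5, 4],
    "safety": [5, 5, 4],
}


def summarize(ratings):
    """Mean score per criterion, rounded to two decimals."""
    return {name: round(mean(ratings[name]), 2) for name in CRITERIA}


summary = summarize(toy_ratings)
for name, score in summary.items():
    print(f"{name}: {score}")
```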

What this means for patients with rare eye conditions

This work suggests that when AI is given both pictures and key clinical clues—symptoms, background, and a guided way of examining the eye—it can become a powerful helper for spotting rare orbital diseases. While it does not replace trained specialists and still needs prospective testing in larger, more diverse groups, a two-stage system like this could one day run on ordinary cameras or mobile devices. It could flag people who need urgent expert care, shorten the long diagnostic journeys many patients face, and support doctors with clear, readable reports, ultimately improving the chances of preserving sight and health.

Citation: Lei, C., Ji, K., Zhao, C. et al. Sequential sensitivity analysis of multimodal large language models for rare orbital disease detection. Commun Med 6, 175 (2026). https://doi.org/10.1038/s43856-026-01447-3

Keywords: orbital disease, artificial intelligence, eye imaging, multimodal models, rare diseases