Clear Sky Science

Leveraging machine learning for digital gait analysis in ataxia using sensor-free motion capture

Why walking videos matter for brain health

Loss of balance and coordination is a defining feature of ataxia, a group of neurodegenerative disorders that make walking increasingly difficult. Today, doctors usually grade a person’s walking with a simple clinical scale that can miss early, subtle changes. This study asks a timely question: can ordinary video recordings of people walking, analyzed by machine learning, pick up gait problems earlier and track them more precisely—without any wearable sensors or specialized lab equipment?

Seeing movement through a digital lens

The researchers worked with 91 adults with various forms of ataxia and 28 healthy volunteers who were already being assessed at a German neurodegeneration center. During routine visits, each person performed a normal walking task while being filmed with a single tablet camera placed in front of them. Neurologists rated walking ability using the first item of a standard scale called SARA, which scores gait from normal walking to being unable to walk. These on-site scores served as the “ground truth” to which the digital system would be compared.

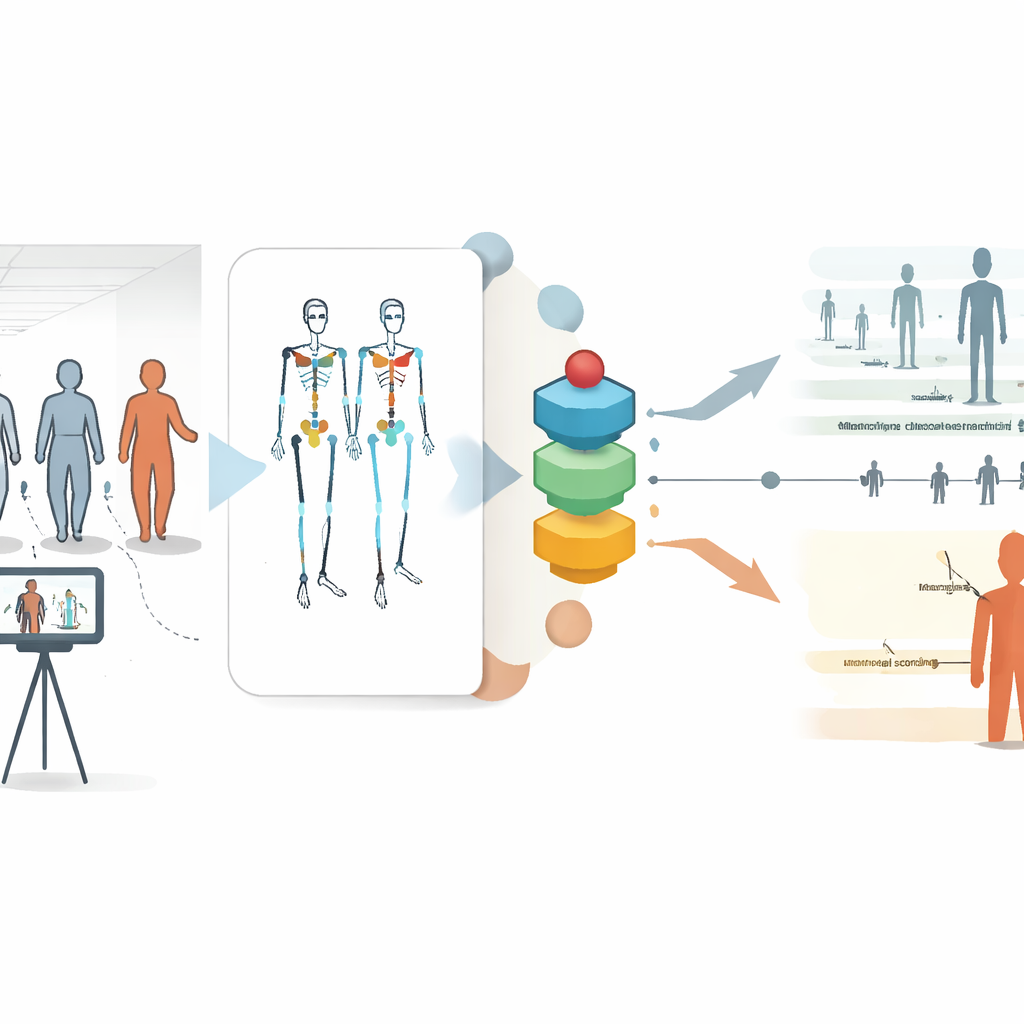

From pixels to body motion

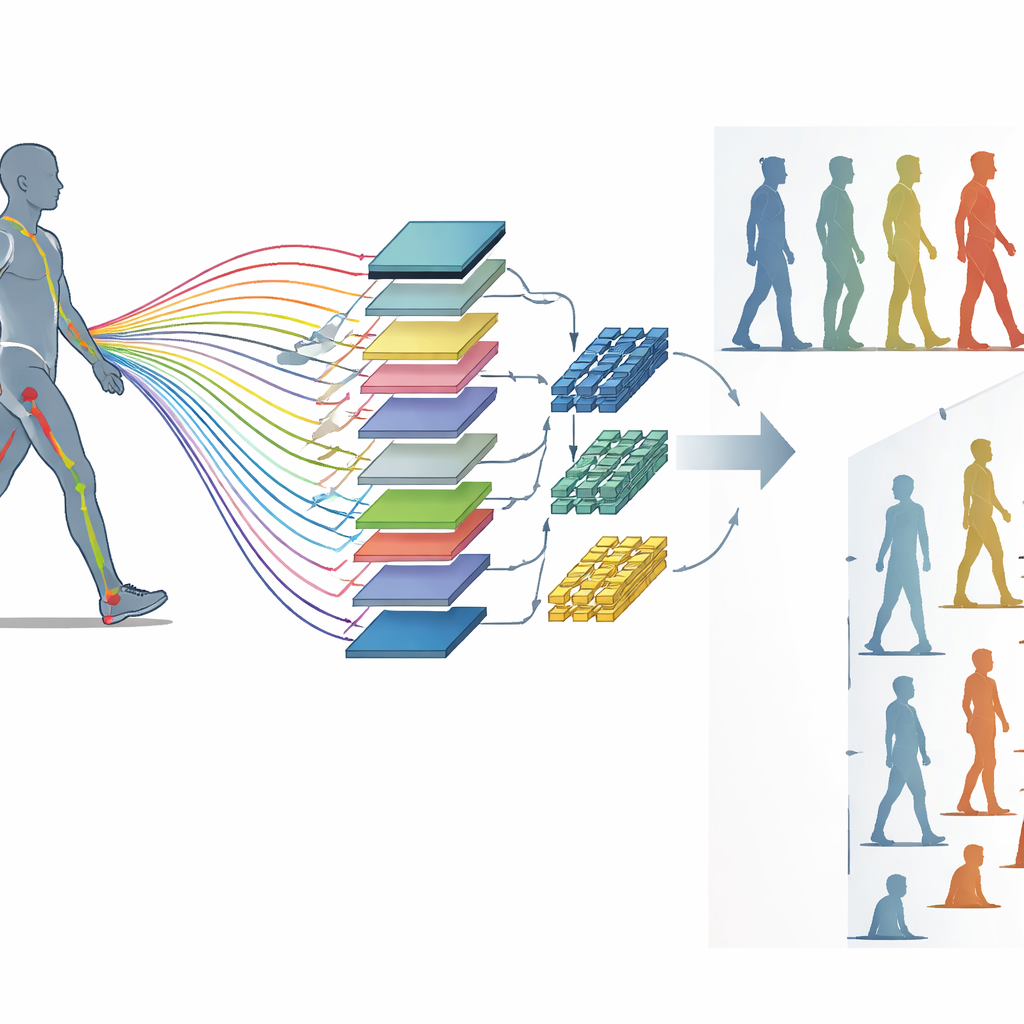

To turn raw video into measurable movement, the team used a pose estimation algorithm called AlphaPose. This program tracks key body points (such as hips, shoulders, wrists, and ankles) in every video frame, creating time series that describe how positions, distances, and joint angles change during each step. From these signals, the researchers built several machine learning models. Some extracted hundreds of mathematical features that describe patterns in the motion, such as how variable a joint angle is, while others used fast convolution-based methods designed for time series data. The models were trained either to reproduce the clinician’s numeric gait score or to decide which of two groups (for example, healthy vs. mildly affected) a person belonged to.
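To make the step from tracked body points to a motion signal concrete, here is a minimal sketch in plain Python. It assumes each video frame has already been reduced to named 2D keypoints in pixel coordinates. The keypoint names, the coordinates, and the helper functions `joint_angle`, `angle_series`, and `summarize` are hypothetical illustrations, not AlphaPose's actual output format or the study's code.

```python
import math
from statistics import mean, pstdev

def joint_angle(a, b, c):
    """Angle in degrees at point b, between the segments b->a and b->c."""
    v1 = (a[0] - b[0], a[1] - b[1])
    v2 = (c[0] - b[0], c[1] - b[1])
    dot = v1[0] * v2[0] + v1[1] * v2[1]
    norm = math.hypot(*v1) * math.hypot(*v2)
    if norm == 0:
        raise ValueError("degenerate joint: two keypoints coincide")
    # Clamp to [-1, 1] to guard against floating-point rounding.
    cos_angle = max(-1.0, min(1.0, dot / norm))
    return math.degrees(math.acos(cos_angle))

def angle_series(frames, a_name, b_name, c_name):
    """One joint angle per frame, i.e. a time series."""
    return [joint_angle(f[a_name], f[b_name], f[c_name]) for f in frames]

def summarize(series):
    """A few simple summary features of a time series."""
    return {
        "mean": mean(series),
        "std": pstdev(series),
        "range": max(series) - min(series),
    }

# Hypothetical keypoints (x, y) in pixels for three consecutive frames.
frames = [
    {"shoulder": (100, 50), "hip": (100, 150), "knee": (100, 250)},
    {"shoulder": (100, 50), "hip": (100, 150), "knee": (200, 150)},
    {"shoulder": (100, 50), "hip": (100, 150), "knee": (130, 245)},
]

hip_angles = angle_series(frames, "shoulder", "hip", "knee")
hip_features = summarize(hip_angles)
print(hip_angles, hip_features)
```

The three summary statistics stand in for the hundreds of features mentioned above. A feature such as the standard deviation of a joint angle captures exactly the kind of variability the text describes.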

Matching and surpassing human judgment

The best-performing combination used feature extraction with the tsfresh toolkit together with a gradient-boosted decision tree method (XGBoost). This model reproduced clinicians’ gait scores with good accuracy and slightly outperformed a separate “human baseline”: consensus ratings from three trained neurologists who scored the videos after the fact. The digital system was especially strong at distinguishing people with clearly different levels of gait problems, but it also handled very subtle distinctions. Remarkably, it could tell healthy volunteers apart from gene carriers who still appeared to walk normally to human examiners, classifying them substantially better than chance. In other words, the algorithm detected hidden gait abnormalities before they were visible in routine clinical exams.
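The idea behind gradient boosting can be shown with a small pure-Python sketch: start from the average score, then repeatedly fit a very simple tree (a one-split "stump") to the remaining errors and add a damped version of it to the prediction. This is a simplified stand-in for illustration only. It is not XGBoost, which adds regularization and many other refinements, and the feature values, scores, and function names below are hypothetical rather than taken from the study.

```python
from statistics import mean

def fit_stump(X, residuals):
    """Find the one-feature threshold split that minimizes squared error."""
    best = None
    for j in range(len(X[0])):
        values = sorted({row[j] for row in X})
        for lo, hi in zip(values, values[1:]):
            thr = (lo + hi) / 2
            left = [r for row, r in zip(X, residuals) if row[j] <= thr]
            right = [r for row, r in zip(X, residuals) if row[j] > thr]
            lv, rv = mean(left), mean(right)
            err = (sum((r - lv) ** 2 for r in left)
                   + sum((r - rv) ** 2 for r in right))
            if best is None or err < best[0]:
                best = (err, j, thr, lv, rv)
    if best is None:
        # Every feature is constant: predict the mean residual everywhere.
        m = mean(residuals)
        return (0, float("inf"), m, m)
    return best[1:]

def stump_predict(stump, row):
    j, thr, left_value, right_value = stump
    return left_value if row[j] <= thr else right_value

def fit_boosted(X, y, n_rounds=50, learning_rate=0.3):
    """Each round fits a stump to the current residuals (errors)."""
    base = mean(y)
    preds = [base] * len(y)
    stumps = []
    for _ in range(n_rounds):
        residuals = [t - p for t, p in zip(y, preds)]
        stump = fit_stump(X, residuals)
        stumps.append(stump)
        preds = [p + learning_rate * stump_predict(stump, row)
                 for p, row in zip(preds, X)]
    return base, learning_rate, stumps

def predict(model, row):
    base, learning_rate, stumps = model
    return base + learning_rate * sum(stump_predict(s, row) for s in stumps)

# Hypothetical data: [hip-angle variability, shoulder-angle variability]
# per person, paired with a made-up clinician gait score.
X = [[2.0, 1.0], [2.5, 1.2], [4.0, 2.0], [4.5, 2.4],
     [6.0, 3.5], [6.5, 3.8], [8.0, 5.0], [8.5, 5.5]]
y = [0, 0, 1, 1, 2, 2, 3, 3]

model = fit_boosted(X, y)
train_mse = mean((predict(model, row) - t) ** 2 for row, t in zip(X, y))
print(round(train_mse, 4))
```

This sketch only measures error on the data it was trained on. Any real evaluation, including a comparison with a human baseline, would need to score people the model has not seen.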

Looking inside the black box

To understand what the model was “looking at,” the team used an explainable AI method called SHAP to rank which movement signals mattered most for its decisions. The angles at the shoulders and hips emerged as key contributors, aligning with clinical experience that people with ataxia often widen their stance and use their arms to compensate for poor balance. The researchers also tracked how selected digital features changed over time in 30 patients who had repeated visits. While the standard clinical gait score barely changed on average, several of these motion-derived markers showed consistent trends that were tightly correlated with time and tailored to the person’s baseline level of impairment. This suggests the digital measures can pick up gradual progression that the coarse clinical scale often misses.
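SHAP builds on Shapley values, which split the difference between a model's prediction and a reference prediction fairly among the input features. The sketch below computes them exactly by brute force for a toy model, which is only feasible with a handful of features. The SHAP library relies on much faster algorithms for real models. The model `toy_model`, the feature names, and all numbers are hypothetical.

```python
from itertools import combinations
from math import factorial

def shapley_values(model, x, baseline):
    """Exact Shapley values; features outside a coalition take baseline values."""
    n = len(x)

    def value(coalition):
        mixed = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return model(mixed)

    phis = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        phi = 0.0
        for size in range(n):
            # Weight of coalitions of this size in the Shapley formula.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi += weight * (value(s | {i}) - value(s))
        phis.append(phi)
    return phis

def toy_model(features):
    """Hypothetical gait-score model; it ignores the wrist feature."""
    hip, shoulder, wrist = features
    return 0.4 * hip + 0.3 * shoulder + 0.05 * hip * shoulder

names = ["hip_angle_std", "shoulder_angle_std", "wrist_distance_std"]
x = [8.0, 6.0, 3.0]          # one hypothetical patient
baseline = [2.0, 1.5, 3.5]   # hypothetical reference values

phis = shapley_values(toy_model, x, baseline)
ranked = sorted(zip(names, phis), key=lambda pair: abs(pair[1]), reverse=True)
for name, phi in ranked:
    print(f"{name}: {phi:+.3f}")
```

Two properties make the ranking interpretable. The contributions sum to the gap between the patient's prediction and the reference prediction, and a feature the model never uses receives a contribution of zero.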

Fairness, practicality, and home use

The best model performed similarly across different age groups and between men and women, hinting that it can be used broadly without strong demographic bias. Because the system works from a single front-facing video captured on a consumer tablet, it can be integrated into regular clinic visits and, with basic guidance on camera placement and background, could be extended to home recordings. This contrasts with traditional gait analysis systems that require multiple cameras, markers glued to the skin, or wearable sensors that some patients find uncomfortable or impractical for frequent monitoring.

What this means for patients and care

The study shows that simple walking videos, analyzed with modern machine learning, can not only replicate but slightly improve on expert clinical ratings of gait in ataxia. More importantly, the digital approach uncovers early and slow changes that humans tend to overlook. For patients and clinicians, this opens the door to earlier detection of disease onset, more sensitive tracking of whether treatments are working, and convenient monitoring at home. As more data are collected and methods refined, such video-based digital biomarkers could become a routine part of managing ataxia and evaluating new therapies.

Citation: Wegner, P., Grobe-Einsler, M., Reimer, L. et al. Leveraging machine learning for digital gait analysis in ataxia using sensor-free motion capture. Commun Med 6, 167 (2026). https://doi.org/10.1038/s43856-025-01258-y

Keywords: ataxia gait, video motion capture, machine learning, digital biomarkers, neurological movement disorders