Clear Sky Science

Cardiac health assessment across scenarios and devices using a multimodal foundation model pretrained on data from 1.7 million individuals

Why your heartbeat data matters

From hospital heart monitors to smartwatches, more of our lives are being tracked through tiny electrical and optical signals from the heart. These recordings can spot dangerous rhythm problems, estimate blood pressure without a cuff and even hint at future heart risk. But because devices and settings are so different, today’s algorithms often work well only in the narrow situations they were built for. This study introduces a new kind of “foundation” model for heart signals that aims to understand cardiac health across many devices, countries and use cases at once.

Many ways to listen to the heart

Doctors and devices can listen to the heart in several ways. The classic hospital test is the 12-lead electrocardiogram (ECG), with sticky electrodes placed across the chest and limbs to record the heart’s electrical activity from different angles. Intensive care units often use fewer leads plus an optical sensor that records a photoplethysmogram (PPG) by shining light into the skin to track blood pulsing through vessels. At home, smartwatches and patches may record just a single ECG channel or only PPG. Each of these setups produces signals with different shapes, lengths and numbers of channels, which has made it hard to build one model that works everywhere. Traditional approaches usually train separate, tailor‑made algorithms for each device and task, and they struggle when moved to new environments or populations.

A single brain for many heart signals

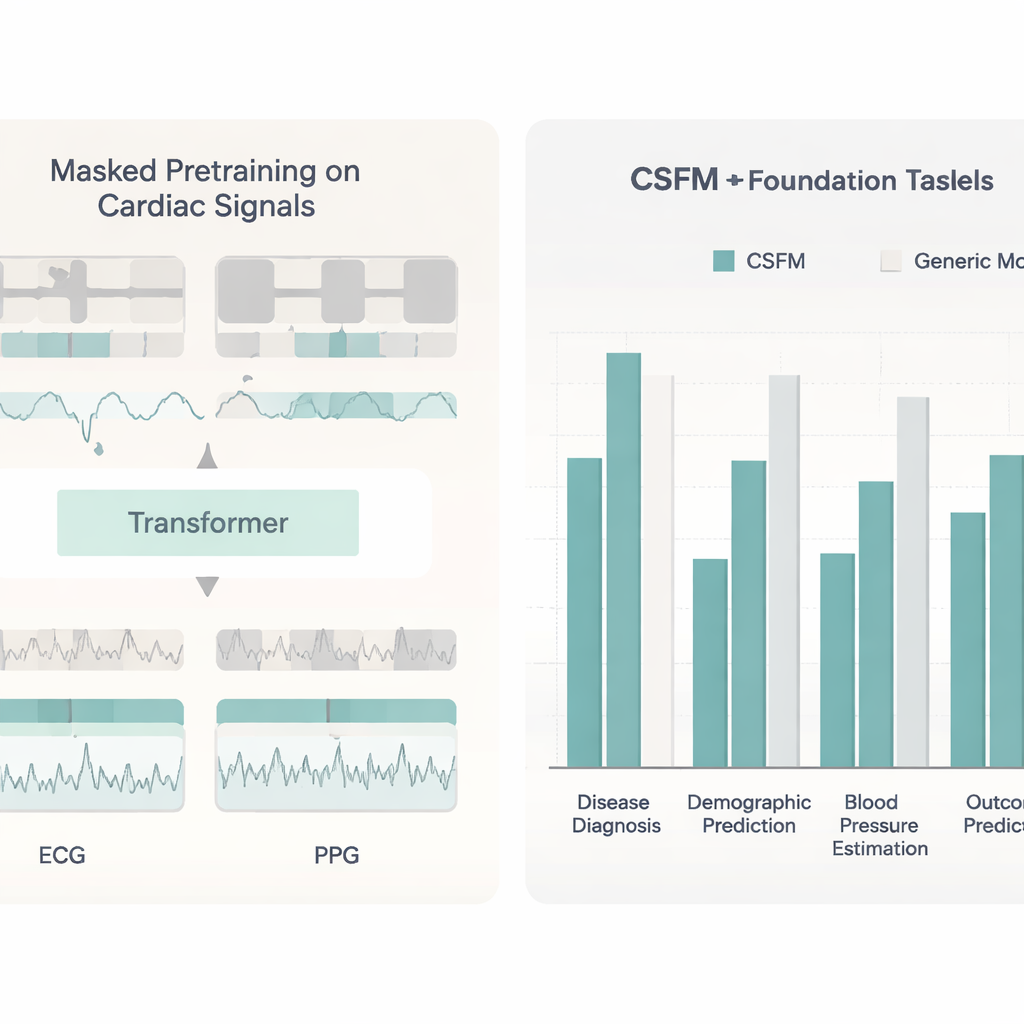

The researchers designed a cardiac sensing foundation model, or CSFM, to act as a common brain for all these signals. Instead of learning from one tidy dataset, CSFM was trained on a huge and messy collection: heart recordings from about 1.7 million individuals across multiple hospitals and countries, including both ECG and PPG waveforms plus the text reports that doctors or machines wrote about them. The model chops signals into short segments, turns both signals and words into tokens and feeds them into a transformer, a type of deep learning architecture that has powered recent advances in language and image understanding. During training, large portions of the tokens are deliberately hidden, and the model learns to reconstruct the missing pieces. This “masked” training pushes CSFM to capture the essential patterns that are shared across different devices, leads and languages of description.
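To make the idea of “masked” training concrete, here is a minimal sketch of its two basic steps on a single signal: cutting it into fixed-length segments (tokens) and hiding a random fraction of them, then scoring a reconstruction only on the hidden pieces. It is not the authors’ implementation. The function names, the patch length and the 60% mask ratio are hypothetical choices for illustration, the text tokens are left out, and a real transformer would replace hidden tokens with a learned placeholder rather than `None`.

```python
import random

def patchify(signal, patch_len):
    """Split a 1-D signal into consecutive, non-overlapping patches ("tokens").

    Trailing samples that do not fill a whole patch are dropped.
    """
    n = len(signal) // patch_len
    return [signal[i * patch_len:(i + 1) * patch_len] for i in range(n)]

def random_mask(tokens, mask_ratio, seed=None):
    """Hide a random fraction of tokens.

    Returns the token list with hidden positions set to None,
    plus the sorted list of hidden positions.
    """
    rng = random.Random(seed)
    n_mask = int(round(len(tokens) * mask_ratio))
    masked = set(rng.sample(range(len(tokens)), n_mask))
    visible = [None if i in masked else tok for i, tok in enumerate(tokens)]
    return visible, sorted(masked)

def reconstruction_loss(predicted, original, masked_positions):
    """Mean squared error computed only over the hidden tokens."""
    errs = []
    for i in masked_positions:
        errs.extend((p - o) ** 2 for p, o in zip(predicted[i], original[i]))
    return sum(errs) / len(errs)

# Toy usage: a 20-sample "signal" cut into 5 tokens of 4 samples each.
signal = [float(x) for x in range(20)]
tokens = patchify(signal, patch_len=4)
visible, masked = random_mask(tokens, mask_ratio=0.6, seed=0)
zeros = [[0.0] * 4 for _ in tokens]  # a deliberately poor "model" that predicts all zeros
loss = reconstruction_loss(zeros, tokens, masked)
```

Scoring only the hidden tokens is what forces a model to infer missing content from the visible context instead of simply copying its input.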

From diagnosis to blood pressure and beyond

Once trained, CSFM can be adapted to many concrete jobs using relatively small labeled datasets. The team tested it on heart rhythm and disease classification using standard 12‑lead ECGs, wearable single‑lead ECGs and PPG from smartwatches. It not only matched but often beat strong, task‑specific deep networks. CSFM also helped estimate age, sex and body mass index directly from brief ECG and PPG segments, showing that it had absorbed subtle clues about the person, not just the heartbeat. In another set of experiments, the model turned ECG and PPG into continuous blood pressure waveforms and then into systolic and diastolic values, producing more accurate cuff‑less blood pressure estimates than competing methods.
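The last step of the blood pressure pipeline, turning a continuous pressure waveform into two numbers, can be illustrated with a simple sketch: systolic pressure is the peak of each beat and diastolic pressure is the trough. This is not the method reported in the study. It assumes a roughly steady, known heart rate, so that every window one beat long contains exactly one peak and one trough, and the function name `sbp_dbp_from_waveform` is hypothetical.

```python
from statistics import mean

def sbp_dbp_from_waveform(bp_wave, fs_hz, heart_rate_bpm):
    """Estimate systolic/diastolic pressure (mmHg) from a continuous BP waveform.

    Assumes a roughly steady heart rate, so each window of one beat period
    contains one systolic peak and one diastolic trough.
    """
    beat_len = round(fs_hz * 60.0 / heart_rate_bpm)  # samples per beat
    if beat_len < 2 or len(bp_wave) < beat_len:
        raise ValueError("waveform shorter than one beat")
    n_beats = len(bp_wave) // beat_len
    beats = [bp_wave[k * beat_len:(k + 1) * beat_len] for k in range(n_beats)]
    sbp = mean(max(b) for b in beats)  # average of per-beat peaks
    dbp = mean(min(b) for b in beats)  # average of per-beat troughs
    return sbp, dbp

# Toy usage: three identical beats sampled at an unrealistically low 6 Hz, heart rate 60 bpm.
one_beat = [80, 100, 120, 110, 95, 85]
sbp, dbp = sbp_dbp_from_waveform(one_beat * 3, fs_hz=6, heart_rate_bpm=60)
```

Real waveforms vary from beat to beat, so practical pipelines usually detect individual beats rather than relying on a fixed window length.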

Working across devices and filling in gaps

A particularly important test was whether CSFM could handle situations where only a subset of the usual information is available. The researchers showed that models fine‑tuned from CSFM worked well whether they saw all 12 ECG leads, six leads, two common leads or even a single lead. They also tested combinations of ECG‑only, PPG‑only and ECG‑plus‑PPG inputs. Across these setups, CSFM‑based systems stayed strong while conventional models degraded more sharply. The model’s internal representations could even be used as ready‑made features for simple tools like gradient‑boosted trees, often reaching performance similar to that of fully fine‑tuned deep networks. Finally, by adding a regression head, CSFM could generate one type of signal from another, for example producing a realistic ECG from a PPG trace or reconstructing a full 12‑lead ECG from a single lead. This opens the door to data augmentation and improved analysis when ideal recordings are unavailable.
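Part of why fewer leads can still carry a lot of information is standard ECG physiology: the six limb leads (I, II, III, aVR, aVL, aVF) are linear combinations of any two of them, via Einthoven’s law (III = II − I) and Goldberger’s augmented-lead formulas. The sketch below derives all six from leads I and II. It is textbook arithmetic, not CSFM’s method, and whether the study’s “two common leads” are I and II is an assumption here. The chest leads V1 to V6 cannot be computed this way, which is one reason that reconstructing a full 12-lead ECG from a single lead calls for a learned model.

```python
def limb_leads_from_i_ii(lead_i, lead_ii):
    """Derive the six standard limb leads from leads I and II, sample by sample."""
    if len(lead_i) != len(lead_ii):
        raise ValueError("leads must have the same length")
    out = {"I": list(lead_i), "II": list(lead_ii)}
    out["III"] = [b - a for a, b in zip(lead_i, lead_ii)]         # Einthoven: III = II - I
    out["aVR"] = [-(a + b) / 2 for a, b in zip(lead_i, lead_ii)]  # Goldberger: aVR = -(I + II) / 2
    out["aVL"] = [a - b / 2 for a, b in zip(lead_i, lead_ii)]     # aVL = I - II / 2
    out["aVF"] = [b - a / 2 for a, b in zip(lead_i, lead_ii)]     # aVF = II - I / 2
    return out

# Toy usage with two samples per lead (millivolts).
leads = limb_leads_from_i_ii([1.0, 0.5], [2.0, 1.0])
```

A handy sanity check is that the three augmented leads always sum to zero at every sample.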

What this could mean for patients

For non‑experts, the core message is that a single, general‑purpose model can now make sense of very different heart recordings and still provide accurate, clinically useful answers. Rather than building one fragile algorithm per device and hospital, CSFM offers a shared foundation that can be lightly adapted to local needs, from spotting dangerous rhythms on a smartwatch to predicting which patients are at higher risk of death within a year. The authors acknowledge open issues, such as making the model’s decisions easier for clinicians to interpret and reducing its computing demands. Even so, their results suggest that foundation models for heart signals could help bring advanced cardiac monitoring and risk prediction to more people, in more places, using the devices they already have.

Citation: Gu, X., Tang, W., Han, J. et al. Cardiac health assessment across scenarios and devices using a multimodal foundation model pretrained on data from 1.7 million individuals. Nat Mach Intell 8, 220–233 (2026). https://doi.org/10.1038/s42256-026-01180-5

Keywords: cardiac foundation model, electrocardiogram, photoplethysmography, digital cardiology, wearable heart monitoring