Clear Sky Science

Configuring oscillator Ising machines as P-bit engines

A New Way to Harness Noise for Computing

Even powerful computers struggle with tasks such as scheduling airline routes or training certain neural networks, because the number of possible solutions grows explosively as these problems get larger. This paper explores a fresh approach that turns tiny electronic oscillators, circuits that naturally produce rhythmic signals, into machines that can both search for good solutions and deliberately explore many possibilities at random. By treating noise, or randomness, as a resource rather than a nuisance, the authors show how one kind of special-purpose hardware, called an oscillator Ising machine, can be reconfigured to behave like another, known as a probabilistic bit engine.

Two Unusual Computers Meet

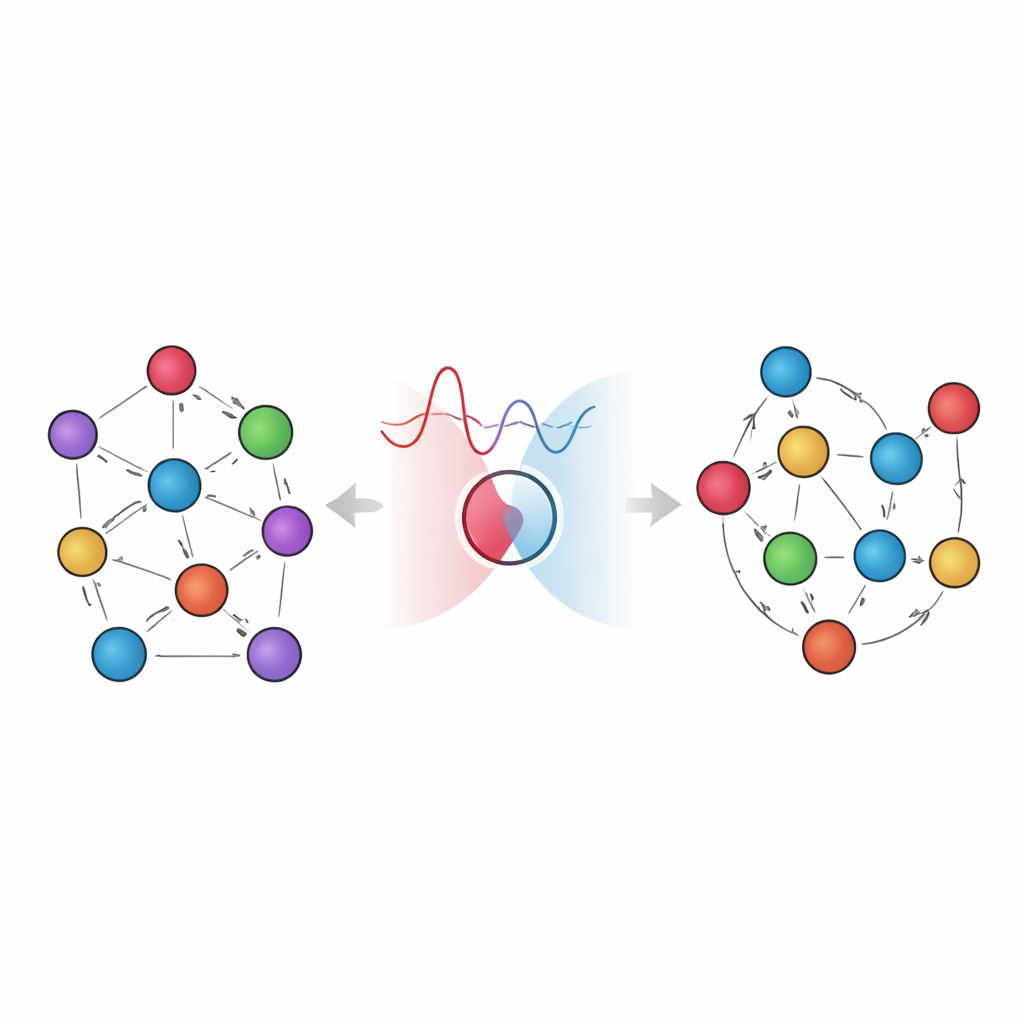

The work brings together two emerging ideas in unconventional computing. Oscillator Ising machines use networks of synchronized oscillators to solve tough optimization problems by mimicking how interacting spins in a magnetic material settle into low-energy patterns. Probabilistic-bit, or p-bit, engines take a different path: they rely on deliberately noisy binary elements whose outputs randomly flip between 0 and 1, but with probabilities that depend on their inputs. These p-bit networks excel at sampling many likely configurations from a problem’s "energy landscape," a key ingredient for probabilistic inference and machine learning. Until now, these two hardware concepts have largely evolved in parallel, with little understanding of how one might emulate the other.
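In the p-bit literature, this behavior is commonly written as a one-line update rule: m = sgn(tanh(βI) − r), where r is drawn uniformly from (−1, 1). This gives P(m = +1) = (1 + tanh(βI))/2. The Python sketch below follows that widely used bipolar (±1) convention rather than the 0/1 convention in the text. The function name `pbit` and the inverse-temperature parameter `beta` are illustrative choices, not taken from the paper.

```python
import numpy as np

def pbit(I, beta, rng):
    """Vectorized p-bit update: each output is +1 with probability (1 + tanh(beta*I)) / 2, else -1."""
    r = rng.uniform(-1.0, 1.0, size=np.shape(I))   # fresh uniform noise for every p-bit
    return np.where(np.tanh(beta * I) > r, 1, -1)

rng = np.random.default_rng(0)
for I in (-1.0, 0.0, 0.5, 2.0):
    out = pbit(np.full(100_000, I), beta=1.0, rng=rng)
    print(f"I = {I:+.1f}: sampled mean = {out.mean():+.3f}, tanh(I) = {np.tanh(I):+.3f}")
```

A strongly positive input pins the output near +1, and a strongly negative one pins it near −1. Zero input gives a fair coin. Mapping −1 to 0 recovers the 0/1 description used above.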

Turning an Oscillator into a Noisy Decision Maker

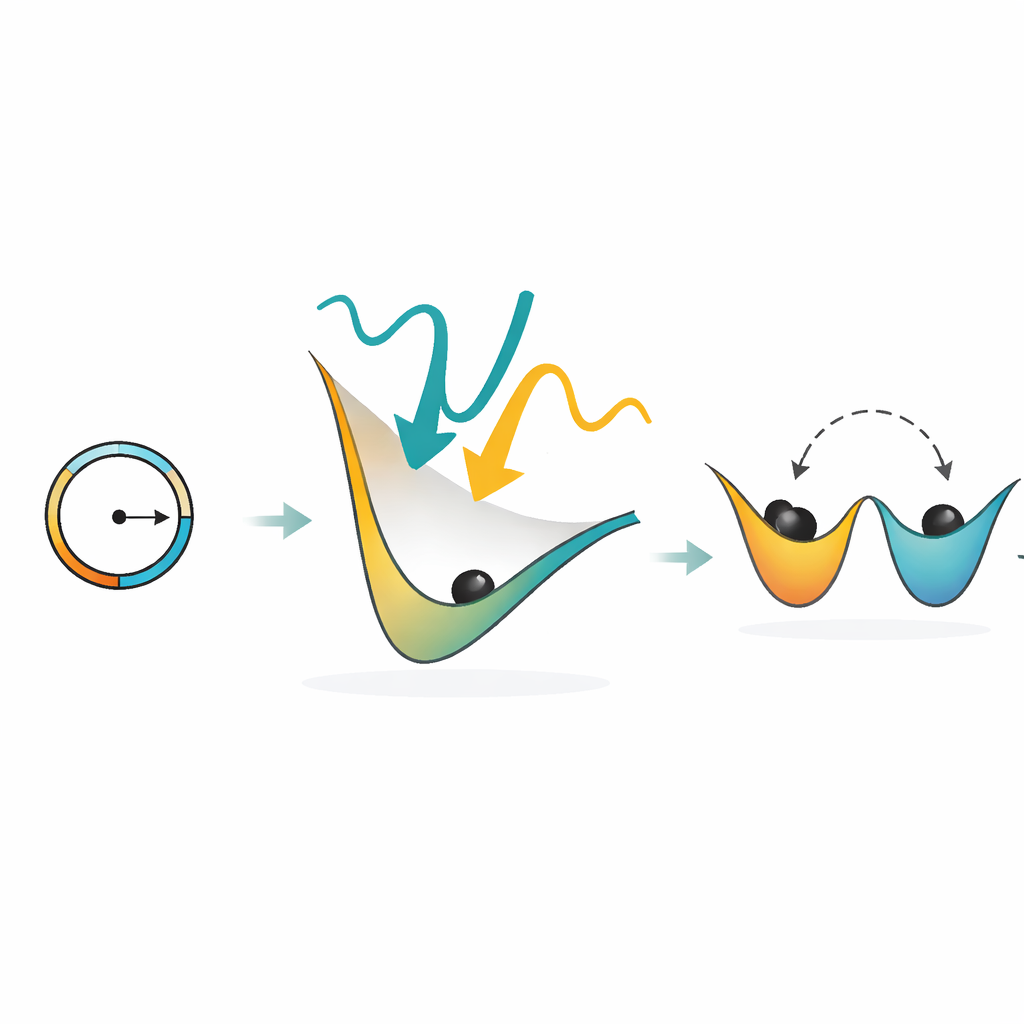

The authors first zoom in on a single oscillator and show how it can behave like a binary stochastic neuron, the hardware equivalent of a coin whose bias can be tuned. They apply two rhythmic driving signals. The first runs at the oscillator's natural frequency (the fundamental harmonic) and sets its phase. The second runs at twice that frequency (the second harmonic) and pushes the phase toward one of two stable positions. When the second signal is weak, the energy barrier between these two positions is low, so thermal noise can easily push the oscillator to either side. The authors briefly prepare the oscillator at a neutral phase and then let these forces compete. This turns its final state into a random but controllable choice between two outcomes, with the odds governed by the strength of its input.
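The sketch below makes the single-oscillator picture concrete. It uses a deliberately simplified, hypothetical phase model, not necessarily the authors' equations. The phase φ moves in a potential E(φ) = −h·cos φ − (k_s/2)·cos 2φ. Here `h` stands for the input acting at the fundamental harmonic, `ks` is the second-harmonic strength, and thermal noise at temperature `temp` is added using Euler–Maruyama integration. Each trial starts at the neutral phase φ = π/2. It is read out as +1 if it settles near phase 0 and as −1 if it settles near π. All names and parameter values are illustrative assumptions.

```python
import numpy as np

def oscillator_pbit_trials(h, ks=0.5, temp=0.05, dt=0.01, t_settle=20.0,
                           n_trials=4000, seed=0):
    """Fraction of noisy trials that settle at phase 0 (read out as +1).

    Hypothetical model: E(phi) = -h*cos(phi) - (ks/2)*cos(2*phi), with overdamped
    Langevin dynamics dphi = -dE/dphi dt + sqrt(2*temp) dW.
    """
    rng = np.random.default_rng(seed)
    phi = np.full(n_trials, np.pi / 2)            # neutral phase: top of the barrier
    noise_amp = np.sqrt(2.0 * temp * dt)
    for _ in range(int(t_settle / dt)):
        drift = -h * np.sin(phi) - ks * np.sin(2.0 * phi)   # -dE/dphi
        phi = phi + drift * dt + noise_amp * rng.standard_normal(n_trials)
    spins = np.sign(np.cos(phi))                  # near 0 -> +1, near pi -> -1
    return np.mean(spins > 0)

for h in (-0.3, -0.1, 0.0, 0.1, 0.3):
    print(f"h = {h:+.1f}: P(+1) = {oscillator_pbit_trials(h):.3f}")
```

The sketch keeps |h| below 2·k_s so that both phases remain stable minima. Under these assumed parameters, P(+1) should rise smoothly from near 0 to near 1 as h goes from negative to positive, passing through 0.5 at h = 0. Linearizing the dynamics around φ = π/2 suggests roughly P(+1) ≈ Φ(h/√(2·k_s·temp)), where Φ is the standard normal CDF. This is close to the sigmoid-shaped response of a p-bit.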

Building a Probabilistic Network from Many Oscillators

Next, the paper shows how a whole network of such oscillators can act like a probabilistic neural network, that is, a hardware realization of a p-bit engine. In a conventional oscillator Ising machine, coupling between oscillators encourages them to settle into phase patterns that correspond to good solutions of an optimization problem. Here, the authors introduce a sampling routine. Most oscillators are held firmly in their current states, while one is temporarily released, reset to a neutral phase, and allowed to fall randomly into one of its two stable phases under the influence of its neighbors and noise. Repeating this process oscillator by oscillator reproduces the update rule used in established p-bit models. The hardware therefore effectively performs Gibbs sampling, drawing configurations with probabilities set by the problem's energy landscape.
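In software, the one-at-a-time routine corresponds to ordinary sequential Gibbs sampling. The sketch below assumes an Ising energy E(m) = −½ mᵀJm − hᵀm over spins m_i ∈ {−1, +1}, with symmetric couplings `J` and a zero diagonal. Each released node is redrawn with the p-bit rule, using the input I_i = Σ_j J_ij m_j + h_i from its clamped neighbors. The code then compares sampled state frequencies with the exact Boltzmann distribution, found by enumerating all 2^n states. The four-node random network and all parameter values are hypothetical.

```python
import itertools
import numpy as np

def gibbs_pbit_network(J, h, beta, n_sweeps, rng):
    """Sequential p-bit updates for E(m) = -1/2 m^T J m - h^T m, with m_i in {-1, +1}.

    J must be symmetric with a zero diagonal. Returns the network state after each sweep.
    """
    n = len(h)
    m = rng.choice([-1, 1], size=n)
    samples = np.empty((n_sweeps, n), dtype=int)
    for sweep in range(n_sweeps):
        for i in range(n):                       # release one node, others stay clamped
            I_i = J[i] @ m + h[i]                # input from the clamped neighbors
            m[i] = 1 if np.tanh(beta * I_i) > rng.uniform(-1.0, 1.0) else -1
        samples[sweep] = m
    return samples

def ising_energy(m, J, h):
    return -0.5 * m @ J @ m - h @ m

# Hypothetical 4-node network with random couplings and biases.
rng = np.random.default_rng(42)
n, beta = 4, 0.8
J = 0.5 * np.triu(rng.normal(size=(n, n)), 1)
J = J + J.T
h = 0.5 * rng.normal(size=n)

# Exact Boltzmann distribution by enumerating all 2**n states.
states = [tuple(s) for s in itertools.product([-1, 1], repeat=n)]
weights = np.array([np.exp(-beta * ising_energy(np.array(s), J, h)) for s in states])
exact = weights / weights.sum()

samples = gibbs_pbit_network(J, h, beta, 30_000, rng)
index = {s: k for k, s in enumerate(states)}
counts = np.zeros(len(states))
for s in samples:
    counts[index[tuple(int(x) for x in s)]] += 1
empirical = counts / counts.sum()
print("max |empirical - exact| =", np.abs(empirical - exact).max())
```

The p-bit probability (1 + tanh(βI_i))/2 equals the Boltzmann conditional probability of spin i given its neighbors. As a result, the maximum deviation should shrink toward zero as the number of sweeps grows.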

Putting the Idea to the Test

To test their approach, the researchers simulate two kinds of tasks. First, they use their oscillator-based stochastic neurons to implement a small logic circuit called a full adder, which combines three input bits into a sum bit and a carry bit. When the circuit runs with all terminals left free, the system visits the different input–output combinations with frequencies that closely match the expected Boltzmann distribution, confirming correct probabilistic behavior. Second, they tackle the MaxCut problem on random graphs, where the goal is to divide the nodes into two groups so that as many connections as possible cross between them. Using the sampling routine, the oscillator network finds optimal cuts. The distribution of visited states also follows the Boltzmann relation expected from statistical physics: a configuration's probability falls off exponentially with its energy.
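Both benchmarks can be sketched with a generic Gibbs sampler over an arbitrary energy function. The energy functions here are illustrative assumptions, not the paper's networks.

- **Full adder.** The energy is the penalty E = (a + b + c_in − sum − 2·c_out)², which is zero exactly on the eight valid truth-table rows. Because x² = x for bits, it expands into pairwise terms and is therefore an Ising-type energy.
- **MaxCut.** The energy is E(s) = Σ_(i,j) s_i s_j over the edges of a hypothetical six-node example graph, so that cut = (m − E)/2 for m edges.

With all terminals free, the adder's sampled probability of landing on a valid row should match the exact Boltzmann value. For MaxCut, the per-state probability at each energy level should fall off as exp(−βE), giving a log-probability slope near −β.

```python
import itertools
import numpy as np

def gibbs_sample(energy, n, beta, n_sweeps, rng):
    """Sequential Gibbs sampling of spins s in {-1, +1}^n under an arbitrary energy function.

    Each update releases one spin and resets it to +1 with probability
    1 / (1 + exp(beta * (E_up - E_down))), the conditional a p-bit samples from.
    """
    s = rng.choice([-1, 1], size=n)
    out = np.empty((n_sweeps, n), dtype=int)
    for t in range(n_sweeps):
        for i in range(n):
            s[i] = 1
            e_up = energy(s)
            s[i] = -1
            e_down = energy(s)
            # 0.5 * (1 - tanh(x / 2)) equals 1 / (1 + exp(x)) but cannot overflow
            p_up = 0.5 * (1.0 - np.tanh(0.5 * beta * (e_up - e_down)))
            s[i] = 1 if rng.random() < p_up else -1
        out[t] = s
    return out

def boltzmann(energies, beta):
    w = np.exp(-beta * (energies - energies.min()))
    return w / w.sum()

rng = np.random.default_rng(7)

# Full adder as a penalty: E = (a + b + cin - sum - 2*cout)^2, zero only on valid rows.
def adder_energy(s):
    a, b, cin, sm, cout = (s + 1) // 2             # spins {-1, +1} -> bits {0, 1}
    return float((a + b + cin - sm - 2 * cout) ** 2)

beta_fa = 2.0
fa_states = np.array(list(itertools.product([-1, 1], repeat=5)))
fa_energies = np.array([adder_energy(s) for s in fa_states])
fa_valid_exact = boltzmann(fa_energies, beta_fa)[fa_energies == 0].sum()
fa_samples = gibbs_sample(adder_energy, 5, beta_fa, 20_000, rng)
fa_valid_frac = np.mean([adder_energy(s) == 0 for s in fa_samples])
print(f"full adder: P(valid row) sampled = {fa_valid_frac:.3f}, exact = {fa_valid_exact:.3f}")

# MaxCut on a hypothetical 6-node graph: E(s) = sum over edges of s_i * s_j, cut = (m - E) / 2.
edges = [(0, 1), (0, 2), (1, 2), (1, 3), (2, 4), (3, 4), (3, 5), (4, 5)]
n_nodes, m = 6, len(edges)

def cut_energy(s):
    return float(sum(s[i] * s[j] for i, j in edges))

beta_mc = 0.5
mc_states = np.array(list(itertools.product([-1, 1], repeat=n_nodes)))
mc_all_energies = np.array([cut_energy(s) for s in mc_states])
best_cut = int(m - mc_all_energies.min()) // 2          # brute-force optimum
mc_samples = gibbs_sample(cut_energy, n_nodes, beta_mc, 20_000, rng)
mc_energies = np.array([cut_energy(s) for s in mc_samples])
found_cut = int(m - mc_energies.min()) // 2

# Probability per state at each energy level should scale as exp(-beta * E).
levels, counts = np.unique(mc_energies, return_counts=True)
degeneracy = np.array([np.sum(mc_all_energies == e) for e in levels])
keep = counts >= 100
slope = np.polyfit(levels[keep], np.log(counts[keep] / degeneracy[keep]), 1)[0]
print(f"MaxCut: best sampled cut = {found_cut}, brute force = {best_cut}, "
      f"log-probability slope = {slope:.2f} (expected {-beta_mc})")
```

For this example graph (two triangles joined by two edges), the best cut is 6: every edge except one per triangle crosses the partition. Dividing each level's count by its degeneracy matters here, because many different states share the same energy.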

Beyond One Type of Machine

The authors further demonstrate that the same sampling recipe can be applied to other analog Ising-like systems, such as a dynamical Ising machine that uses a slightly different interaction rule between phases. Because the core mathematics governing the stochastic updates is the same, these different physical platforms can all be tuned to behave as p-bit engines. This generality hints that many analog dynamical systems, not just the specific oscillator design studied here, could be repurposed for probabilistic computing by exploiting their natural bifurcations and noise-driven switching.

Why This Matters for Future Computing

For a non-specialist, the key takeaway is that the authors show how to make a deterministic, energy-minimizing machine double as a controlled random explorer. By carefully shaping how oscillators are nudged and how noise is allowed to act, the same hardware that once only "fell downhill" to a nearby solution can now wander the landscape in a statistically meaningful way. This dual capability could be valuable for both solving hard optimization problems and powering new hardware-friendly forms of probabilistic reasoning and learning, potentially offering energy-efficient alternatives to today’s digital processors for specialized tasks.

Citation: Ekanayake, E.M.H., Khan, N. & Shukla, N. Configuring oscillator Ising machines as P-bit engines. Commun Phys 9, 128 (2026). https://doi.org/10.1038/s42005-026-02492-z

Keywords: oscillator Ising machines, p-bit computing, probabilistic hardware, Boltzmann sampling, combinatorial optimization