Clear Sky Science

Gastrointestinal endoscopic image style transfer using EndoStyle to improve artificial intelligence prediction models

Sharper Computer Help for Colon Cancer Checks

Colonoscopies save lives by finding and removing small growths, called polyps, before they turn into cancer. Many hospitals now use artificial intelligence (AI) to help doctors spot these tiny lesions on the video screen. But AI tools often stumble when they are moved from one hospital to another, because the images look slightly different depending on the camera system. This study introduces a new technique, called EndoStyle, that reshapes colonoscopy images so that AI systems can perform more reliably across different machines.

Why Endoscopy Images Don’t All Look Alike

A colonoscopy may look the same to a patient, but under the hood each clinic uses its own video processor and camera. These devices vary in color tone, brightness, contrast, sharpness, and even the size and position of the round image on the screen. For an AI system trained at one hospital, this “domain shift” can be a major shock: the new images no longer match what it learned, and accuracy can drop. Today’s standard fixes—like flipping, rotating, or slightly brightening images during training—do not reproduce the distinctive look of different video systems. As a result, commercial AI assistants are usually tuned to specific hardware and may not work as well elsewhere.
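To make the limitation of standard augmentation concrete, here is a minimal Python sketch. It is an illustration only, not code from the study: the function names `standard_augment` and `colour_balance`, the toy frame, and the 0.9 to 1.1 brightness range are all assumptions. It applies a random flip, a random 90-degree rotation, and a slight brightness change, then compares a crude measure of color tone before and after.

```python
import random

def standard_augment(image, rng):
    """Generic augmentation: random flip, random 90-degree rotation, small brightness change.

    `image` is a list of rows; each row is a list of (r, g, b) tuples with values 0-255.
    """
    out = [list(row) for row in image]
    if rng.random() < 0.5:
        out = [row[::-1] for row in out]              # horizontal flip
    for _ in range(rng.randrange(4)):                 # rotate by 0, 90, 180 or 270 degrees
        out = [list(col) for col in zip(*out[::-1])]  # one clockwise quarter turn
    gain = rng.uniform(0.9, 1.1)                      # hypothetical "slight brightening" range
    return [[tuple(min(255, round(c * gain)) for c in px) for px in row] for row in out]

def colour_balance(image):
    """Each channel's mean divided by the mean of all channels: a crude proxy for color tone."""
    pixels = [px for row in image for px in row]
    means = [sum(px[i] for px in pixels) / len(pixels) for i in range(3)]
    overall = sum(means) / 3
    return [m / overall for m in means]

# Toy reddish "frame" with made-up pixel values (not real endoscopy data).
rng = random.Random(0)
frame = [[(rng.randint(120, 199), rng.randint(60, 119), rng.randint(40, 89))
          for _ in range(48)] for _ in range(32)]

augmented = standard_augment(frame, rng)
before = colour_balance(frame)
after = colour_balance(augmented)
print(before)
print(after)
```

The two printed color balances come out nearly identical, because flips, rotations, and a uniform brightness change leave the relationship between the color channels untouched. A different video processor, by contrast, shifts exactly that kind of property, which is why these generic tricks cannot stand in for images from the new device.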

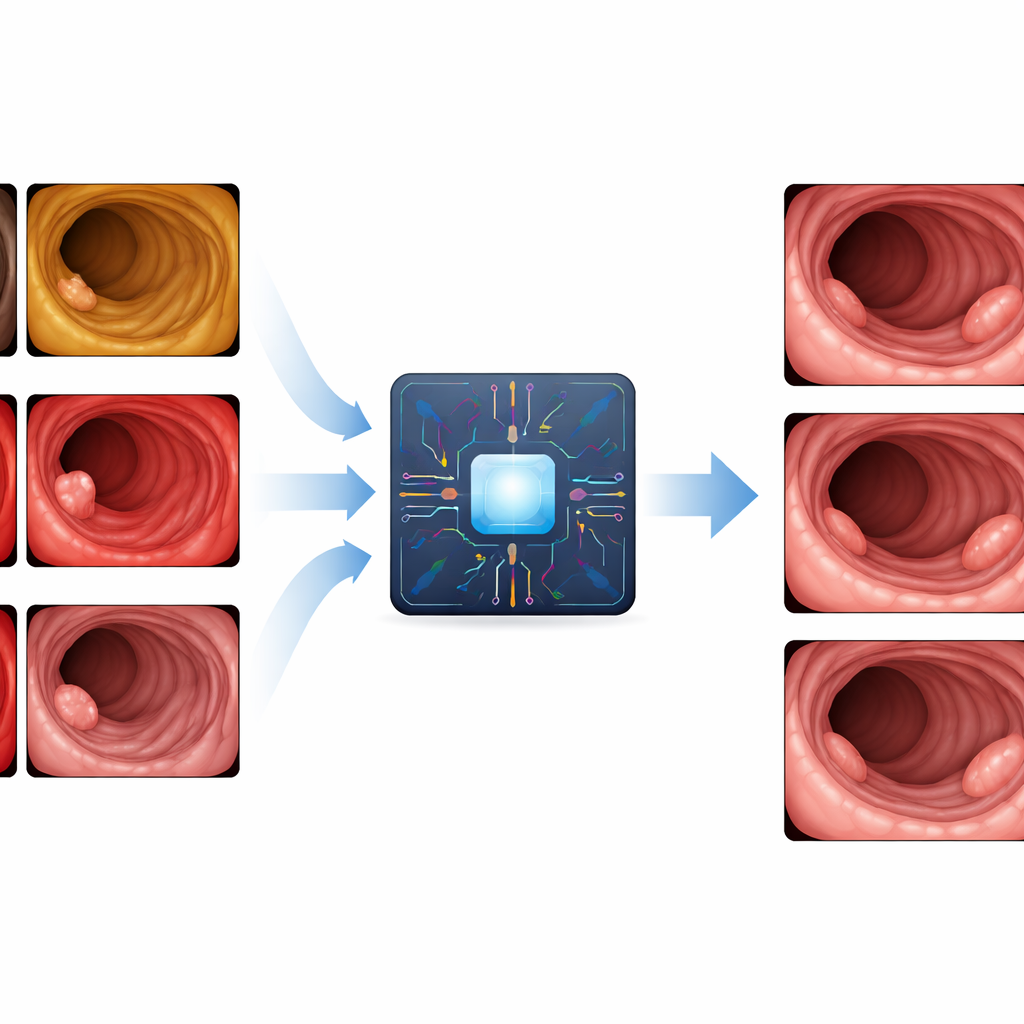

Teaching Images to Match the Local Camera Style

The researchers designed EndoStyle to act like a digital translator between endoscopy systems. It takes a colonoscopy frame and a target “style” (for example, the visual look of a specific video processor) and produces a new image that keeps the anatomy and any polyps intact but changes the overall appearance to match the target device. Internally, EndoStyle combines an image-translation network with a super‑resolution model that sharpens the final output. Special safeguards make sure that important features, such as the folds of the bowel and subtle flat polyps, are preserved rather than washed out, and that no new features are accidentally invented.

Checking Whether the Fake Looks Real

To test whether EndoStyle’s synthetic images were trustworthy, the team first compared them to real colonoscopy images using computer vision metrics that capture visual realism and perceptual similarity. Across five different video processors and hundreds of thousands of frames, the converted images closely matched real ones in both overall look and detailed texture. Three independent AI models, trained to understand medical images, also judged the new pictures to be simultaneously similar to the original content and to the target device style. Next, 22 experienced endoscopists from 16 centers took part in a blinded experiment. They watched short colonoscopy videos and were shown still frames, some real and some generated by EndoStyle. Doctors were asked which images came from the same exam. They chose EndoStyle images almost as often as true frames and far more often than obvious mismatches, suggesting that the synthetic pictures passed a real‑world “smell test.”

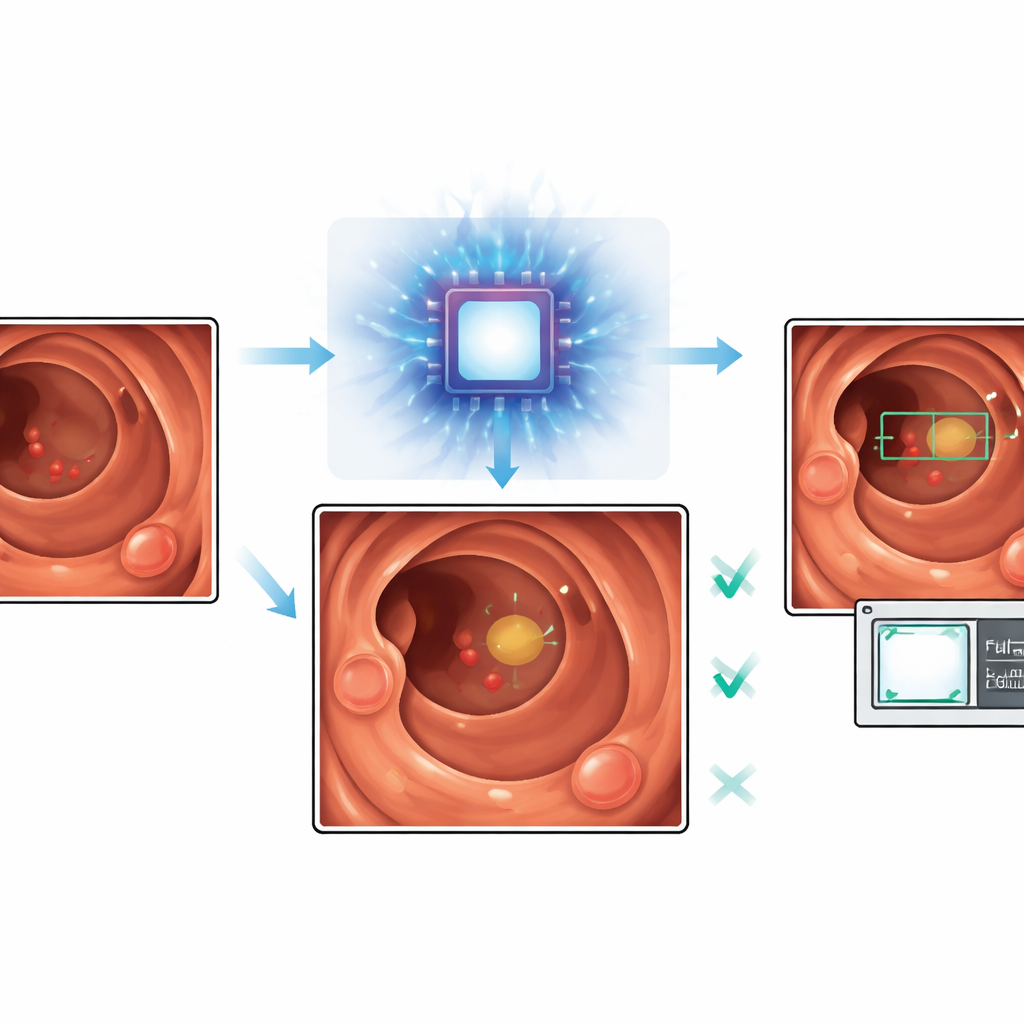

Helping AI Find the Right Polyps and Ignore the Noise

The authors then asked whether these realistic, style‑matched images could actually improve AI polyp detection. They trained several detection systems on a mix of public colonoscopy images and EndoStyle‑generated frames adjusted to match two specific Olympus processors used in the test videos. They also examined a tougher case where the original training data came from a different brand (Fujifilm) that EndoStyle had never seen during its own training. In both situations, adding synthetic images tuned to the target processor made the AI models more selective: false alarms dropped by roughly one quarter to over 40 percent, depending on the setup, while sensitivity—how often true polyps were detected—fell only modestly. Importantly, the synthetic images did not appear to create fake polyps, and most of the few missed lesions were already difficult for both baseline AI and commercial tools.
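The two quantities in this trade-off can be written down in a few lines. The Python sketch below is illustrative only: the function names are assumptions, and the counts are invented to show the arithmetic, not taken from the study.

```python
def sensitivity(true_positives, false_negatives):
    """Fraction of real polyps that the detector found."""
    return true_positives / (true_positives + false_negatives)

def relative_reduction(baseline, adapted):
    """Fractional drop from a baseline count to the count after adaptation."""
    return (baseline - adapted) / baseline

# Hypothetical counts, chosen only to illustrate the calculation.
baseline_false_alarms = 200   # false alarms from a model trained without style-matched images
adapted_false_alarms = 140    # false alarms after adding style-matched images

false_alarm_drop = relative_reduction(baseline_false_alarms, adapted_false_alarms)
baseline_sensitivity = sensitivity(true_positives=95, false_negatives=5)
adapted_sensitivity = sensitivity(true_positives=93, false_negatives=7)

print(f"False alarms reduced by {false_alarm_drop:.0%}")
print(f"Sensitivity: {baseline_sensitivity:.0%} -> {adapted_sensitivity:.0%}")
```

With these made-up numbers, a large relative drop in false alarms is paired with a small loss in sensitivity, which is the general pattern the paragraph above describes.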

What This Could Mean for Patients and Clinics

By showing that realistic, processor‑specific synthetic images can be created and safely used to train detection algorithms, this work offers a practical way to make colonoscopy AI more portable and reliable. Instead of collecting and labeling large new datasets every time a clinic upgrades or changes its equipment, developers could adapt existing image collections to the new device style with EndoStyle and retrain their systems with fewer extra steps. The immediate payoff is AI that raises fewer false alarms while still catching most important polyps, making the tools less distracting and more trustworthy for endoscopists. Over time, such style‑transfer approaches could help standardize AI performance across hospitals and support both better cancer prevention and more realistic training simulators for future doctors.

Citation: Troya, J., Kafetzis, I., Weber, R. et al. Gastrointestinal endoscopic image style transfer using EndoStyle to improve artificial intelligence prediction models. npj Digit. Med. 9, 340 (2026). https://doi.org/10.1038/s41746-026-02693-4

Keywords: colonoscopy, polyp detection, medical imaging AI, style transfer, domain shift