Clear Sky Science

Deep language model-based early recognition of out-of-hospital cardiac arrest from real-time emergency calls

Why Faster Heart Emergency Detection Matters

When someone’s heart suddenly stops outside a hospital, every second counts. Survival depends on how quickly someone recognizes the crisis, calls for help, and begins chest compressions. Yet even trained emergency dispatchers can miss these events during chaotic phone calls. This study explores whether an advanced language-based artificial intelligence system can follow emergency call transcripts as they are generated and flag possible cardiac arrest in real time, giving dispatchers an extra pair of ears during the most critical first minute.

What Happens During a 911-Style Call

Out-of-hospital cardiac arrest strikes without warning, stopping blood flow and threatening brain damage within minutes. Survival improves dramatically when bystanders start chest compressions quickly, often guided over the phone by dispatchers. But callers may be panicked, unsure what they are seeing, or misled by abnormal gasping that sounds like breathing. As a result, dispatchers fail to recognize cardiac arrest in roughly one out of four cases, delaying life-saving instructions. Traditional tools try to help by following rigid question lists or by spotting certain key words in calls, but these can be fooled by vague, emotional, or misleading language.

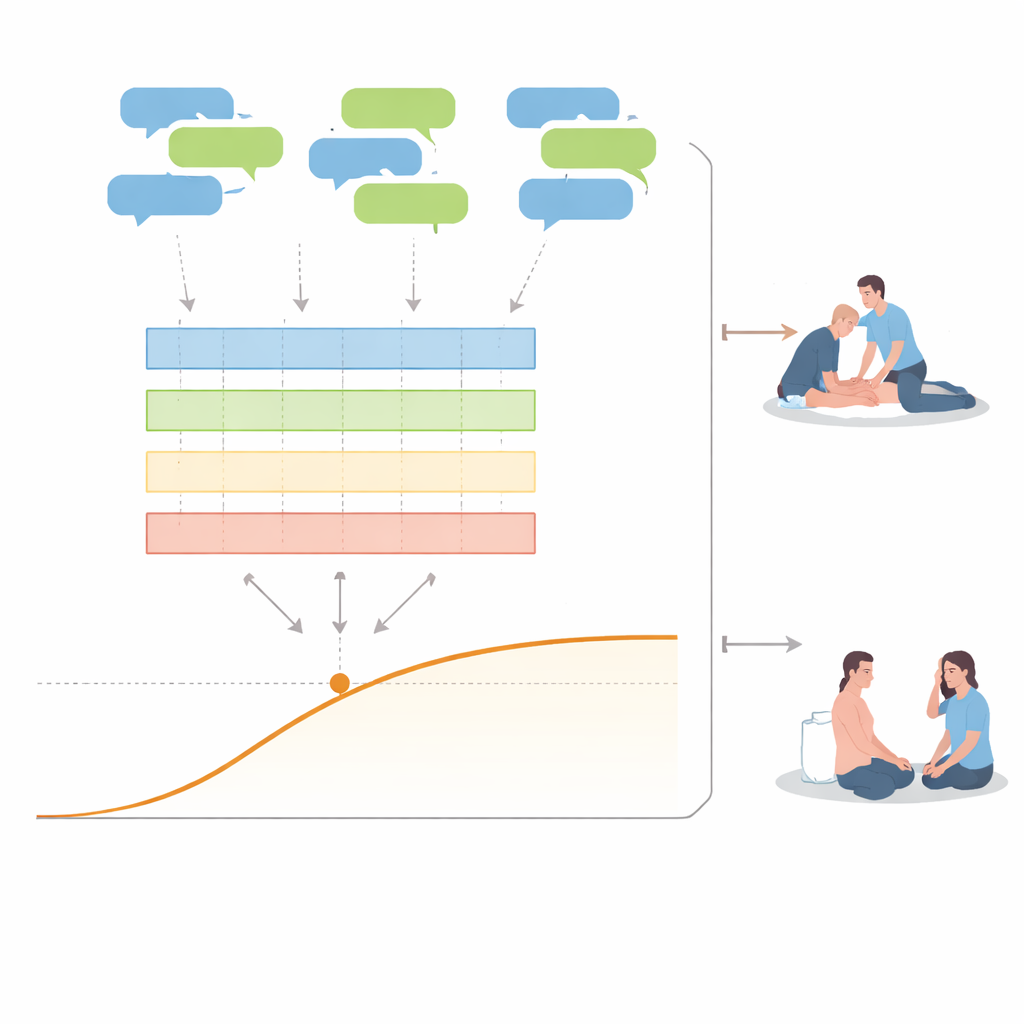

How a Conversation-Aware AI Was Built

The researchers developed a system called DyLM-OHCA that uses deep language modeling, a technique similar to that behind modern chatbots, to analyze emergency call transcripts. Instead of focusing on isolated words, the model reads the back-and-forth dialog between caller and dispatcher as it unfolds. It was trained on nearly 159,000 emergency calls from three large South Korean cities, including more than 2,600 confirmed cardiac arrest cases. During training, the model learned to convert every new word sequence into a running estimate of whether the caller is describing a cardiac arrest, a different severe emergency, or a less serious problem, all without needing detailed labels for each sentence.

How Well the AI Recognizes Cardiac Arrest

The team tested DyLM-OHCA on calls it had never seen before and asked it to decide, within the first 60 seconds, whether a cardiac arrest was occurring. Compared with four established machine-learning methods that rely on hand-crafted word statistics, the language-based system was substantially more accurate. By standard summary measures of accuracy, it separated cardiac arrest calls from other calls correctly in more than nine out of ten cases, and it identified about four out of five true cardiac arrests within that first minute. On average, it raised its first alert around 20 seconds into the call, more than 20 seconds faster than some earlier computer models reported in other studies, while still keeping incorrect alerts to under one in ten calls.
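To make these measures concrete, here is a minimal Python sketch of how detection within the first 60 seconds, the incorrect-alert rate, and the average time to first alert could be computed from per-call risk scores. It is not the study's evaluation code. The data format (time-stamped risk scores per call, sorted by time), the 0.5 alert threshold, and the function names `first_alert_time` and `summarize` are all assumptions made for illustration.

```python
from typing import List, Optional, Tuple

# Hypothetical format: one call = list of (seconds_into_call, risk_score),
# sorted by time. Neither the format nor the threshold comes from the study.
Trajectory = List[Tuple[float, float]]


def first_alert_time(traj: Trajectory,
                     threshold: float = 0.5,
                     window_s: float = 60.0) -> Optional[float]:
    """Return the time of the first score at or above threshold
    within the window, or None if no alert fires in that window."""
    for t, risk in traj:
        if t > window_s:
            break
        if risk >= threshold:
            return t
    return None


def summarize(calls: List[Tuple[Trajectory, bool]],
              threshold: float = 0.5,
              window_s: float = 60.0) -> dict:
    """Compute sensitivity, false-alert rate, and mean time to first alert.
    Each item in calls is (trajectory, is_confirmed_cardiac_arrest)."""
    tp = fn = fp = tn = 0
    alert_times = []
    for traj, is_arrest in calls:
        t = first_alert_time(traj, threshold, window_s)
        if is_arrest and t is not None:
            tp += 1
            alert_times.append(t)
        elif is_arrest:
            fn += 1
        elif t is not None:
            fp += 1
        else:
            tn += 1
    return {
        "sensitivity": tp / (tp + fn) if (tp + fn) else None,
        "false_alert_rate": fp / (fp + tn) if (fp + tn) else None,
        "mean_time_to_alert_s": (sum(alert_times) / len(alert_times)
                                 if alert_times else None),
    }
```

In this sketch, an alert that first fires after the 60-second window counts as a miss, which matches the idea of judging the system on the first minute only.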

What the AI Actually Listens For

To understand the system’s decisions, the researchers examined which parts of the conversation most strongly pushed the model toward a cardiac arrest warning. Rather than latching onto a single magic word, the model responded to patterns: a dispatcher asking if the person is conscious or breathing, followed by a caller saying things like “not waking up” or hinting that something feels seriously wrong. The researchers also tracked how the model’s confidence changed over the course of each call. In most true cardiac arrest calls, once the risk score rose, it stayed high. In more than half of the false alarms, however, the risk peaked early and then dropped as the conversation revealed details pointing to other emergencies such as seizures or breathing attacks.
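The difference between a risk score that stays high and one that peaks and then falls can be expressed as a simple rule. The Python sketch below is illustrative only and is not the researchers' analysis method. The function name `alert_pattern`, the 0.5 threshold, and the exact definition of "sustained" (every score from the first crossing onward stays at or above the threshold) are assumptions.

```python
from typing import Sequence


def alert_pattern(scores: Sequence[float], threshold: float = 0.5) -> str:
    """Label a call's risk trajectory (hypothetical rule, for illustration).

    "no_alert"  : the score never reaches the threshold
    "sustained" : once the score reaches the threshold, it never drops below
    "transient" : the score reaches the threshold but later falls below it
    """
    above = [s >= threshold for s in scores]
    if not any(above):
        return "no_alert"
    first = above.index(True)  # position of the first alert
    return "sustained" if all(above[first:]) else "transient"
```

Under this rule, a trajectory such as `[0.1, 0.6, 0.8, 0.9]` is labeled sustained, while `[0.2, 0.7, 0.4, 0.2]` is labeled transient, the pattern described above for many false alarms.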

How This Could Help Real Dispatchers

From a practical standpoint, DyLM-OHCA is designed as a silent assistant, not a replacement for human judgment. As a call unfolds, it would continuously update a risk signal that the dispatcher can see, along with simple explanations of which recent phrases drove the alert. This context could make the system’s warnings easier to trust than black-box alerts from older models and might prompt more focused questions or earlier chest-compression instructions when seconds matter. The study was done on Korean-language calls from one country and has not yet been tested live, but it suggests that conversation-aware AI could meaningfully boost early recognition of cardiac arrest and, ultimately, improve survival.

Citation: Choi, HJ., Hwang, M., Cho, S. et al. Deep language model-based early recognition of out-of-hospital cardiac arrest from real-time emergency calls. npj Digit. Med. 9, 307 (2026). https://doi.org/10.1038/s41746-026-02498-5

Keywords: cardiac arrest, emergency calls, artificial intelligence, language models, dispatcher-assisted CPR