Clear Sky Science

Clarifying validation terminologies in healthcare

Why the Word “Validation” Matters

When we hear that a medical test, an AI tool, or a new device is “validated,” we tend to relax and assume it is safe, accurate, and ready for use. But in modern healthcare, that single word can mean very different things to doctors, data scientists, regulators, and business leaders. This article explores how those hidden differences can cause confusion, slow down innovation, and even threaten patient trust—and it proposes practical ways to make the meaning of “validation” clearer and more honest.

One Word, Many Different Worlds

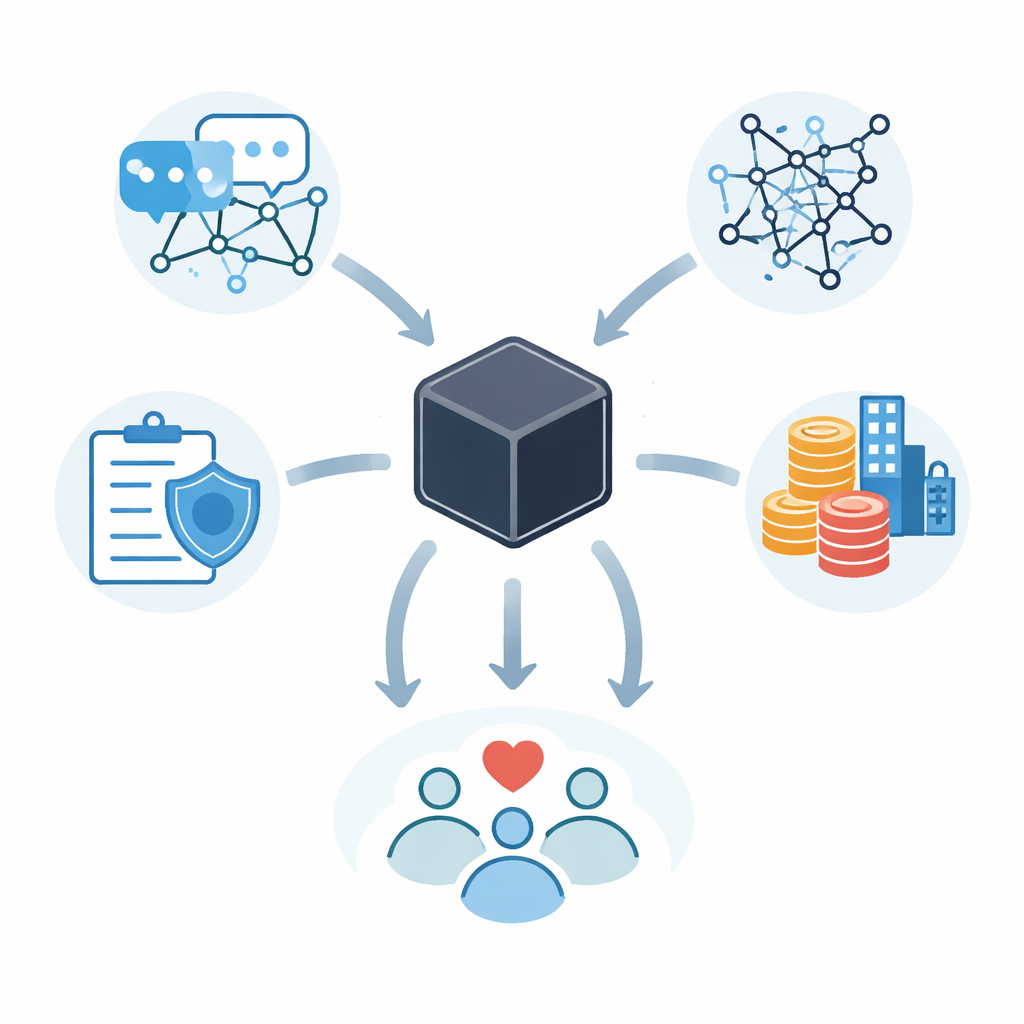

The authors brought together experts from five areas that all rely on validation: communication science, artificial intelligence and machine learning (AI/ML), clinical and laboratory practice, regulatory science, and business. By reviewing 94 key papers, guidelines, and reports, they found that each field quietly builds its own assumptions into the word. In plain terms, “validated” might mean a computer model works well on past data, a lab test is technically precise, a product meets legal standards, or a business idea has investors. None of these meanings is wrong, but mixing them up causes trouble. For example, a tool called “validated” in a research paper may still be far from ready for real patients.

How Communication Shapes Understanding

Communication science studies how people share information, especially when uncertainty is involved. The authors show that, across healthcare and technology, people rarely spell out what exactly has been validated, for whom, and under what conditions. Instead, they rely on shorthand. This makes it easy for teams to talk past one another, a problem the authors frame as a lack of “semantic interoperability”—a shared understanding of what words actually mean in practice. They suggest simple but powerful fixes: start every project by agreeing on what validation will cover; separate fact-finding from debate; and teach team members the basic validation approaches used outside their own specialty. Their first consensus proposal is straightforward: always define the term in context before relying on it.

From Data to Patients: Getting AI and Tests Right

In AI/ML, the term validation is especially tangled. It can refer to checking whether a dataset is suitable, using a “validation set” during model tuning, or judging whether a finished model truly works in the real world. Without clear boundaries, teams may overstate readiness—for example, calling a model “validated” after only internal cross-checks. Recent regulatory frameworks encourage a stepwise view: assess whether the data represent the intended patients, train and tune the model, test it on unseen data, and then perform safety checks and clinical evaluation. In clinical laboratories, a different split is crucial: “analytical validation” shows that a test is technically accurate and reproducible, while “clinical validation” shows that its results are actually meaningful for diagnosis or treatment. A lab test can be technically excellent yet still unproven in terms of improving patient care.
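The difference between a “validation set” used for tuning and a held-out test set can be made concrete with a small sketch. The Python below is only an illustration under stated assumptions: the function name `three_way_split`, the 60/20/20 proportions, and the integer stand-ins for patient records are all hypothetical and do not come from the paper.

```python
import random


def three_way_split(records, val_fraction=0.2, test_fraction=0.2, seed=0):
    """Shuffle once, then cut into train / validation / test partitions.

    Hypothetical helper for illustration; the fractions are assumptions.
    """
    if not 0 < val_fraction + test_fraction < 1:
        raise ValueError("val_fraction + test_fraction must be between 0 and 1")

    shuffled = list(records)
    random.Random(seed).shuffle(shuffled)  # seeded for reproducibility

    n_test = round(len(shuffled) * test_fraction)
    n_val = round(len(shuffled) * val_fraction)

    test = shuffled[:n_test]                 # touched only once, at the end
    val = shuffled[n_test:n_test + n_val]    # used while tuning the model
    train = shuffled[n_test + n_val:]        # used to fit the model
    return train, val, test


# Integers stand in for de-identified patient records (illustrative only).
patients = list(range(100))
train, val, test = three_way_split(patients)
print(len(train), len(val), len(test))
```

In this sketch, good performance on `val` says only that tuning went well, and good performance on `test` covers only the "unseen data" step. Neither one stands in for the safety checks and clinical evaluation that come later in the stepwise view.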

Rules, Markets, and Real-World Use

Regulators and businesses add more layers to the story. Regulatory science uses validation to mean objective proof that a product meets safety and performance requirements for a specific purpose, but details differ between regions such as the United States and the European Union. At the same time, business teams talk about validating a product when they confirm demand, investor interest, or successful pilots in hospitals. A product might look “validated” from a market angle yet fail to meet regulatory or clinical expectations, leading to late-stage redesigns and delays. The authors argue that these uses should not be forced into one rigid definition; instead, people should always signal which kind of validation they mean—technical, clinical, regulatory, or business—and how far along they really are.

Small Changes for Clearer Promises

Rather than inventing new jargon, the paper proposes lightweight adjustments: attach short qualifiers to claims, such as “validated for this patient group,” “validated against this reference,” or “validated under these operating conditions.” Across five consensus proposals, the message is consistent: separate stages of AI development, distinguish analytical from clinical evidence, spell out regulatory settings, and align business claims with clinical and legal reality. Used this way, “validation” stops being a vague badge of quality and becomes a precise, context-aware promise. That clarity can reduce misunderstandings, cut wasteful rework, and, most importantly, help ensure that when patients hear a tool is “validated,” it truly means what they think it does.
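For teams that track such claims in software, the qualifiers could be stored as fields of a small record instead of a bare yes/no flag. The sketch below is one possible shape, not something the paper specifies: the class name `ValidationClaim`, its field names, and the example values are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional

# The four kinds of validation named in the article.
KINDS = ("technical", "clinical", "regulatory", "business")


@dataclass(frozen=True)
class ValidationClaim:
    """Hypothetical record pairing a validation claim with its qualifiers."""
    kind: str
    population: Optional[str] = None   # "validated for this patient group"
    reference: Optional[str] = None    # "validated against this reference"
    conditions: Optional[str] = None   # "validated under these conditions"

    def __post_init__(self):
        if self.kind not in KINDS:
            raise ValueError(f"kind must be one of {KINDS}")

    def describe(self) -> str:
        qualifiers = []
        if self.population:
            qualifiers.append(f"for {self.population}")
        if self.reference:
            qualifiers.append(f"against {self.reference}")
        if self.conditions:
            qualifiers.append(f"under {self.conditions}")
        if not qualifiers:
            # Make a missing scope visible instead of silently omitting it.
            return f"validated ({self.kind}), scope not stated"
        return f"validated ({self.kind}) " + ", ".join(qualifiers)


# Example values are invented for illustration.
claim = ValidationClaim(
    kind="clinical",
    population="adults in outpatient care",
    reference="an existing reference test",
)
print(claim.describe())
```

The design choice worth noting is that an unqualified claim is still allowed but is reported as "scope not stated", so the gap stays visible to the reader.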

Citation: Dy, A., Buetow, S.M., Bredemeyer, A.J. et al. Clarifying validation terminologies in healthcare. npj Digit. Med. 9, 318 (2026). https://doi.org/10.1038/s41746-026-02471-2

Keywords: healthcare validation, medical AI, clinical diagnostics, regulatory science, digital health