
Hierarchical deep learning pipeline for robust cervical parameter measurement in radiographs with C7 obscuration

Why Neck X-Rays Need a Helping Hand

When doctors plan surgery on the neck or track spinal problems over time, they rely on precise measurements taken from side-view X-rays of the cervical spine. These angles and distances, which describe how the neck curves and lines up with the rest of the body, are closely linked to pain relief, nerve function, and long-term outcomes. Yet in everyday practice, these measurements are slow, can vary from one specialist to another, and are often thrown off when part of the lowest neck bone (the C7 vertebra) is hidden by a patient’s shoulders. This study introduces a new kind of artificial intelligence (AI) system designed specifically to tackle that real-life challenge.

A Tough Problem in Everyday Neck Imaging

Side-view X-rays of the neck are a workhorse in spine clinics worldwide. From these images, surgeons measure how much the neck curves backward (cervical lordosis), how each vertebra tilts, and how far the head sits in front of the spine. These values help decide whether surgery is needed, what type of operation to perform, and how well a patient is recovering. However, taking these measurements by hand is time-consuming and can be inconsistent, especially when different clinicians draw slightly different lines on the same image. Making matters worse, in many X-rays the C7 vertebra—the key reference point at the base of the neck—is partially or completely hidden behind the patient’s shoulders, making accurate measurement difficult even for experts.
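To make these measurements concrete, here is a minimal Python sketch of how an angle and an offset could be computed once landmark points have been marked on an image. It is an illustration only: the function names, the choice of endplate corners as inputs, and the pixel coordinate convention (x to the right, y downward) are assumptions, not definitions taken from the study.

```python
import math


def tilt_deg(anterior, posterior):
    """Tilt of the line through two endplate corners, relative to horizontal.

    Points are (x, y) pixel coordinates with y growing downward (assumed).
    """
    dx = anterior[0] - posterior[0]
    dy = anterior[1] - posterior[1]
    return math.degrees(math.atan2(dy, dx))


def angle_between_deg(line_a, line_b):
    """Unsigned angle between two lines, each given as a pair of points.

    A curvature angle of this kind is often measured between an upper and
    a lower vertebral endplate; which endplates to use is left to the caller.
    """
    diff = abs(tilt_deg(*line_a) - tilt_deg(*line_b)) % 180.0
    return min(diff, 180.0 - diff)


def horizontal_offset(upper_point, lower_point):
    """Horizontal distance (in pixels) of an upper landmark from a lower one."""
    return upper_point[0] - lower_point[0]


# Hypothetical landmark coordinates, chosen only to exercise the functions.
upper_endplate = ((10.0, 0.0), (0.0, 0.0))    # horizontal line
lower_endplate = ((10.0, 10.0), (0.0, 0.0))   # line tilted by 45 degrees
curvature = angle_between_deg(upper_endplate, lower_endplate)
offset = horizontal_offset((30.0, 5.0), (12.0, 80.0))
```

Because each value depends directly on where the corner points are placed, a small disagreement about a corner, or a corner hidden behind the shoulders, changes the resulting angle. That is why a hidden C7 is such a problem for manual measurement.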

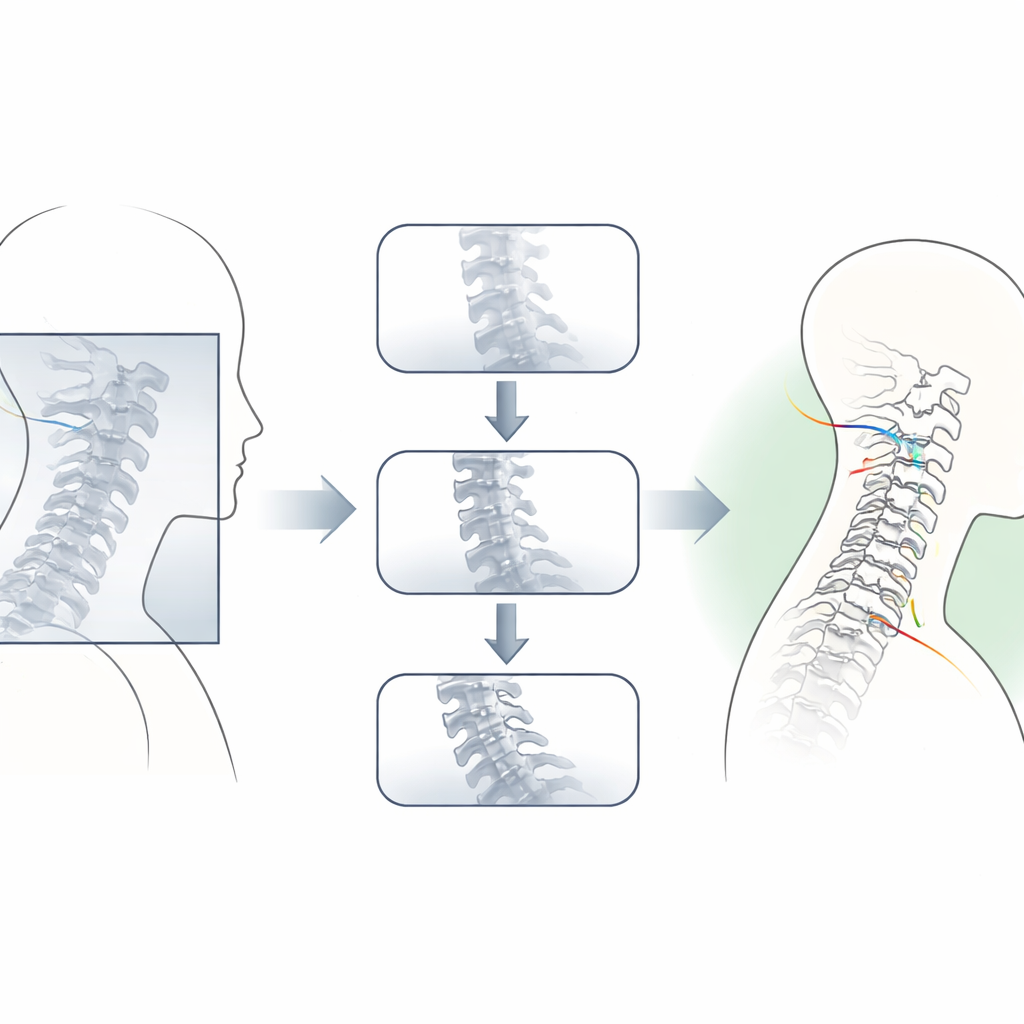

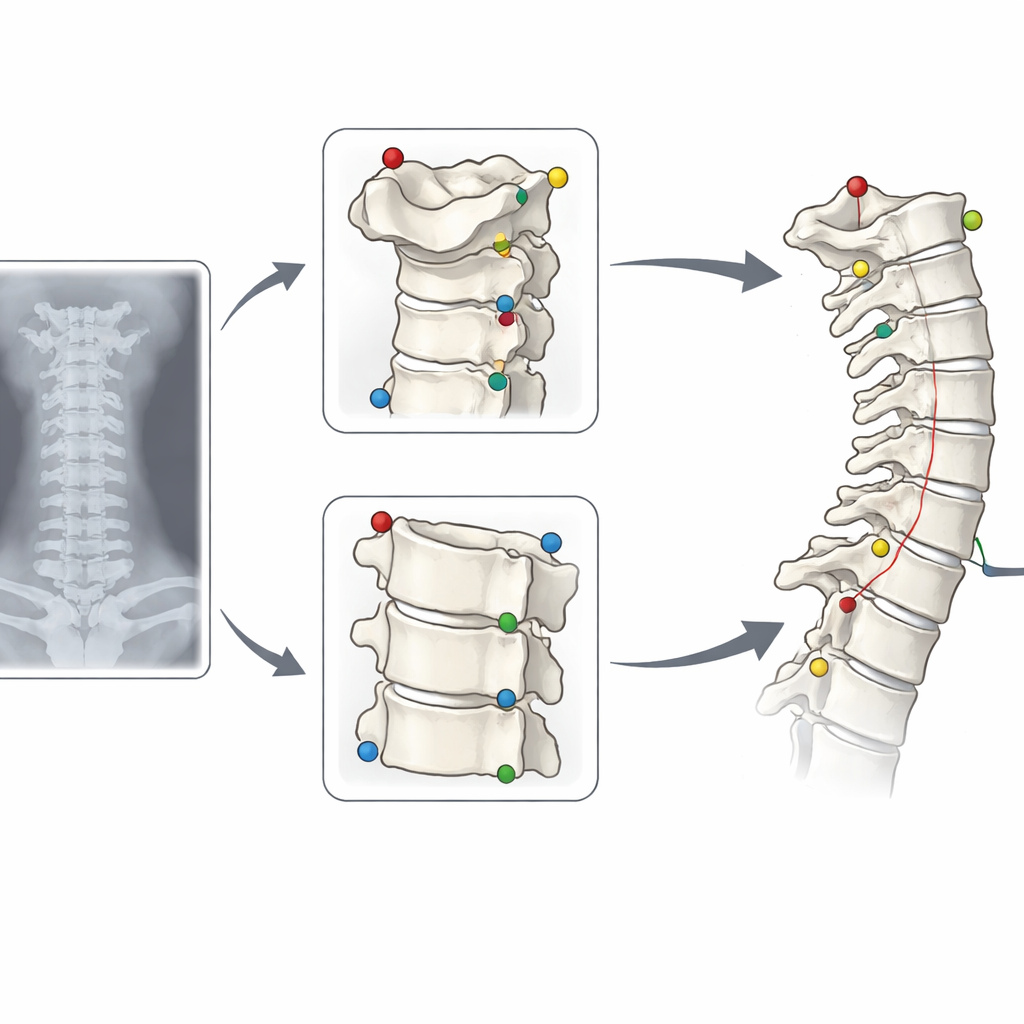

How a Step-by-Step AI System Reads the Spine

To address this, the researchers built a “hierarchical” or step-by-step deep learning pipeline that mimics how a careful human might tackle a tricky image. First, a global model scans the entire X-ray, resized to a standard square, and makes coarse guesses about where important points on the vertebrae lie from C2 to C7. Then, instead of stopping there, a separate module estimates where the hidden C7 region should be based on the more easily seen bones above it. With this guidance, the system crops high-resolution images around the top (C2) and bottom (C7) of the neck. Two specialist models then re-examine these smaller patches, fine-tuning the positions of key corners and centers. Finally, the system combines the refined top and bottom landmarks with the original mid-neck points to calculate the clinically important angles and offsets.
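The coarse-to-fine flow described above can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not the authors' implementation. The deep learning models are left out entirely, and the straight-line extrapolation used to guess the hidden C7 position is a naive stand-in for the study's dedicated estimation module. All function names, the crop size, and the coordinates are hypothetical.

```python
def extrapolate_next_center(centers):
    """Guess the next vertebra center by continuing the last step.

    Naive stand-in (assumption): the study uses a separate learned module.
    """
    (x1, y1), (x2, y2) = centers[-2], centers[-1]
    return (2 * x2 - x1, 2 * y2 - y1)


def crop_box(center, size, width, height):
    """Square crop of `size` pixels around `center`, kept inside the image.

    Returns (left, top, crop_width, crop_height).
    """
    half = size // 2
    left = min(max(int(round(center[0])) - half, 0), max(width - size, 0))
    top = min(max(int(round(center[1])) - half, 0), max(height - size, 0))
    return left, top, min(size, width), min(size, height)


def to_full_image(point_in_crop, box):
    """Map a point found inside a crop back to full-image coordinates."""
    left, top, _, _ = box
    return (point_in_crop[0] + left, point_in_crop[1] + top)


def merge_landmarks(coarse, refined):
    """Keep coarse points, but let refined points override where available."""
    merged = dict(coarse)
    merged.update(refined)
    return merged


# Hypothetical coarse centers for two visible vertebrae above C7.
visible_centers = [(100, 200), (110, 260)]
c7_guess = extrapolate_next_center(visible_centers)
c7_box = crop_box(c7_guess, size=128, width=512, height=512)

# Pretend a specialist model located a corner at (10, 20) inside the crop.
c7_corner = to_full_image((10, 20), c7_box)
landmarks = merge_landmarks(
    {"C4_center": (95, 150), "C7_corner": (60, 270)},
    {"C7_corner": c7_corner},
)
```

The design point is that the refined models only ever see a small, high-resolution patch, so their outputs must be shifted back into the coordinate frame of the full X-ray before any angle is calculated.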

Putting the System to the Test

The team trained the models on more than 5,600 X-rays from multinational datasets that deliberately included many difficult cases with poor views of C7. They then tested the system on two separate groups: an internal set from the same hospital and an external set from a public database specifically enriched with images where C7 was partly or fully hidden. Across both groups, the AI’s measurements of neck curvature and vertebral tilt agreed extremely well with expert-drawn “ground truth” values. On the challenging external data, agreement scores were in the “excellent” range for all major parameters, with typical errors of only a few degrees. Importantly, the hierarchical pipeline outperformed a simpler one-step model, especially when C7 was obscured. In some of the hardest cases, it reduced large errors of around 10 degrees down to less than a quarter of a degree, effectively rescuing measurements that would otherwise have been unreliable.

More Consistent Than Human Readers

The researchers also compared the AI’s repeatability to that of human specialists. When the same expert measured the same images twice, results for some angles—particularly those involving C7—could differ noticeably from one attempt to the next. By contrast, when the AI processed the same X-ray repeatedly, its outputs were almost perfectly identical. Remarkably, the agreement between the AI and each specialist for the difficult C7-related angle was higher than the agreement between the two human readers themselves. This suggests that, rather than replacing clinicians, the system can serve as a stable, standardized measuring tool that reduces human variability, especially in borderline or obscured cases.

What This Means for Patients and Clinics

For patients, the practical message is that computers can now help spine surgeons read neck X-rays more quickly and consistently, even when parts of the image are hard to see. The study shows that a carefully designed, multi-step AI system can handle messy, real-world imaging conditions instead of relying only on perfect pictures. While more work is needed to test the approach on post-surgery images, different scanners, and full-spine views, this hierarchical pipeline sets a new benchmark for automated cervical spine analysis. It points toward a future in which AI quietly works in the background, flagging problematic images for human review and providing trustworthy measurements that support safer, more precise care.

Citation: Kang, DH., Park, SJ., Park, JS. et al. Hierarchical deep learning pipeline for robust cervical parameter measurement in radiographs with C7 obscuration. npj Digit. Med. 9, 261 (2026). https://doi.org/10.1038/s41746-026-02455-2

Keywords: cervical spine, medical imaging AI, deep learning, spinal alignment, radiograph analysis