Clear Sky Science

Computer vision applications in vascular surgery: a systematic review and critical appraisal

Smart Cameras for Blood Vessel Doctors

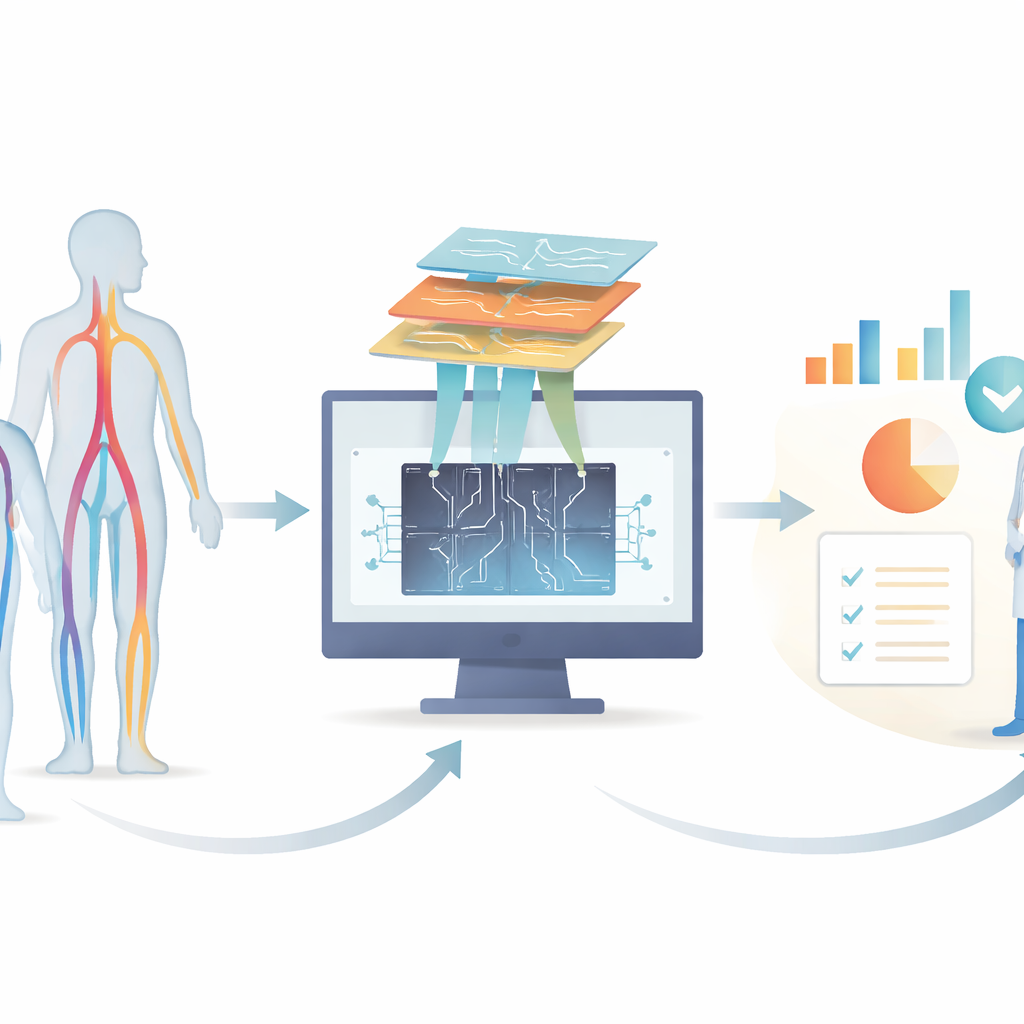

Doctors who treat blood vessel problems rely heavily on medical images, from body scans to simple photos of wounds. In recent years, computer vision—software that “sees” and interprets images—has advanced so quickly that it can sometimes match medical experts. This article looks across hundreds of studies to see how these image‑reading tools are being used in vascular surgery, how well they work, and what still needs to improve before they can safely help doctors care for patients.

Where the Research Is Growing Fast

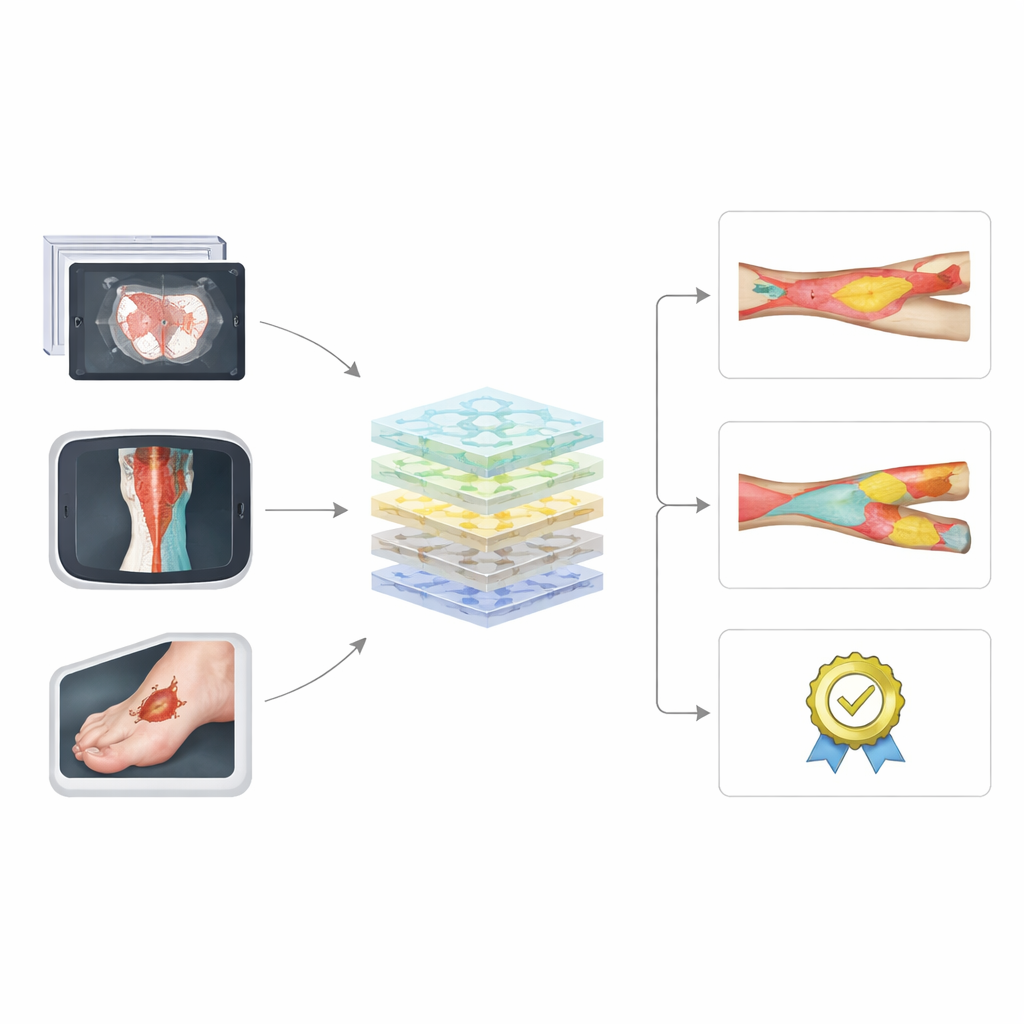

The authors searched major medical and technical databases and found 288 studies, with an explosion of work after 2017 and another wave in 2024–2025. Most projects analyzed existing images after the fact (retrospective studies) rather than following patients forward in time. Researchers mainly targeted three problems: ballooning or tearing of the body’s main artery (aortic aneurysms and dissections), narrowing of the neck (carotid) arteries that supply the brain, and hard‑to‑heal foot sores, especially in people with diabetes. In contrast, blocked leg arteries and vein disease, which are everyday issues in vascular clinics, were rarely studied, even though these conditions can cause pain, disability, and amputations.

What the Computers Actually Look At

Across the studies, the computers were fed many kinds of images. For aortic disease, most used CT scans; for neck artery disease they leaned on ultrasound; for foot ulcers they relied on standard photos and thermal (heat‑sensing) images. The most common tools were modern deep‑learning networks, especially U‑Net and other convolutional neural networks, sometimes combined into ensembles that pool the outputs of several models. These systems were usually asked to outline structures (such as the inner channel and wall of an artery, or the edges of a wound), measure sizes, or sort images into categories such as “diseased” or “healthy.” Many models performed impressively on paper, sometimes closely matching specialist doctors at tasks such as measuring aneurysm size or tracking the area of a foot ulcer.
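To make the idea of “outline, then measure” concrete, here is a minimal Python sketch of how a wound outline could be turned into an area measurement. It is an illustration only, not a method taken from the reviewed studies. It assumes the model has already produced a binary mask (1 = wound pixel, 0 = background) and that the real‑world size of one square pixel is known, for example from a calibration marker in the photo. The function names, the `mm_per_pixel` parameter, and the tiny example masks are all hypothetical.

```python
# Hypothetical sketch: turn a wound segmentation mask into an area measurement.
# Assumes the mask is a 2D list of 0/1 values (1 = wound pixel) and that each
# pixel is square with a known side length in millimetres (mm_per_pixel).


def mask_area_mm2(mask, mm_per_pixel):
    """Area covered by 1-pixels in a binary mask, in square millimetres."""
    # Count wound pixels, then scale by the physical area of one pixel.
    wound_pixels = sum(sum(row) for row in mask)
    return wound_pixels * mm_per_pixel ** 2


def percent_area_change(baseline_mm2, followup_mm2):
    """Relative change in area between two visits; negative means the wound shrank."""
    return 100.0 * (followup_mm2 - baseline_mm2) / baseline_mm2


if __name__ == "__main__":
    # Tiny made-up masks standing in for a model's output at two clinic visits.
    visit_1 = [
        [0, 1, 1, 0],
        [1, 1, 1, 1],
        [0, 1, 1, 0],
    ]
    visit_2 = [
        [0, 0, 1, 0],
        [0, 1, 1, 1],
        [0, 0, 1, 0],
    ]
    area_1 = mask_area_mm2(visit_1, mm_per_pixel=0.5)
    area_2 = mask_area_mm2(visit_2, mm_per_pixel=0.5)
    print(f"visit 1: {area_1} mm^2, visit 2: {area_2} mm^2")
    print(f"change: {percent_area_change(area_1, area_2):.1f}%")
```

Comparing areas across visits is one simple way to follow whether an ulcer is healing. A real system would also need to account for camera distance and angle, which this sketch ignores.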

How Reliable and Fair Are These Tools?

Despite promising results, the review found serious concerns about how these systems were built and tested. Only a minority tested their models on outside data from other hospitals (external validation), which is crucial to show that a model will work in the real world and not just on its home dataset. Many papers skipped basic safeguards, such as a separate validation set to catch over‑training or checks for class imbalance (when one category, such as healthy images, vastly outnumbers the other), and some even measured performance on the very images used for training. Reporting of results was also uneven: for image‑outlining tasks, about half reported the widely accepted Dice overlap score, and for yes‑or‑no predictions, fewer than one in five used the more robust AUROC (area under the receiver operating characteristic curve) measure. Very few studies examined whether their systems behaved differently across patient groups or imaging devices, leaving open questions about fairness and generalizability.
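One common way to avoid testing a model on images it has already seen is to split the data by patient rather than by image, so that every image from one person lands in exactly one of the training, validation, or test sets. The Python sketch below illustrates that idea under stated assumptions: each record is a dictionary with hypothetical `patient_id` and `image_id` fields, and the split fractions and seed are arbitrary choices. It is not drawn from any reviewed study, and it does not replace external validation on data from another hospital.

```python
import random


def split_by_patient(records, val_fraction=0.15, test_fraction=0.15, seed=0):
    """Assign every record to train/val/test so each patient appears in one split only."""
    # Shuffle the unique patient IDs (not the images) with a fixed seed for reproducibility.
    patients = sorted({r["patient_id"] for r in records})
    random.Random(seed).shuffle(patients)

    n_test = round(len(patients) * test_fraction)
    n_val = round(len(patients) * val_fraction)
    test_ids = set(patients[:n_test])
    val_ids = set(patients[n_test:n_test + n_val])

    splits = {"train": [], "val": [], "test": []}
    for r in records:
        if r["patient_id"] in test_ids:
            splits["test"].append(r)
        elif r["patient_id"] in val_ids:
            splits["val"].append(r)
        else:
            splits["train"].append(r)
    return splits


def shared_patients(split_a, split_b):
    """Return patient IDs present in both splits (should be an empty set)."""
    return {r["patient_id"] for r in split_a} & {r["patient_id"] for r in split_b}


if __name__ == "__main__":
    # Hypothetical dataset: 20 patients with 3 images each.
    records = [
        {"patient_id": f"P{p:02d}", "image_id": f"P{p:02d}_img{i}"}
        for p in range(20)
        for i in range(3)
    ]
    splits = split_by_patient(records)
    for name, rows in splits.items():
        print(name, len(rows), "images")
    print("train/test shared patients:", shared_patients(splits["train"], splits["test"]))
```

Splitting by image instead would let near‑identical scans from the same patient appear on both sides of the split, which can make a model look better than it really is.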

Gaps in What Gets Studied

The pattern of research does not mirror everyday patient needs. The bulk of work clusters around the aorta, neck arteries, and diabetic foot disease, areas with abundant imaging datasets and clearly defined tasks. Meanwhile, peripheral artery disease in the legs, which disproportionately affects people from racial minority groups and those with lower incomes, remains underexplored. The authors argue that computer vision could be particularly helpful here by predicting which patients will worsen and by automating complex scoring systems that currently take too long to use in busy clinics. Another overlooked opportunity is software that mimics the effect of contrast dye in scans, sparing patients (many of whom have kidney problems) from exposure to potentially harmful contrast agents; only a handful of vascular studies have tried this technique.

Raising the Bar for Future Work

To judge quality, the authors applied two established checklists that assess risk of bias and reporting quality in prediction studies. Only about one in five newer studies was rated at low risk of bias, and overall adherence to reporting standards stayed just above the halfway mark, though it has improved over time. Common gaps included unclear descriptions of how the “ground truth” labels were created, lack of transparency about data sources, missing information on how model settings were chosen, and almost no involvement of patients or public stakeholders. The authors call on researchers to follow these guidelines from the planning stage, use consistent performance measures (Dice for outlining, AUROC for yes‑or‑no decisions), share data and code when possible, and run prospective trials that test whether computer vision tools truly improve patient outcomes.
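The two recommended measures are simple to compute. The Dice score compares a predicted outline with the reference outline as twice the overlapping area divided by the sum of the two areas, so 1 means perfect agreement and 0 means no overlap at all. AUROC can be read as the probability that a randomly chosen diseased case receives a higher model score than a randomly chosen healthy case, with ties counted as half. The plain‑Python sketch below assumes masks are 2D lists of 0/1 values and labels are 1 (diseased) or 0 (healthy). It favors clarity over speed, and treating two empty masks as a perfect match is a convention chosen here, not a universal rule.

```python
def dice(pred_mask, true_mask):
    """Dice overlap of two binary masks: 2 * overlap / (area_pred + area_true)."""
    pred = [v for row in pred_mask for v in row]
    true = [v for row in true_mask for v in row]
    if len(pred) != len(true):
        raise ValueError("masks must have the same shape")
    overlap = sum(1 for p, t in zip(pred, true) if p and t)
    total = sum(pred) + sum(true)
    # Convention chosen here: two empty masks count as perfect agreement.
    return 1.0 if total == 0 else 2.0 * overlap / total


def auroc(labels, scores):
    """Probability that a random positive case outscores a random negative (ties = 0.5)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUROC needs at least one positive and one negative case")
    wins = 0.0
    # Compare every diseased case with every healthy case.
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


if __name__ == "__main__":
    predicted = [[1, 1, 0], [0, 1, 0]]
    reference = [[1, 0, 0], [0, 1, 1]]
    print("Dice:", round(dice(predicted, reference), 3))

    labels = [1, 1, 0, 0]          # 1 = diseased, 0 = healthy
    scores = [0.9, 0.4, 0.6, 0.2]  # model's predicted probability of disease
    print("AUROC:", auroc(labels, scores))
```

Because AUROC looks at how cases are ranked rather than at a single cut‑off, it is harder to flatter than plain accuracy when, for example, most images in a dataset are healthy.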

What This Means for Patients and Clinicians

Overall, the review paints a picture of powerful image‑reading tools that are still early in their journey from lab to clinic. Computer vision in vascular surgery already shows it can spot, outline, and measure blood vessel problems and wounds with impressive accuracy under controlled conditions. But until studies better reflect real‑world practice, include diverse patients, and meet stricter quality standards, these systems will remain mainly research curiosities rather than trusted partners in care. If developers and clinicians address these issues, computer vision could become a routine aid that speeds diagnosis, supports complex decisions, and expands access to specialist‑level assessment for people with vascular disease.

Citation: Liyanage, A., Li, B., Yi, J. et al. Computer vision applications in vascular surgery: a systematic review and critical appraisal. npj Digit. Med. 9, 260 (2026). https://doi.org/10.1038/s41746-026-02427-6

Keywords: computer vision in vascular surgery, medical imaging AI, aortic and carotid disease, diabetic foot ulcers, peripheral artery disease