Clear Sky Science · en

Artificial intelligence-based incident analysis and learning system to enhance patient safety and improve treatment quality

Why Smarter Incident Reviews Matter for Patients

Behind every safe cancer treatment lies an invisible layer of checking, double‑checking, and learning from close calls. Radiation therapy is highly precise, but its complex, team‑based workflows leave room for small mistakes that can ripple into big problems. Hospitals already collect thousands of reports on near misses and minor glitches, yet sifting through these stories by hand is slow and inconsistent. This article describes a new artificial intelligence–based system that helps experts rapidly analyze incident reports, uncover hidden patterns in how and why things go wrong, and turn that knowledge into safer care.

From Human Stories to System Learning

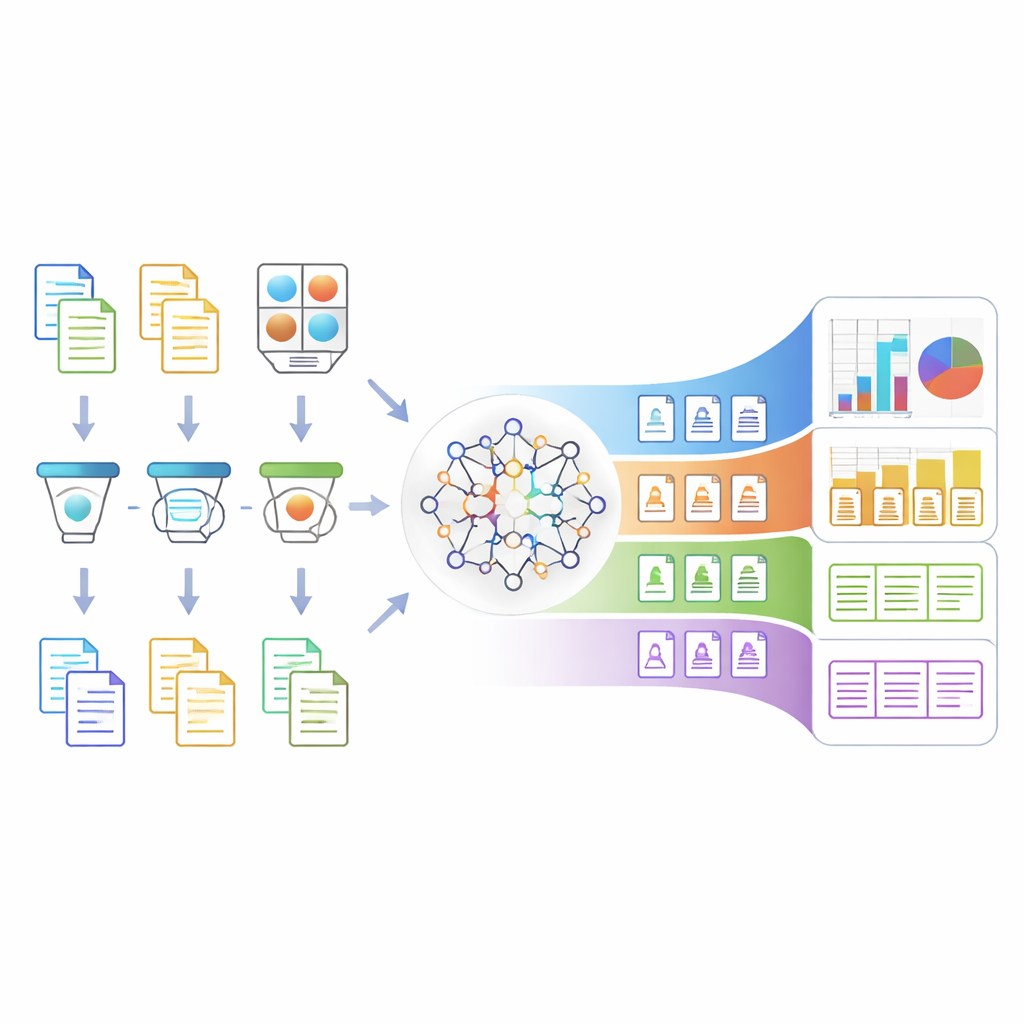

When something unusual happens during radiation treatment, such as a confusing instruction, a delay, or a near miss, clinicians file a narrative report in their own words. Traditionally, quality and safety teams read each story, decide what went wrong, and map it onto a human factors framework called the Human Factors Analysis and Classification System (HFACS). HFACS traces contributing causes from front‑line slips to deeper organizational issues. This approach is powerful but time‑consuming, especially in busy cancer centers that log thousands of incidents. Most of these incidents do not harm patients, but they still contain valuable lessons. The new Artificial Intelligence–based Incident Analysis and Learning System (AI‑ILS) aims to support these teams by automating much of the classification work while preserving expert oversight.

Cleaning and Clarifying the Language of Incidents

Before an algorithm can interpret an incident story, the text must be both safe to share and clear in meaning. AI‑ILS first strips out personal details, such as names, dates, and record numbers, using specialized language models trained to recognize sensitive information in medical text. It then expands medical shorthand and local jargon into plain phrases, so that acronyms like "DIBH" (deep inspiration breath hold) and job‑specific abbreviations are interpreted correctly. This two‑step preparation de‑identifies the reports and makes them easier for the core model to understand. It also preserves the original structure and intent of the narrative, which is crucial for accurately judging what went wrong.
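The two preparation steps can be illustrated with a deliberately simplified sketch. AI‑ILS uses specialized language models for de‑identification. The regular expressions below are a swapped‑in stand‑in that only catches well‑formatted dates and record numbers, not names. The acronym table, the placeholder tags, and the functions `deidentify`, `expand_acronyms`, and `preprocess` are all hypothetical. Only the DIBH entry comes from the article's own example.

```python
import re

# Hypothetical acronym glossary; a real deployment would use a curated,
# site-specific list. Only DIBH is taken from the article.
ACRONYMS = {
    "DIBH": "deep inspiration breath hold",
    "MRN": "medical record number",
    "CT": "computed tomography",
}

# Simplified regex stand-in for model-based de-identification:
# catches only well-formatted dates and record numbers, never names.
DATE_RE = re.compile(r"\b\d{1,2}/\d{1,2}/\d{2,4}\b")
MRN_RE = re.compile(r"\bMRN[:#]?\s*\d{6,10}\b")
ACRONYM_RE = re.compile(r"\b(" + "|".join(map(re.escape, ACRONYMS)) + r")\b")


def deidentify(text: str) -> str:
    """Replace record numbers and dates with placeholder tags."""
    text = MRN_RE.sub("[RECORD]", text)
    return DATE_RE.sub("[DATE]", text)


def expand_acronyms(text: str) -> str:
    """Append the expansion after each known acronym, keeping the original token."""
    return ACRONYM_RE.sub(lambda m: f"{m.group(1)} ({ACRONYMS[m.group(1)]})", text)


def preprocess(report: str) -> str:
    # De-identify first so the expansion step never sees raw identifiers.
    return expand_acronyms(deidentify(report))
```

For example, `preprocess("Patient MRN 1234567 treated 3/14/2025 without DIBH coaching.")` returns `"Patient [RECORD] treated [DATE] without DIBH (deep inspiration breath hold) coaching."`. The sketch appends each expansion instead of replacing the acronym. This keeps the reporter's original wording intact, in the spirit of preserving the narrative's structure.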

Teaching an AI to See Deeper Causes

At the heart of the system is a large language model fine‑tuned on 1,548 carefully crafted mock incident reports. A multidisciplinary team of physicists, nurses, therapists, dosimetrists, and physicians wrote these examples to cover a wide range of problems across the four HFACS tiers: unsafe acts, preconditions (such as staffing or environment), supervision, and broader organizational influences. The model was trained in four parallel branches, one for each tier. An added "none of the above" option avoids forcing every story into an ill‑fitting category. Fine‑tuning improved performance dramatically. On standard accuracy and correlation metrics, the model went from labeling at roughly chance level to strong agreement with expert judgment.
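The per‑tier setup with a "none of the above" escape hatch can be sketched as a small data structure plus a dispatch loop. This is a minimal sketch under stated assumptions. The category names are the classic HFACS categories used as placeholders, because the article does not list AI‑ILS's exact label set. `classify_tier` stands in for the fine‑tuned model. `toy_classifier` and its keyword table are a hypothetical substitute, included only so the example runs. The tiers are processed in a loop for simplicity; because each branch is independent, they could equally run in parallel.

```python
from typing import Callable, Dict, List

NONE_LABEL = "None of the above"

# Placeholder labels: classic HFACS categories, not necessarily AI-ILS's exact set.
HFACS_TIERS: Dict[str, List[str]] = {
    "Unsafe acts": ["Skill-based error", "Decision error", "Perceptual error", "Violation"],
    "Preconditions": ["Environmental factors", "Condition of operators", "Personnel factors"],
    "Supervision": [
        "Inadequate supervision",
        "Planned inappropriate operations",
        "Failed to correct problem",
        "Supervisory violation",
    ],
    "Organizational influences": [
        "Resource management",
        "Organizational climate",
        "Organizational process",
    ],
}

# A tier classifier takes (report text, allowed labels) and returns one label.
TierClassifier = Callable[[str, List[str]], str]


def classify_report(report: str, classify_tier: TierClassifier) -> Dict[str, str]:
    """Classify one report independently for each HFACS tier."""
    results: Dict[str, str] = {}
    for tier, labels in HFACS_TIERS.items():
        allowed = labels + [NONE_LABEL]  # every branch may decline to assign a category
        label = classify_tier(report, allowed)
        # Off-list outputs fall back to the explicit "none" option.
        results[tier] = label if label in allowed else NONE_LABEL
    return results


# Hypothetical keyword table standing in for the fine-tuned model (demo only).
TOY_KEYWORDS = {
    "skipped": "Violation",
    "fatigue": "Condition of operators",
    "understaffed": "Resource management",
}


def toy_classifier(report: str, allowed: List[str]) -> str:
    """Return the first keyword-matched label that this tier allows, else decline."""
    text = report.lower()
    for keyword, label in TOY_KEYWORDS.items():
        if keyword in text and label in allowed:
            return label
    return NONE_LABEL
```

Consider the report "Therapist skipped the imaging check while the unit was understaffed.". For it, `classify_report(report, toy_classifier)` assigns "Violation" to unsafe acts and "Resource management" to organizational influences. The other two tiers get "None of the above". Rejecting any label outside the allowed list is one simple way to keep a generative model from inventing categories.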

Putting the System to the Test in Real Clinics

The researchers then applied AI‑ILS to 350 real incident reports from a large cancer center’s internal safety database. Comparing its classifications with those of experienced medical physicists, they found about 79% strict agreement and nearly 88% agreement when borderline cases were counted as "possibly acceptable". The system operated far faster than humans: it could fully process and classify an incident in about five seconds, whereas reviewers needed around two and a half minutes per case. A simple chat‑style interface lets clinicians see how the AI interpreted a report, ask follow‑up questions, and better understand why a particular cause was suggested, turning the tool into a partner rather than a black box.
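The gap between the 79% and 88% figures comes entirely from how borderline cases are counted. The sketch below assumes that each AI classification receives one of three review verdicts. The verdict labels and the function names `agreement_rates` and `review_hours` are hypothetical. The ten‑item verdict list in the usage note is toy data, not the study's results.

```python
from collections import Counter
from typing import Iterable, Tuple

# Hypothetical verdicts a reviewer might record for each AI classification.
AGREE, BORDERLINE, DISAGREE = "agree", "possibly acceptable", "disagree"


def agreement_rates(verdicts: Iterable[str]) -> Tuple[float, float]:
    """Return (strict, lenient) agreement as fractions of all reviewed reports."""
    counts = Counter(verdicts)
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no verdicts to score")
    strict = counts[AGREE] / total  # only clear agreements count
    lenient = (counts[AGREE] + counts[BORDERLINE]) / total  # borderline cases also count
    return strict, lenient


def review_hours(n_reports: int, seconds_per_report: float) -> float:
    """Total review time in hours for a batch of reports."""
    return n_reports * seconds_per_report / 3600
```

With eight agreements, one borderline case, and one disagreement, `agreement_rates` returns `(0.8, 0.9)`. Consider the article's rounded per‑case times for 350 reports. For manual review at 150 seconds each, `review_hours` gives about 14.6 hours. For the AI at 5 seconds each, it gives about 0.49 hours, roughly 29 minutes. That is a speedup of about 30 times.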

What This Means for Safer Care

This study suggests that a carefully tailored AI system can reliably support experts in making sense of large volumes of safety reports, freeing up time for deeper investigation and action. Rather than replacing human judgment, AI‑ILS standardizes how incidents are categorized across front‑line errors, working conditions, supervision, and organizational practices, so that recurring patterns become easier to spot. Broader adoption and larger, multi‑center datasets could help similar tools move hospitals from reactive fixes after something goes wrong to proactive, data‑driven improvements in how care is delivered. Such tools could quietly reduce preventable harm and make complex treatments like radiation therapy safer for patients everywhere.

Citation: Jinia, A.J., Chapman, K., Liu, S. et al. Artificial intelligence-based incident analysis and learning system to enhance patient safety and improve treatment quality. npj Digit. Med. 9, 254 (2026). https://doi.org/10.1038/s41746-026-02390-2

Keywords: patient safety, radiation therapy, incident reporting, artificial intelligence, human factors