Clear Sky Science · en

DC bias optimization in intelligent DCO-OFDM Li-Fi systems using hybrid machine learning with hardware validation

Light-Based Internet for Everyday Spaces

Imagine your room lights not only brightening the space but also streaming movies to your laptop and phone at very high speeds. That is the promise of Li-Fi, a technology that uses light instead of radio waves for wireless data. This paper addresses a subtle but crucial tuning parameter in Li-Fi transmitters: how much steady background light, or DC bias, should be added to the data signal. The authors use machine learning methods and real hardware tests to choose that bias, with the aim of making Li-Fi faster, more efficient, and more reliable.

Why Light Can Beat Wi-Fi

Conventional Wi-Fi relies on radio waves that pass through walls, share crowded frequency bands, and can be prone to interference and security issues. Li-Fi, by contrast, uses infrared for sending data back from devices and visible light for sending data down from ceiling lamps. Because light does not penetrate walls, a Li-Fi link can be naturally confined to a single room, which reduces interference and improves privacy. It also taps into a huge, unlicensed slice of the spectrum, enabling very high data rates. This makes Li-Fi an attractive ingredient for future 6G networks, hospitals where radio emissions are restricted, and any setting that needs both illumination and high-speed connectivity.

The Hidden Role of a Steady Glow

Under the hood, many Li-Fi systems use a technique called DCO-OFDM to pack lots of data onto an LED’s light output. The raw electrical signal produced by this method swings both above and below zero, but LEDs can only shine with nonnegative light intensity. Engineers therefore add a constant offset, the DC bias, to shift the whole waveform upward and then clip off any remaining negative portions. If the bias is too small, the negative parts are heavily clipped, introducing noise and errors. If it is too large, the LED wastes power on useless brightness instead of data. The sweet spot depends on several factors, including how the signal is encoded and how noisy the link is, and conventional optimization methods can be slow or difficult to adapt to changing conditions.
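To make the bias-and-clip step concrete, here is a minimal Python sketch using only the standard library. It is an illustration under assumed settings, not the authors' simulation: the 64 subcarriers, the 4-QAM symbols, and the function names `dco_ofdm_frame` and `bias_and_clip` are all hypothetical choices made for this example.

```python
import cmath
import math
import random


def dco_ofdm_frame(n_sub=64, rng=None):
    """Build one real-valued OFDM frame (assumed: 4-QAM, 64 subcarriers)."""
    rng = rng or random.Random(0)
    spectrum = [0j] * n_sub  # bins 0 and n_sub/2 stay empty
    for k in range(1, n_sub // 2):
        sym = complex(rng.choice((-1, 1)), rng.choice((-1, 1))) / math.sqrt(2)
        spectrum[k] = sym
        spectrum[n_sub - k] = sym.conjugate()  # Hermitian symmetry gives a real signal
    frame = []
    for n in range(n_sub):
        # Naive inverse DFT; slow in general but fine for a small frame.
        acc = sum(spectrum[k] * cmath.exp(2j * math.pi * k * n / n_sub)
                  for k in range(n_sub))
        frame.append((acc / math.sqrt(n_sub)).real)
    return frame


def bias_and_clip(frame, bias):
    """Add a DC bias, clip negatives to zero, and report the clipped fraction."""
    shifted = [s + bias for s in frame]
    clipped = [max(0.0, s) for s in shifted]
    frac_clipped = sum(s < 0 for s in shifted) / len(shifted)
    return clipped, frac_clipped


if __name__ == "__main__":
    frame = dco_ofdm_frame()
    sigma = math.sqrt(sum(s * s for s in frame) / len(frame))
    for mult in (0.0, 1.0, 2.0, 3.0):
        clipped, frac = bias_and_clip(frame, mult * sigma)
        mean_level = sum(clipped) / len(clipped)
        print(f"bias={mult:.0f}*sigma  clipped={frac:.1%}  mean level={mean_level:.2f}")
```

Running the loop shows the trade-off described above: a larger bias clips fewer samples but raises the average drive level, which is the extra brightness that carries no data.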

Teaching the System to Tune Itself

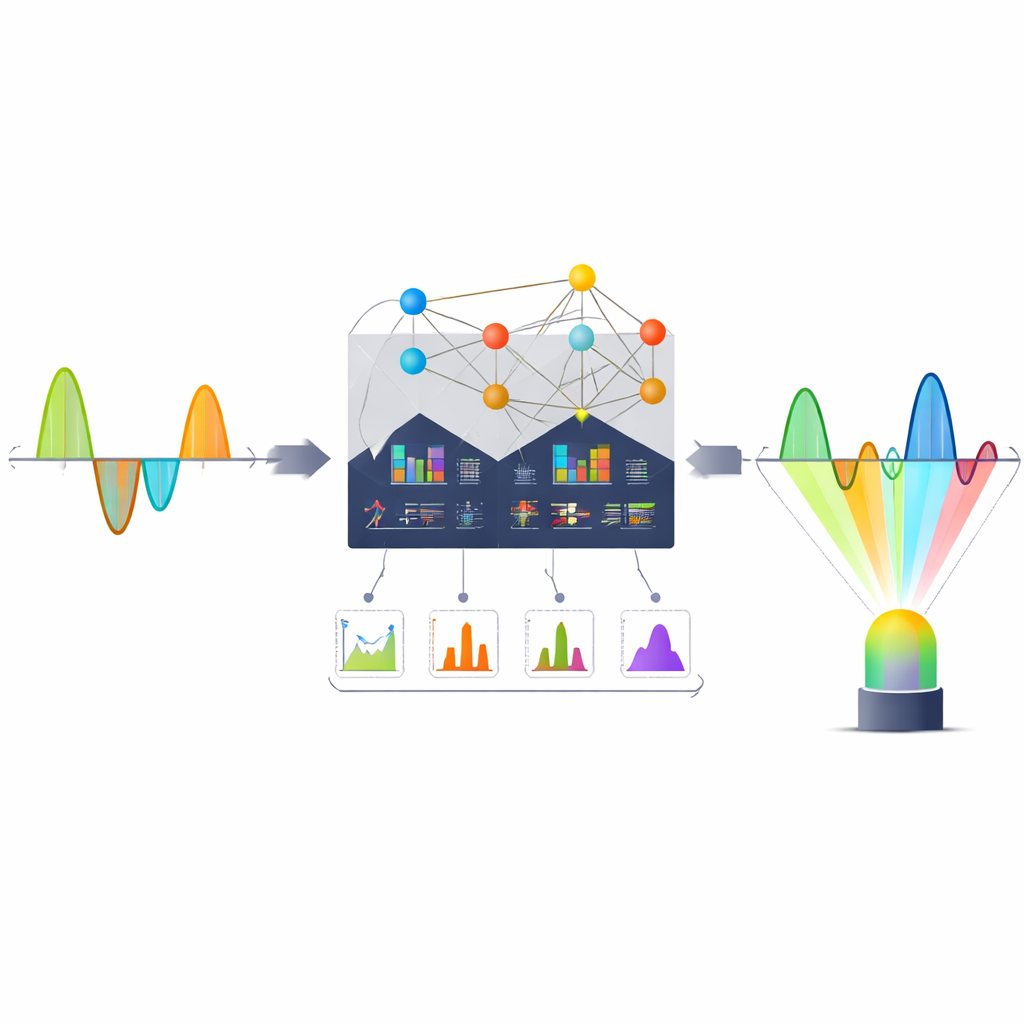

To let the Li-Fi transmitter “learn” its own best bias level, the authors built a dataset using detailed computer simulations of DCO-OFDM signals. For each generated signal, they calculated simple numerical features such as the minimum and maximum values of the waveform, its average level, its variability, and the error rate after transmission. These summaries, along with system settings like the constellation size and number of subcarriers, formed the input to machine learning models trained to predict the ideal DC bias that balances errors and power use. The team explored several hybrid approaches that blend classic regression, which fits smooth curves, with K-Nearest Neighbors (KNN), which looks at similar past examples. They compared different combinations and measured how well each model's predicted bias values agreed with the true optimum, using standard accuracy scores.
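The feature extraction and one possible hybrid predictor can be sketched in plain Python. The summary does not say how the regression and KNN parts are combined, so the blend below (a quadratic trend plus a KNN correction on its residuals) is an assumption, as are the function names, the single input feature, and the made-up training numbers, which are placeholders and not data from the paper.

```python
import statistics


def waveform_features(frame):
    """Summary statistics of one time-domain frame (a list of floats)."""
    return {
        "min": min(frame),
        "max": max(frame),
        "mean": statistics.fmean(frame),
        "std": statistics.pstdev(frame),
    }


def fit_quadratic(xs, ys):
    """Least-squares fit y ~ w0 + w1*x + w2*x^2 via the normal equations."""
    s = [sum(x ** p for x in xs) for p in range(5)]
    t = [sum(y * x ** p for x, y in zip(xs, ys)) for p in range(3)]
    m = [[s[i + j] for j in range(3)] + [t[i]] for i in range(3)]
    # Gauss-Jordan elimination with partial pivoting on the 3x3 system.
    for col in range(3):
        piv = max(range(col, 3), key=lambda r: abs(m[r][col]))
        m[col], m[piv] = m[piv], m[col]
        for r in range(3):
            if r != col:
                f = m[r][col] / m[col][col]
                m[r] = [a - f * b for a, b in zip(m[r], m[col])]
    return [m[i][3] / m[i][i] for i in range(3)]


def poly_predict(w, x):
    return w[0] + w[1] * x + w[2] * x * x


def knn_predict(xs, ys, x, k=3):
    """Average target of the k training points nearest to x."""
    nearest = sorted(zip(xs, ys), key=lambda p: abs(p[0] - x))[:k]
    return sum(y for _, y in nearest) / len(nearest)


def hybrid_predict(xs, ys, w, x, k=3):
    """Assumed blend: polynomial trend plus KNN average of nearby residuals."""
    residuals = [y - poly_predict(w, xi) for xi, y in zip(xs, ys)]
    return poly_predict(w, x) + knn_predict(xs, residuals, x, k)


if __name__ == "__main__":
    # Placeholder training pairs (feature value, best bias); NOT from the paper.
    xs = [0.5, 1.0, 1.5, 2.0, 2.5, 3.0]
    ys = [1 + 2 * x + 0.5 * x * x for x in xs]
    w = fit_quadratic(xs, ys)
    print(waveform_features([-1.0, 0.0, 1.0, 2.0]))
    print(hybrid_predict(xs, ys, w, 1.2))
```

A real model would take all the features listed above, including the error rate, constellation size, and number of subcarriers, rather than one input. The single-feature version is kept only to show how a smooth fit and a nearest-neighbor lookup can complement each other.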

From Computer Models to Real Hardware

Beyond simulations, the researchers built a small laboratory Li-Fi link using a high-brightness LED as the transmitter, a photodiode as the receiver, and an Arduino-based circuit to capture real signals over a short indoor distance. The same kinds of signal features were extracted from this hardware setup, creating a second dataset that reflects real noise, imperfections, and environmental effects. The machine learning models trained on simulated data were then asked, without any retraining, to predict the best DC bias for these real measurements. This step is critical, because many earlier studies stopped at computer experiments and never showed that their methods survive the messiness of the physical world.

Smarter Biasing for Future Li-Fi Rooms

The standout result is that a hybrid model combining polynomial regression with KNN produced the most accurate and robust predictions. It achieved high agreement with the true optimum in simulations and maintained strong performance when applied directly to the Arduino-based Li-Fi link. In practical terms, this means a Li-Fi system equipped with such a model could automatically dial in the right bias level as conditions change, minimizing wasted light and signal distortion without constant manual tuning. While the current hardware tests cover only short distances and simplified indoor scenarios, the approach points toward intelligent, self-optimizing Li-Fi networks where ceiling lights quietly adjust themselves to deliver secure, high-speed data alongside everyday illumination.

Citation: Abdelhakim, E., Ragab, D.A., Abaza, M. et al. DC bias optimization in intelligent DCO-OFDM Li-Fi systems using hybrid machine learning with hardware validation. Sci Rep 16, 14658 (2026). https://doi.org/10.1038/s41598-026-49732-4

Keywords: Li-Fi, visible light communication, machine learning, OFDM, wireless networks