Clear Sky Science

TRACER: a reliability-first GemNet baseline for trustworthy computational materials discovery

Why smarter materials searches matter

New batteries, solar cells, and electronic devices all depend on finding materials with just the right atomic recipe. Computers can help explore this vast “materials genome,” but the most reliable physics calculations are so expensive that they cannot be run on billions of candidates. This study introduces TRACER, a way to use modern machine learning to speed up the search while keeping a tight grip on how trustworthy each prediction is, so scientists know when to rely on the model and when to double-check with heavier calculations.

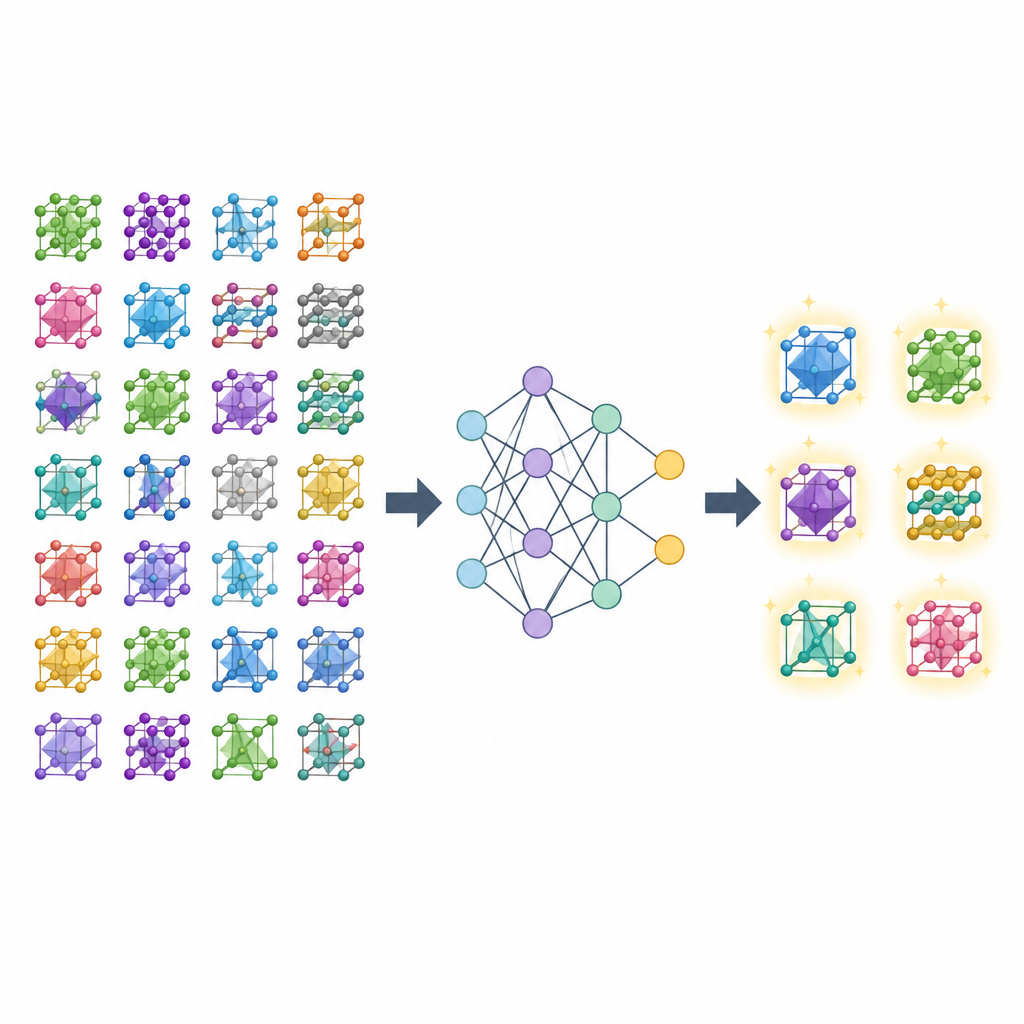

Teaching a model to read atomic blueprints

At the heart of this work is a type of neural network that treats a crystal as a graph: atoms are nodes, and the edges between them record which atoms are near each other in three-dimensional space. The authors build on a design called GemNet, which passes messages along these edges to learn how atomic arrangement controls energy, a basic quantity that indicates whether a material is stable. Trained on tens of thousands of crystal structures from a public database, their model predicts the energy per atom with high accuracy, coming closer to gold-standard physics calculations than a widely used earlier network called ALIGNN, while also training several times faster.

From average accuracy to dependable decisions

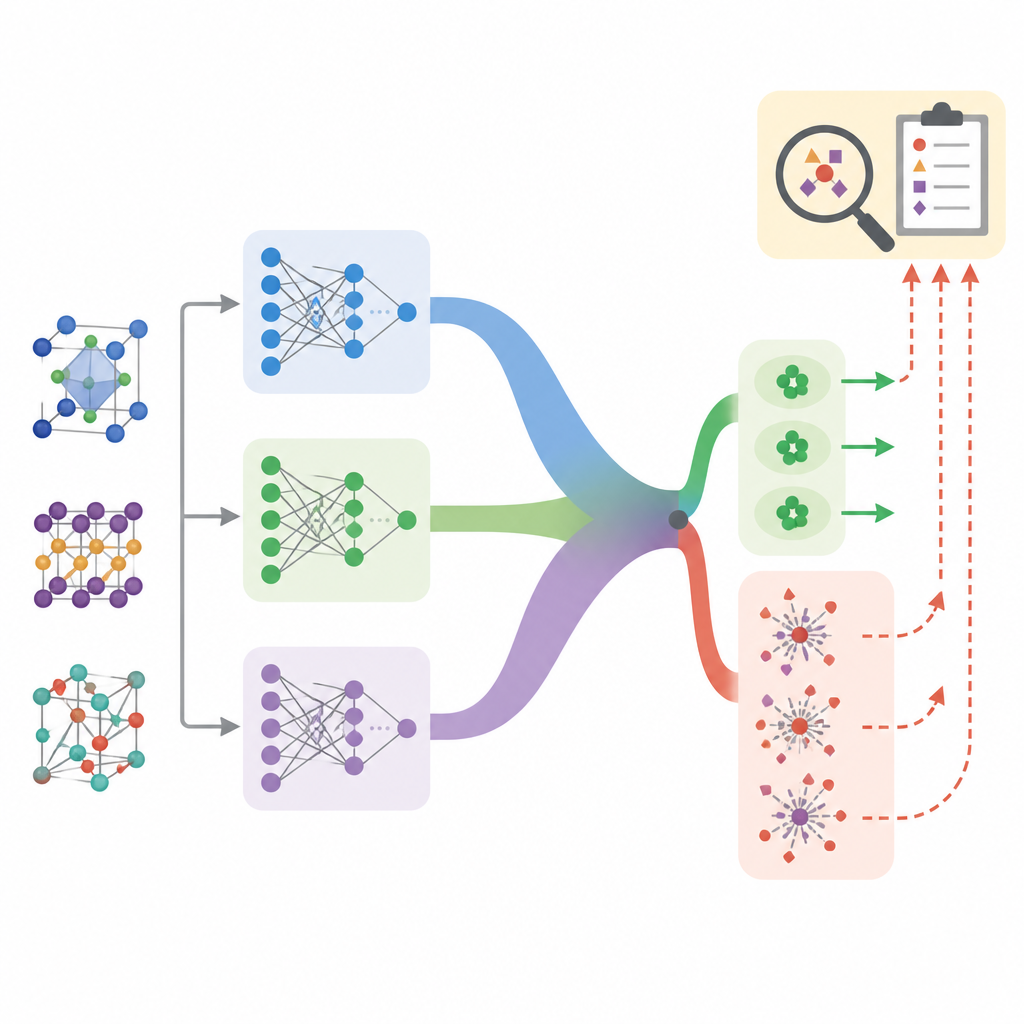

In real screening campaigns, the challenge is not just how small the average error is, but how often the model silently fails on unusual materials. TRACER shifts the focus from raw accuracy to reliability. The authors run several copies of the same GemNet model, each started with different random settings, and treat the spread in their answers as a measure of uncertainty. When all copies agree, confidence is high; when they disagree, the prediction is flagged as risky. This simple ensemble strategy turns uncertainty into a usable signal that correlates strongly with actual errors, helping to rank which candidates are safe to trust and which deserve extra scrutiny.
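The ensemble idea described above can be sketched in a few lines. This is a minimal illustration, not the authors' code: the function names, the use of the standard deviation as the spread, and the synthetic predictions are all assumptions made for the example. It computes the ensemble mean and spread for each material, then checks how well the spread ranks the actual errors.

```python
import numpy as np


def ensemble_predict(member_predictions):
    """Return the ensemble mean and spread for each sample.

    member_predictions has shape (n_members, n_samples).
    """
    preds = np.asarray(member_predictions, dtype=float)
    mean = preds.mean(axis=0)
    # Sample standard deviation across members is the uncertainty proxy.
    spread = preds.std(axis=0, ddof=1)
    return mean, spread


def spearman_rank_correlation(a, b):
    """Rank correlation; assumes no tied values (true for continuous data)."""
    rank_a = np.argsort(np.argsort(a)).astype(float)
    rank_b = np.argsort(np.argsort(b)).astype(float)
    return float(np.corrcoef(rank_a, rank_b)[0, 1])


# Synthetic stand-in for real model outputs (assumption: not data from the study).
rng = np.random.default_rng(0)
n_members, n_samples = 5, 200
true_energy = rng.normal(size=n_samples)
noise_scale = rng.uniform(0.01, 0.5, size=n_samples)  # some samples are harder
member_predictions = true_energy + noise_scale * rng.normal(size=(n_members, n_samples))

mean, spread = ensemble_predict(member_predictions)
abs_error = np.abs(mean - true_energy)
rho = spearman_rank_correlation(spread, abs_error)
print(f"Spearman correlation between spread and |error|: {rho:.2f}")
```

In this toy setup the harder samples receive noisier member predictions, so the spread and the absolute error rise together. With real models, the members would be separately trained networks rather than noisy copies of the truth.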

Finding the trouble spots

Using this uncertainty signal, the team examines where the model struggles. Errors tend to be larger for crystals with very few or very many atoms, extremely high or low energies, or exotic chemical makeups such as oxygen clusters, metal dimers, and large carbon cages. Crucially, these same cases also show high disagreement among the ensemble members, so they are naturally flagged for review. When the authors simulate a realistic situation in which only a fraction of predictions can be checked with expensive calculations, prioritizing by model uncertainty consistently finds more of these hard cases than random choice. In other words, the system not only knows many answers, it often knows when it does not know.
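The budgeted review scenario can be illustrated with a short sketch. The function names, the toy uncertainty values, and the definition of a "hard" case are hypothetical and chosen only for this example; they are not taken from the study. The code picks the most uncertain predictions up to a fixed budget and measures what fraction of the hard cases that selection captures.

```python
import numpy as np


def select_for_review(uncertainty, budget_fraction):
    """Return indices of the most uncertain predictions within the budget."""
    uncertainty = np.asarray(uncertainty, dtype=float)
    k = int(round(budget_fraction * len(uncertainty)))
    # Sort by descending uncertainty and keep the top k.
    order = np.argsort(-uncertainty)
    return order[:k]


def hard_case_recall(selected, is_hard):
    """Fraction of all hard cases that fall inside the selected set."""
    is_hard = np.asarray(is_hard, dtype=bool)
    total = is_hard.sum()
    if total == 0:
        return 0.0
    return float(is_hard[selected].sum() / total)


# Toy values (assumption: illustrative only).
uncertainty = [0.9, 0.1, 0.8, 0.2, 0.05, 0.3, 0.7, 0.15, 0.25, 0.12]
is_hard = [True, False, True, False, False, False, False, False, True, False]

budget = 0.3
selected = select_for_review(uncertainty, budget)
recall = hard_case_recall(selected, is_hard)
print("Selected for review:", sorted(selected.tolist()))
print(f"Recall with uncertainty ranking: {recall:.2f}")
print(f"Expected recall with random choice: {budget:.2f}")
```

A random selection of the same size is expected to capture a share of hard cases equal to the budget fraction, which gives a simple baseline for judging the uncertainty ranking.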

Learning what does not help

The study also reports “negative results,” which are rarely highlighted but important for guiding future work. The authors test a strategy that adds simple chemistry flags, such as whether a material contains a transition metal, on top of the uncertainty signal. This more complicated rule actually performs worse: it often treats common but easy materials as risky, diluting the focus on truly problematic cases. They also experiment with an extra module meant to adapt to different data domains and find that it adds computing cost without meaningful gain for this particular single-database task.
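A toy calculation shows one way a broad chemistry flag can dilute the review list. The article does not specify how the flags were combined with uncertainty, so the logical OR used here is an assumption, and the data are invented for illustration.

```python
def flag_precision(flags, is_hard):
    """Fraction of flagged materials that are actually hard cases."""
    flagged = [hard for flag, hard in zip(flags, is_hard) if flag]
    return sum(flagged) / len(flagged) if flagged else 0.0


# Invented toy data: eight materials, two of which are truly hard.
high_uncertainty = [True, True, False, False, False, False, False, False]
has_transition_metal = [True, False, True, True, True, True, False, False]
is_hard = [True, True, False, False, False, False, False, False]

# Assumed combination rule: flag if uncertain OR contains a transition metal.
combined = [u or t for u, t in zip(high_uncertainty, has_transition_metal)]

precision_uncertainty = flag_precision(high_uncertainty, is_hard)
precision_combined = flag_precision(combined, is_hard)
print(f"Uncertainty only: {precision_uncertainty:.2f}")
print(f"Uncertainty OR chemistry flag: {precision_combined:.2f}")
```

Because transition metals are common, the combined rule flags many easy materials here, and the share of flagged items that genuinely need review drops.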

Extending to new material families

To see whether the learned representation is reusable, the team applies their model to a separate collection of perovskite materials, a class important for solar cells. Without any adjustment, predictions are poor, showing that this new set differs from the original training data. After a short fine-tuning step on the new examples, however, the same GemNet backbone quickly reaches strong accuracy. This suggests that the network has captured general patterns of bonding and structure that can be adapted to new chemistries with modest extra training.

What this means for future materials discovery

Put together, TRACER is less a brand-new model and more a full recipe for honest, reusable materials screening. It pairs an efficient, accurate predictor with a tested way to gauge confidence, a clear record of where it fails, and a checklist that others can reproduce. For researchers, this means they can use machine learning to scan large materials spaces while reserving expensive physics calculations for the few cases that look both interesting and uncertain. That mix of speed with self-awareness is a key step toward trustworthy computational discovery of the materials that will power future technologies.

Citation: Datta, G., Sharif, S. & Banad, Y. TRACER: a reliability-first GemNet baseline for trustworthy computational materials discovery. Sci Rep 16, 14962 (2026). https://doi.org/10.1038/s41598-026-45279-6

Keywords: computational materials discovery, graph neural networks, uncertainty quantification, formation energy prediction, materials screening