
Conformable Fractional Deep Neural Networks (CFDNN) for high-speed cyber-attack detection

Smarter Shields for a Connected World

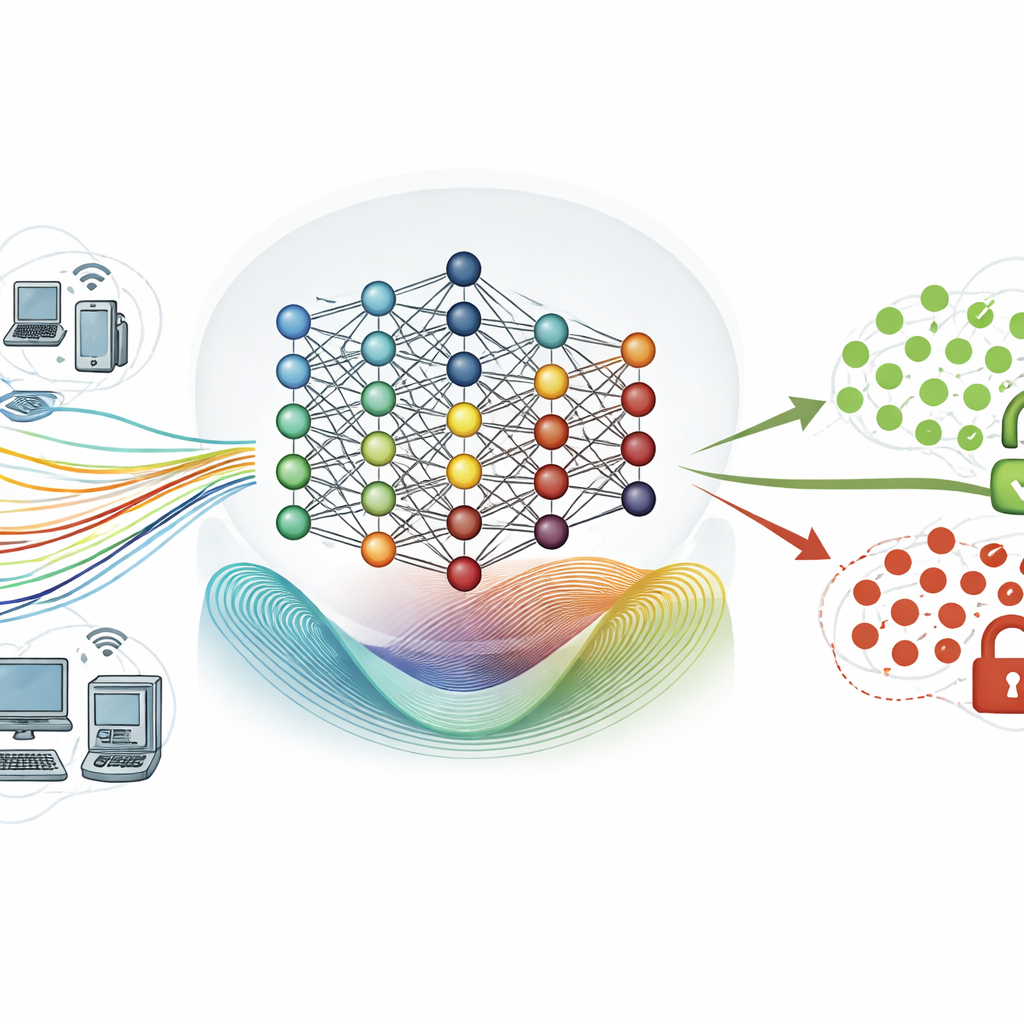

Every day, our homes, offices, and cities depend on networks that quietly shuttle billions of digital messages. Hidden in that stream are cyber-attacks trying to steal data or knock systems offline. Security tools must spot these threats quickly and accurately, without needing huge computers. This paper presents a new kind of artificial intelligence model, the Conformable Fractional Deep Neural Network (CFDNN), designed to detect cyber-attacks faster and with less computing power than many current approaches.

Why Traditional Defenses Struggle

Conventional intrusion detection systems often rely on fixed rules or known attack signatures. They work well for threats we have seen before but falter when attackers change tactics or launch “zero‑day” attacks. Deep neural networks improved this picture by learning subtle patterns in network traffic, yet they come with their own problems: they can be slow to train, need large labeled datasets, and are difficult to deploy in real time on ordinary hardware. They may also miss complex, long‑running attack campaigns that unfold over many steps, because the standard learning rule adjusts the network using only the current training step and carries nothing over from earlier ones.

A New Way to Teach Neural Networks

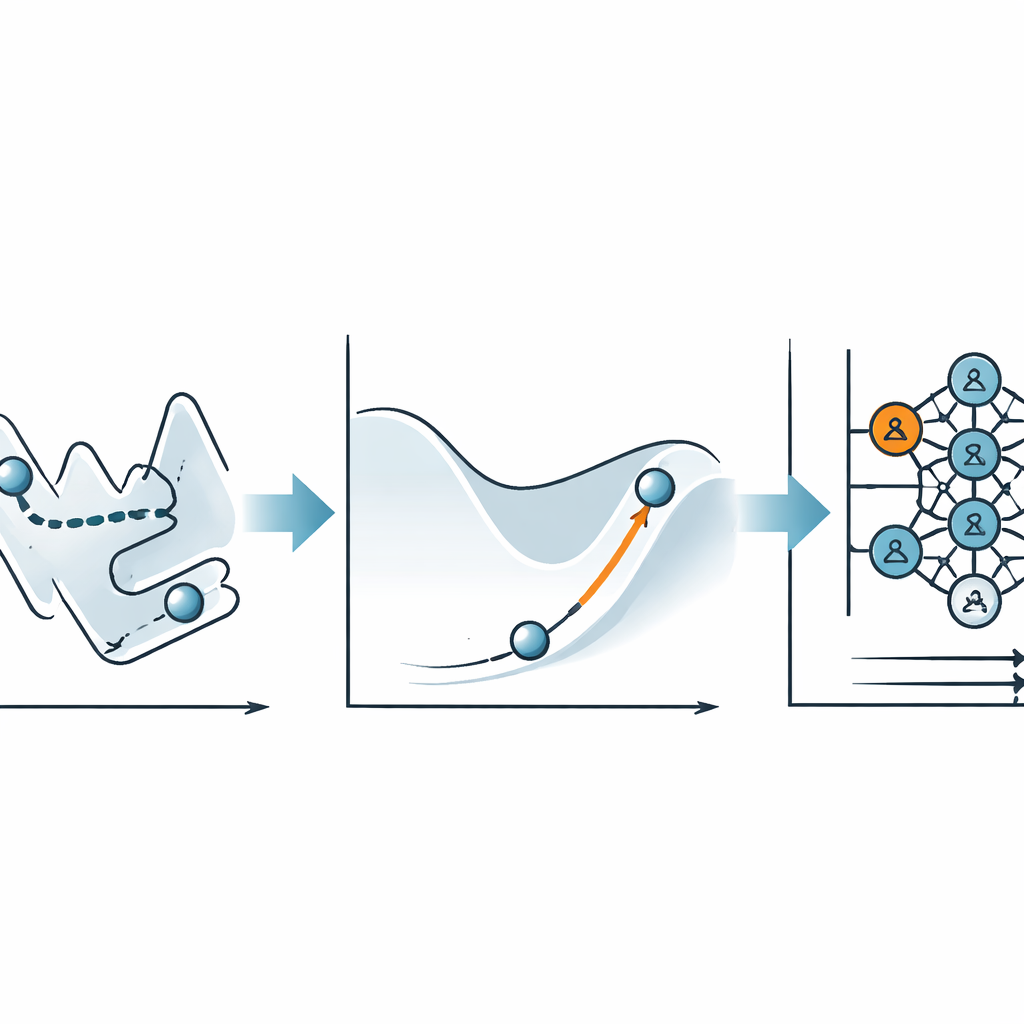

The key innovation in this work is not a bigger or deeper network, but a new way of updating its internal parameters while it learns. Instead of the standard gradient‑descent update that usually accompanies backpropagation, the authors use a technique called conformable fractional gradient descent. In simple terms, this method gives the learning process a kind of adjustable “memory” of past steps. By choosing a fractional setting between 1.2 and 1.8, the algorithm gently reshapes the learning landscape so that the network can slide more smoothly toward a good solution, rather than getting stuck or wandering slowly over rough terrain.
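For readers who want to see what a fractional setting could do to a single learning step, here is a minimal sketch. This summary does not give the paper's update formula, so the code makes a loud assumption: it borrows the scaling factor `t**(1 - alpha)` that appears in the conformable derivative of a differentiable function for orders between 0 and 1, applies it to the weight magnitude, and extends it to orders above 1 purely for illustration. The function name `conformable_step`, the safeguard `eps`, the learning rate, and the toy loss are all hypothetical.

```python
import numpy as np

def conformable_step(w, grad, lr=0.1, alpha=1.8, eps=1e-3):
    """One illustrative update: rescale the ordinary gradient by |w|**(1 - alpha).

    Assumption: this rescaling is a stand-in inspired by the conformable
    derivative; the authors' actual rule may differ.
    """
    # eps keeps the factor finite when a weight is close to zero
    scale = (np.abs(w) + eps) ** (1.0 - alpha)
    return w - lr * scale * grad

# Toy problem: minimize L(w) = 0.5 * ||w - target||^2, whose gradient is w - target
target = np.array([2.0, -1.0])
w = np.array([0.5, -0.5])
for _ in range(200):
    grad = w - target
    w = conformable_step(w, grad)
```

Setting `alpha=1.0` makes the factor equal to 1 and recovers plain gradient descent. With an order above 1 the factor grows as a weight approaches zero, which is why the sketch needs `eps`, and it is one way to see why the fractional setting has to be chosen with care.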

Putting the Idea to the Test

To find out whether this fractional learning actually helps, the researchers trained their CFDNN on two widely used intrusion detection datasets: the older NSL‑KDD collection and the more modern, much larger CIC‑IDS2018 set, which contains over 1.6 million examples of normal and malicious traffic. They kept the network’s structure deliberately simple—a stack of four hidden layers whose size shrinks toward a single output neuron—so that any performance gains could be credited mainly to the new training rule. By sweeping across different fractional “orders” (from 0.5 up to 1.8), they measured accuracy, error rates, and the time needed to train on an ordinary laptop‑class CPU.
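The network shape described above can be sketched in a few lines. The summary gives only the outline (four hidden layers that shrink toward one output neuron), so the layer widths `(64, 32, 16, 8)`, the ReLU and sigmoid activations, the weight initialization, and the 41-column input are assumptions chosen for illustration, not the authors' configuration.

```python
import numpy as np

def init_mlp(n_features, hidden=(64, 32, 16, 8), seed=0):
    """Create weights for four shrinking hidden layers and one output neuron.

    The widths in `hidden` are hypothetical; the source only says they shrink.
    """
    rng = np.random.default_rng(seed)
    sizes = (n_features, *hidden, 1)
    return [
        (rng.normal(0.0, np.sqrt(2.0 / m), size=(m, n)), np.zeros(n))
        for m, n in zip(sizes[:-1], sizes[1:])
    ]

def forward(params, x):
    """Return one attack probability per input row."""
    h = x
    for W, b in params[:-1]:
        h = np.maximum(h @ W + b, 0.0)  # ReLU on each hidden layer (assumed)
    W, b = params[-1]
    return 1.0 / (1.0 + np.exp(-(h @ W + b)))  # sigmoid on the single output

params = init_mlp(n_features=41)  # assumed feature count for the example
x = np.random.default_rng(1).normal(size=(5, 41))  # five made-up traffic records
probs = forward(params, x)
```

Keeping the model this small is the point of the experiment: if the structure stays fixed while only the fractional order changes, differences in accuracy and training time can be attributed mainly to the training rule.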

Faster Learning with Near‑Perfect Detection

The results show a clear pattern: when the fractional setting is below 1, the model trains poorly and misclassifies many connections. But once the setting moves into the higher “super‑integer” range, between 1.2 and 1.8, performance jumps dramatically. At the best value, 1.8, the CFDNN reaches about 99.4–99.9% accuracy on both datasets, matching or beating state‑of‑the‑art deep learning systems. Just as important, it does this with far fewer training cycles—only 30 rounds instead of the 50 or 100 commonly reported—and entirely on a standard CPU. On the large CIC‑IDS2018 dataset, the full training run finishes in roughly 24 minutes, and evaluating the test data afterwards takes only fractions of a millisecond per network packet.
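To make the reported quantities concrete, the sketch below shows how accuracy, error rates, and per-record evaluation time are typically computed for a binary detector. The helper names `detection_metrics` and `seconds_per_sample` and the five example labels are hypothetical and do not come from the paper.

```python
import time
import numpy as np

def detection_metrics(y_true, y_pred):
    """Compute basic rates from labels where 1 means attack and 0 means normal."""
    y_true = np.asarray(y_true, dtype=bool)
    y_pred = np.asarray(y_pred, dtype=bool)
    tp = int(np.sum(y_true & y_pred))    # attacks correctly flagged
    tn = int(np.sum(~y_true & ~y_pred))  # normal traffic correctly passed
    fp = int(np.sum(~y_true & y_pred))   # false alarms
    fn = int(np.sum(y_true & ~y_pred))   # missed attacks
    total = tp + tn + fp + fn
    return {
        "accuracy": (tp + tn) / total,
        "error_rate": (fp + fn) / total,
        "false_alarm_rate": fp / (fp + tn) if (fp + tn) else 0.0,
        "missed_attack_rate": fn / (fn + tp) if (fn + tp) else 0.0,
    }

def seconds_per_sample(predict, x):
    """Time one batch prediction and divide by the number of rows."""
    start = time.perf_counter()
    predict(x)
    return (time.perf_counter() - start) / len(x)

y_true = [0, 1, 1, 0, 1]  # made-up ground truth
y_pred = [0, 1, 0, 0, 1]  # made-up predictions with one missed attack
metrics = detection_metrics(y_true, y_pred)
```

Accuracy alone can hide an imbalance between false alarms and missed attacks, so reporting the two error rates separately gives a fuller picture of a detector.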

What This Means for Everyday Security

For non‑specialists, the takeaway is that the CFDNN offers a more efficient brain for digital gatekeepers. By tweaking the way the network learns, rather than building ever more complex models, the authors achieve a system that spots cyber‑attacks with near‑perfect accuracy while remaining fast and lightweight enough for realistic deployment. Although further testing on newer and more diverse traffic is needed, and the fractional setting itself must be chosen with care, this approach points toward intrusion detection tools that can keep pace with evolving threats without demanding supercomputers—helping secure everything from smart homes to industrial control systems.

Citation: Ajarmah, B., Iwidat, H. Conformable Fractional Deep Neural Networks (CFDNN) for high-speed cyber-attack detection. Sci Rep 16, 10616 (2026). https://doi.org/10.1038/s41598-026-45213-w

Keywords: cyber-attack detection, intrusion detection systems, deep neural networks, fractional calculus, network security