Clear Sky Science

Training the parametric interactions in an analog bosonic quantum neural network with Fock basis measurement

Smart learning with quantum waves

Modern artificial intelligence runs on neural networks made from transistors and digital code. This study explores how a very different kind of hardware, built from tiny vibrating electromagnetic fields obeying the rules of quantum physics, can be trained to recognize patterns in data. The work shows how to design and train such a quantum neural network in a practical way, so it could eventually help process information directly inside future quantum machines.

A new kind of quantum brain

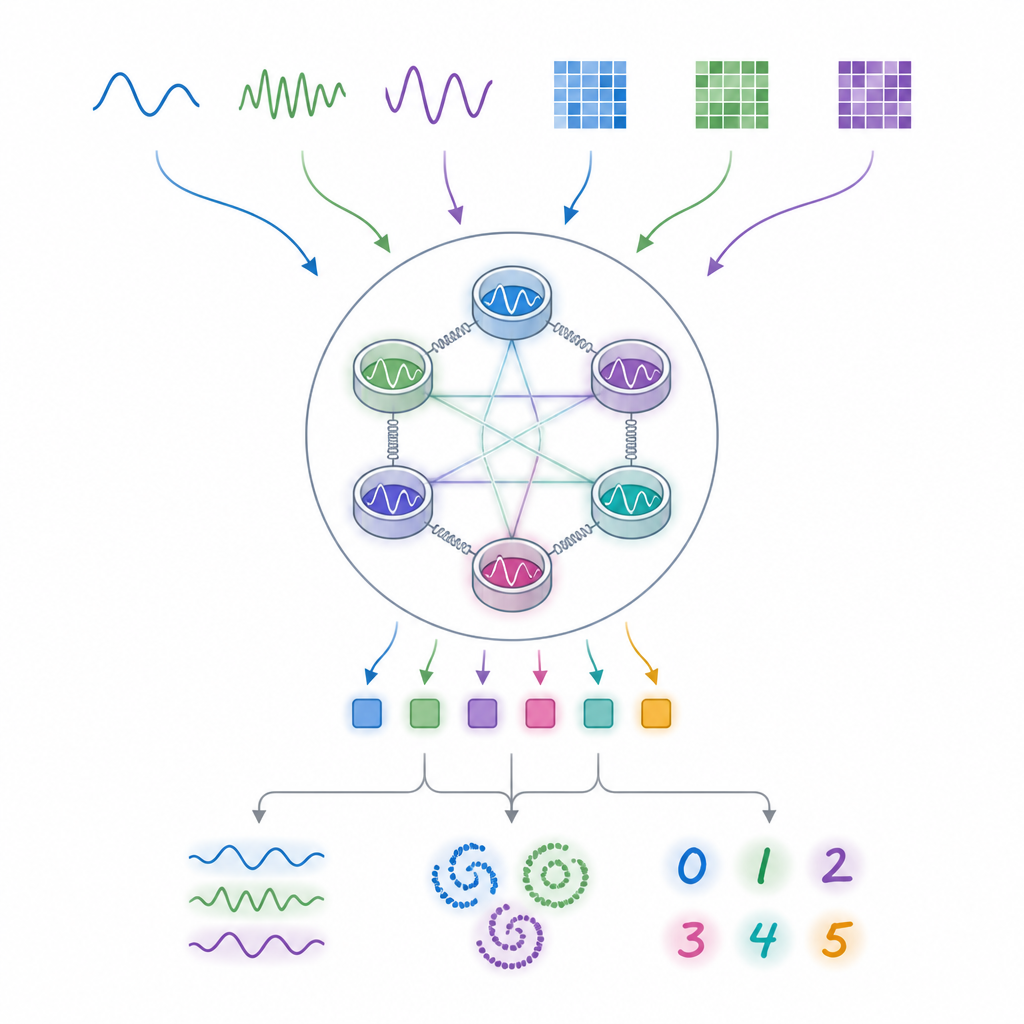

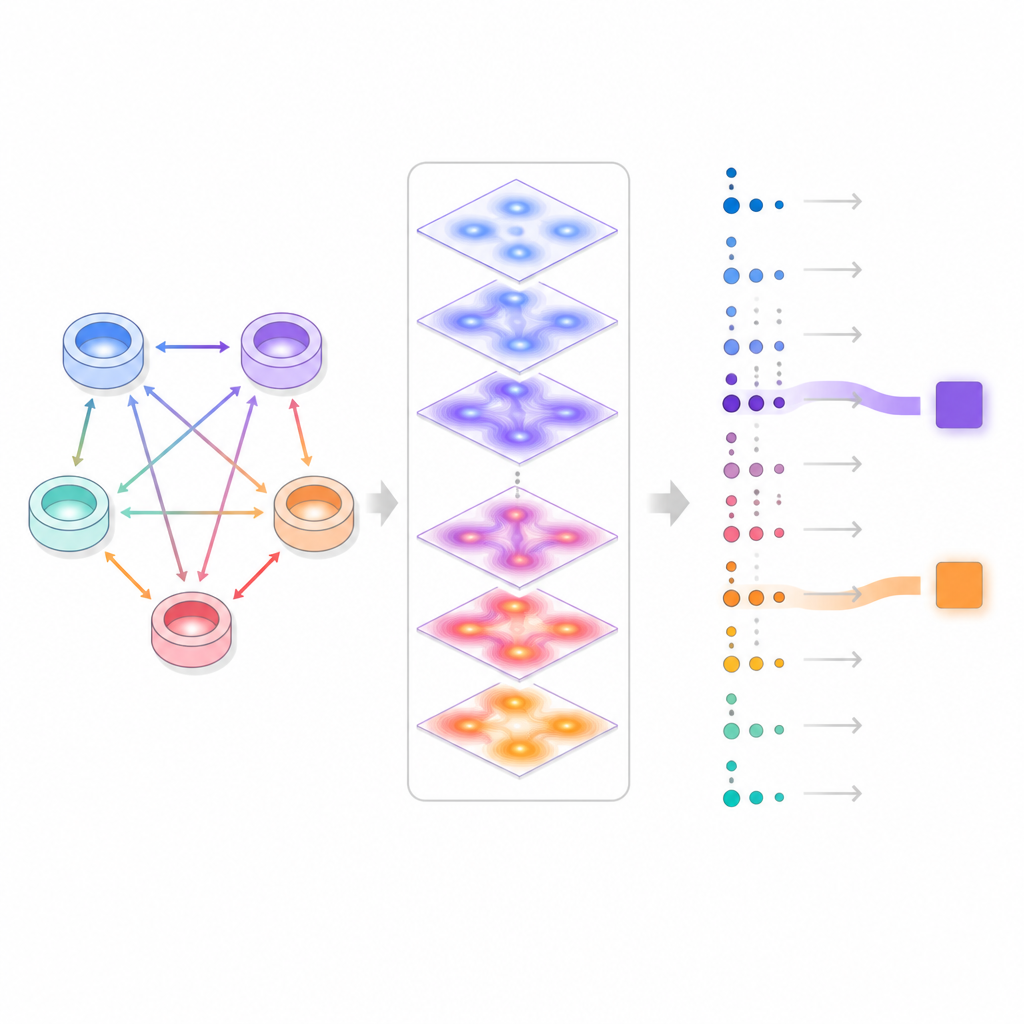

The authors focus on bosonic systems: photons stored in microwave or optical resonators. When driven by external signals, these resonators can exchange energy with one another and create pairs of photons. On their own, these interactions are described by linear equations, which are usually too simple for powerful learning. The key trick is to keep the physical evolution linear but read out the system by photon counting, that is, by measuring in the Fock (photon-number) basis, which naturally produces nonlinear responses. By carefully choosing how to drive and couple the resonators, the device behaves like an analog neural network that maps input data to useful output features.
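
To see why a linear device can still respond nonlinearly, here is a minimal sketch under simplifying assumptions of our own, not the paper's setup: two lossless resonators in coherent states, a single beam-splitter-type coupling, and a hypothetical encoding in which the input x sets one drive amplitude. The amplitudes evolve linearly, but the probability of counting zero photons, exp(-|α|^2), is not linear in x.

```python
import cmath
import math


def beam_splitter(theta, phi):
    """2x2 unitary for a lossless, linear coupling between two modes."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -cmath.exp(-1j * phi) * s],
            [cmath.exp(1j * phi) * s, c]]


def evolve(u, alpha):
    """Linear evolution: coherent amplitudes transform as alpha_out = U alpha_in."""
    return [u[0][0] * alpha[0] + u[0][1] * alpha[1],
            u[1][0] * alpha[0] + u[1][1] * alpha[1]]


def vacuum_probability(amplitude):
    """Probability of counting zero photons in a coherent state: exp(-|alpha|^2)."""
    return math.exp(-abs(amplitude) ** 2)


def feature(x, theta=0.7, phi=0.3):
    """Hypothetical encoding: the input x sets the drive amplitude of mode 0."""
    alpha_out = evolve(beam_splitter(theta, phi), [complex(x), 0j])
    # Photon counting on mode 1: its zero-photon probability is the output feature.
    return vacuum_probability(alpha_out[1])


print(feature(1.0), feature(2.0))  # doubling x does not double the output
```

Doubling the input doubles the output amplitudes exactly, yet the measured feature drops sharply instead of doubling: the nonlinearity comes entirely from the readout.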

Letting a classical computer handle the hard part

Training ordinary neural networks relies on backpropagation, an efficient way to compute how the loss function changes with every parameter so that all parameters can be adjusted along these gradients. Applying this idea directly to a large quantum system is usually impossible, because simulating its full dynamics quickly becomes intractable. The innovation in this work is to exploit the special structure of so-called Gaussian states, which are fully described by the mean values of the field quadratures and their covariance matrix, so the amount of data to track grows only quadratically with the number of resonators. The quantum hardware would perform the forward step, evolving the physical fields, while a classical model of the same Gaussian dynamics, which is easy to simulate, is used to compute gradients. This hybrid strategy allows end-to-end training of the physical drive strengths and couplings without extracting gradient information from the quantum device itself.
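
The Gaussian shortcut can be sketched in a few lines of NumPy. In this illustration (our own conventions, not the paper's code), a two-resonator state is stored as a mean vector and a covariance matrix in the quadrature ordering (x1, p1, x2, p2), with vacuum covariance I/2. Each linear operation is a symplectic matrix S acting as mean → S·mean and cov → S·cov·Sᵀ, and the zero-photon probability of one mode follows from its 2×2 block. Gradients are taken here by central finite differences on the classical model, a simple stand-in for the automatic differentiation a real training loop would use.

```python
import numpy as np


def two_mode_squeezer(r):
    """Symplectic matrix of pair creation between two modes, ordering (x1, p1, x2, p2)."""
    c, s = np.cosh(r), np.sinh(r)
    z, eye = np.diag([1.0, -1.0]), np.eye(2)
    return np.block([[c * eye, s * z], [s * z, c * eye]])


def beam_splitter(theta):
    """Symplectic matrix of a lossless energy-exchange coupling."""
    c, s = np.cos(theta), np.sin(theta)
    eye = np.eye(2)
    return np.block([[c * eye, s * eye], [-s * eye, c * eye]])


def evolve(S, mean, cov):
    """Gaussian states stay Gaussian: means map linearly, covariances as S cov S^T."""
    return S @ mean, S @ cov @ S.T


def vacuum_probability(mean, cov, mode):
    """P(n = 0) = exp(-m^T A^-1 m / 2) / sqrt(det A), with A = cov_block + I/2."""
    idx = slice(2 * mode, 2 * mode + 2)
    m, a = mean[idx], cov[idx, idx] + 0.5 * np.eye(2)
    return float(np.exp(-0.5 * m @ np.linalg.solve(a, m)) / np.sqrt(np.linalg.det(a)))


def forward(params):
    """Classical model of the forward pass: pair creation, then a coupling, then readout."""
    r, theta = params
    mean, cov = np.zeros(4), 0.5 * np.eye(4)  # both modes start in vacuum
    for S in (two_mode_squeezer(r), beam_splitter(theta)):
        mean, cov = evolve(S, mean, cov)
    return vacuum_probability(mean, cov, mode=0)


def gradient(f, params, eps=1e-6):
    """Central finite differences on the classical model (stand-in for autodiff)."""
    params = np.asarray(params, dtype=float)
    grad = np.zeros_like(params)
    for i in range(params.size):
        step = np.zeros_like(params)
        step[i] = eps
        grad[i] = (f(params + step) - f(params - step)) / (2 * eps)
    return grad


print(forward([0.5, 0.0]), gradient(forward, [0.5, 0.0]))
```

With θ = 0 the output reduces to 1/cosh²(r), the vacuum probability of one half of a two-mode squeezed vacuum, which makes a convenient sanity check for the simulator.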

Teaching the device to recognize patterns

To test their approach, the researchers simulate several learning tasks of increasing difficulty. First, they ask a small two-resonator network to distinguish sine waves from square waves presented as short time series. By measuring only the probability that one resonator contains zero photons after each input, and training the physical parameters with gradient-based methods, the model reaches perfect classification. The researchers compare it with an untrained “reservoir” version of the same hardware, whose physical parameters stay fixed and which relies on many output readings. The trained network needs far fewer measured quantities and many fewer experimental shots to reach the same accuracy.
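
Each measured probability, such as the chance of finding zero photons in one resonator, must be estimated from repeated experimental runs (shots), and its statistical error shrinks only as one over the square root of the number of shots. The sketch below uses a simple binomial model that ignores detector inefficiency and dark counts (our assumption, not the paper's noise model); the function names are hypothetical.

```python
import math
import random


def shots_needed(p, target_std):
    """Smallest N with binomial standard error sqrt(p (1 - p) / N) <= target_std."""
    return math.ceil(p * (1.0 - p) / target_std ** 2)


def estimate_vacuum_probability(p_true, shots, seed=0):
    """Simulate photon-counting runs; each run reports 'zero photons' with probability p_true."""
    rng = random.Random(seed)
    zeros = sum(1 for _ in range(shots) if rng.random() < p_true)
    return zeros / shots


print(shots_needed(0.5, 0.01), estimate_vacuum_probability(0.3, 20000))
```

Every extra output a classifier depends on must be estimated to a useful precision, which is one reason a model that needs a single well-chosen probability can get by with fewer shots.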

Finding the best way to feed in data

The team then studies a classic benchmark in which points must be assigned to one of two interlaced spirals in the plane, a task that demands strong nonlinearity. Using four coupled resonators, they compare several ways of encoding the two input coordinates into the physical controls, such as the amplitude or phase of the driving tones and of the different coupling processes. Embedding the data into the strength or phase of the parametric interaction that creates photon pairs works particularly well, enabling perfect classification while reading out just one photon-counting probability. Other encodings need many more measured outputs or never reach full accuracy. How data is written into the quantum device therefore strongly shapes its effective nonlinearity.
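
The following sketch makes the encoding question concrete. It builds a two-spirals dataset (one common construction; the study's exact dataset parameters are not given here) and applies a hypothetical encoding in which the x coordinate sets the phase of a two-mode pair-creation interaction and the y coordinate scales its strength, with both resonators driven by fixed coherent tones. The readout is the zero-photon probability of the first resonator, computed with the same Gaussian formulas as a classical simulator would use.

```python
import numpy as np


def two_spirals(n_per_class, turns=1.5, noise=0.0, seed=0):
    """Two interlaced spirals in the plane with labels 0 and 1."""
    rng = np.random.default_rng(seed)
    t = np.linspace(0.1, 1.0, n_per_class)  # normalized distance along the spiral
    angle = 2 * np.pi * turns * t
    arm = np.stack([t * np.cos(angle), t * np.sin(angle)], axis=1)
    points = np.concatenate([arm, -arm])  # second arm: first arm rotated by pi
    points = points + noise * rng.standard_normal(points.shape)
    labels = np.concatenate([np.zeros(n_per_class, int), np.ones(n_per_class, int)])
    return points, labels


def pair_creation(r, phi):
    """Symplectic matrix of two-mode pair creation with strength r and phase phi."""
    c, s = np.cosh(r), np.sinh(r)
    b = np.array([[np.cos(phi), np.sin(phi)], [np.sin(phi), -np.cos(phi)]])
    eye = np.eye(2)
    return np.block([[c * eye, s * b], [s * b, c * eye]])


def encoded_feature(point, r=0.8, drive=(1.0, 1.0)):
    """Hypothetical encoding: x sets the pair-creation phase, y scales its strength."""
    x, y = point
    S = pair_creation(r * (1.0 + y), np.pi * x)
    mean = S @ np.array([drive[0], 0.0, drive[1], 0.0])  # both modes coherently driven
    cov = 0.5 * S @ S.T                                   # starting from vacuum noise I/2
    a = cov[:2, :2] + 0.5 * np.eye(2)
    m = mean[:2]
    return float(np.exp(-0.5 * m @ np.linalg.solve(a, m)) / np.sqrt(np.linalg.det(a)))


points, labels = two_spirals(100)
features = [encoded_feature(p) for p in points]
```

Because the pair-creation phase decides whether the drive on the second resonator adds to or cancels the first resonator's amplitude, changing x alone moves the output substantially. This is one way an encoding choice can inject nonlinearity without any nonlinear physics.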

From handwritten digits to future devices

Finally, the authors tackle a small image-recognition task with handwritten digits represented as 8 × 8 pixel grids. With six resonators and multiple pair-creation processes, they feed the pixels in over several time slices, much like re-applying the same quantum circuit layer to a fresh portion of the data each time. After training a few hundred physical and classical parameters, the model classifies unseen digits with over 97 percent accuracy while measuring only a modest set of photon-counting outcomes. When the same hardware is instead used as an untrained reservoir, performance saturates far lower even with more measurements, underlining the benefit of optimizing the physical interactions.
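
The time-slicing idea can be sketched as follows. This is a deliberately simplified model of our own: it keeps only coherent amplitudes, linear couplings (random unitaries standing in for trained ones) and data-dependent drives, and it omits the pair-creation processes used in the study. It also assumes, hypothetically, that the 64 pixels are already scaled to [0, 1] and split into four slices of 16, each slice entering through its own drive weights.

```python
import numpy as np

N_MODES = 6
N_SLICES = 4  # hypothetical: 64 pixels split into 4 slices of 16


def random_unitary(n, rng):
    """Random n x n unitary (QR of a complex Gaussian matrix), a stand-in for trained couplings."""
    z = rng.standard_normal((n, n)) + 1j * rng.standard_normal((n, n))
    q, r = np.linalg.qr(z)
    return q * (np.diag(r) / np.abs(np.diag(r)))  # fix column phases; q stays unitary


def init_params(seed=0):
    """One coupling unitary and one drive-weight matrix per time slice (hypothetical)."""
    rng = np.random.default_rng(seed)
    couplings = [random_unitary(N_MODES, rng) for _ in range(N_SLICES)]
    weights = [0.1 * (rng.standard_normal((N_MODES, 16)) + 1j * rng.standard_normal((N_MODES, 16)))
               for _ in range(N_SLICES)]
    return couplings, weights


def forward(image, couplings, weights):
    """Feed an 8x8 image in slices, then return each mode's zero-photon probability."""
    pixels = np.asarray(image, dtype=float).reshape(N_SLICES, -1)  # 4 slices of 16 pixels
    alpha = np.zeros(N_MODES, dtype=complex)  # all modes start in vacuum
    for u, w, x in zip(couplings, weights, pixels):
        alpha = u @ alpha + w @ x  # linear coupling, then a data-dependent drive
    return np.exp(-np.abs(alpha) ** 2)


couplings, weights = init_params()
features = forward(np.random.default_rng(1).random((8, 8)), couplings, weights)
```

The six output probabilities would then feed a small classical readout, and in training both the physical parameters (here `couplings` and `weights`) and the readout would be updated together.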

Why this matters for quantum technology

The study demonstrates that networks built from linearly evolving bosonic modes, combined with nonlinear photon counting, can be both expressive and trainable using familiar gradient tools. While the present work relies on classical simulation to guide training and so is limited in size, the underlying ingredients match well with existing superconducting and photonic platforms that already support tunable parametric couplings. This opens a realistic route toward quantum hardware that not only processes information in a quantum way but can also be trained like today’s neural networks, potentially serving as intelligent front ends for future quantum sensors and processors.

Citation: Dudas, J., Carles, B., Gouzien, E. et al. Training the parametric interactions in an analog bosonic quantum neural network with Fock basis measurement. Sci Rep 16, 14997 (2026). https://doi.org/10.1038/s41598-026-45038-7

Keywords: quantum neural networks, bosonic modes, Gaussian dynamics, photon counting, quantum machine learning