Clear Sky Science · en

Comparing energy consumption and accuracy in text classification inference

Why Power-Hungry AI Matters

Behind the scenes of chatbots and smart document tools, computers are quietly burning electricity. As large language models grow bigger and more common, their appetite for power raises questions for climate goals and public budgets. This paper asks a simple but crucial question: when we use AI to sort and label text, do we really need the largest models, or can smaller, lighter tools do the job just as well while using far less energy?

Sorting Real-World Complaints

The authors ground their study in a concrete task from German public administration: processing written objections from citizens about where to store high-level radioactive waste. Hundreds of short statements had to be grouped into categories such as data issues or site requirements so they could be sent to the right experts. This is a classic text classification problem that governments, companies, and NGOs face whenever they triage emails, support tickets, or public comments.

To study this, the researchers used a cleaned public dataset of 378 labeled submissions. They split it into equal halves for training and testing and repeated every experiment ten times with different random splits to avoid flukes. They then compared traditional machine‑learning models—such as logistic regression and gradient boosting fed with simple text features—to a wide range of modern large language models, including recent open models from the Llama, Qwen, Phi, Jamba, and DeepSeek families. All large language models were used “out of the box” in a zero‑shot mode: they received task instructions and the text, but no additional training on the specific categories.
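The evaluation protocol described above (equal train/test halves, repeated ten times with different random splits) can be sketched roughly as follows. This is a minimal illustration, not the authors' code: the function names, the toy data, and the majority-class placeholder classifier are all assumptions made for the example, and any real classifier could be passed in as `fit` instead.

```python
import random
from collections import Counter

def majority_baseline(train_texts, train_labels):
    """Placeholder classifier (assumption): always predicts the most common training label."""
    most_common = Counter(train_labels).most_common(1)[0][0]
    return lambda text: most_common

def repeated_split_accuracy(texts, labels, fit, n_repeats=10, seed=0):
    """Return mean accuracy and per-split accuracies over random 50/50 splits."""
    rng = random.Random(seed)
    indices = list(range(len(texts)))
    half = len(indices) // 2
    scores = []
    for _ in range(n_repeats):
        rng.shuffle(indices)  # a new random split on every repeat
        train_idx, test_idx = indices[:half], indices[half:]
        predict = fit([texts[i] for i in train_idx],
                      [labels[i] for i in train_idx])
        correct = sum(predict(texts[i]) == labels[i] for i in test_idx)
        scores.append(correct / len(test_idx))
    return sum(scores) / len(scores), scores

# Toy, made-up data purely for illustration (not from the study).
toy_texts = [f"statement {i}" for i in range(20)]
toy_labels = ["data issues"] * 12 + ["site requirements"] * 8
mean_acc, per_split = repeated_split_accuracy(toy_texts, toy_labels, majority_baseline)
```

Averaging over several splits is what guards against a single lucky or unlucky division of a small dataset.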

Measuring Electricity, Not Just Correct Answers

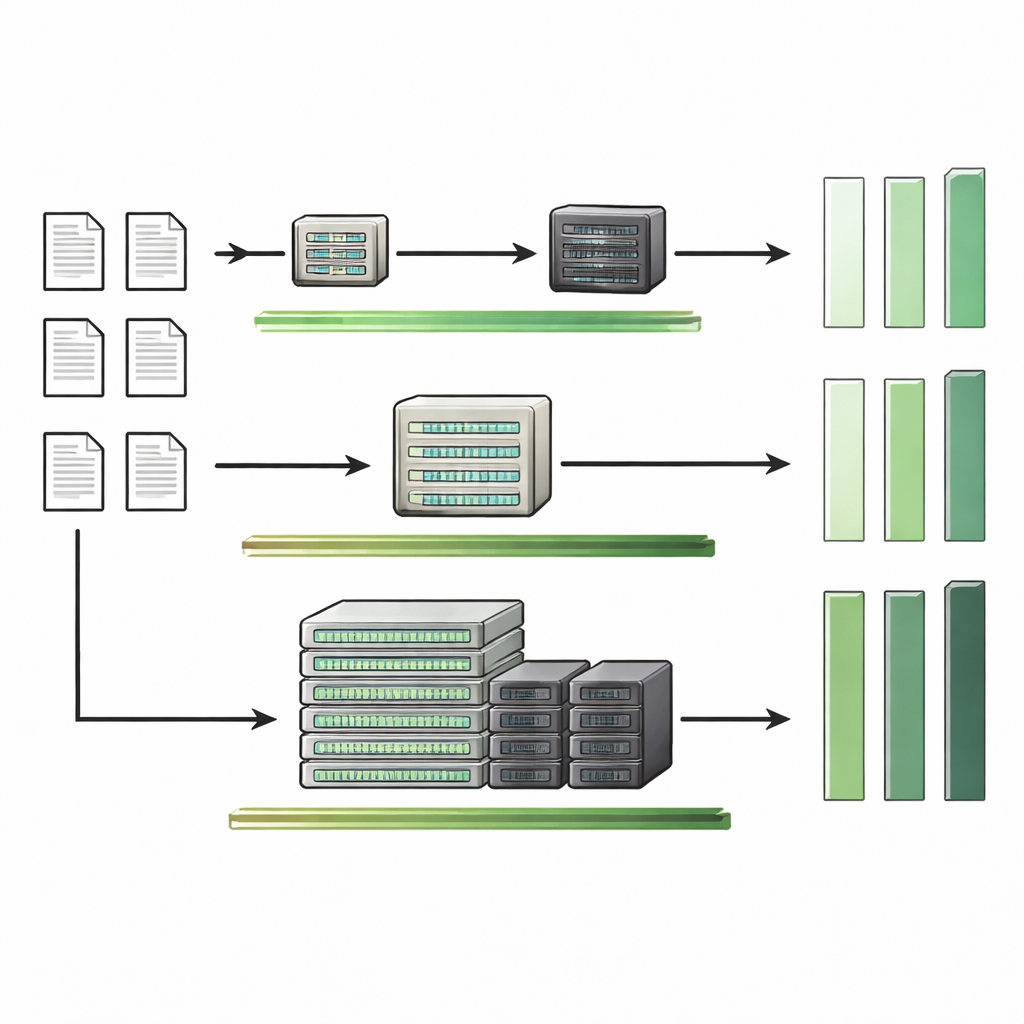

Most AI papers highlight accuracy and little else. Here, the authors measure not only how often each model sorts the text correctly, but also how much energy it consumes while doing so and how long it takes. They run their experiments on three high‑performance computing clusters equipped with different generations of NVIDIA GPUs. Using the CodeCarbon toolkit, they estimate the energy consumed by processors, graphics cards, and memory during inference, the phase in which models are actually used to make predictions. They focus on “warm start” conditions that mirror real deployments, where a model stays loaded in memory and processes many documents in sequence.

This setup lets them probe several practical questions: Are big models always more accurate? Do more GPUs save time without saving energy? How much does hardware choice matter? And can simple runtime—the wall‑clock time a model needs—stand in as a rough proxy for its energy use when direct measurements are not available?
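To make the “warm start” idea concrete, here is a minimal sketch that records only wall‑clock time. The model is loaded once, outside the timed region, and only the prediction loop is measured. The keyword classifier and the function names are hypothetical stand‑ins; the study estimated energy with CodeCarbon, which this sketch does not reproduce.

```python
import time

def load_model():
    """Hypothetical stand-in for an expensive model load (assumption for illustration)."""
    keywords = {"data": "data issues", "site": "site requirements"}

    def classify(text):
        lowered = text.lower()
        for keyword, label in keywords.items():
            if keyword in lowered:
                return label
        return "other"

    return classify

def timed_warm_inference(classify, documents):
    """Time only the prediction loop; the model is assumed to be loaded already."""
    start = time.perf_counter()
    predictions = [classify(doc) for doc in documents]
    elapsed_s = time.perf_counter() - start
    return predictions, elapsed_s

model = load_model()  # done once, outside the timed region
docs = ["The data are outdated.", "This site is unsuitable.", "General remark."]
preds, seconds = timed_warm_inference(model, docs)
```

Keeping the load step out of the measurement matters because a deployed service pays that cost once, while the per-document cost is paid on every request.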

Smaller Models, Smaller Bills

The core finding is striking: for the radioactive‑waste dataset, a traditional linear model built on pre‑computed sentence embeddings is both the most accurate and far more energy‑efficient than any of the large language models tested. Even the simplest traditional models beat several large models while sipping tiny amounts of energy. In contrast, some of the biggest models, especially those with added internal “reasoning” steps, consume hundreds to thousands of times more electricity without delivering better results.

Looking across different hardware settings, the GPU dominates energy use whenever large models are involved. Adding more GPUs speeds up inference but generally does not cut total energy, and spreading a model over multiple computer nodes actually makes things worse due to communication overhead. When the authors examine multiple datasets beyond the nuclear‑waste case (news topics, customer reviews, movie sentiment, and emotions), they find a more nuanced picture: on some tasks, large language models do achieve noticeably higher accuracy, yet this improvement often comes at steep energy costs. In every setting, energy use scales nearly linearly with runtime, meaning that how long a model takes is a very good stand‑in for how much energy it consumes on a given machine.
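If energy grows roughly linearly with runtime on a given machine, a handful of measured runs is enough to calibrate a simple conversion factor. The sketch below fits a line through the origin by least squares. The numbers are invented for illustration and are not results from the paper, and the function names are assumptions.

```python
def fit_energy_per_second(runtimes_s, energies_wh):
    """Least-squares slope of a line through the origin: energy ~ slope * runtime."""
    numerator = sum(t * e for t, e in zip(runtimes_s, energies_wh))
    denominator = sum(t * t for t in runtimes_s)
    return numerator / denominator

def estimate_energy_wh(slope_wh_per_s, runtime_s):
    """Estimate energy for a new run on the same machine from its runtime alone."""
    return slope_wh_per_s * runtime_s

# Made-up calibration runs on one hypothetical machine (NOT data from the study).
runtimes_s = [10.0, 20.0, 40.0, 80.0]
energies_wh = [0.5, 1.0, 2.1, 3.9]

slope = fit_energy_per_second(runtimes_s, energies_wh)
average_power_w = slope * 3600.0           # Wh per second converted to watts
estimate = estimate_energy_wh(slope, 100.0)
```

The slope is simply the machine's average power draw under that workload, which is why a factor calibrated on one machine should not be reused on different hardware.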

Toward Climate‑Conscious AI Choices

Beyond the numbers, the paper argues that sustainable AI should be judged on at least two separate axes: how well it performs a task and how many resources it consumes. Bigger is not automatically better, and relying by default on massive general‑purpose models for routine classification risks unnecessary emissions, higher operating costs, and longer processing times. The authors recommend that organizations start with transparent, lightweight models as baselines, move to larger language models only when they demonstrably improve accuracy, and always weigh that gain against energy and hardware demands.
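One way to weigh the two axes against each other is to discard every model that another candidate beats on both accuracy and energy, then choose among the remainder. The sketch below is one possible reading of that recommendation, not a procedure taken from the paper. The candidate names and figures are hypothetical.

```python
from typing import NamedTuple

class Candidate(NamedTuple):
    name: str
    accuracy: float   # higher is better
    energy_wh: float  # lower is better

def pareto_front(candidates):
    """Keep candidates that no other candidate matches or beats on both axes."""
    front = []
    for c in candidates:
        dominated = any(
            o.accuracy >= c.accuracy
            and o.energy_wh <= c.energy_wh
            and (o.accuracy > c.accuracy or o.energy_wh < c.energy_wh)
            for o in candidates
        )
        if not dominated:
            front.append(c)
    return sorted(front, key=lambda c: c.energy_wh)

# Hypothetical candidates with made-up numbers (NOT results from the study).
candidates = [
    Candidate("small-baseline", 0.80, 0.01),
    Candidate("medium-model", 0.78, 5.0),
    Candidate("large-model", 0.85, 500.0),
]
front = pareto_front(candidates)
```

In this toy example the medium model drops out because the small baseline is both more accurate and cheaper, leaving a real trade-off only between the baseline and the large model.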

What This Means for Everyday Systems

For lay readers, the message is clear: when an AI system tags your email, routes your complaint, or classifies a document, a carefully chosen small model may serve you just as well as a giant one, while being cheaper, faster, and kinder to the planet. By showing that energy use can differ by six orders of magnitude for similar accuracy, and that simple timing measurements can approximate energy needs, this study offers a practical toolkit for more climate‑aware AI decisions in government and beyond.

Citation: Zschache, J., Hartwig, T. Comparing energy consumption and accuracy in text classification inference. Sci Rep 16, 12717 (2026). https://doi.org/10.1038/s41598-026-45023-0

Keywords: energy-efficient AI, text classification, large language models, sustainable computing, public administration data