Clear Sky Science · en

Machine learning prediction of common bile duct stones using synthetic data to guide emergency ERCP decisions

Why this matters in the emergency room

When people arrive at the emergency room with severe belly pain from gallstones, doctors must quickly decide who truly needs an invasive procedure called ERCP to remove stones from the common bile duct. ERCP can be life‑saving, but it is costly and carries real risks, including pancreatitis and even perforation. This study describes a carefully designed artificial intelligence (AI) tool, trained partly on realistic "made‑up" patient records, that predicted who actually had these dangerous stones more accurately than current guidelines. In doing so, it cut down on unnecessary procedures without missing more stones.

The problem of risky procedures and uncertain guidelines

Stones in the common bile duct occur in a sizeable fraction of patients with symptomatic gallstones and, if not treated promptly, can lead to serious infections, pancreatitis, and liver damage. The current standard treatment, ERCP, involves passing an endoscope through the mouth and stomach into the first part of the small intestine (the duodenum), then guiding instruments into the bile duct with the help of X‑ray imaging. Patients are exposed to radiation, and complications occur in roughly one in ten cases. To help decide who should get ERCP, medical societies in the United States and Europe created step‑by‑step guidelines based on blood tests and imaging. However, real‑world studies have shown that these rules still send many patients without stones to the procedure, while missing some patients who do have them.
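To see why fixed checklists can misfire, here is a minimal sketch of a rule-based tiering function in the spirit of these guidelines. The field names and cut-offs (bilirubin above 4 mg/dL, a duct wider than 6 mm, age over 55) are illustrative placeholders chosen for this example, not a faithful copy of either society's published criteria.

```python
from dataclasses import dataclass

# Placeholder cut-offs for illustration only; not the published guideline values.
BILIRUBIN_HIGH_MG_DL = 4.0
DUCT_DILATED_MM = 6.0
AGE_CUTOFF_YEARS = 55


@dataclass
class Presentation:
    """Hypothetical summary of one emergency-room patient."""
    stone_seen_on_imaging: bool
    cholangitis: bool
    bilirubin_mg_dl: float
    duct_diameter_mm: float
    abnormal_liver_tests: bool
    age_years: int


def guideline_tier(p: Presentation) -> str:
    """Assign 'high', 'intermediate' or 'low' risk using fixed yes/no rules."""
    dilated = p.duct_diameter_mm > DUCT_DILATED_MM
    # Any single strong criterion puts the patient in the high tier (ERCP).
    if p.stone_seen_on_imaging or p.cholangitis:
        return "high"
    if p.bilirubin_mg_dl > BILIRUBIN_HIGH_MG_DL and dilated:
        return "high"
    # Any weaker criterion gives an intermediate tier (further imaging).
    if p.abnormal_liver_tests or p.age_years > AGE_CUTOFF_YEARS or dilated:
        return "intermediate"
    return "low"


# Bilirubin just under the cut-off drops this patient out of the high tier.
borderline = Presentation(False, False, 3.9, 9.0, True, 60)
print(guideline_tier(borderline))  # intermediate
```

Each rule is a hard yes/no cut-off, so a patient whose bilirubin sits just below the threshold lands in a lower tier no matter how the other values look. A probability-based model can instead weigh all the inputs together.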

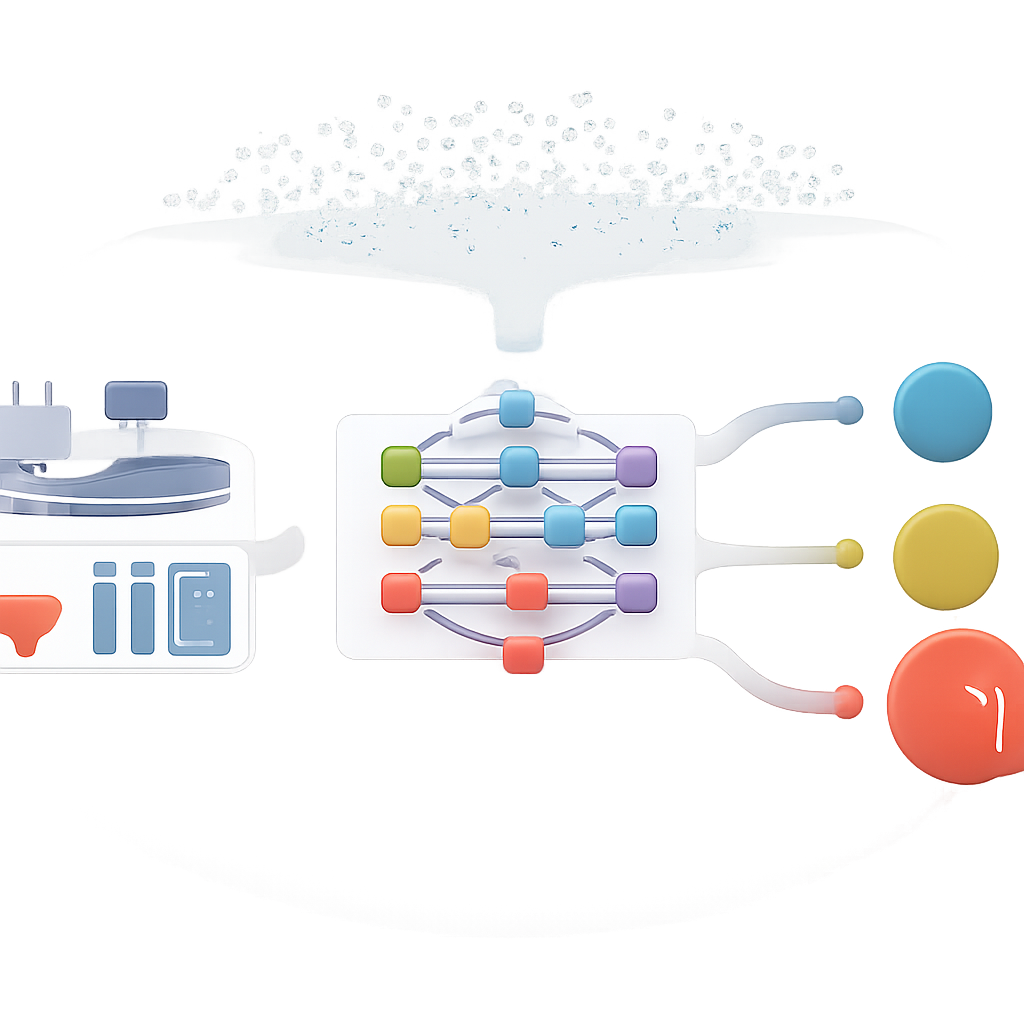

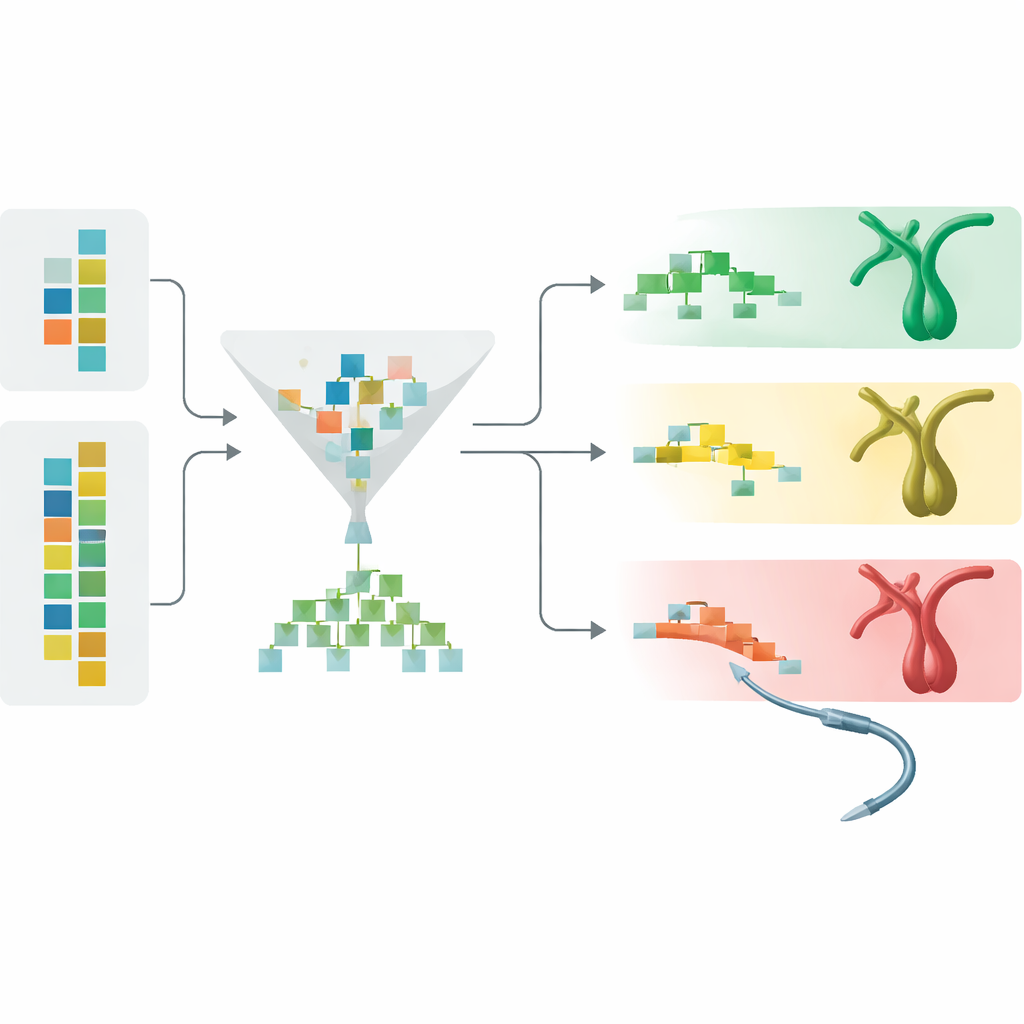

Using real and synthetic patients to train a smarter model

The researchers gathered emergency‑room data from three large hospitals in South Korea on adults thought to have a moderate to high chance of common bile duct stones. They collected vital signs, routine blood tests, and CT scan findings for 733 patients at one hospital and 348 patients at the other two. Instead of relying only on these real records, they used a large language model, software originally built to understand medical text, to generate thousands of additional synthetic patient cases. These artificial records were not random: they were constrained to match the statistical patterns of the real patients, such as the link between a wider bile duct, abnormal liver blood tests, and the presence of stones. A separate filtering step then removed synthetic cases that looked unrealistic or that a preliminary model found confusing.
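The filtering idea can be illustrated with a small sketch. The study's actual filter is not described in detail here, so this version swaps in two simple stand-ins: hypothetical plausibility ranges for each value, and a k-nearest-neighbor vote among real patients playing the role of the "preliminary model". A synthetic record is dropped if any value is out of range or if most of its closest real neighbors carry the opposite label.

```python
import math

FEATURES = ("duct_mm", "bilirubin")
# Hypothetical plausibility ranges; a real filter would cover every input.
PLAUSIBLE = {"duct_mm": (2.0, 30.0), "bilirubin": (0.1, 30.0)}


def is_plausible(rec):
    """True if every checked value lies inside its allowed range."""
    return all(lo <= rec[k] <= hi for k, (lo, hi) in PLAUSIBLE.items())


def neighbor_agreement(rec, real, k=3):
    """Share of the k nearest real patients whose label matches rec's label."""
    def dist(a, b):
        # Unscaled Euclidean distance; fine for a toy example, not for real data.
        return math.sqrt(sum((a[f] - b[f]) ** 2 for f in FEATURES))
    nearest = sorted(real, key=lambda r: dist(rec, r))[:k]
    return sum(r["stone"] == rec["stone"] for r in nearest) / len(nearest)


def filter_synthetic(synthetic, real, min_agreement=2 / 3, k=3):
    """Keep synthetic records that look realistic and agree with similar real ones."""
    kept = []
    for rec in synthetic:
        if not is_plausible(rec):
            continue  # unrealistic values
        if neighbor_agreement(rec, real, k) < min_agreement:
            continue  # label contradicts nearby real patients ("confusing")
        kept.append(rec)
    return kept


real = [
    {"duct_mm": 12, "bilirubin": 3.5, "stone": True},
    {"duct_mm": 13, "bilirubin": 4.0, "stone": True},
    {"duct_mm": 11, "bilirubin": 2.8, "stone": True},
    {"duct_mm": 5, "bilirubin": 0.7, "stone": False},
    {"duct_mm": 6, "bilirubin": 0.9, "stone": False},
    {"duct_mm": 4, "bilirubin": 0.5, "stone": False},
]
synthetic = [
    {"duct_mm": 12.5, "bilirubin": 3.2, "stone": True},  # consistent: kept
    {"duct_mm": 5.5, "bilirubin": 0.8, "stone": True},   # conflicts with neighbors: dropped
    {"duct_mm": 45.0, "bilirubin": 3.0, "stone": True},  # implausible duct size: dropped
]
print(len(filter_synthetic(synthetic, real)))  # 1
```

The two checks catch different problems: range checks reject values no patient could have, while the neighbor vote rejects records whose values are individually plausible but whose label clashes with similar real cases.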

Building a practical prediction tool from everyday data

With this expanded dataset, the team tested multiple machine‑learning approaches and found that a tree‑based method called ExtraTrees (short for "extremely randomized trees") worked best. They narrowed the inputs down to 11 pieces of information that are almost always available in the emergency department: age; heart rate; body temperature; white blood cell, hemoglobin, and platelet counts; several liver‑related blood tests; and whether the common bile duct measured at least 10 millimeters across on CT. Notably, they did not include whether a stone was directly visible on imaging. This choice forces the model to rely on subtler patterns, which may help it flag stones that do not clearly show up on scans.
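For readers curious about what sets ExtraTrees apart from an ordinary decision tree, the sketch below shows how one node of an extremely randomized tree might choose its split. It samples a few features at random, draws a single random cut-point for each (rather than searching every possible cut), and keeps the candidate that most reduces Gini impurity, which is the default scoring used by scikit-learn's `ExtraTreesClassifier`. The helper that turns duct diameter into a yes/no flag at 10 mm mirrors the input described above; all function names are hypothetical, and this is not the study's code.

```python
import random


def duct_flag(duct_mm):
    """Binary input as described: duct at least 10 mm wide on CT."""
    return 1 if duct_mm >= 10 else 0


def gini(labels):
    """Gini impurity of a list of 0/1 labels."""
    if not labels:
        return 0.0
    p = sum(labels) / len(labels)
    return 1.0 - p * p - (1.0 - p) * (1.0 - p)


def extra_trees_split(X, y, n_candidates, rng):
    """Choose one node split the Extra-Trees way.

    Returns (impurity_decrease, feature_index, threshold), or None if no
    sampled feature varies at this node.
    """
    parent = gini(y)
    best = None
    for j in rng.sample(range(len(X[0])), n_candidates):
        values = [row[j] for row in X]
        lo, hi = min(values), max(values)
        if lo == hi:
            continue  # constant feature here: nothing to split on
        t = rng.uniform(lo, hi)  # a single random cut-point, no exhaustive search
        left = [label for row, label in zip(X, y) if row[j] < t]
        right = [label for row, label in zip(X, y) if row[j] >= t]
        child = (len(left) * gini(left) + len(right) * gini(right)) / len(y)
        if best is None or parent - child > best[0]:
            best = (parent - child, j, t)
    return best


# Toy node: columns are [age, duct_flag]; stones track the duct flag here.
X = [[70, duct_flag(12)], [45, duct_flag(11)], [62, duct_flag(7)], [38, duct_flag(6)]]
y = [1, 1, 0, 0]
print(extra_trees_split(X, y, n_candidates=2, rng=random.Random(0)))
```

A full ExtraTrees model grows many such trees on the same data and averages their votes. The extra randomness makes each tree weaker on its own but tends to make the ensemble less prone to overfitting.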

How the AI outperformed existing rules

When the model was tested on new patients from the original hospital, it separated those with and without stones with an area under the ROC curve (AUC) of 0.982; on this scale, 0.5 means no better than chance and 1.0 means perfect separation. On patients from the two outside hospitals, none of whom were used in training, performance remained strong at 0.957, suggesting that the tool generalizes to different settings. Compared with current guidelines, the AI model sharply reduced the fraction of patients undergoing unnecessary ERCP: from roughly 12–23% down to 0% in the internal test group, and from roughly 30–36% down to about 7% in the external hospitals. At the same time, it missed fewer stones (false negatives) than the guidelines did. The model also produced well‑calibrated risk scores, meaning that its predicted probabilities closely matched how often stones were actually found.
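The headline measures above can be computed from a list of predictions with a few lines of code. This sketch computes AUC as the probability that a randomly chosen patient with stones gets a higher score than a randomly chosen patient without them, the two decision rates, and a simple binned calibration table. The definition of "unnecessary ERCP" used here (the share of patients sent to ERCP who had no stone) is an assumption; the study may define it differently.

```python
def roc_auc(scores, labels):
    """AUC as P(score of a random stone patient > score of a random stone-free one); ties count 1/2."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = 0.0
    for p in pos:
        for n in neg:
            wins += 1.0 if p > n else 0.5 if p == n else 0.0
    return wins / (len(pos) * len(neg))


def decision_rates(send_to_ercp, labels):
    """Return (unnecessary-ERCP share, missed-stone share).

    Assumed definitions: unnecessary = stone-free patients among those sent to
    ERCP; missed = stone patients who were not sent to ERCP.
    """
    sent = [y for s, y in zip(send_to_ercp, labels) if s]
    with_stone = [s for s, y in zip(send_to_ercp, labels) if y == 1]
    unnecessary = sum(1 for y in sent if y == 0) / len(sent) if sent else 0.0
    missed = sum(1 for s in with_stone if not s) / len(with_stone) if with_stone else 0.0
    return unnecessary, missed


def calibration_table(probs, labels, n_bins=5):
    """Per equal-width probability bin: (mean predicted risk, observed stone rate, count)."""
    bins = [[] for _ in range(n_bins)]
    for p, y in zip(probs, labels):
        bins[min(int(p * n_bins), n_bins - 1)].append((p, y))
    return [
        (sum(p for p, _ in b) / len(b), sum(y for _, y in b) / len(b), len(b))
        for b in bins
        if b
    ]


probs = [0.95, 0.40, 0.60, 0.10]
labels = [1, 1, 0, 0]
print(roc_auc(probs, labels))  # 0.75
print(decision_rates([p >= 0.5 for p in probs], labels))  # (0.5, 0.5)
```

In a well-calibrated model, the first two numbers in each row of the calibration table are close to each other: patients given a 20% risk have stones about 20% of the time.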

What drives the predictions and how doctors could use them

To make the system more transparent, the researchers examined which features most influenced its decisions. Marked widening of the bile duct on CT emerged as the strongest signal, followed by liver enzyme levels and bilirubin, all familiar red flags to gastroenterologists. Using these risk scores, the team proposed a three‑tiered scheme: high‑risk patients proceed directly to ERCP, intermediate‑high risk patients undergo additional non‑invasive imaging such as MRI‑based cholangiography or endoscopic ultrasound, and intermediate‑low risk patients can often be monitored closely rather than rushed to invasive procedures. Importantly, patients with severe infection of the bile ducts would still go straight to ERCP regardless of model output, preserving current life‑saving practice.
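The three-tier scheme, including the override for severe bile duct infection, amounts to a short decision function. The probability cut-offs below are hypothetical placeholders, since the actual thresholds are not given in this summary, and "MRCP or EUS" stands for the additional imaging step (MRI-based cholangiography or endoscopic ultrasound).

```python
# Hypothetical cut-offs on the model's predicted probability of a stone.
HIGH_RISK = 0.80
INTERMEDIATE_HIGH = 0.40


def triage(prob_stone: float, severe_cholangitis: bool) -> str:
    """Map a predicted risk to the next step in a three-tier scheme."""
    if severe_cholangitis:
        return "ERCP"  # override: severe bile duct infection goes straight to ERCP
    if prob_stone >= HIGH_RISK:
        return "ERCP"  # high risk
    if prob_stone >= INTERMEDIATE_HIGH:
        return "MRCP or EUS"  # intermediate-high: confirm with further imaging first
    return "close monitoring"  # intermediate-low


for prob, cholangitis in [(0.92, False), (0.55, False), (0.15, False), (0.15, True)]:
    print(prob, cholangitis, triage(prob, cholangitis))
```

Placing the cholangitis check first guarantees that no model output can delay ERCP for these patients, which matches the safeguard described above.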

What this means for patients and clinicians

This work suggests that an AI model, trained on a blend of real and carefully curated synthetic data, can guide emergency decisions about ERCP more safely and efficiently than today’s guideline checklists. By using only routinely collected measurements, the tool could be integrated into simple web‑based calculators or hospital systems, helping doctors identify who truly needs an invasive bile duct procedure and who can avoid it. While the authors call for future prospective trials, their results point toward a future in which intelligent, data‑driven support reduces risk, lowers costs, and personalizes care for patients with suspected bile duct stones.

Citation: Kang, S., Park, N., Shin, I.S. et al. Machine learning prediction of common bile duct stones using synthetic data to guide emergency ERCP decisions. Sci Rep 16, 10585 (2026). https://doi.org/10.1038/s41598-026-45014-1

Keywords: bile duct stones, ERCP decision support, medical machine learning, synthetic clinical data, emergency gastroenterology