Clear Sky Science

Using AI to forecast student dropout risk in technical education using a learning analytics approach

Why early warnings for struggling students matter

Many college students quietly slip behind in their online courses long before bad grades show up. In technical fields, where classes are demanding and fast-paced, this can quickly lead to dropping out. This study explores how data from everyday activity in an online learning platform can be turned into an early warning system. By analysing how students move through course materials, an artificial intelligence (AI) model flags patterns that signal trouble early enough for teachers to step in with targeted help.

Following digital footprints in online classes

The research focuses on two computer-aided design (CAD) courses taught through the Moodle learning platform at an applied sciences university. Every click—opening a file, attempting a test, submitting homework—creates a time-stamped log entry. From 12,941 such records for 80 students, the author built a dataset describing 49 different learning resources, from simple introductory pages to complex, multi-step assignments. Because these courses showed unusually high dropout rates, they provided a useful testbed for exploring how activity patterns relate to student success or failure.
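
As a rough illustration of how time-stamped log entries might be rolled up into a per-resource dataset, the sketch below counts events and distinct students for each resource. The row layout, student IDs, and resource names are hypothetical and do not reflect the study's actual Moodle export.

```python
from collections import defaultdict
from datetime import datetime

# Hypothetical log rows: (timestamp, student_id, resource_name, action).
# Real Moodle exports use different columns; these values are illustrative only.
LOG = [
    ("2024-02-01 09:00:00", "s01", "Intro page", "viewed"),
    ("2024-02-01 09:05:00", "s01", "Assignment 1", "viewed"),
    ("2024-02-01 09:40:00", "s01", "Assignment 1", "submitted"),
    ("2024-02-02 10:00:00", "s02", "Intro page", "viewed"),
    ("2024-02-02 10:02:00", "s02", "Assignment 1", "viewed"),
]


def summarise_by_resource(rows):
    """Count log events and distinct students for each learning resource."""
    events = defaultdict(int)
    students = defaultdict(set)
    for timestamp, student, resource, _action in rows:
        # Raises ValueError if a timestamp is malformed.
        datetime.strptime(timestamp, "%Y-%m-%d %H:%M:%S")
        events[resource] += 1
        students[resource].add(student)
    return {
        name: {"events": events[name], "students": len(students[name])}
        for name in events
    }


summary = summarise_by_resource(LOG)
for name, stats in summary.items():
    print(name, stats)
```

Counting distinct students alongside raw events helps separate a resource that many students open once from one that a few students revisit repeatedly.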

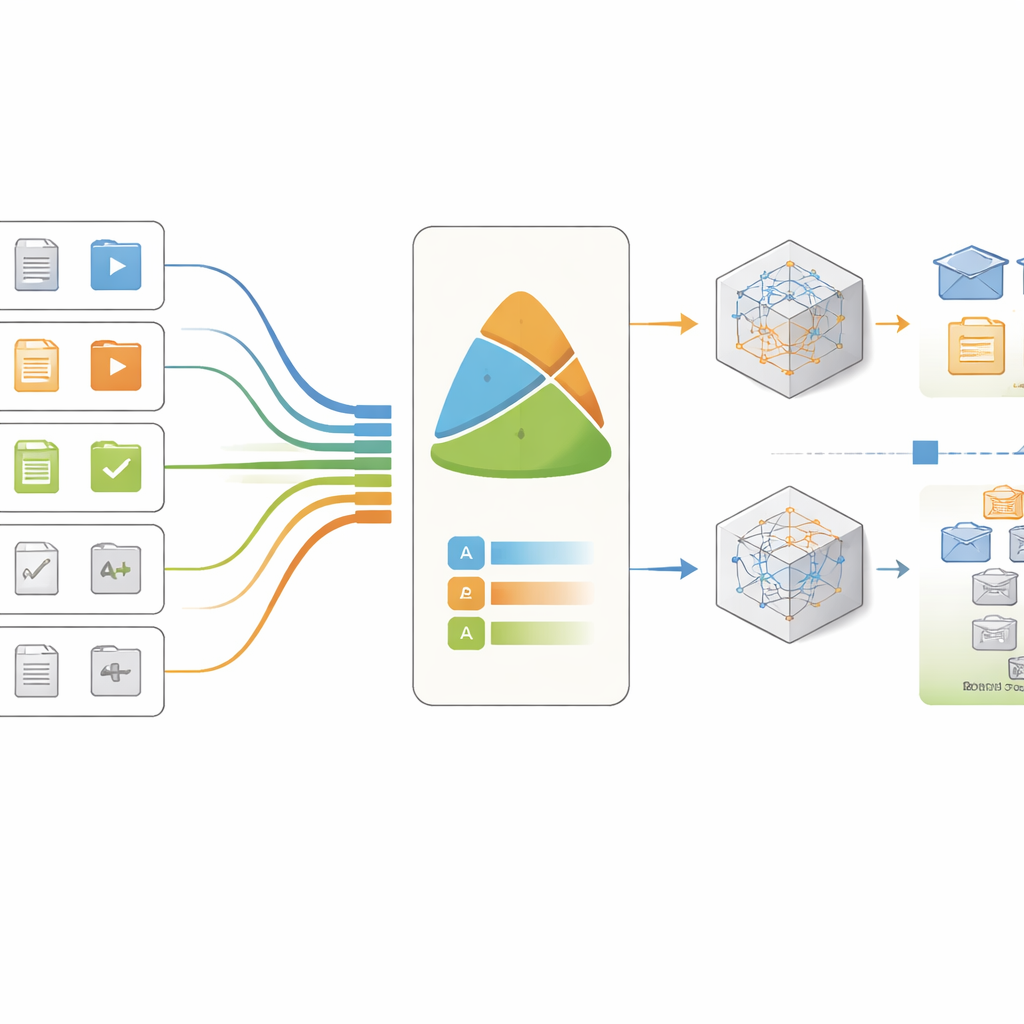

Turning clicks into meaningful learning signals

Rather than feeding raw click counts into an opaque algorithm, the study introduces a "weighted attribute" method that reshapes the data into indicators that teachers can understand. Each resource is scored on three main aspects: how mentally demanding it is, how often students engage with it, and how much time the course expects them to spend on it. These scores are combined into a single effectiveness measure that reflects how well students actually handle that resource. In this way, the model does not just say that a student is in danger; it points to specific tasks or materials that may be causing the problem.
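
One simple way to picture such a combined measure is a weighted average of the three aspects. The sketch below is an assumption for illustration only: the 0 to 1 scaling, the weights, and the example resources are hypothetical, and the study's actual formula is not reproduced here.

```python
from dataclasses import dataclass


@dataclass
class Resource:
    name: str
    difficulty: float     # how mentally demanding, scaled to 0..1 (assumed scale)
    engagement: float     # how often students engage, scaled to 0..1 (assumed scale)
    expected_time: float  # time the course expects, scaled to 0..1 (assumed scale)


# Hypothetical weights; the study's real weighting is not given in this summary.
WEIGHTS = {"difficulty": 0.3, "engagement": 0.4, "expected_time": 0.3}


def effectiveness(resource, weights=WEIGHTS):
    """Weighted average of the three aspects, normalised by the weight total."""
    total = sum(weights.values())
    score = (
        weights["difficulty"] * resource.difficulty
        + weights["engagement"] * resource.engagement
        + weights["expected_time"] * resource.expected_time
    )
    return score / total


project = Resource("Multi-step modelling project", 0.9, 0.8, 0.9)
announcement = Resource("Course announcement", 0.1, 0.3, 0.05)

for r in (project, announcement):
    print(f"{r.name}: {effectiveness(r):.2f}")
```

Because each term stays visible, a teacher can see which aspect drives a low score instead of receiving a single unexplained number.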

What the AI models discovered

Two types of prediction models, logistic regression and random forests, were trained to distinguish more effective from less effective learning tools based on these weighted indicators. To avoid being misled by the relatively small sample, the researcher used repeated cross-validation, which rotates which data are used for training and which for testing. Across these checks, both models performed well, with the random forest slightly ahead but the simpler logistic model offering easier-to-interpret results. In every case, the most important signals were how often students interacted with a resource, how long they spent on it, and how difficult it was designed to be.
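
The rotation of training and testing data can be sketched with the standard library alone. The example below is a minimal illustration, not the study's pipeline: it uses synthetic, well-separated data (49 rows, chosen only to echo the number of resources), a hand-written logistic regression, and hypothetical settings for folds, repeats, and learning rate. The random forest is omitted for brevity.

```python
import math
import random


def train_logistic(X, y, lr=0.5, epochs=300):
    """Fit logistic regression with plain batch gradient descent."""
    n_features = len(X[0])
    w = [0.0] * n_features
    b = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for xi, yi in zip(X, y):
            z = b + sum(wj * xj for wj, xj in zip(w, xi))
            err = 1.0 / (1.0 + math.exp(-z)) - yi
            for j, xj in enumerate(xi):
                grad_w[j] += err * xj
            grad_b += err
        w = [wj - lr * g / len(X) for wj, g in zip(w, grad_w)]
        b -= lr * grad_b / len(X)
    return w, b


def predict(model, xi):
    """Return 1 when the linear score is non-negative, else 0."""
    w, b = model
    return 1 if b + sum(wj * xj for wj, xj in zip(w, xi)) >= 0 else 0


def repeated_kfold_accuracy(X, y, k=5, repeats=3, seed=0):
    """Mean held-out accuracy over `repeats` reshuffled k-fold splits."""
    rng = random.Random(seed)
    idx = list(range(len(X)))
    scores = []
    for _ in range(repeats):
        rng.shuffle(idx)
        folds = [idx[i::k] for i in range(k)]
        for test_idx in folds:
            held_out = set(test_idx)
            train_idx = [i for i in idx if i not in held_out]
            model = train_logistic(
                [X[i] for i in train_idx], [y[i] for i in train_idx]
            )
            correct = sum(predict(model, X[i]) == y[i] for i in test_idx)
            scores.append(correct / len(test_idx))
    return sum(scores) / len(scores)


# Synthetic data: label 1 rows have high indicator values, label 0 rows low ones.
data_rng = random.Random(42)
X, y = [], []
for i in range(49):
    label = i % 2
    low, high = (0.6, 1.0) if label else (0.0, 0.4)
    X.append([data_rng.uniform(low, high) for _ in range(3)])
    y.append(label)

accuracy = repeated_kfold_accuracy(X, y)
print(f"mean held-out accuracy: {accuracy:.2f}")
```

Every row is held out exactly once per repeat, so the averaged score depends less on any single lucky or unlucky split, which matters most when the sample is small.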

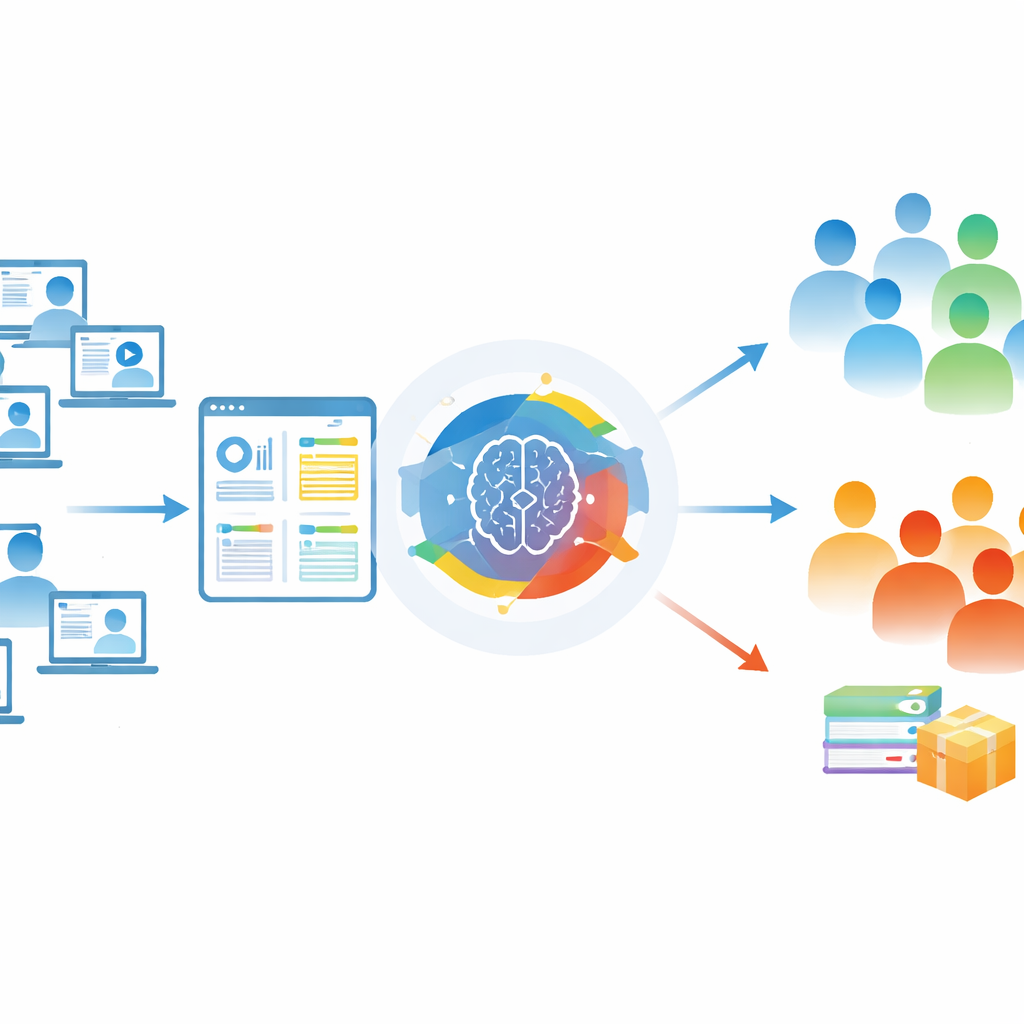

Designing better courses, not replacing teachers

The findings show that complex, time-consuming tasks—such as multi-step modelling projects and substantial homework—are strongly tied to better learning outcomes, whereas basic announcements or short overviews contribute less. This suggests that well-designed challenges, supported by adequate time, are central to student success. The study also outlines how an early warning system could work in practice: an automated dashboard would highlight risky patterns to instructors, who would then decide how to respond, for example by adding examples, adjusting workload, or offering extra support sessions. Importantly, the system is designed to inform teachers, not to automate decisions about students.
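
A dashboard of this kind could start from a rule as simple as the one sketched below, which lists students who have touched only a small share of the high-value resources. The function name, the threshold, and the sample data are all hypothetical. The output is a list for an instructor to review, and nothing is done to students automatically.

```python
def flag_for_review(activity, key_resources, min_share=0.5):
    """Return sorted student IDs who touched less than min_share of key resources."""
    key = set(key_resources)
    flagged = []
    for student, touched in activity.items():
        share = len(set(touched) & key) / len(key)
        if share < min_share:
            flagged.append(student)
    return sorted(flagged)


# Hypothetical activity: student ID -> resources the student has opened so far.
activity = {
    "s01": ["Project A", "Homework 1"],
    "s02": ["Announcements"],
    "s03": ["Project A"],
}
key_resources = ["Project A", "Homework 1"]

flagged = flag_for_review(activity, key_resources)
print("Needs instructor review:", flagged)
```

Keeping the rule this explicit means an instructor can see exactly why a name appears on the list and can adjust the threshold to suit the course.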

What this means for students and educators

For a general reader, the key message is that AI can help universities notice trouble spots before students fail, but only if it is used thoughtfully. By combining course design insight with careful data handling, the weighted attribute method turns raw activity logs into clear signals about which parts of a course help or hinder learning. The study is still a pilot, limited to a small number of technical courses at one institution, so its results are preliminary. Yet it offers a concrete path toward more transparent, fair, and supportive use of AI in education—where data-driven tools help teachers redesign courses and reach struggling students earlier, making it less likely that they quietly drift away from their studies.

Citation: Ovtšarenko, O. Using AI to forecast student dropout risk in technical education using a learning analytics approach. Sci Rep 16, 14616 (2026). https://doi.org/10.1038/s41598-026-44919-1

Keywords: student dropout risk, learning analytics, online technical education, predictive modelling, early warning systems