Clear Sky Science

FAD-MIL: a weakly supervised fracture detection model based on X-ray images

Why broken bones still get missed

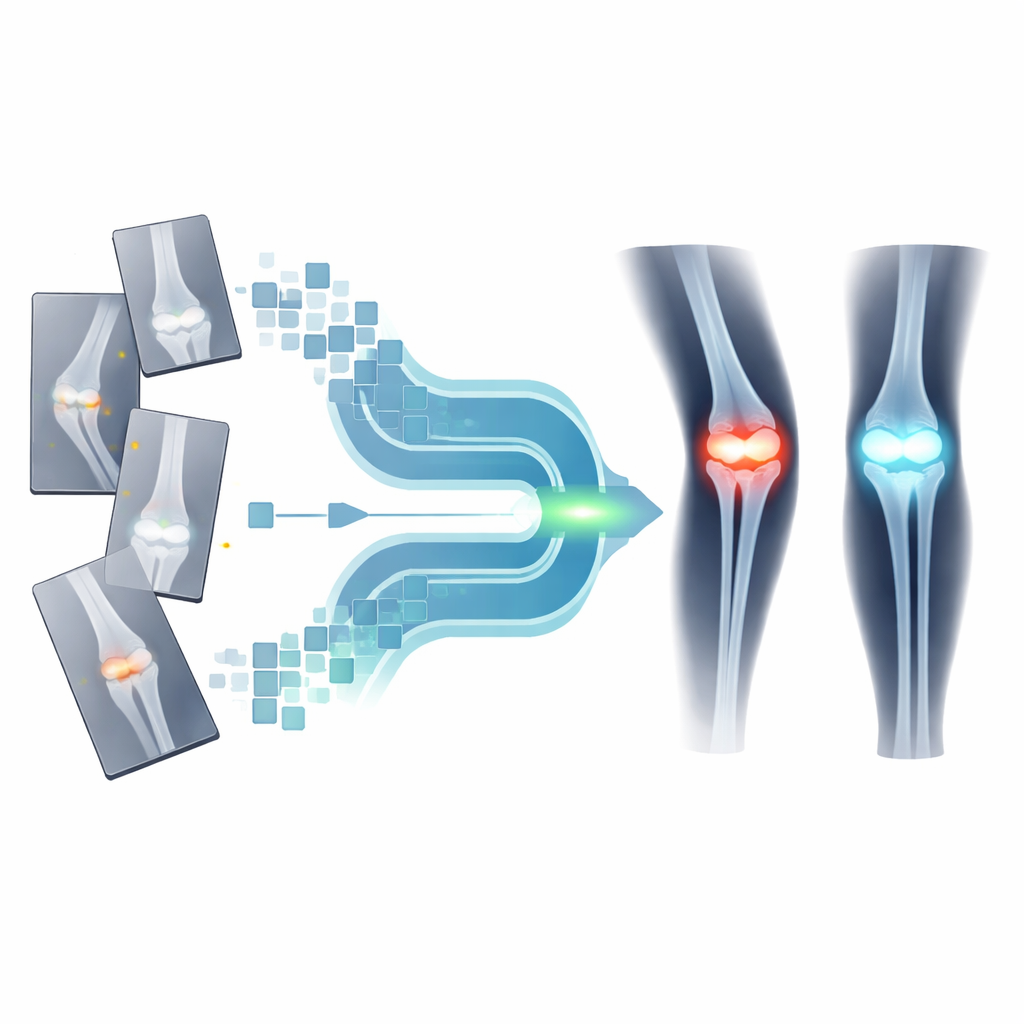

People break bones every day, and X‑ray scans are usually the first step in spotting those fractures. Yet even experienced doctors can miss subtle cracks, especially during busy night shifts or in hospitals with few specialists. This study introduces a computer model called FAD‑MIL that looks at X‑ray images in a new way, aiming to act as an extra pair of sharp eyes. By learning from thousands of past scans without needing labor‑intensive, hand‑drawn markings of every fracture line, the system tries to highlight which images are most likely to hide a break.

Teaching computers from real‑world X‑rays

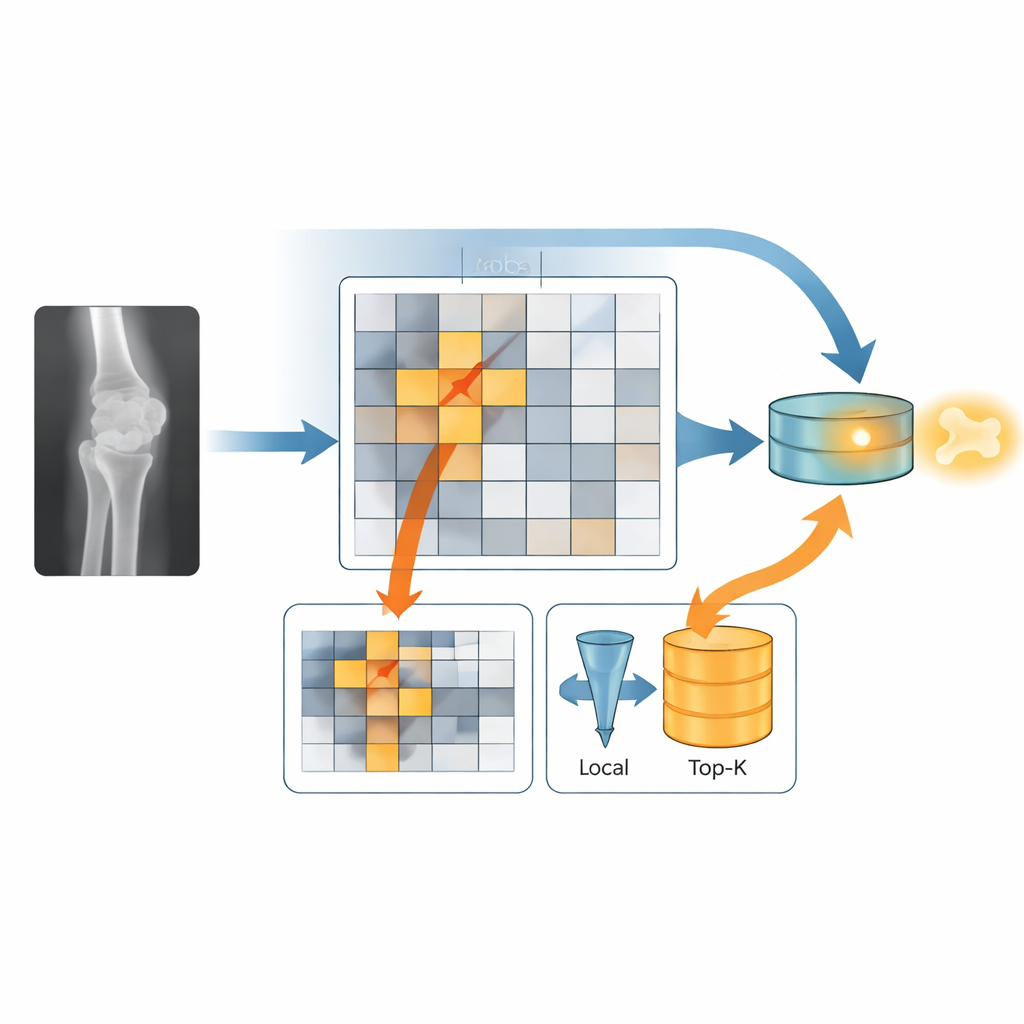

The researchers trained their model on a public collection of over four thousand limb X‑rays called FracAtlas, where each image is labeled simply as “fracture” or “no fracture.” Instead of treating each picture as a single blob of information, they cut every image into many small tiles, like a grid of puzzle pieces. A pre‑trained image‑analysis network turns each tile into a numerical fingerprint that describes its visual patterns. All these fingerprints from one X‑ray are then bundled together as a “bag” of clues that the model must interpret to decide whether a break is present, even though it is never told which exact tile shows the injury.
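The tiling-and-bagging step can be sketched in a few lines of Python. This is an illustration only, not the authors' pipeline: `embed_tile` is a hypothetical stand-in that computes two simple statistics where the real system would call a pre-trained network, and the tile size and toy image are invented for the example.

```python
from statistics import mean, pstdev


def tile_image(image, tile_size):
    """Split a 2D list of pixel values into non-overlapping square tiles."""
    height, width = len(image), len(image[0])
    tiles = []
    for top in range(0, height - tile_size + 1, tile_size):
        for left in range(0, width - tile_size + 1, tile_size):
            rows = image[top:top + tile_size]
            tiles.append([row[left:left + tile_size] for row in rows])
    return tiles


def embed_tile(tile):
    """Hypothetical stand-in for the pre-trained network.

    Returns a tiny "fingerprint" (mean and spread of pixel values).
    A real feature extractor would return a much longer vector.
    """
    pixels = [p for row in tile for p in row]
    return [mean(pixels), pstdev(pixels)]


def make_bag(image, tile_size, label):
    """Bundle all tile fingerprints with a single image-level label."""
    features = [embed_tile(t) for t in tile_image(image, tile_size)]
    return {"features": features, "label": label}


# Toy 4x4 "X-ray" with one bright pixel; label 1 means "fracture".
image = [
    [0, 0, 0, 0],
    [0, 0, 0, 0],
    [0, 0, 9, 0],
    [0, 0, 0, 0],
]
bag = make_bag(image, tile_size=2, label=1)
print(len(bag["features"]), "tiles in the bag; label =", bag["label"])
```

Note that the label is attached to the whole bag, not to any tile. That is what makes the setup weakly supervised: the model has to work out for itself which tiles matter.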

Seeing the big picture and the tiny crack

FAD‑MIL’s key idea is to mimic how a radiologist switches between a broad overview and close inspection. One part of the model, the global stream, looks across all tiles to understand the overall shape of the limb and joint, helping it avoid being fooled by normal bumps and bends. The other part, the local stream, tries to home in on the few tiles most likely to contain a crack. A “fracture gate” gently boosts tiles that look suspicious and downplays the rest, and then only the top fraction of these high‑scoring tiles is kept for closer analysis. Finally, the model fuses the global context and the local hotspots into a single decision about whether the image shows a fracture.
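The gate, top-fraction selection, and fusion steps described above can be sketched as follows. This is a simplified illustration under stated assumptions, not the published architecture: the global stream is reduced to plain averaging, the gate is a single hand-set linear layer, and the names `gate_w`, `clf_w`, and `keep_fraction` are hypothetical. In the real model these weights would be learned.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def mean_pool(vectors):
    """Average a list of equal-length vectors element by element."""
    n = len(vectors)
    return [sum(v[i] for v in vectors) / n for i in range(len(vectors[0]))]


def fad_mil_like_score(features, gate_w, clf_w, keep_fraction=0.25):
    """Return (fracture probability, indices of the kept tiles)."""
    # Global stream (simplified): one summary of all tiles.
    global_vec = mean_pool(features)

    # Fracture gate: a suspicion score in (0, 1) for each tile.
    gates = [sigmoid(sum(w * x for w, x in zip(gate_w, f))) for f in features]
    gated = [[g * x for x in f] for g, f in zip(gates, features)]

    # Local stream: keep only the top fraction of tiles by gate score.
    k = max(1, math.ceil(keep_fraction * len(features)))
    ranked = sorted(range(len(features)), key=lambda i: gates[i], reverse=True)
    top = ranked[:k]
    local_vec = mean_pool([gated[i] for i in top])

    # Fusion: concatenate both views and apply a linear classifier.
    fused = global_vec + local_vec
    logit = sum(w * x for w, x in zip(clf_w, fused))
    return sigmoid(logit), top


# Four toy tile fingerprints; the third one stands out.
features = [[0.1, 0.0], [0.2, 0.1], [3.0, 2.0], [0.0, 0.1]]
prob, kept = fad_mil_like_score(
    features,
    gate_w=[1.0, 1.0],           # assumed, hand-set gate weights
    clf_w=[0.5, 0.5, 1.0, 1.0],  # assumed, hand-set classifier weights
)
print("kept tiles:", kept, "probability:", round(prob, 3))
```

Keeping only a fraction of the tiles is the design choice that stops a single suspicious patch from being averaged away by the many tiles showing normal bone.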

How well the model spots fractures

On the FracAtlas test images, FAD‑MIL outperformed two simpler approaches that either averaged all tile information or voted tile‑by‑tile, both of which tended to drown out the faint fracture signal in normal bone detail. Its ability to rank scans from least to most likely to show a break was similar to that of another advanced method that also uses attention to focus on important regions, but FAD‑MIL produced better‑calibrated scores, meaning its confidence levels were more in line with how often it was actually right. The researchers also generated colorful heatmaps that glow over regions most linked to the model’s fracture prediction, giving doctors a rough visual guide to where the algorithm “looked,” without claiming to pinpoint the exact crack.
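To make "better‑calibrated" concrete, one common way to quantify calibration is the expected calibration error (ECE), which compares average confidence with the observed fracture rate inside each confidence bin. The summary does not say which calibration measure the authors used, so the sketch below is an assumption chosen for illustration, and the scores in it are invented.

```python
def expected_calibration_error(probs, labels, n_bins=10):
    """Weighted average gap between confidence and observed positive rate."""
    bins = [[] for _ in range(n_bins)]
    for p, y in zip(probs, labels):
        idx = min(int(p * n_bins), n_bins - 1)  # p == 1.0 goes in the last bin
        bins[idx].append((p, y))

    total = len(probs)
    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(p for p, _ in members) / len(members)
        frac_pos = sum(y for _, y in members) / len(members)
        ece += len(members) / total * abs(avg_conf - frac_pos)
    return ece


# Invented example: ten scans, each scored 0.9.
well_calibrated = expected_calibration_error([0.9] * 10, [1] * 9 + [0])
overconfident = expected_calibration_error([0.9] * 10, [1] * 5 + [0] * 5)
print(well_calibrated, overconfident)
```

In the first case nine of the ten scans scored at 0.9 really are fractures, so the gap is essentially zero. In the second only half are, so the same scores are overconfident and the error is large.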

Testing the model beyond the benchmark

To see how the system behaved on real‑world hospital data, the team next applied FAD‑MIL to more than 1,300 X‑rays from patients with known wrist and ankle fractures at a single medical center. Because this group contained only people with confirmed breaks, the researchers could measure how often the model flagged these cases at different decision cutoffs, but not how often it would raise false alarms for healthy patients. As they raised the cutoff for calling a scan “fractured,” the model missed more true fractures. Many patients received high fracture scores, but a noticeable minority with subtler injuries received lower scores and would be missed if the cutoff were set too high.
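The threshold analysis on a fracture-only group amounts to computing sensitivity (the share of true fractures flagged) at each cutoff. The sketch below uses invented scores, not the study's data, and the function name is hypothetical.

```python
def sensitivity_at_thresholds(scores, thresholds):
    """Share of known-fracture scans scoring at or above each cutoff.

    Every scan here is assumed to be a true fracture, so this measures
    sensitivity only. It says nothing about false alarms, which would
    require scans from patients without fractures.
    """
    n = len(scores)
    return {t: sum(s >= t for s in scores) / n for t in thresholds}


# Invented model scores for five patients who all have fractures.
scores = [0.95, 0.90, 0.80, 0.60, 0.30]
result = sensitivity_at_thresholds(scores, [0.5, 0.7, 0.9])
for cutoff, sens in result.items():
    print(f"cutoff {cutoff}: {sens:.0%} of fractures flagged")
```

Raising the cutoff can only hold sensitivity steady or lower it, which is why a low-scoring subtle fracture is the first to be lost as the threshold climbs.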

What this work means for patients and doctors

In everyday terms, this study shows that a carefully designed AI system can learn to recognize broken bones on X‑rays using only simple image‑level labels, and it can highlight suspicious regions in a way that is easier to interpret than a black‑box yes‑or‑no answer. However, the current results come mainly from a curated public dataset and from a hospital cohort that lacked matching non‑fracture cases, so the true balance between missed fractures and false alarms in busy clinics remains unknown. Before tools like FAD‑MIL can safely support emergency doctors, they will need to be tested prospectively across many hospitals and patient groups, with careful tuning of thresholds to favor catching as many real fractures as possible while avoiding overload from false alerts.

Citation: Xue, F., Zhang, Y., Zhao, W. et al. FAD-MIL: a weakly supervised fracture detection model based on X-ray images. Sci Rep 16, 13868 (2026). https://doi.org/10.1038/s41598-026-44675-2

Keywords: fracture detection, X-ray imaging, deep learning, multiple instance learning, medical AI