Clear Sky Science

Data-adaptive pattern-coupled Bayesian compressive sensing for sparse sound field reconstruction

Listening to Sound with Fewer Microphones

Modern industries—from car makers chasing quieter cabins to engineers tracking down rattles in machines—often need a detailed picture of how sound moves through space. Getting that picture usually means placing a dense grid of microphones around an object, which is slow and expensive. This paper introduces a smarter way to “listen” that can recover rich three‑dimensional sound fields from far fewer measurements, cutting hardware and test time without sacrificing accuracy.

Why Rebuilding Sound Fields Is So Hard

Near‑field acoustic holography is a workhorse technique for turning microphone measurements near a source into a full map of the surrounding sound. In principle, if you measure densely enough, standard mathematics can reconstruct the sound everywhere. In practice, the required spacing between microphones is so small that real‑world test setups balloon into unwieldy arrays containing hundreds of sensors. That drives up cost and limits use on large or complex structures. Compressive sensing, a mathematical framework developed over the past two decades, offers a way out: if the underlying sound field can be described by only a few key building blocks, then a carefully chosen set of measurements can still reconstruct the whole scene.

Using Hidden Building Blocks of Sound

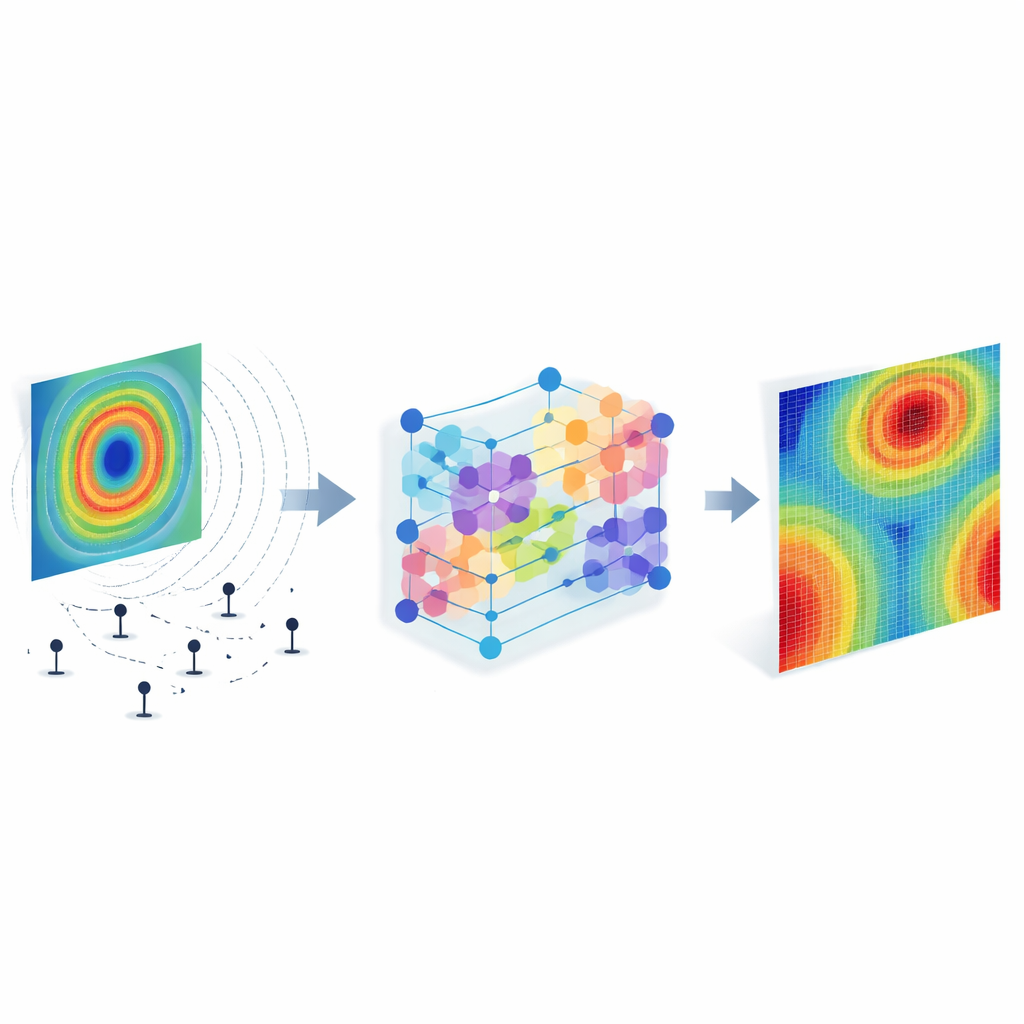

The authors build on the “equivalent source” idea, which replaces the real vibrating object with a grid of imaginary point sources whose combined effect reproduces the measured sound. By analyzing how these equivalent sources radiate, one can find a set of radiation patterns that naturally compress the problem: only a small number of patterns carry most of the energy. Expressing the sound in terms of these patterns turns the reconstruction into finding a sparse set of coefficients. Earlier Bayesian compressive sensing methods treated each coefficient independently, effectively assuming that important contributions are scattered at random. Real sound sources, however, are often spatially smooth—hot spots and quiet regions tend to occur in contiguous patches, not isolated pixels.
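As a rough illustration of this idea, the sketch below builds a transfer matrix between a hypothetical grid of equivalent sources and a plane of microphones, then uses a singular value decomposition to extract radiation patterns and count how many of them carry 99% of the energy. The geometry, the 500 Hz frequency, the free-field Green's function with an exp(-jkr) sign convention, and the choice of an SVD are all assumptions made for illustration; the paper's own basis construction may differ.

```python
import numpy as np

def greens_matrix(mics, sources, k):
    """Free-field Green's function from every source to every microphone."""
    # Pairwise distances, shape (n_mics, n_sources)
    r = np.linalg.norm(mics[:, None, :] - sources[None, :, :], axis=-1)
    return np.exp(-1j * k * r) / (4.0 * np.pi * r)

def plane_grid(n, side, z):
    """n x n grid of points on a square of the given side length at height z."""
    axis = np.linspace(-side / 2, side / 2, n)
    x, y = np.meshgrid(axis, axis)
    return np.column_stack([x.ravel(), y.ravel(), np.full(n * n, z)])

# Hypothetical setup: all values below are illustrative, not from the paper.
k = 2 * np.pi * 500.0 / 343.0          # wavenumber at 500 Hz in air
sources = plane_grid(12, 0.5, -0.02)   # 144 equivalent sources behind the plate
mics = plane_grid(8, 0.5, 0.05)        # 64 microphones in front of it

G = greens_matrix(mics, sources, k)

# Right singular vectors act as source-side radiation patterns;
# singular values say how strongly each pattern reaches the microphones.
U, s, Vh = np.linalg.svd(G, full_matrices=False)
energy = np.cumsum(s**2) / np.sum(s**2)
n_modes = int(np.searchsorted(energy, 0.99) + 1)

print(f"{n_modes} of {len(s)} patterns carry 99% of the energy")
```

If the count printed is much smaller than the number of patterns, the sound field is compressible in this basis, which is the property the reconstruction relies on.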

Letting Neighboring Points “Talk” to Each Other

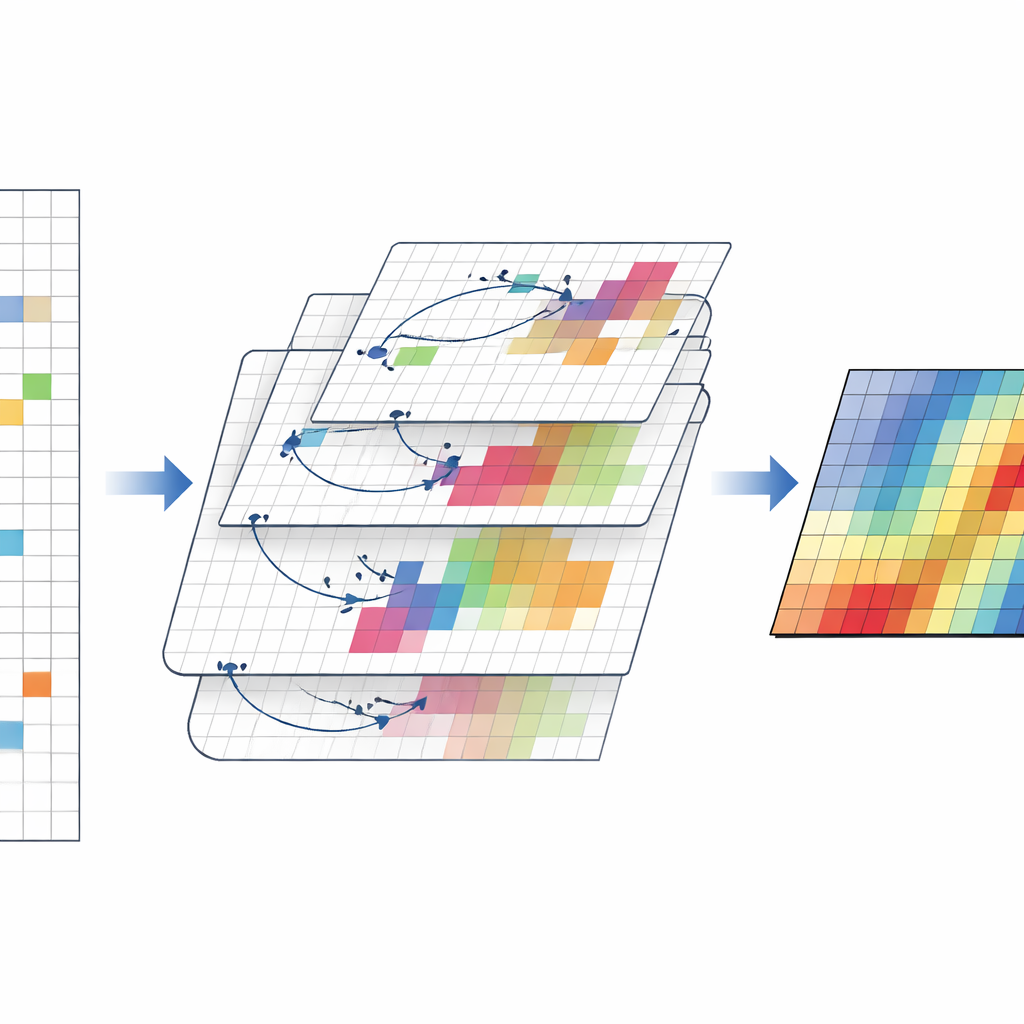

To better mirror this physical reality, the new method, called data‑adaptive pattern‑coupled Bayesian compressive sensing (DA‑PCBCS), links neighboring coefficients together. Instead of assigning each coefficient a completely separate strength control, the algorithm lets the controls influence one another through a learnable transformation. During reconstruction, the method repeatedly adjusts both the coefficients and these coupling strengths based on the measured data. Regions that genuinely radiate sound encourage one another to stay active, forming clusters of non‑zero values, while quiet areas reinforce their neighbors’ tendency to shrink toward zero. Mathematically, this behavior is encoded in a hierarchical probability model that favors block‑like patterns without needing to know in advance where those blocks are.
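The coupling idea can be sketched with a simplified, fixed-coupling version of pattern-coupled sparse Bayesian learning, in which each coefficient's strength control is blended with those of its two neighbors. This is not the authors' DA-PCBCS algorithm: the coupling weight `beta` is held constant here rather than learned from the data, the problem is real-valued and one-dimensional, the noise variance is assumed known, and the hyperparameters `a` and `b` are illustrative choices.

```python
import numpy as np

def neighbor_sum(v, beta):
    """Return v_i + beta * (v_{i-1} + v_{i+1}), with zeros beyond both ends."""
    padded = np.pad(v, 1)
    return v + beta * (padded[:-2] + padded[2:])

def pc_sbl(A, y, noise_var, beta=1.0, a=0.5, b=1e-6, n_iter=100):
    """Fixed-coupling pattern-coupled sparse Bayesian learning (sketch)."""
    n = A.shape[1]
    alpha = np.ones(n)                 # one strength control per coefficient
    AtA = A.T @ A
    Aty = A.T @ y
    for _ in range(n_iter):
        # Prior precision of each coefficient mixes in its neighbors' controls.
        d = neighbor_sum(alpha, beta)
        # Gaussian posterior of the coefficients given the current controls.
        sigma = np.linalg.inv(AtA / noise_var + np.diag(d))
        mu = sigma @ Aty / noise_var
        # Update each control from its own and its neighbors' posterior energy.
        second_moment = mu**2 + np.diag(sigma)
        omega = neighbor_sum(second_moment, beta)
        alpha = a / (0.5 * omega + b)
    return mu

# Synthetic test problem with two contiguous active blocks (illustrative only).
rng = np.random.default_rng(0)
n, m, noise_std = 80, 40, 0.01
x_true = np.zeros(n)
x_true[10:15] = rng.normal(size=5)
x_true[50:55] = rng.normal(size=5)
A = rng.normal(size=(m, n)) / np.sqrt(m)
y = A @ x_true + noise_std * rng.normal(size=m)

x_hat = pc_sbl(A, y, noise_std**2)
rel_err = np.linalg.norm(x_hat - x_true) / np.linalg.norm(x_true)
print(f"relative error: {rel_err:.3f}")
```

Setting `beta=0` removes the coupling and reduces the loop to the independent-coefficient case described earlier. A data-adaptive variant would replace the constant `beta` with coupling strengths that are re-estimated in each iteration.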

From Simulations to Lab Experiments

The team tested their approach on a vibrating steel plate, first in computer simulations and then in controlled experiments in a semi‑anechoic chamber. They compared the new method to two established Bayesian techniques that either ignore neighborhood structure or use a fixed, non‑adaptive form of coupling. Across a wide range of frequencies, the adaptive method consistently produced lower reconstruction errors, especially at medium and high frequencies where traditional methods struggled. It also maintained higher accuracy when the number of microphones was sharply reduced, and when artificial noise was added to mimic real‑world measurement conditions. In lab tests with a scanning microphone array, the new algorithm kept errors below about ten percent over the entire tested frequency band, outperforming the benchmarks while using the same limited data.
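To make terms like "reconstruction error" and "artificial noise" concrete, the sketch below shows one common way to compute a relative error in percent and to add noise at a chosen signal-to-noise ratio. Both definitions and the 20 dB example value are assumptions for illustration; the paper may define its error metric and noise levels differently.

```python
import numpy as np

def relative_error_percent(p_rec, p_ref):
    """Norm of the difference relative to the norm of the reference, in percent."""
    return 100.0 * np.linalg.norm(p_rec - p_ref) / np.linalg.norm(p_ref)

def add_noise(p, snr_db, rng):
    """Add complex Gaussian noise so the result has the requested SNR in dB."""
    signal_power = np.mean(np.abs(p) ** 2)
    noise_power = signal_power / 10 ** (snr_db / 10)
    noise = rng.normal(size=p.shape) + 1j * rng.normal(size=p.shape)
    # Real and imaginary parts each carry half of the noise power.
    return p + np.sqrt(noise_power / 2) * noise

rng = np.random.default_rng(0)
p_ref = np.exp(1j * np.linspace(0, 2 * np.pi, 1000))   # toy complex pressure
p_noisy = add_noise(p_ref, snr_db=20.0, rng=rng)

err = relative_error_percent(p_noisy, p_ref)
print(f"error of the raw noisy data: {err:.1f}%")
```

Under these definitions, noise at 20 dB SNR by itself corresponds to an error of roughly ten percent in the raw measurements, which gives a sense of scale for the error levels quoted above.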

Sharper Acoustic Vision with Less Effort

In everyday terms, this work shows how to get a clearer “picture” of sound using fewer ears. By allowing the reconstruction algorithm to learn how nearby points in a sound field tend to rise and fall together, the method extracts more information from each microphone reading. For engineers, that translates into simpler measurement setups, lower test costs, and more reliable diagnostics of noisy structures, even when data are sparse or contaminated by noise. While the current implementation is computationally demanding, future refinements could pave the way for practical, real‑time acoustic imaging tools that listen smarter rather than simply listening more.

Citation: Xiao, Y., Liu, Y., Chen, Z. et al. Data-adaptive pattern-coupled Bayesian compressive sensing for sparse sound field reconstruction. Sci Rep 16, 14551 (2026). https://doi.org/10.1038/s41598-026-44624-z

Keywords: acoustic holography, compressive sensing, sound field reconstruction, Bayesian methods, sparse sensing