Clear Sky Science

CR-MSNet: a dual-branch multi-scale attention network for multi-label chest X-ray classification

Why smarter chest X-rays matter

Chest X-rays are one of the most common medical tests in the world, used to look for a wide range of lung and heart problems in just a single snapshot. Yet reading these images is hard work, even for experienced radiologists, and a single image can hide several different diseases at once. This study introduces a new artificial intelligence model, called CR-MSNet, that is designed to read chest X-rays more like an expert: paying attention both to the big picture of the whole chest and to tiny, hard-to-spot abnormalities, while also coping with rare diseases that appear only in a few patients.

Seeing the whole chest and tiny trouble spots

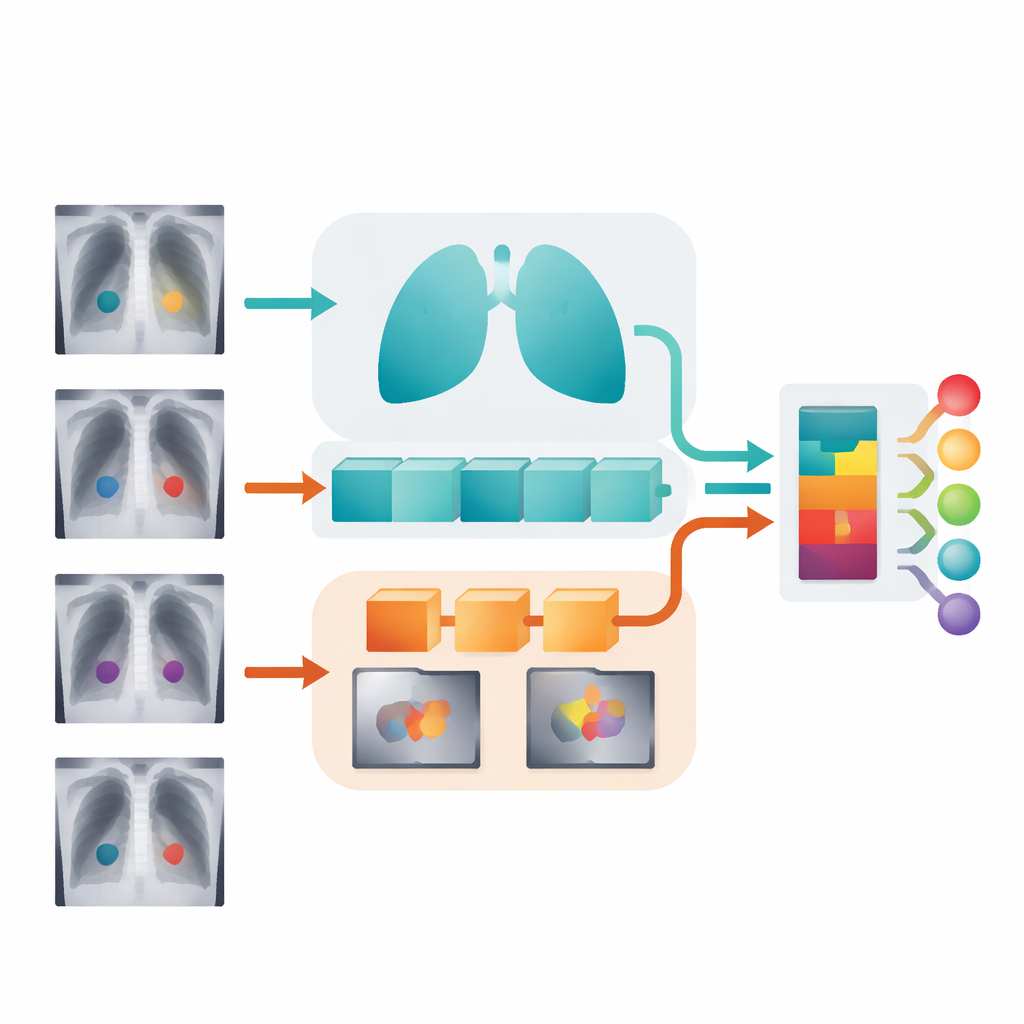

Most existing computer tools look at chest X-rays through a single processing path, which makes it difficult to capture both broad organ shapes and pinpoint-sized lesions in the same model. CR-MSNet instead uses two parallel paths. One “global” path focuses on the overall structure of the lungs and heart, learning long-range patterns that span the entire image. The second “local” path zooms in on smaller regions to pick up fine details, like small nodules or subtle thickening along the chest wall. By running these two paths side by side, the system can recognize diseases that show up as large, diffuse shadows as well as those that appear as small, sharp spots.
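To make the two-path idea concrete, here is a toy Python sketch (not the authors' code). It shows why a single whole-image summary can miss a small spot that a local summary catches. The functions `global_view` and `local_view` are hypothetical stand-ins: one is a plain average and the other a best-patch average. The real branches are learned neural networks.

```python
# Toy illustration, not CR-MSNet: a "global" summary averages the whole image,
# while a "local" summary keeps the strongest small-patch response.

def global_view(image):
    """Mean intensity over every pixel (stand-in for whole-chest context)."""
    pixels = [p for row in image for p in row]
    return sum(pixels) / len(pixels)


def local_view(image, window=2):
    """Highest mean intensity over any window x window patch (stand-in for fine detail)."""
    h, w = len(image), len(image[0])
    best = float("-inf")
    for i in range(h - window + 1):
        for j in range(w - window + 1):
            patch = [image[i + di][j + dj] for di in range(window) for dj in range(window)]
            best = max(best, sum(patch) / len(patch))
    return best


# An 8x8 uniform "background", and a copy with a small 2x2 bright spot added.
normal = [[0.2] * 8 for _ in range(8)]
with_spot = [row[:] for row in normal]
for i in (3, 4):
    for j in (5, 6):
        with_spot[i][j] = 1.0

print(global_view(normal), global_view(with_spot))
print(local_view(normal), local_view(with_spot))
```

Adding the small spot nudges the global average only from 0.2 to about 0.25. The local score, by contrast, jumps from 0.2 to 1.0. A model that keeps both kinds of summary can respond to diffuse and focal findings alike.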

Teaching the model where to look

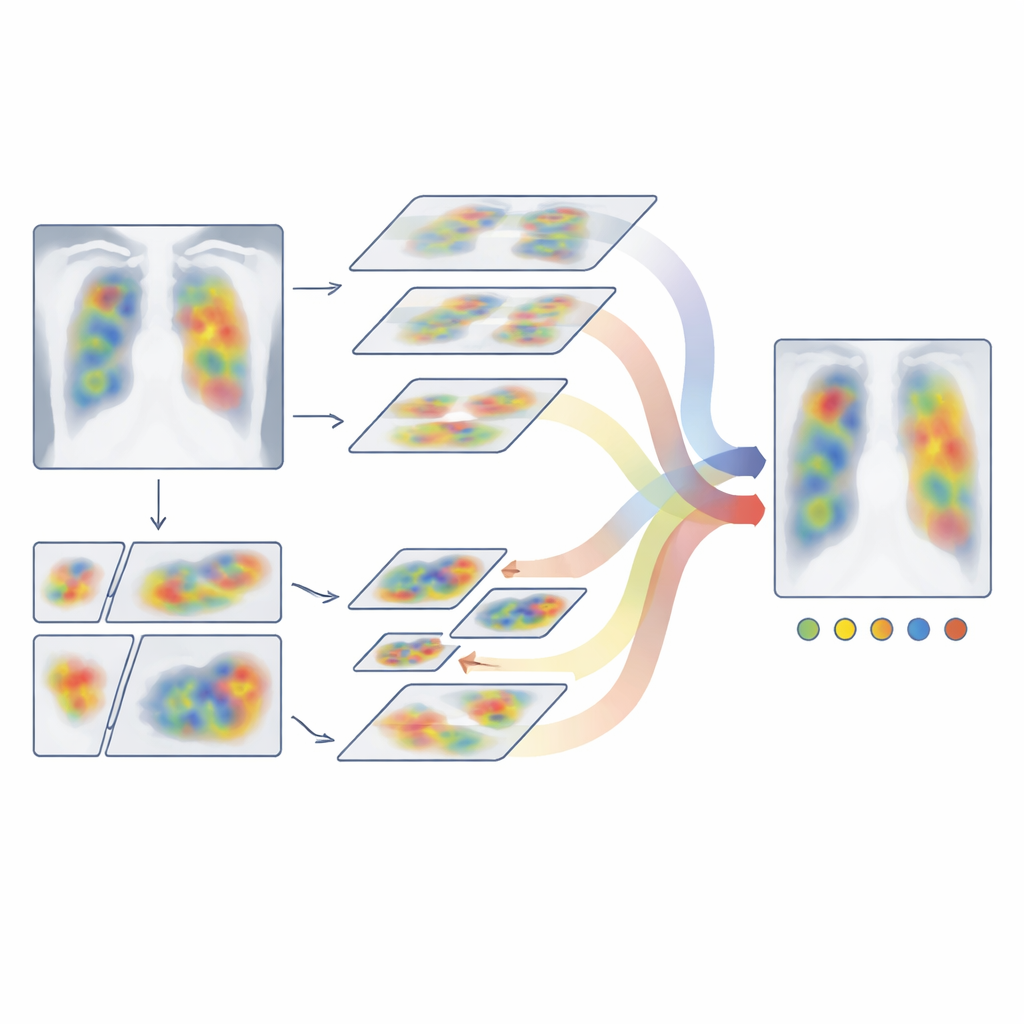

Simply having two paths is not enough; the system also needs to decide which parts of the image deserve the most attention. CR-MSNet introduces a new attention module that works in two ways at once. First, it weighs different feature “channels,” which you can think of as different ways of describing the image (such as edges, textures, and brightness patterns), and boosts those that are most useful for spotting disease. Second, it highlights important regions in space, strengthening signals in likely lesion areas while downplaying distracting structures like ribs or the heart shadow. These two kinds of focus are combined in a flexible way that preserves the original image structure, helping the model lock onto meaningful patterns across many lesion sizes.
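The "channels first, then regions" weighting can be sketched in a few lines of plain Python. This is a simplified stand-in in the spirit of widely used channel/spatial attention designs such as CBAM, not the module described in the paper. The real module learns its weights, whereas here each channel and each pixel is scored with a sigmoid of its plain average. Adding the reweighted features back to the input (a residual connection) is an assumption about how "the original image structure" is preserved.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def channel_weights(features):
    """One weight per channel, from a sigmoid of that channel's average value."""
    weights = []
    for channel in features:
        values = [v for row in channel for v in row]
        weights.append(sigmoid(sum(values) / len(values)))
    return weights


def spatial_weights(features):
    """One weight per pixel, from a sigmoid of the average across channels."""
    c, h, w = len(features), len(features[0]), len(features[0][0])
    return [
        [sigmoid(sum(features[k][i][j] for k in range(c)) / c) for j in range(w)]
        for i in range(h)
    ]


def dual_attention(features):
    """Reweight a C x H x W feature map by channel and spatial weights, then add the input back."""
    cw = channel_weights(features)
    sw = spatial_weights(features)
    return [
        [
            [x + x * cw[k] * sw[i][j] for j, x in enumerate(row)]
            for i, row in enumerate(channel)
        ]
        for k, channel in enumerate(features)
    ]


# Two 2x2 channels: one with a single strong response, one empty.
feature_map = [[[1.0, 0.0], [0.0, 0.0]], [[0.0, 0.0], [0.0, 0.0]]]
print(dual_attention(feature_map))
```

The strongest response is amplified, empty positions stay at zero, and the output keeps the same channel-by-height-by-width layout as the input.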

Blending global context with local detail

After each branch has sharpened its own view of the X-ray, CR-MSNet brings them together using a cross-attention mechanism. In simple terms, the global branch asks: “Given my understanding of the whole chest, which local details matter most?” At the same time, the local branch offers up its most informative fine-grained patterns. The cross-attention step lets these two perspectives influence each other, producing a fused representation that keeps the overall layout of the lungs and heart while enriching it with precisely localized warning signs. An adaptive gating component then decides, image by image, how much to trust the combined view versus the purely global one, which helps maintain stability when local clues are weak or noisy.
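Cross-attention followed by a gate can also be sketched in a few lines. In this hypothetical version, each global token acts as a query and takes a softmax-weighted average of the local tokens. The learned query, key and value projections of a real attention layer are omitted. The gate is a made-up rule (`gate_scale`, `gate_bias`) that leans toward the purely global view when the fused local signal is weak. The paper's actual gating function may differ.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def softmax(scores):
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attention(global_tokens, local_tokens):
    """Each global token (query) takes a softmax-weighted average of local tokens.

    Local tokens serve as both keys and values; learned projections are omitted.
    """
    d = len(global_tokens[0])
    fused = []
    for q in global_tokens:
        weights = softmax([dot(q, k) / math.sqrt(d) for k in local_tokens])
        fused.append([sum(w * v[i] for w, v in zip(weights, local_tokens)) for i in range(d)])
    return fused


def gated_fusion(global_tokens, fused_tokens, gate_scale=4.0, gate_bias=-2.0):
    """Blend fused and purely global views with one scalar gate per image.

    Hypothetical rule: the stronger the fused (local) signal, the more it is trusted.
    """
    strength = sum(math.sqrt(dot(f, f)) for f in fused_tokens) / len(fused_tokens)
    gate = 1.0 / (1.0 + math.exp(-(gate_scale * strength + gate_bias)))
    blended = [
        [gate * f + (1.0 - gate) * g for g, f in zip(g_tok, f_tok)]
        for g_tok, f_tok in zip(global_tokens, fused_tokens)
    ]
    return blended, gate


global_tokens = [[1.0, 0.0], [0.0, 1.0]]
for local_tokens in ([[0.0, 0.0]], [[3.0, 4.0]]):  # weak vs strong local evidence
    fused = cross_attention(global_tokens, local_tokens)
    blended, gate = gated_fusion(global_tokens, fused)
    print(round(gate, 3), blended)
```

With all-zero local tokens the gate sits near 0.12, so the output stays close to the global tokens. With strong local tokens the gate approaches 1 and the fused view dominates. This mirrors, in a very simplified form, the stability role the summary describes.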

Dealing fairly with common and rare diseases

Real-world chest X-ray collections are highly unbalanced: some problems, like general lung haziness, are common, while others, such as hernias seen on X-ray, are rare. Standard training methods tend to favor the common conditions and may overlook the rare ones. To combat this, the authors train CR-MSNet in two stages. First, they temporarily remove images that show no disease at all so the model can concentrate on learning what different abnormalities look like. In the second stage, they bring back the full dataset but use an adjusted loss function that gives extra weight to rare diseases and hard-to-classify examples. This staged approach helps the system remain sensitive to unusual findings without sacrificing overall accuracy.
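The two training stages can be sketched as a data filter plus a reweighted loss. The summary describes the second-stage loss only as giving extra weight to rare diseases and hard examples. A class-weighted focal loss is one common way to do both, and it is used here as an assumption. The inverse-frequency weights and the default `gamma=2.0` are also assumptions. Samples are hypothetical dicts with a multi-hot `labels` list.

```python
import math


def stage_one_subset(samples):
    """Stage 1: drop images with no positive label (the 'no finding' cases)."""
    return [s for s in samples if any(s["labels"])]


def class_weights(samples, num_classes):
    """Assumed inverse-frequency weights: rarer labels get larger weights."""
    counts = [0] * num_classes
    for s in samples:
        for c, y in enumerate(s["labels"]):
            counts[c] += y
    n = len(samples)
    return [n / (num_classes * max(count, 1)) for count in counts]


def weighted_focal_bce(probs, labels, weights, gamma=2.0, eps=1e-7):
    """Stage 2 (assumed form): per-label BCE scaled by a class weight and (1 - p_t) ** gamma."""
    total = 0.0
    for p, y, w in zip(probs, labels, weights):
        p = min(max(p, eps), 1.0 - eps)  # avoid log(0)
        p_t = p if y == 1 else 1.0 - p   # probability given to the correct answer
        total += -w * (1.0 - p_t) ** gamma * math.log(p_t)
    return total / len(labels)


toy = [{"labels": [0, 0]}, {"labels": [1, 0]}, {"labels": [1, 0]}, {"labels": [1, 1]}]
print(len(stage_one_subset(toy)))  # stage 1 keeps only images with a finding
weights = class_weights(toy, 2)    # stage 2 uses the full set, rare label weighted up
print(weights)
print(weighted_focal_bce([0.9, 0.2], [1, 1], weights))
```

In the toy set, the second label is positive in one image out of four, so it receives three times the weight of the first. The `(1 - p_t) ** gamma` factor shrinks the loss on examples the model already gets right, which leaves more of the training signal for the hard ones. Setting `gamma=0` recovers ordinary weighted binary cross-entropy.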

How well the new system performs

The researchers tested CR-MSNet on ChestX-ray14, a large public dataset containing more than 100,000 chest X-rays labeled for 14 different diseases. Under identical training and evaluation conditions, their model outperformed a range of leading deep-learning approaches, including classic convolutional networks, modern transformer-based models, and hybrids that mix the two. On average, CR-MSNet achieved a higher area under the receiver operating characteristic (ROC) curve, or AUC, than all baselines, with particularly strong gains for smaller or less common conditions such as hernia and certain masses. The model also held up reasonably well when evaluated, without retraining, on a different dataset called CheXpert, suggesting it can generalize across differences in patient populations and imaging styles.
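Because each image can carry several labels, AUC is computed separately for each disease and then averaged. The sketch below uses the rank-based definition of AUC: the chance that a randomly chosen positive image is scored higher than a randomly chosen negative one, with ties counting as half. It assumes "on average" means an unweighted mean over the labels, which is common for ChestX-ray14 but is not spelled out in this summary. The function names and toy scores are illustrative only.

```python
def roc_auc(scores, labels):
    """AUC = probability a random positive outscores a random negative (ties count 0.5)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


def mean_auc(score_matrix, label_matrix):
    """Compute one AUC per disease (column), then their unweighted mean."""
    num_labels = len(label_matrix[0])
    aucs = []
    for c in range(num_labels):
        scores = [row[c] for row in score_matrix]
        labels = [row[c] for row in label_matrix]
        aucs.append(roc_auc(scores, labels))
    return sum(aucs) / num_labels, aucs


# Three images, two diseases: disease 0 is ranked perfectly, disease 1 only half right.
scores = [[0.9, 0.2], [0.1, 0.25], [0.8, 0.3]]
labels = [[1, 0], [0, 1], [1, 0]]
print(mean_auc(scores, labels))  # (0.75, [1.0, 0.5])
```

The pairwise loop is quadratic in the number of images, which is fine for illustration. Real evaluations on datasets of this size typically use a sorting-based implementation.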

What this means for future chest X-ray reading

In everyday terms, CR-MSNet is a step toward an AI assistant that can scan a chest X-ray for many diseases at once, watch for both large and small problems, and still give due attention to rare but important conditions. By combining global and local views with targeted attention mechanisms and a careful training scheme, the model reduces some of the blind spots that hamper earlier systems. It does not replace expert radiologists, and it still struggles with some very ambiguous patterns such as pneumonia. Even so, it offers a more reliable starting point for automated triage and decision support, potentially speeding up diagnosis and helping clinicians manage large volumes of imaging studies with greater confidence.

Citation: Wang, Y., Bao, C., Wang, Z. et al. CR-MSNet: a dual-branch multi-scale attention network for multi-label chest X-ray classification. Sci Rep 16, 14585 (2026). https://doi.org/10.1038/s41598-026-44591-5

Keywords: chest X-ray AI, multi-label diagnosis, deep learning radiology, medical image attention, imbalanced medical data