Clear Sky Science

MaizeFormerX: a lightweight vision transformer with cross-scale attention for explainable maize leaf disease diagnosis

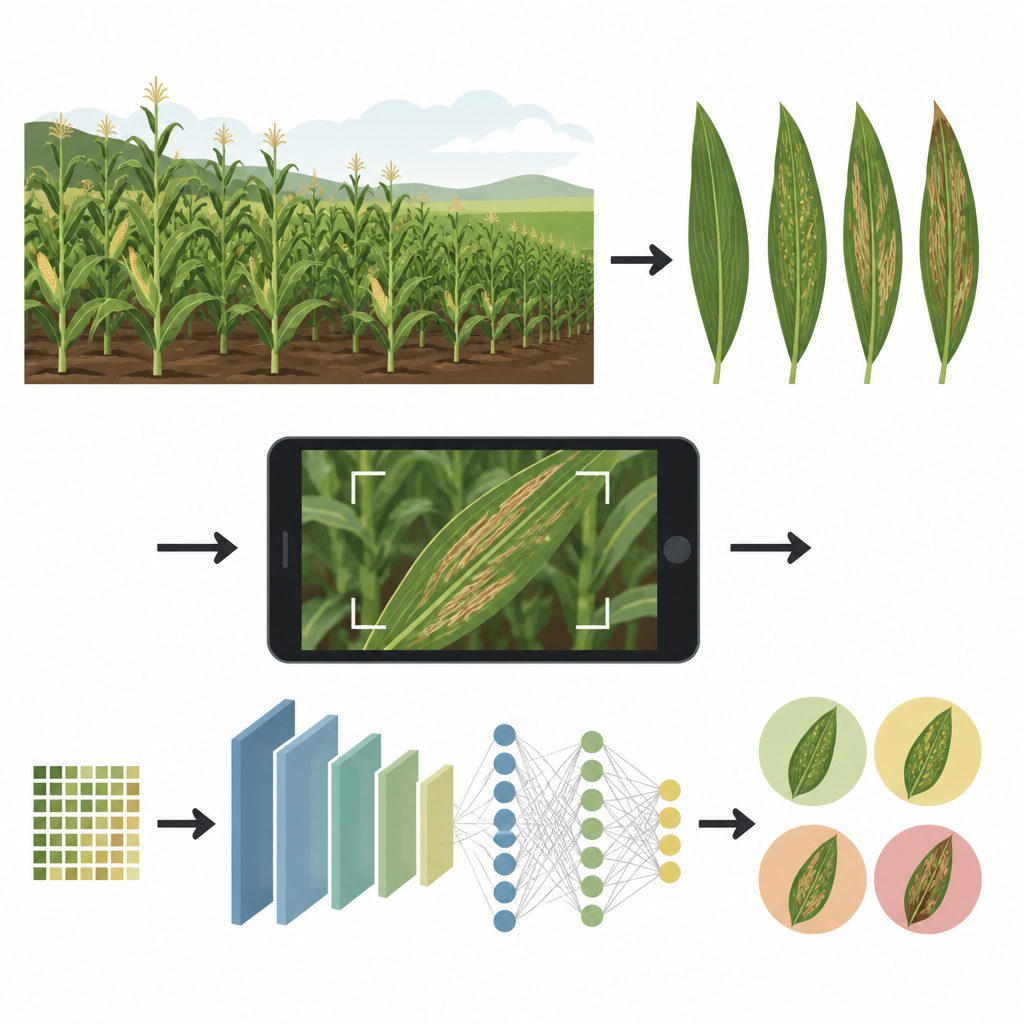

Why maize leaves and phone cameras matter

Maize is one of the world’s most important crops, feeding people and livestock and fueling industry. Yet a handful of leaf diseases can strip away a third or more of a farmer’s harvest, especially in tropical regions. This study introduces MaizeFormerX, a compact artificial intelligence system that can spot these diseases from simple photos of maize leaves, even under messy real farm conditions. The work shows how smarter image analysis could help farmers act earlier, use fewer chemicals, and protect both yields and the environment.

The growing threat on maize fields

Modern maize plants have large, sun-catching leaves that are also prime targets for infection. Diseases such as Maize Lethal Necrosis, Maize Streak Virus, and Maize Leaf Blight attack this leafy surface, causing streaks, spots, and dead tissue that can cut yields by 30 to over 80 percent. In many low- and middle-income countries, farmers do not have quick access to plant experts, and different diseases can look confusingly similar to one another or resemble simple nutrient shortages. Misreading these symptoms can waste money on the wrong treatment and allow infections to spread, endangering food security and farm income.

Why older tools fall short

Traditional diagnosis relies on human eyes and experience, which is slow and hard to scale across millions of small farms. Earlier computer models based on deep learning, especially convolutional neural networks, can be very accurate on tidy lab photos with plain backgrounds. However, they often struggle in the real world, where shadows, cluttered fields, and different cameras change how leaves appear. Most past systems were trained and tested on a single, well-controlled dataset, so they looked good on paper but did not adapt well to new regions, new lighting, or new varieties of maize. Many were also too large or power-hungry for use on low-cost phones or edge devices.

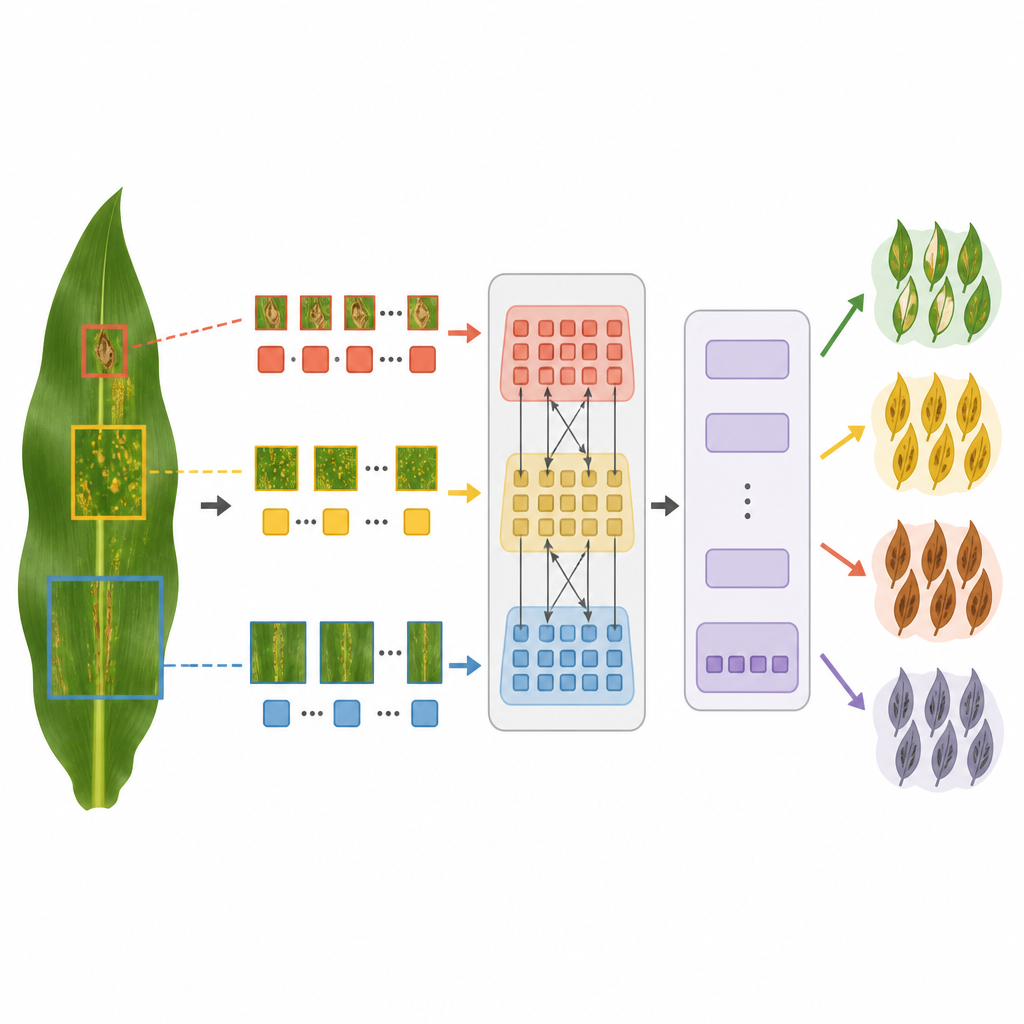

A new way of looking at diseased leaves

MaizeFormerX builds on a newer idea in computer vision called a vision transformer, which is especially good at seeing long-range patterns in images. Instead of scanning only small patches, this model breaks a leaf image into patches at several sizes at once. Fine-scale patches capture tiny lesions and speckles, while larger patches capture broad streaks and spreading patterns. A special cross-scale attention block lets detailed patches “ask” coarser patches for context, helping the system decide whether a pale streak is a harmless blemish or part of a wider disease pattern. These enriched patches then pass through a compact transformer stack that learns to tell healthy leaves from several major disease types.
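The idea of fine patches “asking” coarse patches for context can be illustrated with a small NumPy sketch. This is not the authors' implementation: the patch sizes (4 and 16 pixels), the embedding width, the function names, and the random matrices standing in for learned weights are all assumptions chosen only to show the mechanism.

```python
import numpy as np

def patchify(image, patch):
    """Split an (H, W, C) image into flattened, non-overlapping square patches."""
    h, w, c = image.shape
    gh, gw = h // patch, w // patch
    x = image[:gh * patch, :gw * patch].reshape(gh, patch, gw, patch, c)
    return x.transpose(0, 2, 1, 3, 4).reshape(gh * gw, patch * patch * c)

def softmax(x, axis=-1):
    """Numerically stable softmax."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def cross_scale_attention(fine, coarse, dim=32, seed=0):
    """Fine-patch tokens query coarse-patch tokens for context.

    Random projections stand in for learned weights (an assumption
    made for illustration only).
    """
    rng = np.random.default_rng(seed)

    def proj(n_in):
        return rng.normal(0.0, n_in ** -0.5, (n_in, dim))

    f = fine @ proj(fine.shape[1])      # embed fine patches
    c = coarse @ proj(coarse.shape[1])  # embed coarse patches
    q = f @ proj(dim)                   # queries come from fine tokens
    k = c @ proj(dim)                   # keys come from coarse tokens
    v = c @ proj(dim)                   # values come from coarse tokens
    attn = softmax(q @ k.T / np.sqrt(dim))
    # Residual connection: each fine token keeps its own detail
    # and adds a weighted mix of coarse context.
    return f + attn @ v, attn

image = np.random.default_rng(1).random((32, 32, 3))
fine_tokens = patchify(image, 4)      # 64 small patches
coarse_tokens = patchify(image, 16)   # 4 large patches
enriched, weights = cross_scale_attention(fine_tokens, coarse_tokens)
```

Each row of `weights` says how much one small patch draws on each large patch, which is the sense in which a pale streak can be interpreted against its wider surroundings.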

Testing across real farms and many microbes

To see whether MaizeFormerX would hold up outside the lab, the authors tested it on three very different collections of maize leaf images. One came from controlled lab settings, one from real Tanzanian farms, and one focused on fine-grained distinctions among many bacterial, fungal, and viral agents. The team used careful preprocessing and on-the-fly image tweaks such as flips, brightness changes, and added noise to mimic real field variation and to balance rare disease cases. Across all three datasets, MaizeFormerX matched or beat a range of strong compact models, reaching around 97 to 98 percent accuracy on the lab- and field-style sets and about 97 percent on the fine-grained microbe set. When trained on one dataset and tested directly on another, it also stayed several percentage points ahead of the best alternatives, showing stronger cross-region generalization.
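The on-the-fly tweaks described above can be sketched in a few lines of NumPy. The flip probability, brightness range, and noise level below are illustrative assumptions, not values from the study, and oversampling rare classes is only one plausible way to balance them; the article does not say which method the authors used.

```python
import numpy as np

def augment(image, rng):
    """Randomly flip, rescale brightness, and add noise to a float image in [0, 1]."""
    out = image.copy()
    if rng.random() < 0.5:
        out = out[:, ::-1]                        # horizontal flip
    out = out * rng.uniform(0.8, 1.2)             # brightness change (assumed range)
    out = out + rng.normal(0.0, 0.02, out.shape)  # sensor-like noise (assumed level)
    return np.clip(out, 0.0, 1.0)

def balanced_indices(labels, rng):
    """Oversample rarer classes so every class appears equally often."""
    labels = np.asarray(labels)
    classes, counts = np.unique(labels, return_counts=True)
    target = counts.max()
    picks = [
        rng.choice(np.flatnonzero(labels == c), size=target, replace=True)
        for c in classes
    ]
    return rng.permutation(np.concatenate(picks))

rng = np.random.default_rng(0)
leaf = rng.random((64, 64, 3))
augmented = augment(leaf, rng)

labels = [0] * 10 + [1] * 3 + [2] * 1   # toy, imbalanced class labels
sample = balanced_indices(labels, rng)
sampled_counts = np.bincount(np.asarray(labels)[sample])
```

Because each repeated draw of a rare image passes through `augment` again, the model would see a slightly different version every time rather than an exact copy.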

Seeing what the model sees

High accuracy alone is not enough when farmers and advisors must trust a digital tool. The authors therefore linked MaizeFormerX to a simple web application that not only reports a predicted class but also overlays a heatmap on the leaf photo highlighting the regions that drove the decision. These visual explanations show that the model focuses on lesions, streaks, and discolored bands rather than on soil, sky, or other background clutter. In misclassified cases, the maps reveal where overlapping symptoms or harsh lighting confuse the model, guiding future improvements. This emphasis on human-understandable outputs makes the system more suitable as a support tool than as a black box.
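A heatmap overlay of this kind can be approximated as follows. The sketch assumes a coarse per-patch relevance grid is already available from some explanation method; the article does not specify which method the web application uses, and the function name, red tint, and blending strength are illustrative choices.

```python
import numpy as np

def overlay_heatmap(image, relevance, alpha=0.5):
    """Blend a coarse relevance grid onto a float RGB image in [0, 1].

    `relevance` is a hypothetical (grid_h, grid_w) array of per-patch
    importance scores; how it is computed is outside this sketch.
    """
    h, w, _ = image.shape
    gh, gw = relevance.shape
    # Nearest-neighbour upsampling of the grid to image resolution.
    rows = np.arange(h) * gh // h
    cols = np.arange(w) * gw // w
    heat = relevance[rows][:, cols].astype(float)
    # Normalise to [0, 1] so the most relevant region gets the full tint.
    span = heat.max() - heat.min()
    heat = (heat - heat.min()) / span if span > 0 else np.zeros_like(heat)
    red = np.zeros_like(image, dtype=float)
    red[..., 0] = 1.0
    weight = alpha * heat[..., None]
    return (1.0 - weight) * image + weight * red

photo = np.full((4, 4, 3), 0.2)
scores = np.array([[0.0, 1.0],
                   [0.0, 0.0]])   # only the top-right patch matters
shown = overlay_heatmap(photo, scores)
```

Pixels under low-relevance patches keep their original colour, while those under the highest-scoring patch are tinted red, so a reader can check at a glance whether the highlighted area is a lesion or background.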

What this means for future maize care

In plain terms, the study shows that a lean, carefully designed AI can reliably tell healthy maize leaves from several major diseases, using ordinary images and working across different regions. By blending multi-scale views of each leaf with cross-scale attention, MaizeFormerX captures both small spots and large patterns without needing heavy computing power. Combined with an explanation-friendly web interface, it points toward future phone- or edge-based tools that could help farmers and extension workers catch problems earlier, apply treatments more precisely, and cut unnecessary pesticide use, supporting more sustainable maize production.

Citation: Rahman, M.M., Gony, M.N., Ullah, M.S. et al. MaizeFormerX: a lightweight vision transformer with cross-scale attention for explainable maize leaf disease diagnosis. Sci Rep 16, 15160 (2026). https://doi.org/10.1038/s41598-026-44550-0

Keywords: maize disease, plant health, vision transformer, precision agriculture, explainable AI