Clear Sky Science

An early detection framework for young Chinese learners at risk of reading difficulty using fNIRS and deep learning

Why spotting reading struggles early matters

Learning to read is one of the most important skills children gain in primary school, yet many struggle in ways that are hard to see from classroom tests alone. By the time reading problems are obvious, the best window for helping may already have passed. This study introduces a new way to detect which young Chinese learners are at risk of reading difficulty: recording how their brains respond during simple tasks and using deep-learning models to interpret those patterns.

A closer look at hidden reading challenges

Reading difficulty, often called developmental dyslexia, affects a sizable share of children around the world. These children have persistent trouble recognizing words, reading fluently, and understanding what they read, even when they have normal intelligence and schooling. In Chinese, a language with a character-based writing system, the proportion of children with clear-cut dyslexia is estimated at 4–7%, but teachers report many more children who hover just below grade expectations. They may not meet the strict medical definition of dyslexia, yet they lag behind in recognizing and writing the characters required by the national curriculum. Identifying this broader “at-risk” group early is crucial so support can start before frustration and failure take root.

Listening to the brain without surgery

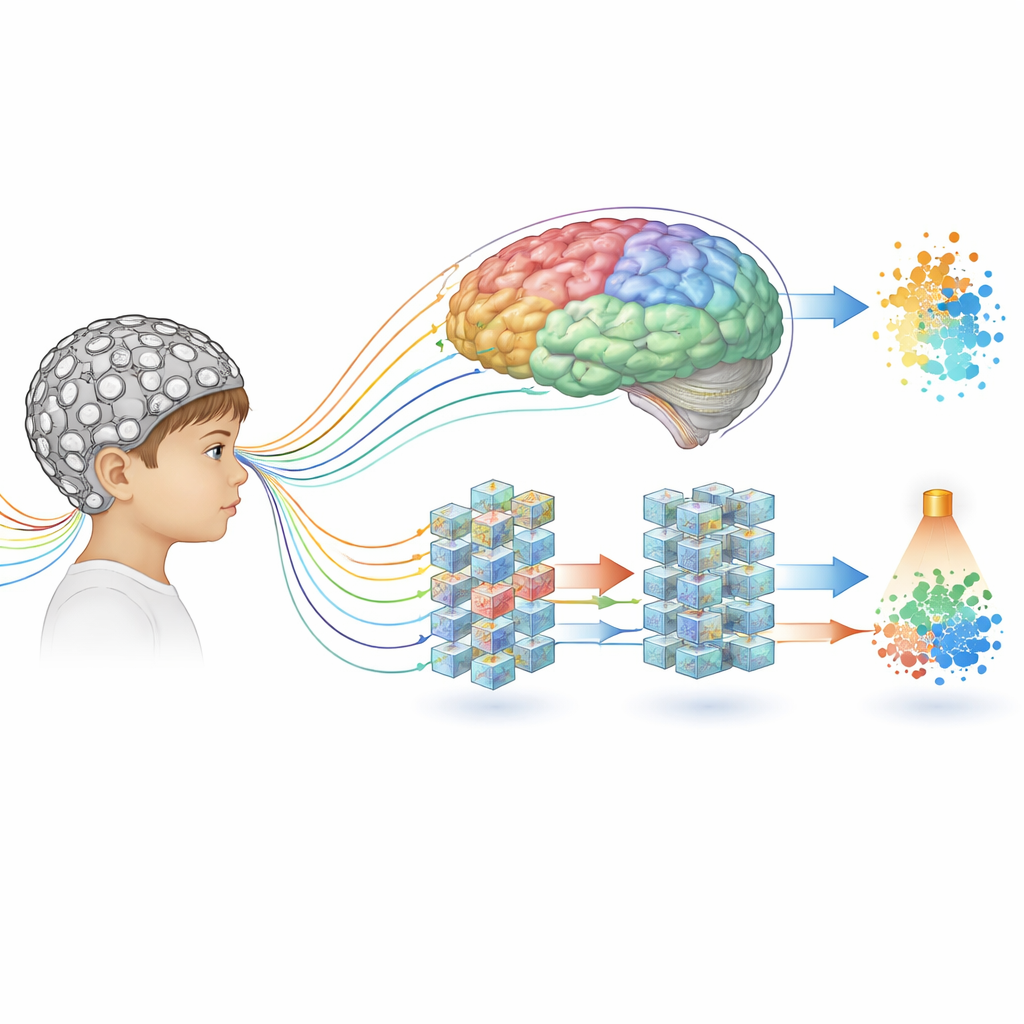

The researchers turned to functional near-infrared spectroscopy (fNIRS), a non-invasive method that uses light to track changes in blood oxygen in the brain. Children wear a comfortable cap studded with light sources and detectors, and the device measures how active different regions of the cortex are while they work. In this study, 30 second-grade students (16 flagged by teachers as having marginal reading problems and 14 with typical reading) completed two kinds of tasks while wearing the cap. One was a visual test in which they had to recognize and pick out Chinese characters and English words that looked similar to each other. The other was an auditory test that asked them to decide whether pairs of spoken sounds were the same or different. In addition, all 150 children in the grade completed a paper-and-pencil test of writing pinyin (sound-based notation) for Chinese characters, which confirmed that the at-risk group did perform worse on language basics.

Teaching a smart model to read brain patterns

Raw fNIRS data are messy: they are long time series showing tiny changes in blood oxygen across many locations on the head. Traditional statistical tools struggle with such complex signals. The team built a new deep-learning model, called the RD-risk Classifier (RDr-C), designed specifically to handle this kind of data. First, a graph-based module looks at how signals from neighboring sensors relate in space, mimicking the brain’s network structure. Next, a bidirectional time-series module tracks how activity unfolds over hundreds of time points, both forward and backward in time. Finally, an attention module learns which moments in the signal carry the most useful clues. Together, these pieces form a pipeline that can automatically discover patterns that distinguish at-risk children from their peers, without hand-crafted rules.
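To make the three stages described above more concrete, here is a deliberately tiny sketch in plain Python. It is not the authors' RDr-C model. Each stage is replaced by a simple stand-in chosen for illustration: neighbour averaging for the graph module, forward and backward exponential smoothing for the bidirectional time-series module, and softmax-weighted pooling for the attention module. The function names, the toy recording, the sensor adjacency, and the fixed `query` vector are all assumptions; a trained model would learn its weights from data.

```python
import math

def neighbour_average(frame, adjacency):
    """Graph-style step: mix each channel with its listed neighbours."""
    mixed = []
    for ch, value in enumerate(frame):
        group = [value] + [frame[n] for n in adjacency[ch]]
        mixed.append(sum(group) / len(group))
    return mixed

def smooth(sequence, alpha=0.5):
    """Running exponential average over a list of vectors, in one direction."""
    state = list(sequence[0])
    out = [list(state)]
    for vec in sequence[1:]:
        state = [alpha * v + (1 - alpha) * s for v, s in zip(vec, state)]
        out.append(list(state))
    return out

def bidirectional(sequence, alpha=0.5):
    """Concatenate a forward pass and a backward pass at every time step."""
    fwd = smooth(sequence, alpha)
    bwd = smooth(sequence[::-1], alpha)[::-1]
    return [f + b for f, b in zip(fwd, bwd)]

def attention_pool(states, query):
    """Softmax-weight the time steps by their score against `query`, then sum."""
    scores = [sum(q * s for q, s in zip(query, st)) for st in states]
    peak = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(sc - peak) for sc in scores]
    total = sum(exps)
    weights = [e / total for e in exps]
    width = len(states[0])
    pooled = [sum(w * st[i] for w, st in zip(weights, states)) for i in range(width)]
    return pooled, weights

# Made-up recording: 4 time steps x 3 channels (not real fNIRS data).
recording = [
    [0.0, 0.1, 0.0],
    [0.2, 0.9, 0.1],
    [0.1, 0.3, 0.0],
    [0.0, 0.1, 0.0],
]
# Hypothetical sensor layout: channel 1 sits between channels 0 and 2.
adjacency = {0: [1], 1: [0, 2], 2: [1]}

spatial = [neighbour_average(frame, adjacency) for frame in recording]
temporal = bidirectional(spatial)
query = [1.0] * len(temporal[0])  # fixed here; a real model would learn it
features, weights = attention_pool(temporal, query)
```

In this toy run the attention weights sum to one and are largest at the second time step, where the made-up signal peaks. The pooled `features` vector is what a final classification layer would receive.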

Remarkably accurate early warning signals

When the model was trained and tested repeatedly on different splits of the data, it separated at-risk and typical children with accuracies of around 99–100% for both the visual and auditory tasks. Even under a tougher test, in which the model was trained on all but one child and then asked to classify the left-out child, it still achieved close to 90% accuracy. Competing models, including standard neural networks and two specialized fNIRS classifiers, did noticeably worse. Visualizations of the features learned by the model showed two tight, well-separated clusters for the two groups, suggesting that the brain signals of at-risk children really do carry a distinctive signature, even though their behavior on the tasks was not dramatically different from that of their classmates.
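The tougher test described above, holding out one child at a time, can be sketched as follows. This is a minimal illustration under stated assumptions, not the study's evaluation code: the subject labels, feature values, and the nearest-centroid classifier are all made up and stand in for the real recordings and the deep-learning model. The point is that every trial from the held-out child is kept out of training, so the model cannot succeed simply by recognizing an individual child.

```python
def leave_one_subject_out(subject_ids):
    """Yield (held_out, train_idx, test_idx), holding out one child per fold."""
    for held_out in sorted(set(subject_ids)):
        train = [i for i, s in enumerate(subject_ids) if s != held_out]
        test = [i for i, s in enumerate(subject_ids) if s == held_out]
        yield held_out, train, test

def centroid(rows):
    """Mean feature vector of a list of rows."""
    return [sum(col) / len(rows) for col in zip(*rows)]

def nearest_centroid_predict(train_x, train_y, x):
    """Stand-in classifier: pick the class whose mean vector is closest to x."""
    best_label, best_dist = None, float("inf")
    for label in sorted(set(train_y)):
        c = centroid([r for r, lab in zip(train_x, train_y) if lab == label])
        dist = sum((a - b) ** 2 for a, b in zip(x, c))
        if dist < best_dist:
            best_label, best_dist = label, dist
    return best_label

# Made-up data: 4 children with 2 trials each (0 = typical, 1 = at risk).
subjects = ["c1", "c1", "c2", "c2", "c3", "c3", "c4", "c4"]
X = [[0.1, 0.2], [0.2, 0.1], [0.0, 0.3], [0.1, 0.1],
     [0.9, 0.8], [0.8, 1.0], [1.0, 0.9], [0.7, 0.9]]
y = [0, 0, 0, 0, 1, 1, 1, 1]

folds = list(leave_one_subject_out(subjects))
correct = 0
for held_out, train, test in folds:
    train_x = [X[i] for i in train]
    train_y = [y[i] for i in train]
    for i in test:
        correct += nearest_centroid_predict(train_x, train_y, X[i]) == y[i]

accuracy = correct / len(X)
```

Splitting by trial instead of by child would let trials from the same child land in both the training and test sets, which tends to inflate accuracy. That is one plausible reason a leave-one-child-out score comes out lower than a score from random splits.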

What the brain reveals about subtle reading risks

To probe what the model had learned, the researchers systematically shuffled the data from different brain regions in turn and measured how much the predictions deteriorated. This highlighted a particular area associated with fine finger movements as especially important. Detailed time–frequency analysis showed that, during visually demanding tasks, typical children displayed orderly, rhythmic patterns in this region, consistent with smooth sensorimotor control when pointing or pressing keys. In contrast, at-risk children showed more irregular activity, hinting at a context-dependent coordination problem rather than a general motor deficit. Notably, this difference was much weaker in the auditory task, reinforcing the idea that certain brain coordination issues emerge mainly under visually heavy reading-like demands.
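The shuffling procedure described above is commonly known as permutation importance. The sketch below shows the general idea on made-up data and should not be read as the authors' analysis: each column stands in for one brain region, `toy_model` is a hypothetical classifier that only looks at region 0, and the repeat count and random seed are arbitrary choices.

```python
import random

def accuracy(model, X, y):
    """Fraction of rows the model labels correctly."""
    return sum(model(row) == label for row, label in zip(X, y)) / len(y)

def permutation_importance(model, X, y, n_repeats=20, seed=0):
    """Average accuracy drop when one column at a time is shuffled across rows."""
    rng = random.Random(seed)
    baseline = accuracy(model, X, y)
    drops = []
    for col in range(len(X[0])):
        total = 0.0
        for _ in range(n_repeats):
            shuffled = [row[col] for row in X]
            rng.shuffle(shuffled)  # break the link between this region and the labels
            X_perm = [row[:col] + [v] + row[col + 1:]
                      for row, v in zip(X, shuffled)]
            total += baseline - accuracy(model, X_perm, y)
        drops.append(total / n_repeats)
    return drops

def toy_model(row):
    """Hypothetical classifier that relies only on region 0."""
    return int(row[0] > 0.5)

# Made-up data: region 0 separates the groups, region 1 is unrelated noise.
X = [[0.0, float(i % 3)] for i in range(10)] + \
    [[1.0, float(i % 3)] for i in range(10)]
y = [0] * 10 + [1] * 10

drops = permutation_importance(toy_model, X, y)
```

Shuffling region 1 leaves the predictions unchanged because the toy model never reads it, while shuffling region 0 lowers accuracy. A large drop for one region is the kind of signal that would mark it as important to the model's decisions.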

Towards brain-informed help in the classroom

In plain terms, this work suggests that some young children who appear only slightly behind in reading may already show clear, measurable differences in how their brains coordinate vision, movement, and attention during reading-related tasks. By combining safe brain imaging with a tailored deep-learning model, the researchers created a highly accurate early warning tool that could, in the future, help teachers and clinicians flag at-risk students years earlier than current methods. The study is still small, and the system needs to be tested in larger, more diverse groups, possibly in combination with other measures such as handwriting or eye tracking. Even so, it points toward a future in which subtle learning risks are detected not just by watching test scores, but by quietly listening to the brain at work.

Citation: Yang, P., Duan, Y., Wang, L. et al. An early detection framework for young Chinese learners at risk of reading difficulty using fNIRS and deep learning. Sci Rep 16, 14104 (2026). https://doi.org/10.1038/s41598-026-44379-7

Keywords: reading difficulty, dyslexia, brain imaging, deep learning, child literacy