Clear Sky Science

Enhanced swin transformer with dual attention for knee osteoarthritis severity grading from X-ray images

Why sore knees matter

Knee pain is more than just an annoyance; it is one of the leading causes of disability worldwide, especially as people age. Doctors rely heavily on X‑ray images to decide whether someone’s knee osteoarthritis is mild and manageable, or severe enough to consider surgery. But reading these images is time‑consuming, can miss early damage, and different experts do not always agree. This study presents a new artificial‑intelligence (AI) system that aims to read knee X‑rays quickly and very accurately, helping clinicians catch joint damage earlier and guide treatment more consistently.

A smarter way to read knee X‑rays

Osteoarthritis gradually wears away the smooth cartilage that cushions the knee, causing pain, stiffness, and loss of mobility. On an X‑ray, doctors look for clues such as narrowing of the space between bones and small bony bumps called osteophytes. These changes are summarized using a five‑level score known as the Kellgren–Lawrence (KL) grade, from 0 (healthy) to 4 (severe). Traditional computer programs based on convolutional neural networks (CNNs) have helped automate this grading, but they struggle to capture subtle patterns across the whole image and often demand substantial computing power and long training times. The authors of this paper set out to design a system that is not only more accurate but also lighter and faster, so it could realistically be used in busy clinics, including those with limited resources.

How the new AI system works

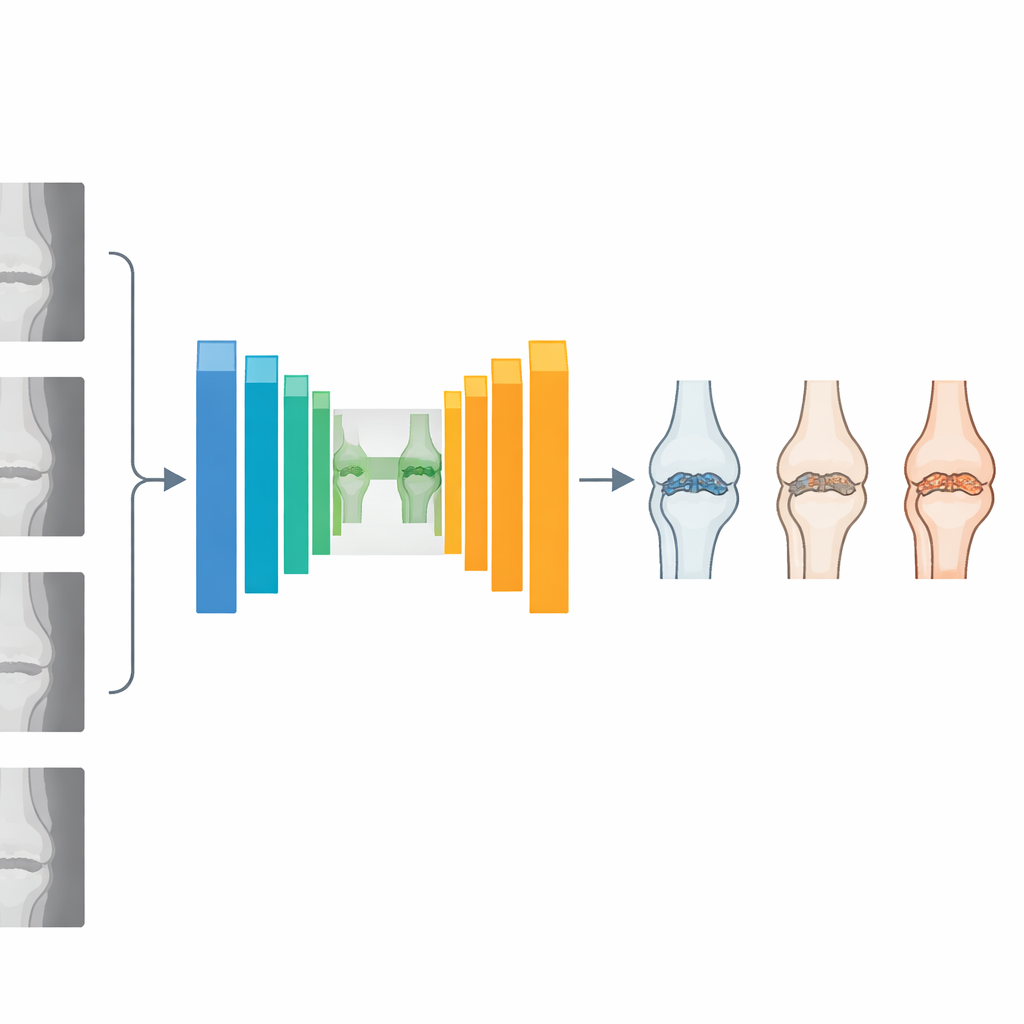

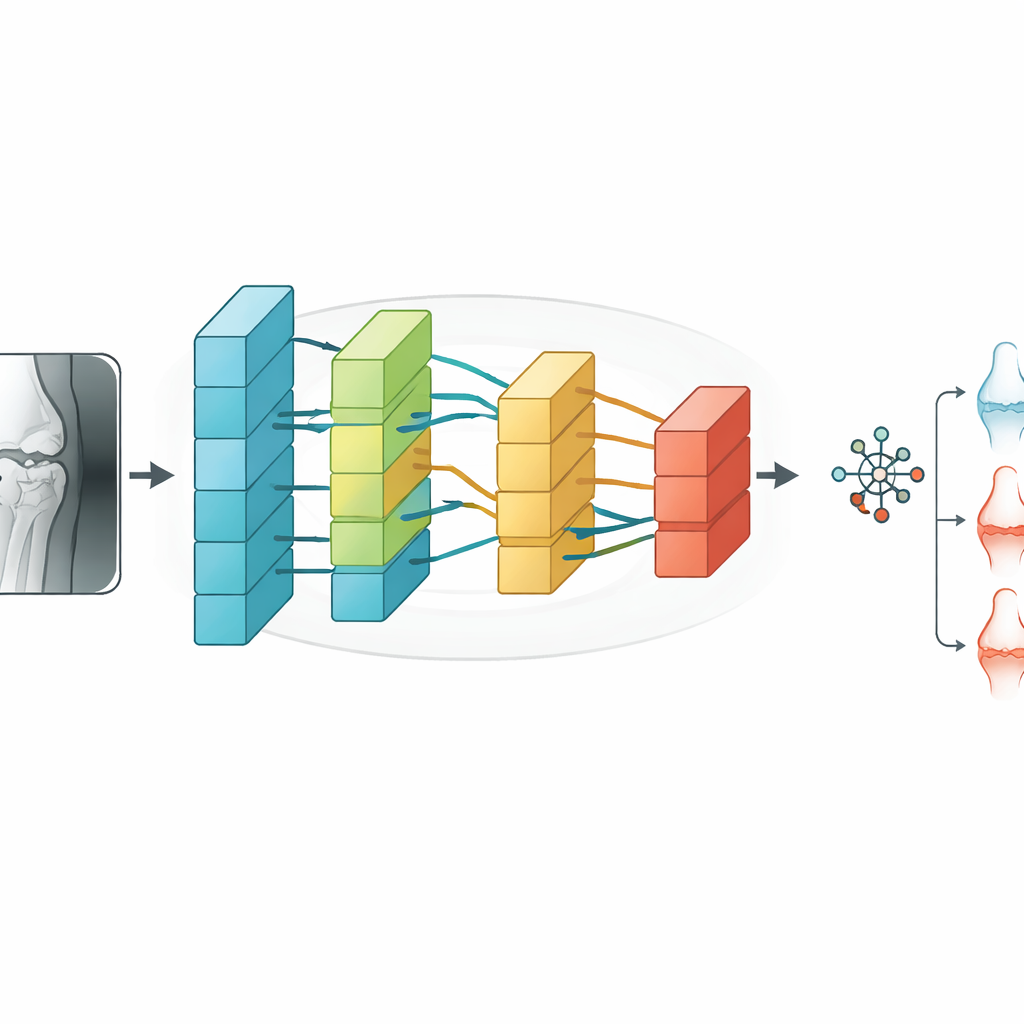

The researchers created a hybrid model called Swin‑O‑NETS that combines two ideas: an advanced image reader known as a Swin Transformer and a fast, lightweight classifier called a Fast Extreme Learning Network. First, X‑ray images from a large public database—the Osteoarthritis Initiative—are cleaned and enhanced to remove noise and improve contrast. The images are then split into small patches and passed through a U‑shaped network that segments and analyzes the knee region. Inside this network, a modified Swin Transformer looks at the image at multiple scales, from fine details at the joint surface to broader structural patterns across the whole knee.
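For readers curious what "split into small patches" looks like in practice, here is a minimal sketch using NumPy. It is an illustration only, not the authors' code: the helper name `split_into_patches`, the patch size of 4, and the tiny 8×8 stand-in image are all assumptions, since this summary does not state the patch size or image resolution used in the study.

```python
import numpy as np


def split_into_patches(image: np.ndarray, patch_size: int) -> np.ndarray:
    """Split a 2-D grayscale image into non-overlapping square patches.

    Returns an array of shape (num_patches, patch_size * patch_size),
    one flattened patch per row, ordered left to right, top to bottom.
    """
    height, width = image.shape
    if height % patch_size or width % patch_size:
        raise ValueError("image sides must be multiples of patch_size")
    rows, cols = height // patch_size, width // patch_size
    # (rows, patch, cols, patch) -> (rows, cols, patch, patch)
    grid = image.reshape(rows, patch_size, cols, patch_size).swapaxes(1, 2)
    return grid.reshape(rows * cols, patch_size * patch_size)


# Hypothetical stand-in for a preprocessed X-ray: an 8x8 array holding 0..63.
xray = np.arange(64, dtype=np.float32).reshape(8, 8)
patches = split_into_patches(xray, patch_size=4)
print(patches.shape)
```

Each row of `patches` is one small tile of the image. A transformer treats these tiles much like words in a sentence, which is what lets it relate a detail in one part of the knee to structure elsewhere.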

Paying attention to the right details

A key innovation is the use of multi‑headed channel self‑attention, a mechanism that helps the AI decide which image features matter most. Instead of treating all parts of the X‑ray equally, the model learns to focus on channels that carry information about joint space narrowing, bone edges, and early bony growths, while downplaying less informative background regions. Multiple attention “heads” look at the data in parallel and then combine their findings, enriching the overall description of the knee. These refined features are fed into the Fast Extreme Learning Network, which performs the final step of assigning the X‑ray to one of the five KL grades. Because this classifier can compute its internal weights in a single mathematical step rather than through many slow training cycles, the whole system remains efficient despite its sophistication.
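The "single mathematical step" can be made concrete with a small sketch of a standard extreme learning machine. This is a generic textbook version, assumed here for illustration; the authors' Fast Extreme Learning Network may differ in its details. The class name, the ridge term, the hidden-layer size, and the synthetic five-class data are all assumptions and are not taken from the paper.

```python
import numpy as np


class ExtremeLearningMachine:
    """Single-hidden-layer classifier whose hidden weights stay random."""

    def __init__(self, n_hidden, n_classes, ridge=1e-3, seed=0):
        self.n_hidden = n_hidden
        self.n_classes = n_classes
        self.ridge = ridge  # small regularizer (an assumption of this sketch)
        self.rng = np.random.default_rng(seed)

    def _hidden(self, X):
        return np.tanh(X @ self.W + self.b)

    def fit(self, X, y):
        # Hidden weights are drawn once and never trained.
        self.W = self.rng.normal(size=(X.shape[1], self.n_hidden))
        self.b = self.rng.normal(size=self.n_hidden)
        H = self._hidden(X)
        T = np.eye(self.n_classes)[y]  # one-hot targets
        # Output weights come from one regularized least-squares solve:
        # beta = (H^T H + ridge * I)^-1 H^T T
        A = H.T @ H + self.ridge * np.eye(self.n_hidden)
        self.beta = np.linalg.solve(A, H.T @ T)
        return self

    def predict(self, X):
        return np.argmax(self._hidden(X) @ self.beta, axis=1)


# Synthetic stand-in for attention-refined features: 5 tight clusters,
# one per KL grade. These are made-up numbers, not real X-ray features.
data_rng = np.random.default_rng(42)
centers = data_rng.normal(scale=3.0, size=(5, 8))


def sample(n_per_class):
    y = np.repeat(np.arange(5), n_per_class)
    X = centers[y] + data_rng.normal(scale=0.05, size=(y.size, 8))
    return X, y


X_train, y_train = sample(40)
X_test, y_test = sample(10)
elm = ExtremeLearningMachine(n_hidden=64, n_classes=5).fit(X_train, y_train)
accuracy = float(np.mean(elm.predict(X_test) == y_test))
print(f"held-out accuracy on synthetic data: {accuracy:.2f}")
```

Note that `fit` contains no training loop. The only learned quantity, `beta`, comes from a single linear solve, which is why this family of classifiers trains so quickly.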

Putting the system to the test

To see how well Swin‑O‑NETS performs, the authors trained and tested it on 2,047 labeled knee X‑rays, carefully balancing the different severity grades and using data‑augmentation tricks such as rotation and scaling to avoid overfitting. They compared their model with popular deep‑learning architectures including standard CNNs, VGG‑19, ResNet, DenseNet, and several ensemble and attention‑enhanced variants. Across all five KL grades—ranging from healthy to severely damaged—Swin‑O‑NETS consistently delivered the highest scores. It reached about 99.5% overall accuracy, with similarly high precision, recall, and F1‑scores, and an area under the ROC curve of 0.9838, indicating excellent ability to distinguish between severity levels. At the same time, it required less computation and training time than many transformer‑based competitors.
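To show how scores such as accuracy, precision, recall, and F1 are derived, the sketch below computes them from a confusion matrix for five grades. The matrix `toy` is invented purely for illustration and does not reproduce the paper's results; the function names are likewise hypothetical.

```python
def per_class_metrics(confusion):
    """Precision, recall and F1 per class from a square confusion matrix.

    confusion[i][j] counts samples whose true class is i and whose
    predicted class is j.
    """
    n = len(confusion)
    metrics = []
    for k in range(n):
        true_positive = confusion[k][k]
        predicted_k = sum(confusion[i][k] for i in range(n))  # column sum
        actual_k = sum(confusion[k])                          # row sum
        precision = true_positive / predicted_k if predicted_k else 0.0
        recall = true_positive / actual_k if actual_k else 0.0
        denom = precision + recall
        f1 = 2 * precision * recall / denom if denom else 0.0
        metrics.append({"precision": precision, "recall": recall, "f1": f1})
    return metrics


def overall_accuracy(confusion):
    """Fraction of all samples that lie on the diagonal."""
    total = sum(sum(row) for row in confusion)
    correct = sum(confusion[k][k] for k in range(len(confusion)))
    return correct / total


# Made-up counts for KL grades 0-4 (20 images per grade); NOT the study's data.
toy = [
    [18, 2, 0, 0, 0],
    [1, 17, 2, 0, 0],
    [0, 1, 19, 0, 0],
    [0, 0, 1, 19, 0],
    [0, 0, 0, 0, 20],
]
metrics = per_class_metrics(toy)
print(f"accuracy: {overall_accuracy(toy):.2f}")
for grade, m in enumerate(metrics):
    print(f"KL {grade}: precision={m['precision']:.3f} "
          f"recall={m['recall']:.3f} f1={m['f1']:.3f}")
```

In this toy matrix most errors fall between neighboring grades, such as 0 versus 1. Confusing adjacent grades is a less serious mistake than confusing distant ones, which is one reason per-grade scores are worth reading alongside overall accuracy.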

What this could mean for patients

In simple terms, this work shows that a carefully designed AI system can grade knee osteoarthritis on X‑rays with about 99.5% accuracy on the study’s test data while remaining practical to run. By spotting early joint changes that the human eye might miss and doing so quickly and consistently, Swin‑O‑NETS could support earlier lifestyle or medical interventions, delay the need for joint replacement, and help standardize care across hospitals. The authors note that real‑world deployment will require further testing on larger, multi‑center datasets and the development of even lighter versions suitable for real‑time use. Still, their results suggest that intelligent image readers like this could soon become routine companions to radiologists, quietly improving the odds for millions of people living with sore, fragile knees.

Citation: Sudha, K., Rajiv Kannan, A. Enhanced swin transformer with dual attention for knee osteoarthritis severity grading from X-ray images. Sci Rep 16, 10617 (2026). https://doi.org/10.1038/s41598-026-44174-4

Keywords: knee osteoarthritis, X-ray imaging, deep learning, transformer networks, medical image classification