Clear Sky Science

A logic-based knowledge-driven bidirectional multi-attention GRU framework for fear level classification in humans

Why Reading Fear in the Body Matters

Fear is more than a feeling in the pit of your stomach—it reshapes your heartbeat, your sweat, and even your brain waves. If computers could reliably read those subtle changes, therapists might track anxiety in real time, cars and robots could react when people panic, and virtual reality could adapt to keep users safe. This study explores how to teach machines to recognize different levels of fear in people by combining signals from the brain and body with a kind of structured, rule-based reasoning, aiming for systems that are both accurate and understandable to humans.

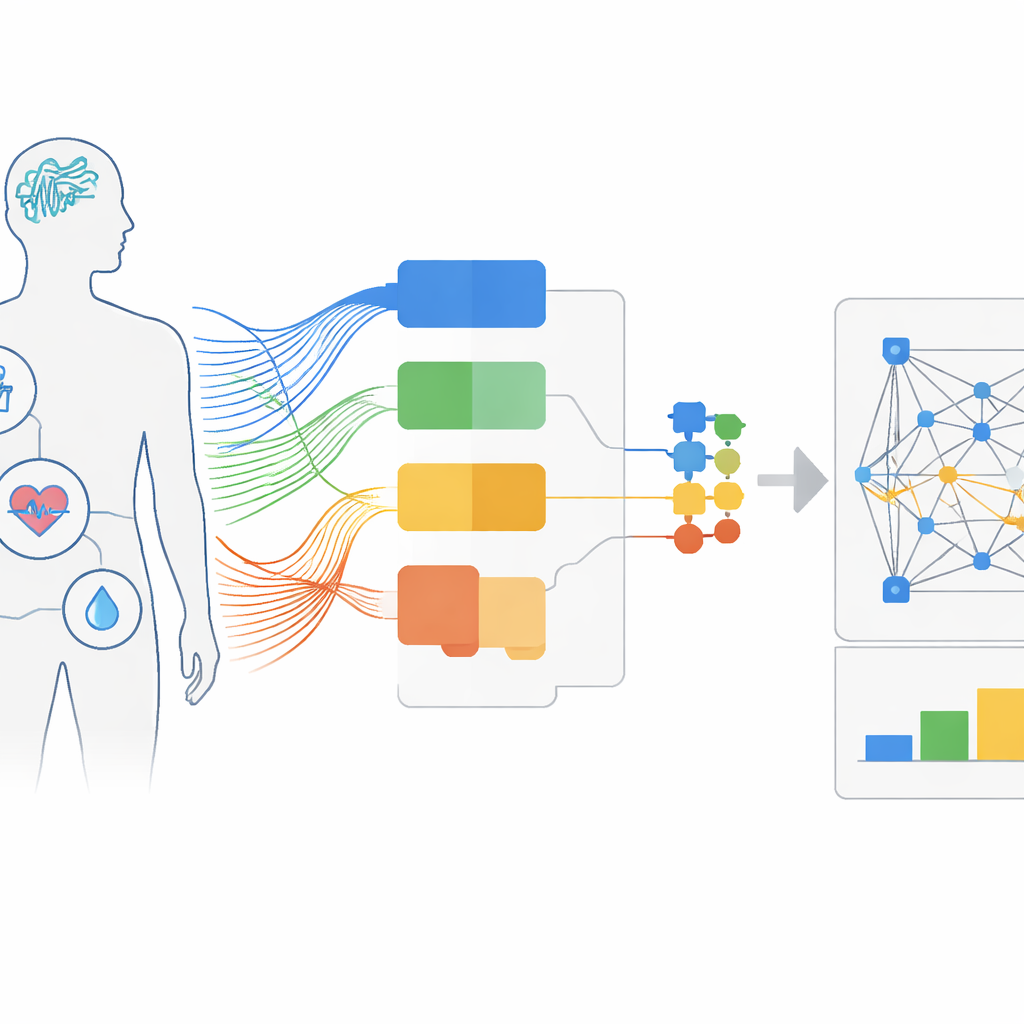

Listening to the Brain and the Body

The researchers start from the idea that no single measurement can fully capture fear. They therefore pull together several kinds of physiological signals: electrical activity from the brain, skin conductance that rises when we sweat, changes in heart rhythm, breathing patterns, and facial movements. Two existing experimental collections are used. One, called DEAP, records people’s brain and body signals while they watch music videos and rate how pleasant and how intense they feel. The other, DECAF, uses brain scans and body sensors while people watch fear-inducing movie scenes or listen to music. These multimodal datasets provide a rich picture of how fear shows up across the nervous system.

Turning Raw Signals into Simple Rules

Raw signals alone are noisy and hard to interpret, so the team distills them into features with known links to emotion. For example, they compute “frontal alpha asymmetry,” a pattern in brain waves over the left and right forehead often associated with negative mood, and they count peaks in the skin’s electrical response that tend to rise with stress. They also break brain signals into classic frequency bands—delta, theta, alpha, beta, and gamma—and summarize heart rhythm variability. Instead of feeding these continuous numbers directly to a black-box model, they introduce logic-based learning: each feature is judged as “high” or “low” using statistically chosen thresholds. From these binary building blocks, an algorithm known as inductive logic programming discovers human-readable IF–THEN rules, such as combinations of high skin response and low heart variability that reliably indicate fear.
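The high/low labelling and rule step described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' pipeline: the median stands in for the "statistically chosen" threshold, the log-ratio form of frontal alpha asymmetry is one common convention, the rule is written by hand rather than discovered by inductive logic programming, and all function names and numbers are hypothetical.

```python
import math
from statistics import median


def frontal_alpha_asymmetry(left_alpha_power, right_alpha_power):
    """ln(right) - ln(left) alpha power; a common convention, assumed here."""
    return math.log(right_alpha_power) - math.log(left_alpha_power)


def binarize(values):
    """Label each value as 'high' (True) when it exceeds the median.

    The median is an assumed threshold; the study only says thresholds
    are chosen statistically.
    """
    cut = median(values)
    return [v > cut for v in values]


def fear_rule(scr_high, hrv_high):
    """Hand-written example rule: IF skin response is high AND HRV is low THEN fear."""
    return scr_high and not hrv_high


# Hypothetical toy recordings, one value per trial (not data from the study).
scr_peaks = [2, 9, 3, 8, 1, 7]                   # skin conductance peak counts
hrv = [55.0, 21.0, 60.0, 48.0, 58.0, 19.0]       # heart rate variability values

scr_high = binarize(scr_peaks)
hrv_high = binarize(hrv)
predictions = [fear_rule(s, h) for s, h in zip(scr_high, hrv_high)]
print(predictions)
```

In this toy set, the trials flagged as fear are the ones where a high peak count coincides with low heart variability. That is the kind of combination the article describes, and the rule can be read directly from the `fear_rule` function.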

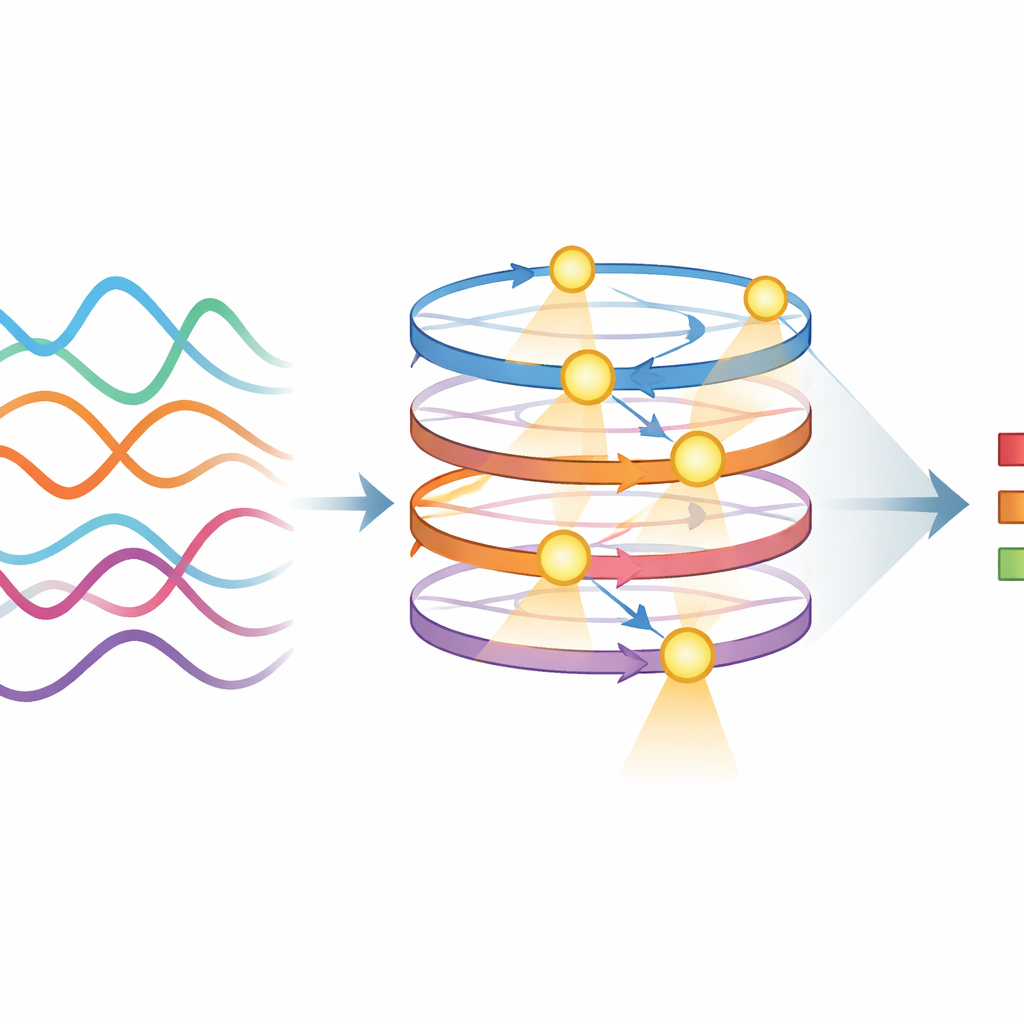

Marrying Rules with Deep Learning

While the logical rules make decisions easier to understand, modern deep learning models are better at squeezing out every bit of predictive power from complex data. The authors therefore feed the logic outputs into several neural network architectures designed for time-varying signals. These include standard recurrent networks, long short-term memory networks, simpler gated recurrent units, and an advanced design called a bidirectional multi-attention GRU. This last model not only reads each sequence of features in both directions, forward and backward in time, but also uses attention mechanisms to focus on the most informative moments, such as sharp spikes in skin conductance or sudden shifts in brain rhythms, while ignoring minor fluctuations.
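To give a feel for what "looking both ways and paying attention" means, here is a small sketch in plain Python. It is a deliberately simplified stand-in, not the published network: a leaky running average replaces the GRU cell, a single attention head with a fixed query vector replaces the learned multi-attention layers, and the signal values are made up for illustration.

```python
import math


def run_recurrent(sequence, decay=0.5):
    """Leaky running average: a simple stand-in for a GRU's hidden state."""
    state, states = 0.0, []
    for x in sequence:
        state = decay * state + (1.0 - decay) * x
        states.append(state)
    return states


def bidirectional_states(sequence):
    """Pair a forward pass with a backward pass, aligned per time step."""
    forward = run_recurrent(sequence)
    backward = run_recurrent(sequence[::-1])[::-1]
    return [[f, b] for f, b in zip(forward, backward)]


def softmax(scores):
    """Turn raw scores into weights that are positive and sum to one."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_pool(states, query):
    """Score each time step against the query, then take the weighted average."""
    scores = [sum(h * q for h, q in zip(state, query)) for state in states]
    weights = softmax(scores)
    context = [sum(w * state[d] for w, state in zip(weights, states))
               for d in range(len(states[0]))]
    return weights, context


# Hypothetical skin conductance trace with one sharp spike in the middle.
signal = [0.1, 0.2, 3.0, 0.2, 0.1]
states = bidirectional_states(signal)
weights, context = attention_pool(states, query=[1.0, 1.0])  # query is assumed, not learned
print([round(w, 3) for w in weights])
```

The attention weights are largest at the spike, so the pooled summary is dominated by that moment. In a trained model the query (and several such heads) would be learned from data rather than fixed by hand.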

How Well the System Reads Fear

To test their approach, the team compares models trained on the same inputs with and without the logic-based layer. They evaluate them on how accurately they can separate no fear from fear, and in the DEAP data, how well they distinguish four levels ranging from no fear to high fear. Across extensive cross-validation and statistical tests, adding logical rules consistently boosts performance and makes results more stable from one test split to another. The standout architecture, the knowledge-driven bidirectional multi-attention GRU, reaches about 96.7 percent accuracy on the DEAP dataset and similarly high performance on music clips in DECAF, outperforming several previously published methods and more conventional machine learning techniques.
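The idea of checking stability "from one test split to another" can be sketched as follows. This is an illustrative outline only: it scores a fixed set of predictions fold by fold and summarizes the spread, whereas a real cross-validation would also retrain the model on each training portion. The labels, predictions, and helper names are hypothetical and do not come from the study.

```python
from statistics import mean, stdev


def k_fold_indices(n_samples, k):
    """Yield (train_idx, test_idx) pairs for k contiguous folds."""
    sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in sizes:
        test_idx = list(range(start, start + size))
        train_idx = list(range(0, start)) + list(range(start + size, n_samples))
        yield train_idx, test_idx
        start += size


def accuracy(predicted, actual):
    """Fraction of predictions that match the true labels."""
    return sum(p == a for p, a in zip(predicted, actual)) / len(actual)


# Hypothetical toy labels (1 = fear, 0 = no fear) and model predictions.
labels = [1, 0, 1, 0, 0, 0, 1, 0, 1, 0]
predicted = [1, 0, 1, 1, 0, 0, 1, 0, 1, 1]

fold_accuracies = []
for _train_idx, test_idx in k_fold_indices(len(labels), k=5):
    # A real run would fit the model on _train_idx here before scoring.
    fold_accuracies.append(accuracy([predicted[i] for i in test_idx],
                                    [labels[i] for i in test_idx]))

mean_accuracy = mean(fold_accuracies)
spread = stdev(fold_accuracies)  # a smaller spread means more stable results
print(fold_accuracies, mean_accuracy, round(spread, 3))
```

A model whose fold accuracies cluster tightly is more dependable than one with the same average but wide swings, which is the sense in which the article calls the rule-augmented models more stable.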

What This Means for Future Emotion-Aware Tools

In simple terms, the study shows that computers can gauge human fear levels with impressive reliability when they read multiple body and brain signals and interpret them through clear, rule-like knowledge before applying deep learning. This hybrid design offers the best of both worlds: the power of modern neural networks and the transparency of logical rules that experts can inspect and refine. Such an approach could underpin future mental health monitoring, adaptive entertainment, and safety systems that do more than guess at our emotions—they explain why they believe we are afraid, opening the door to more trustworthy and personalized technologies.

Citation: Joshva, A.A., Balasubramanian, V.K. & Murugappan, M. A logic-based knowledge-driven bidirectional multi-attention GRU framework for fear level classification in humans. Sci Rep 16, 13722 (2026). https://doi.org/10.1038/s41598-026-44151-x

Keywords: emotion recognition, fear detection, physiological signals, deep learning, interpretable AI