Clear Sky Science · en

An ensemble hybrid and explainable AI (XAI) framework for zero false-positive islanding detection in distribution networks

Why keeping the lights safe really matters

As homes and towns add more rooftop solar panels, small wind turbines, and other local power sources, our electric grid is becoming cleaner but also more complicated. One of the most serious hazards in this new landscape is “islanding,” a situation where a neighborhood keeps producing electricity even after it has been cut off from the main grid. This paper introduces a smart, two-step artificial intelligence system that aims to spot islanding quickly and reliably, while avoiding unnecessary shutoffs that annoy customers and strain the grid.

When a neighborhood becomes its own island

Imagine a street where the main power line has been switched off for maintenance or after a fault, but rooftop solar systems keep feeding electricity into the local wires. Workers may think the line is dead when it is still live, and equipment can be damaged when the grid is reconnected out of sync. Because of these safety risks, international rules require that local generators stop energizing an islanded section within two seconds. Traditional protection devices watch for changes in voltage or frequency, or deliberately disturb the grid to see how it reacts, but these methods either miss some islanding cases or trigger too often during harmless events such as motor starts or capacitor switching. Utilities are forced into a trade-off between missing dangerous islands and causing nuisance trips.
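
To make the weakness of passive protection concrete, here is a minimal sketch of a voltage and frequency window check. The 50 Hz nominal frequency, the limit values, and the names `Limits` and `passive_trip` are illustrative assumptions, not settings taken from the paper or from any specific grid code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Limits:
    # Illustrative limits only; real settings come from the applicable grid code.
    v_min_pu: float = 0.88
    v_max_pu: float = 1.10
    f_min_hz: float = 49.5
    f_max_hz: float = 50.5


def passive_trip(voltage_pu: float, frequency_hz: float,
                 limits: Limits = Limits()) -> bool:
    """Return True when voltage or frequency leaves the allowed window."""
    v_ok = limits.v_min_pu <= voltage_pu <= limits.v_max_pu
    f_ok = limits.f_min_hz <= frequency_hz <= limits.f_max_hz
    return not (v_ok and f_ok)


# An island whose local generation happens to match local load barely moves
# voltage or frequency, so the window check stays silent (a missed island).
balanced_island = passive_trip(voltage_pu=1.00, frequency_hz=50.02)

# A large motor starting on a healthy grid can dip the voltage enough to
# leave the window, so the same check trips needlessly (a nuisance trip).
motor_start = passive_trip(voltage_pu=0.85, frequency_hz=50.00)
```

Tightening the window would catch the balanced island but would also trip on even milder harmless events, which is the trade-off described above.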

Why smarter learning tools are needed

Researchers have turned to machine learning to tell islanding apart from other disturbances by learning patterns directly from data. Earlier studies used single models such as neural networks or decision trees that answer a simple yes-or-no question: “is this islanding or not?” The problem is that the “not” category covers many different situations (faults, switching operations, normal grid behavior) that can look very similar in measurements taken at one point in the network. Tuning a single model to be more sensitive reduces the chance of missing an island, but usually raises the number of false alarms. Likewise, tuning it to avoid nuisance trips makes it more likely to overlook a real island. Many of these models are also hard to interpret, which makes grid engineers uneasy about trusting them in critical protection roles.
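
The tuning dilemma can be illustrated with a small sketch. The scores below are made up for a hypothetical single yes-or-no model (higher means "more island-like") and are not data from the paper; the function name `rates` is likewise an assumption.

```python
def rates(island_scores, other_scores, threshold):
    """Detection rate and false-alarm rate for a 'trip if score >= threshold' rule."""
    detected = sum(s >= threshold for s in island_scores) / len(island_scores)
    false_alarms = sum(s >= threshold for s in other_scores) / len(other_scores)
    return detected, false_alarms


# Made-up scores: the two groups overlap, as they do when harmless events
# resemble islanding at the measurement point.
island_scores = [0.95, 0.90, 0.80, 0.55, 0.40]
other_scores = [0.10, 0.20, 0.35, 0.50, 0.70]

for threshold in (0.3, 0.6, 0.9):
    detected, false_alarms = rates(island_scores, other_scores, threshold)
    print(f"threshold={threshold:.1f}  detected={detected:.0%}  "
          f"false alarms={false_alarms:.0%}")
```

With these toy numbers, lowering the threshold catches every island but also flags most harmless events, while raising it removes the false alarms at the cost of missing more than half of the islands.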

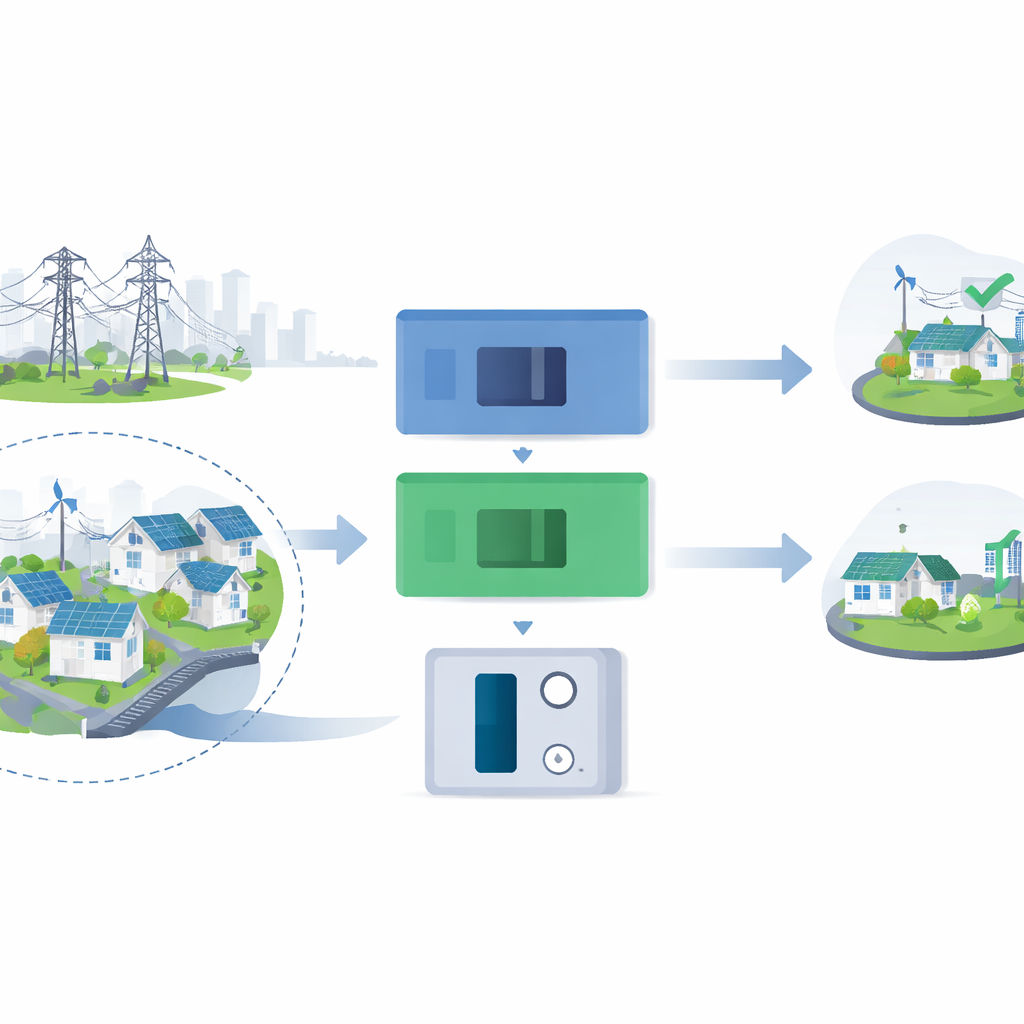

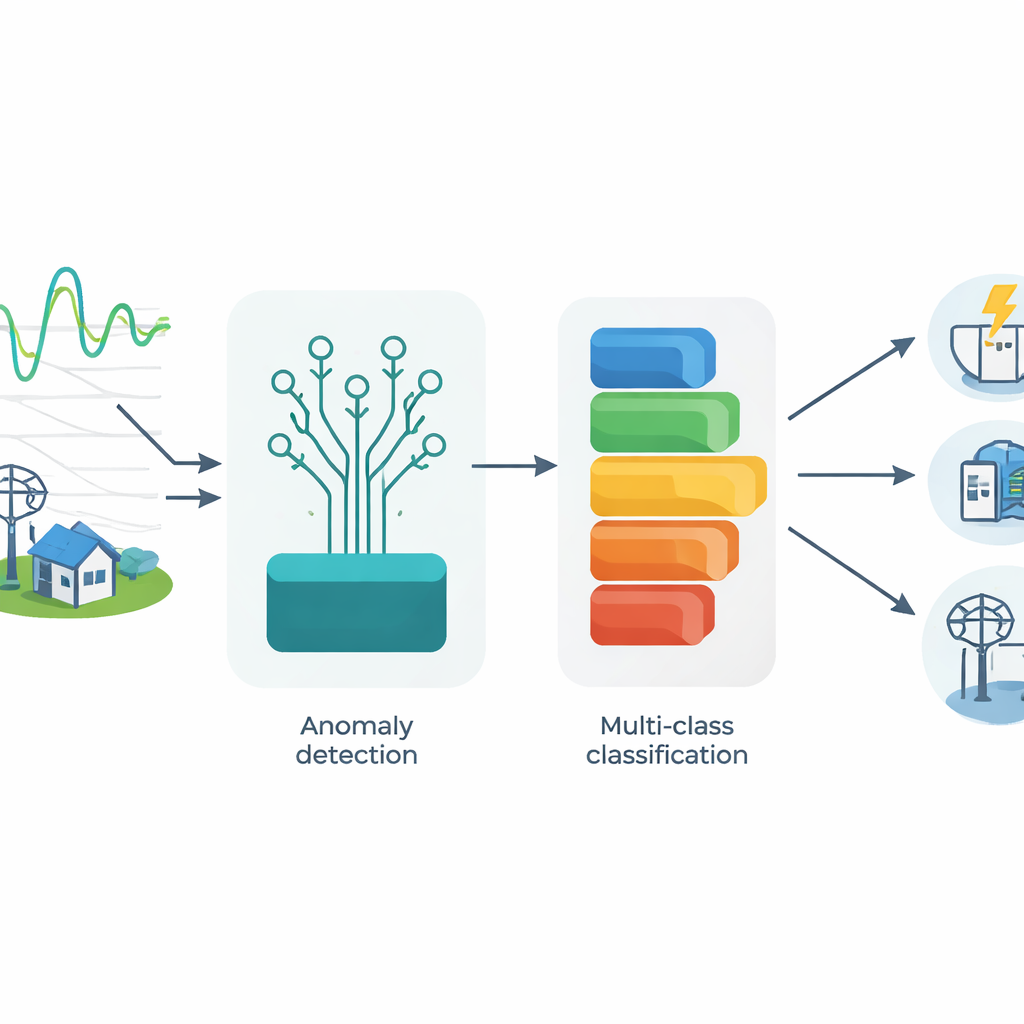

A two-step watchdog for the grid

The authors propose a different strategy: split the protection job into two dedicated stages that work together. In the first stage, an “Isolation Forest” algorithm acts as an always-on watchdog trained only on normal grid behavior. Its job is simple: raise a flag whenever incoming measurements look unusual. This stage is tuned to be extremely sensitive so that almost any deviation, including subtle islanding cases, is caught early. Only when such an anomaly is detected does the second stage wake up. Here, an “XGBoost” model performs a more refined check: rather than only deciding whether the event is islanding, it classifies the event into one of several categories such as islanding, faults, capacitor switching, motor starts, and routine load changes. By teaching the model to recognize these different signatures separately, the system becomes much better at avoiding false trips without relaxing its vigilance.
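
The control flow of such a cascade can be sketched in a few lines. To keep the example dependency-free, both stages are deliberately simple stand-ins: a z-score gate in place of the Isolation Forest and a nearest-centroid rule in place of XGBoost. The two features, all numbers, and all names here are hypothetical; only the "gate first, classify second" structure follows the description above.

```python
from statistics import mean, pstdev


class ZScoreGate:
    """Stage 1 stand-in (NOT an Isolation Forest): learns normal behavior only."""

    def __init__(self, z_limit: float = 3.0):
        self.z_limit = z_limit

    def fit(self, normal_rows):
        columns = list(zip(*normal_rows))
        self.means = [mean(c) for c in columns]
        self.stds = [pstdev(c) or 1e-9 for c in columns]  # avoid divide-by-zero
        return self

    def is_anomaly(self, row) -> bool:
        return any(abs(x - m) / s > self.z_limit
                   for x, m, s in zip(row, self.means, self.stds))


class NearestCentroid:
    """Stage 2 stand-in (NOT XGBoost): multi-class label by closest centroid."""

    def fit(self, rows, labels):
        grouped = {}
        for row, label in zip(rows, labels):
            grouped.setdefault(label, []).append(row)
        self.centroids = {label: [mean(c) for c in zip(*members)]
                          for label, members in grouped.items()}
        return self

    def predict(self, row) -> str:
        def dist2(centroid):
            return sum((x - y) ** 2 for x, y in zip(row, centroid))
        return min(self.centroids, key=lambda label: dist2(self.centroids[label]))


def cascade(row, gate, classifier) -> str:
    """Run stage 2 only when stage 1 flags the sample as unusual."""
    if not gate.is_anomaly(row):
        return "normal"
    return classifier.predict(row)


# Hypothetical features per sample: [frequency change rate, voltage deviation].
normal_rows = [[0.01, 0.00], [0.02, 0.01], [-0.01, -0.01], [0.00, 0.01], [-0.02, 0.00]]
event_rows = [[1.5, 0.05], [1.2, 0.04], [0.1, -0.15], [0.15, -0.12], [0.3, 0.08], [0.25, 0.10]]
event_labels = ["islanding", "islanding", "motor_start", "motor_start",
                "capacitor_switching", "capacitor_switching"]

gate = ZScoreGate().fit(normal_rows)
classifier = NearestCentroid().fit(event_rows, event_labels)

quiet = cascade([0.0, 0.0], gate, classifier)
island = cascade([1.4, 0.05], gate, classifier)
motor = cascade([0.12, -0.14], gate, classifier)
```

Because the gate sees only normal data, it needs no examples of islanding to raise a flag; the labeled event types matter only in the second stage.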

Seeing inside the black box with explanations

To build trust in this automated decision-maker, the framework also uses an explanation tool called SHAP. For each event, SHAP works out how much different features—like how fast the grid frequency is changing, how quickly power is shifting, or how distorted the voltage has become—push the decision toward or away from islanding. In real time, the system logs the few most important contributors so operators can see why a breaker was ordered to trip or stay closed. During testing and tuning, more detailed SHAP plots help the researchers spot where the model is being confused, for example when a capacitor switching event mimics islanding. These insights guided the design of an adaptive confidence threshold: the system demands stronger evidence before tripping when the patterns look ambiguous, and can relax slightly when the evidence is clear and consistent.
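
Two of these ideas, logging the top contributors and raising the bar for ambiguous events, can be sketched as follows. The contribution values stand in for SHAP outputs and are invented for illustration, and the margin-based rule is only one plausible way to build an adaptive threshold; the paper's exact rule is not given here, so every name and number below is an assumption.

```python
def top_contributors(contributions: dict, k: int = 3):
    """Return the k features with the largest absolute contribution."""
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return ranked[:k]


def adaptive_threshold(probabilities: dict, base: float = 0.5,
                       strict: float = 0.8, margin_limit: float = 0.3) -> float:
    """Demand more confidence when the top two classes are close together."""
    ranked = sorted(probabilities.values(), reverse=True)
    margin = ranked[0] - ranked[1]
    return strict if margin < margin_limit else base


def should_trip(probabilities: dict) -> bool:
    """Trip only if the islanding probability clears the adaptive threshold."""
    return probabilities["islanding"] >= adaptive_threshold(probabilities)


# Invented per-feature contributions toward "islanding" (positive pushes toward it).
contributions = {"rocof": 0.42, "power_change_rate": 0.18,
                 "voltage_thd": -0.25, "voltage_dev": 0.03}
logged = top_contributors(contributions, k=2)

# Clear case: one class dominates, so the base threshold applies.
clear = {"islanding": 0.90, "capacitor_switching": 0.06, "fault": 0.04}
# Ambiguous case: capacitor switching looks similar, so the bar is raised.
ambiguous = {"islanding": 0.55, "capacitor_switching": 0.40, "fault": 0.05}

trip_clear = should_trip(clear)
trip_ambiguous = should_trip(ambiguous)
```

In the ambiguous case the islanding class is still the most likely one, yet the breaker stays closed because the evidence does not clear the stricter bar.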

How well the new system performs

The team tested their approach on a publicly available simulated microgrid dataset that includes hundreds of examples of islanding and non-islanding events under many operating conditions. After extracting seven physically meaningful features from short windows of voltage and current data, they trained and tuned both stages, then evaluated performance on previously unseen cases. The cascaded system detected 96 percent of islanding events in the test set while keeping the false alarm rate to about 2.7 percent. Overall accuracy reached 96.8 percent, and the method outperformed single-model machine learning baselines and a traditional threshold-based hybrid scheme. Even when realistic measurement noise was added, performance declined gracefully rather than collapsing, and the processing time stayed under ten milliseconds, far below the two-second limit set by grid standards.
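
For readers who want to see how such figures are derived, the sketch below computes one window feature (the rate of change of frequency) and the three headline metrics from confusion counts. The sample window, the sampling interval, and the counts are hypothetical and are not the paper's data; the function names are assumptions as well.

```python
def rocof(frequencies_hz, dt_s: float) -> float:
    """Average rate of change of frequency (Hz/s) across one window."""
    if len(frequencies_hz) < 2:
        raise ValueError("need at least two samples")
    span_s = (len(frequencies_hz) - 1) * dt_s
    return (frequencies_hz[-1] - frequencies_hz[0]) / span_s


def detection_metrics(tp: int, fn: int, fp: int, tn: int) -> dict:
    """Headline metrics, treating 'islanding' as the positive class."""
    return {
        "detection_rate": tp / (tp + fn),    # share of real islands caught
        "false_alarm_rate": fp / (fp + tn),  # share of harmless events tripped
        "accuracy": (tp + tn) / (tp + fn + fp + tn),
    }


# Hypothetical window: frequency rising 0.1 Hz every 20 ms.
window_rocof = rocof([50.0, 50.1, 50.2], dt_s=0.02)

# Hypothetical test-set counts, chosen only to illustrate the arithmetic.
metrics = detection_metrics(tp=45, fn=5, fp=3, tn=97)
for name, value in metrics.items():
    print(f"{name}: {value:.1%}")
```

Note that the detection rate and the false alarm rate use different denominators (real islands versus harmless events), which is why both can look good at once even though overall accuracy blends the two.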

What this means for everyday reliability

For non-specialists, the key message is that the paper presents a practical way to get “the best of both worlds” in protecting a modern, renewable-rich grid. By first asking “is anything odd happening?” and only then asking “exactly what is happening?”, the system sidesteps the usual trade-off between catching every dangerous island and avoiding needless shutoffs. The added layer of explanations lets engineers see the logic behind each trip command, turning a black-box algorithm into a tool they can inspect and refine. While the study relies on simulated data and will need to be proven on real-world measurements, it points toward safer, more reliable integration of rooftop solar, small wind farms, and other distributed generators into the everyday power systems we all depend on.

Citation: Shahade, S.K., Jawadekar, A.U. & Shahade, A.K. An ensemble hybrid and explainable AI (XAI) framework for zero false-positive islanding detection in distribution networks. Sci Rep 16, 13460 (2026). https://doi.org/10.1038/s41598-026-43913-x

Keywords: islanding detection, distributed generation, machine learning, power system protection, explainable AI