Clear Sky Science

Performance of a GPU- and time-efficient pseudo-3D network for magnetic resonance image super-resolution and motion artifact reduction

Sharper Brain Scans in Less Time

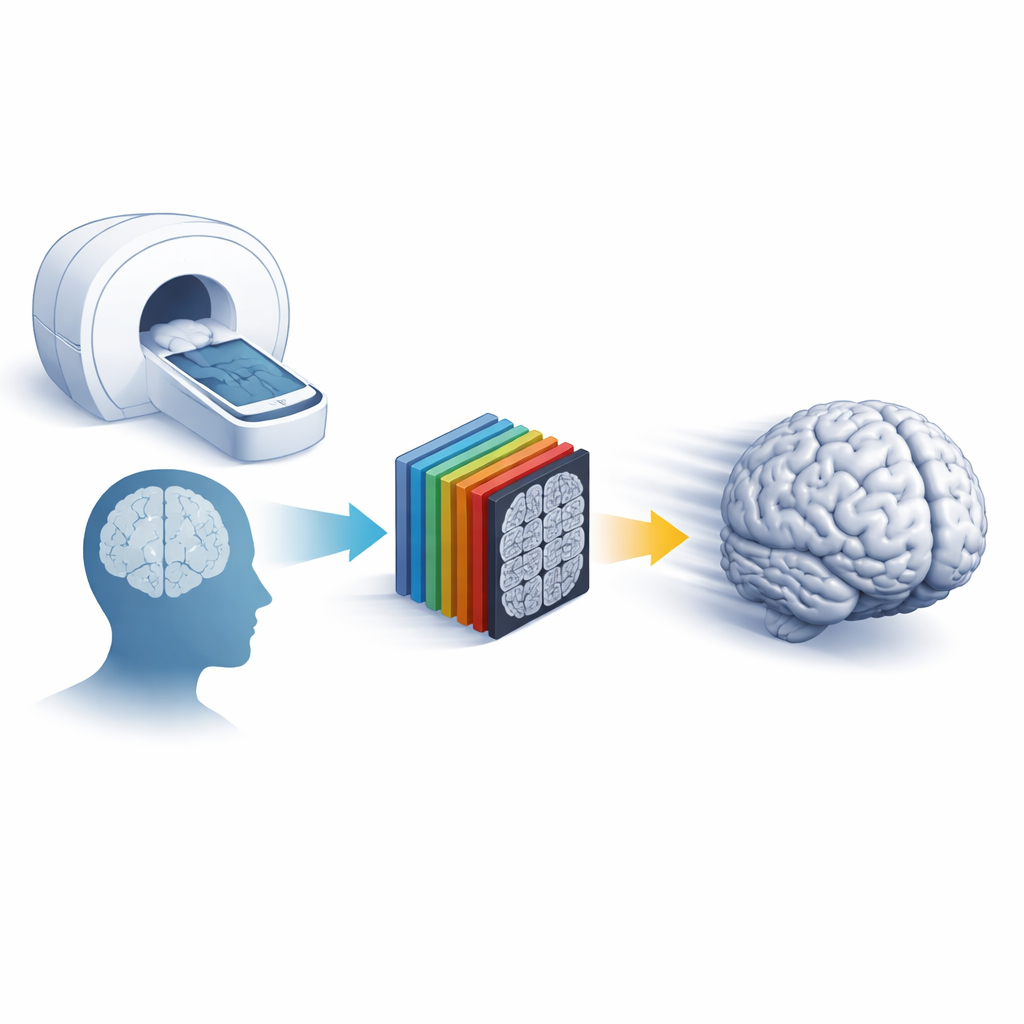

Magnetic resonance imaging (MRI) is a workhorse of modern medicine, but getting crisp three-dimensional pictures of the brain usually means long, uncomfortable scans that are easily ruined when patients move. This study introduces a deep-learning method that turns faster, lower-quality brain scans into clear, detailed images while also cleaning up motion streaks. It does so on modest graphics hardware, which makes it practical for everyday hospital use.

Why Fast Scans Often Fall Short

Doctors want MRI images that are both sharp and free of motion blur, but there is a trade-off: higher resolution requires longer scans, which increases the chance that patients move and spoil the images. Traditional tricks to speed things up, such as parallel imaging, only go so far before noise and artifacts become a problem. Deep learning methods have recently shown that they can “super-resolve” images—reconstructing fine details from coarser scans—and reduce motion artifacts, yet most powerful approaches rely on fully three-dimensional networks that are slow and demand expensive graphics cards. This limits their use in busy clinical settings where time, cost, and reliability matter.

A Thin-Slice Shortcut to 3D Detail

The researchers adapted an existing two-dimensional deep network into what they call a “thin-slab” design. Instead of processing each MRI slice in isolation, the network ingests a small stack of neighboring slices at once and treats them as channels. This preserves important three-dimensional context without the heavy burden of a full 3D model. The same framework is trained to solve two jobs: super-resolution reconstruction, which recovers fine detail from scans acquired with thicker slices or fewer data points, and motion artifact reduction, which removes streaks and ghosting caused by head movement. To rigorously test performance, the team created realistic low-resolution and motion-corrupted data from high-quality public brain MRI datasets and compared their method against leading 3D networks and a popular 2D U-Net model.
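To make the thin-slab idea concrete, here is a minimal Python/NumPy sketch of how a stack of neighboring slices could be packed into the channel dimension of a 2D network's input. It is an illustration under stated assumptions, not the authors' code. The function name make_thin_slabs, the default of five slices, and the choice to repeat the first and last slice at the edges of the volume are all hypothetical.

```python
import numpy as np


def make_thin_slabs(volume: np.ndarray, slab: int = 5) -> np.ndarray:
    """Turn a 3D volume of shape (Z, H, W) into Z thin-slab inputs of shape (slab, H, W).

    Hypothetical helper: each output sample is centered on one slice and stacks
    its neighbors along the channel axis, so an ordinary 2D network sees some
    through-slice context. Edge slices are repeated at the volume boundaries
    (an assumption, not necessarily what the study did).
    """
    if slab < 1 or slab % 2 == 0:
        raise ValueError("slab must be a positive odd number")
    half = slab // 2
    # Replicate-pad along the slice axis so every slice has a full neighborhood.
    padded = np.pad(volume, ((half, half), (0, 0), (0, 0)), mode="edge")
    n_slices = volume.shape[0]
    # Row i of idx holds padded indices i .. i + slab - 1, centered on slice i.
    idx = np.arange(n_slices)[:, None] + np.arange(slab)[None, :]
    # Fancy indexing gives shape (Z, slab, H, W): batch, channels, height, width.
    return padded[idx]
```

Because the slab only widens the channel dimension of the input, the rest of the network can stay an ordinary 2D model. This is roughly why such designs need far less graphics memory than a 3D network that convolves over the whole volume at once.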

Balancing Speed, Sharpness, and Scan Design

A key question for hospitals is how much they can shorten scans without sacrificing image quality. The authors systematically varied how much they “down-sampled” the original data in different directions, mirroring how real scanners trade resolution for speed. For twofold faster scanning, the best choice was to reduce resolution only along the slice direction (doubling slice thickness while keeping full in-plane detail); for fourfold speed-ups, an even reduction along all three directions worked best. Under these optimal settings, the thin-slab network beat or matched most state-of-the-art 3D models on standard image quality scores, while cutting graphics memory use and processing time by up to 90%. In side-by-side examples, fine brain structures such as gray–white matter boundaries and small arteries were better preserved than with competing methods or simple interpolation.
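The down-sampling experiments can be illustrated with one common way of simulating a lower-resolution acquisition: discarding the outer, high-frequency part of k-space (the raw frequency data an MRI scanner records) along chosen axes. The sketch below assumes this k-space truncation scheme and uses hypothetical function names; the study's exact simulation pipeline may differ. It also treats the fraction of k-space kept as a rough stand-in for the speed-up. That is a simplification, because in a real 3D scan the time depends mainly on the two phase-encoding directions. Doubling slice thickness corresponds to factors (2, 1, 1), while an even fourfold reduction spreads the factor over all three axes, about 4^(1/3) ≈ 1.59 per axis.

```python
import numpy as np


def kspace_downsample(volume: np.ndarray, factors) -> np.ndarray:
    """Simulate a lower-resolution scan by keeping only the central part of k-space.

    Hypothetical helper. factors gives the per-axis reduction, with axis 0
    assumed to be the slice direction (e.g. (2, 1, 1) doubles slice thickness
    only). The result stays on the original grid (zero-filled k-space), so it
    can be compared voxel by voxel with the high-resolution reference.
    """
    kspace = np.fft.fftshift(np.fft.fftn(volume))
    mask = np.ones(volume.shape, dtype=bool)
    for axis, (n, f) in enumerate(zip(volume.shape, factors)):
        keep = max(1, int(round(n / f)))  # number of central samples kept
        start = (n - keep) // 2
        axis_mask = np.zeros(n, dtype=bool)
        axis_mask[start:start + keep] = True
        shape = [1] * volume.ndim
        shape[axis] = n
        mask &= axis_mask.reshape(shape)  # broadcast the 1D mask along this axis
    low = np.fft.ifftn(np.fft.ifftshift(kspace * mask))
    return np.abs(low)  # magnitude image


def kept_fraction(factors) -> float:
    """Fraction of k-space retained: a rough proxy for the acceleration factor."""
    return float(np.prod([1.0 / f for f in factors]))


iso = 4 ** (1 / 3)  # about 1.587 per axis for a fourfold total reduction
print(kept_fraction((2, 1, 1)))  # 0.5, i.e. roughly a twofold speed-up
print(round(kept_fraction((iso, iso, iso)), 3))  # 0.25, i.e. roughly fourfold
```

Keeping the output on the original grid is a design choice for training: the network then learns to map a blurred image to a sharp one of the same size, and standard quality scores can be computed directly against the reference.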

Cleaning Up Motion and Knowing When Not to Trust the Image

Motion is a constant foe in MRI, especially for children, older adults, and patients in pain. Using carefully controlled simulated head movements, the authors showed that their network consistently removed strong motion artifacts, particularly when it could look at several slices together. It restored consistency both across slices and within each slice better than a refined 2D U-Net. Beyond restoration, the study tackled a more subtle safety issue: when does the network get things wrong? By training the system to output not only a cleaned or sharpened image but also pixel-wise “uncertainty” maps, the authors could estimate how trustworthy each region was. One type of uncertainty reflected noise in the data, while another captured how far a new scan differed from what the network had seen during training. This second measure correlated strongly with standard image quality metrics, allowing the team to predict quality even when no perfect reference image was available.
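The two kinds of uncertainty described above are often separated in the following way, which is assumed here; the study's exact formulation may differ. The network predicts a mean and a variance for each pixel and is run several times with some randomness switched on (for example, dropout kept active at test time). The average predicted variance then serves as the data-noise (“aleatoric”) map, and the spread of the predicted means across runs serves as the model (“epistemic”) map. The sketch also shows how a per-scan summary of uncertainty could be correlated with a reference-based quality score such as PSNR. All function names are hypothetical.

```python
import numpy as np


def decompose_uncertainty(means: np.ndarray, variances: np.ndarray):
    """Split predictive uncertainty from T stochastic forward passes (hypothetical helper).

    means, variances: arrays of shape (T, H, W) holding the per-pixel mean and
    variance predicted in each pass. Returns (aleatoric, epistemic) maps of shape (H, W).
    """
    aleatoric = variances.mean(axis=0)  # average predicted data noise
    epistemic = means.var(axis=0)  # disagreement between passes
    return aleatoric, epistemic


def psnr(pred: np.ndarray, ref: np.ndarray, data_range: float = 1.0) -> float:
    """Peak signal-to-noise ratio in dB; needs a reference image and a nonzero error."""
    mse = np.mean((pred - ref) ** 2)
    return float(10.0 * np.log10(data_range ** 2 / mse))


def pearson_r(x, y) -> float:
    """Pearson correlation between per-scan uncertainty and per-scan quality."""
    return float(np.corrcoef(x, y)[0, 1])
```

In use, one would compute psnr and the mean of the epistemic map for each scan in a validation set that has reference images, then check pearson_r between the two lists. If the relationship is strong, the uncertainty alone can serve as an estimate of quality on new scans for which no reference exists.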

Testing on New Scanners and Looking Ahead

To see how well the approach holds up in the real world, the researchers applied their trained model to a completely independent dataset acquired on a different scanner with a different head coil, including scans with genuine, uncontrolled head motion. Even without retraining, the method sharpened low-resolution images and reduced motion streaks, though the uncertainty maps correctly indicated that the network was less confident on this unfamiliar data. This behavior suggests that the technique can carry its benefits over to other scanners while flagging cases where caution is warranted.

What This Means for Patients and Clinicians

In plain terms, this work shows that a lean, cleverly designed deep network can deliver 3D-quality brain images from quicker, lower-resolution or motion-degraded scans, without requiring cutting-edge hardware. It identifies practical scanning strategies that best pair with such software and adds built-in uncertainty estimates that warn radiologists where the reconstruction may be less reliable. If validated across more body regions and disease types, this approach could make MRI exams shorter, more comfortable, and more informative, while giving clinicians clearer insight into when to trust the images on screen.

Citation: Li, H., Liu, J., Schell, M. et al. Performance of a GPU- and time-efficient pseudo-3D network for magnetic resonance image super-resolution and motion artifact reduction. Sci Rep 16, 9654 (2026). https://doi.org/10.1038/s41598-026-43804-1

Keywords: MRI super-resolution, motion artifact reduction, deep learning imaging, brain MRI, uncertainty maps