Clear Sky Science

Optimizing charge discharge cycles using QPPONet-enabled hybrid learning framework for energy management and safety in electric vehicles

Smarter Batteries for Safer Electric Drives

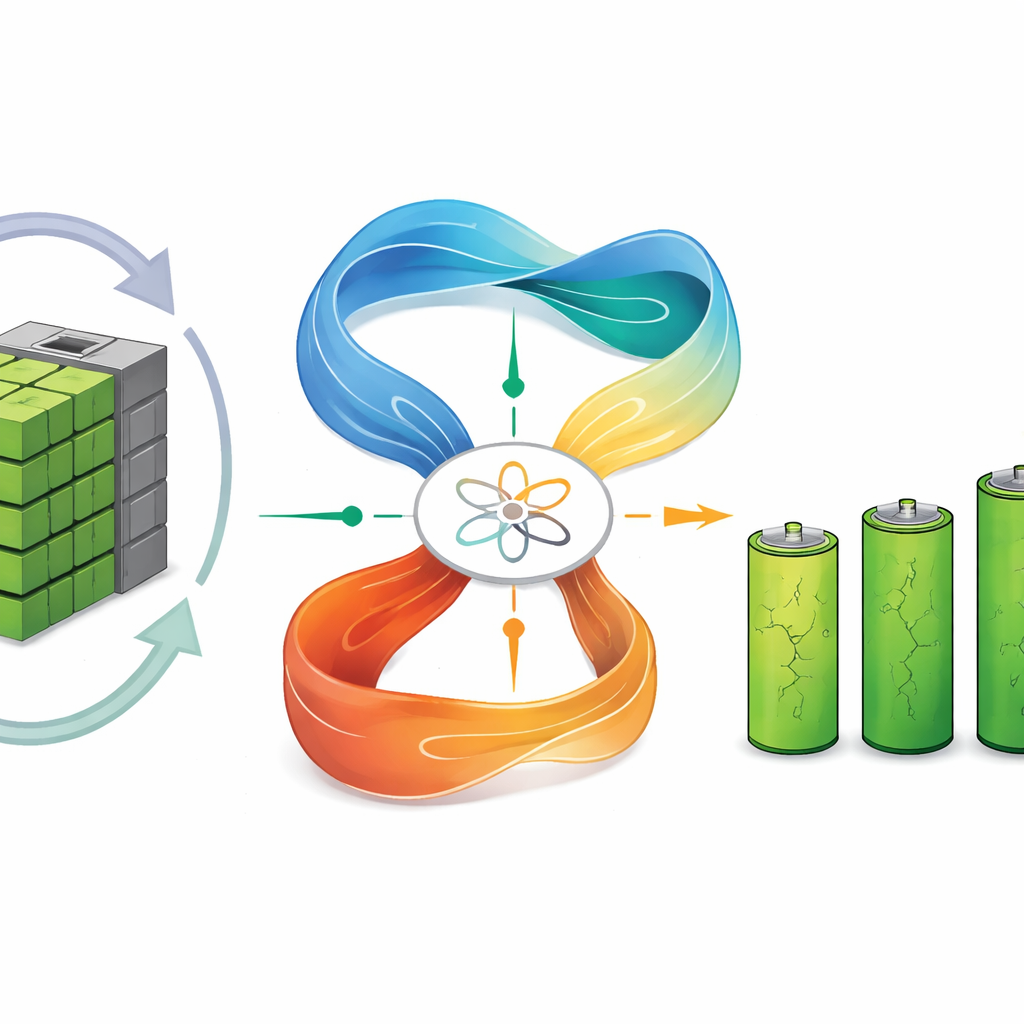

Electric vehicles promise cleaner air and lower fuel bills, but their batteries are expensive, age over time, and can fail in dangerous ways if not carefully managed. This paper explores how modern artificial intelligence can act as an always‑on guardian for electric‑vehicle batteries, squeezing out more range, extending lifespan, and spotting early signs of trouble long before a driver notices anything is wrong.

From Simple Rules to Learning Brains

The battery management systems in most electric cars today rely on fixed rules and simplified formulas. They estimate how much energy is left and when the pack might fail, but they struggle when real driving conditions differ from the lab, for example with frequent fast charging, steep hills, or heat waves. The authors argue that this rule‑based approach wastes usable energy, shortens battery life, and can miss warning signs of dangerous overheating. They instead propose an adaptive battery manager that learns directly from data: previous trips, past charge–discharge cycles, and a wide variety of temperatures and driving styles.

Reading the Battery’s Health and Remaining Life

The first part of the framework asks, “How healthy is the battery right now?” Using measurements such as voltage, current, temperature, and internal resistance, the team compared several machine‑learning methods for estimating state of health, a percentage that reflects how far the battery has aged. A technique called gradient boosting came out on top, matching real measurements almost perfectly while still being light enough to run on car‑grade electronics in a few milliseconds. To answer the second key question, “How long will this pack keep working?”, the researchers built a hybrid deep‑learning model that combines two sequence‑focused networks. This model watches how the battery changes over many charge–discharge cycles and learns to forecast its remaining useful life with only a few percent error across different cell types and driving patterns.
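For readers curious how gradient boosting works in the health-estimation step described above, here is a minimal sketch in plain Python. It is not the authors' model: it uses a single input (internal resistance), one-split "stump" trees, and made-up numbers, and the names `fit_stump`, `fit_boosted`, and `predict` are hypothetical. The idea it illustrates is general: each small tree is fitted to the errors left by the trees before it, and the predictions are added up.

```python
# Illustrative sketch only: synthetic numbers, not the paper's data or model.

def fit_stump(xs, residuals):
    """Find the single threshold split that minimizes squared error."""
    best = None
    for t in sorted(set(xs))[:-1]:  # skip the max so the right side is never empty
        left = [r for x, r in zip(xs, residuals) if x <= t]
        right = [r for x, r in zip(xs, residuals) if x > t]
        lm, rm = sum(left) / len(left), sum(right) / len(right)
        err = sum((r - lm) ** 2 for r in left) + sum((r - rm) ** 2 for r in right)
        if best is None or err < best[0]:
            best = (err, t, lm, rm)
    _, t, lm, rm = best
    return t, lm, rm

def fit_boosted(xs, ys, n_rounds=50, learning_rate=0.1):
    """Start from the mean, then repeatedly fit a stump to the remaining errors."""
    base = sum(ys) / len(ys)
    preds = [base] * len(ys)
    stumps = []
    for _ in range(n_rounds):
        residuals = [y - p for y, p in zip(ys, preds)]
        t, lm, rm = fit_stump(xs, residuals)
        stumps.append((t, lm, rm))
        preds = [p + learning_rate * (lm if x <= t else rm)
                 for x, p in zip(xs, preds)]
    return base, stumps, learning_rate

def predict(model, x):
    """Sum the base value and every stump's scaled correction."""
    base, stumps, lr = model
    return base + sum(lr * (lm if x <= t else rm) for t, lm, rm in stumps)

# Hypothetical training data: internal resistance (milliohms) vs. state of health (%).
resistance_mohm = [20.0, 22.0, 25.0, 28.0, 31.0, 35.0, 40.0, 46.0]
soh_percent = [100.0, 98.0, 95.0, 92.0, 89.0, 85.0, 80.0, 74.0]

model = fit_boosted(resistance_mohm, soh_percent)
print(round(predict(model, 24.0), 1))  # estimated health for an unseen reading
```

A production system would use several inputs (voltage, current, temperature) and a tuned library implementation; the sketch only shows why the method stays cheap to evaluate, since a prediction is a short sum of threshold comparisons.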

Teaching the Battery to Charge Itself Wisely

Knowing health and remaining life is only half the story; the system also needs to act. The authors introduce QPPONet, a hybrid reinforcement‑learning controller that learns how to charge and discharge in ways that balance immediate needs—like giving the driver enough range today—against long‑term battery wear. It blends two complementary strategies: one that estimates the value of each possible action and another that keeps learning stable and gradual. Trained in a realistic simulator built from detailed lab data, this controller boosts an overall “reward” score—combining energy efficiency and reduced aging—by about one quarter compared with standard charging methods, while cutting capacity loss and remaining robust under changing operating conditions.
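The two strategies described above can be sketched with their standard textbook forms. This is an assumption-laden illustration, not the QPPONet architecture: the summary does not name the underlying algorithms, so the sketch assumes, from the name alone, a Q-learning style value update and a PPO-style clipped policy update. The reward weights and all function names (`step_reward`, `q_update`, `ppo_clipped_term`) are hypothetical.

```python
# Illustrative sketch only: assumed building blocks, not the paper's implementation.

def step_reward(energy_efficiency, capacity_loss, w_eff=1.0, w_aging=5.0):
    """Reward that trades efficiency against battery wear (weights are made up)."""
    return w_eff * energy_efficiency - w_aging * capacity_loss

def q_update(q, state, action, reward, next_state, actions, alpha=0.1, gamma=0.99):
    """Tabular Q-learning: nudge the value of (state, action) toward
    the reward plus the discounted best value of the next state."""
    best_next = max(q.get((next_state, a), 0.0) for a in actions)
    old = q.get((state, action), 0.0)
    q[(state, action)] = old + alpha * (reward + gamma * best_next - old)
    return q[(state, action)]

def ppo_clipped_term(prob_ratio, advantage, eps=0.2):
    """PPO clipped surrogate for one sample: limits how far a single
    update can move the policy away from the previous one."""
    clipped = max(1.0 - eps, min(1.0 + eps, prob_ratio))
    return min(prob_ratio * advantage, clipped * advantage)

# Hypothetical example: one charging decision.
actions = ["slow_charge", "fast_charge"]
q_table = {}
r = step_reward(energy_efficiency=0.9, capacity_loss=0.01)
q_update(q_table, "low_charge", "slow_charge", r, "mid_charge", actions)
print(q_table)
print(ppo_clipped_term(prob_ratio=1.5, advantage=1.0))
```

The value update captures "estimating the value of each possible action", while the clipping step captures "keeping learning stable and gradual": a policy change larger than the `eps` band earns no extra credit, so updates stay small.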

Watching for Early Signs of Dangerous Heat

Safety is the third pillar of the framework. Instead of relying on simple temperature thresholds, the system uses unsupervised anomaly‑detection methods to learn what “normal” looks like for voltage, current and temperature signals. It then flags unusual spikes or combinations that hint at thermal runaway, the chain reaction that can lead to fires. By training on both real and stress‑augmented data—where readings are artificially pushed into extreme yet plausible ranges—the models learn to distinguish harmless blips from serious events. In tests, they detected early warning signs with over 92 percent accuracy, while filtering out short‑lived quirks that would cause false alarms.
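To make the idea of learning "normal" and ignoring short-lived quirks concrete, here is a deliberately simple sketch. The summary does not name the anomaly-detection methods the authors used, so this stands in a basic z-score detector with a persistence rule; the temperature values, thresholds, and function names (`fit_normal`, `flag_anomalies`) are all hypothetical.

```python
# Illustrative sketch only: a z-score detector, not the paper's methods.
from statistics import mean, stdev

def fit_normal(samples):
    """Learn what 'normal' looks like from unlabeled, healthy readings."""
    return mean(samples), stdev(samples)

def flag_anomalies(readings, baseline, z_threshold=3.0, min_run=3):
    """Flag readings far from normal, but only if the deviation
    persists for at least `min_run` consecutive samples."""
    mu, sigma = baseline
    flags = [False] * len(readings)
    run = 0  # length of the current streak of out-of-range readings
    for i, x in enumerate(readings):
        run = run + 1 if abs(x - mu) / sigma > z_threshold else 0
        if run >= min_run:
            for j in range(i - min_run + 1, i + 1):
                flags[j] = True
    return flags

# Hypothetical cell temperatures in degrees Celsius.
normal_temps = [30.1, 29.8, 30.4, 30.0, 29.9, 30.2, 30.3, 29.7]
baseline = fit_normal(normal_temps)

# One isolated blip (34.0) followed later by a sustained rise.
readings = [30.0, 34.0, 30.1, 30.2, 33.5, 35.0, 37.2, 30.0]
flags = flag_anomalies(readings, baseline)
print(flags)
# → [False, False, False, False, True, True, True, False]
```

The single spike at the second reading is ignored because it does not persist, while the three-sample climb is flagged. A real detector would watch voltage, current, and temperature together rather than one signal at a time.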

Built to Work in Many Vehicles, Not Just One

A key strength of the work is that each part—health estimation, life prediction, charge control, and safety monitoring—is designed and validated as a separate module. This makes the system easier to adapt to different battery chemistries, vehicle platforms, and hardware limits. The authors trained and tested their models on a detailed in‑house dataset covering many temperatures and load patterns, and then confirmed that the same methods generalize well to a public electric‑aircraft battery dataset with very different usage profiles. Even when moved across these contrasting scenarios, the models maintained high accuracy, suggesting that the approach can scale beyond a single laboratory setup.

What This Means for Everyday Drivers

For non‑specialists, the takeaway is straightforward: by giving the battery pack a kind of digital nervous system, built from modern learning algorithms, electric vehicles can become safer, more reliable, and cheaper to own. The proposed framework shows that it is possible to estimate battery condition precisely, forecast how long it will last, choose gentler yet efficient charging patterns, and catch dangerous overheating early—all within the computing limits of real cars. As these intelligent battery managers mature and spread, drivers may see longer warranties, fewer surprise failures, and more confidence that their vehicles are making smart choices about the energy stored under the floor.

Citation: Sujan Kumar, M.V., Khekare, G. Optimizing charge discharge cycles using QPPONet-enabled hybrid learning framework for energy management and safety in electric vehicles. Sci Rep 16, 11384 (2026). https://doi.org/10.1038/s41598-026-43692-5

Keywords: electric vehicle batteries, battery management systems, machine learning, reinforcement learning, thermal safety