Clear Sky Science

Hybrid CNN–transformer model with BM3D and YOLOv8 for early detection of lung cancer in low-dose CT scans

Why catching lung cancer sooner matters

Lung cancer kills more people worldwide than any other cancer, largely because it is often found too late. Doctors increasingly use low-dose CT scans—X‑ray images taken with less radiation—to screen people at risk. But these images are noisy and the warning spots, or nodules, can be tiny and easy to miss. This study presents a computer system that cleans up these scans and helps flag suspicious areas earlier and more accurately, with the goal of supporting radiologists rather than replacing them.

Cleaner pictures from gentle scans

Low-dose CT scans are attractive because they expose patients to less radiation than standard scans while still revealing the lungs in 3D detail. The trade-off is extra graininess and faint structures that blur into the background. The authors first tackle this problem with a denoising step called BM3D (block-matching and 3D filtering), which is designed to remove noise while preserving important edges and shapes. They test several filtering methods and find that BM3D strikes the best balance between noise removal and fidelity, producing images that keep subtle lung nodules visible without introducing distortions that could mislead the computer or the radiologist.
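
To make the idea of comparing filters concrete, the sketch below scores a denoised image against a clean reference using peak signal-to-noise ratio (PSNR), one common fidelity measure. It is only an illustration under stated assumptions: this summary does not say which metrics the authors used, the 3x3 mean filter is a simple stand-in and not BM3D, and the synthetic phantom, the noise level, and all function names are hypothetical.

```python
import math
import random


def psnr(reference, candidate, max_value=255.0):
    """Peak signal-to-noise ratio in dB between two equally sized 2D images."""
    total = 0.0
    count = 0
    for ref_row, cand_row in zip(reference, candidate):
        for r, c in zip(ref_row, cand_row):
            total += (r - c) ** 2
            count += 1
    mse = total / count
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)


def mean_filter(image):
    """3x3 mean filter with edge clamping. A simple stand-in, NOT BM3D."""
    h, w = len(image), len(image[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            acc = 0.0
            for dy in (-1, 0, 1):
                for dx in (-1, 0, 1):
                    yy = min(max(y + dy, 0), h - 1)
                    xx = min(max(x + dx, 0), w - 1)
                    acc += image[yy][xx]
            out[y][x] = acc / 9.0
    return out


def make_phantom(size=32):
    """Hypothetical test image: flat background with one bright square 'nodule'."""
    img = [[50.0] * size for _ in range(size)]
    for y in range(12, 20):
        for x in range(12, 20):
            img[y][x] = 200.0
    return img


def add_noise(image, sigma, seed=0):
    """Add zero-mean Gaussian noise to mimic low-dose graininess (an assumption)."""
    rng = random.Random(seed)
    return [[v + rng.gauss(0.0, sigma) for v in row] for row in image]


clean = make_phantom()
noisy = add_noise(clean, sigma=25.0)
denoised = mean_filter(noisy)

psnr_noisy = psnr(clean, noisy)
psnr_denoised = psnr(clean, denoised)
print(f"noisy: {psnr_noisy:.2f} dB, denoised: {psnr_denoised:.2f} dB")
```

A real comparison would swap `mean_filter` for each candidate denoiser and run on actual CT slices. Simple smoothing raises PSNR on this phantom but also blurs the edges of the bright square, which is the kind of distortion an edge-preserving method is meant to avoid.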

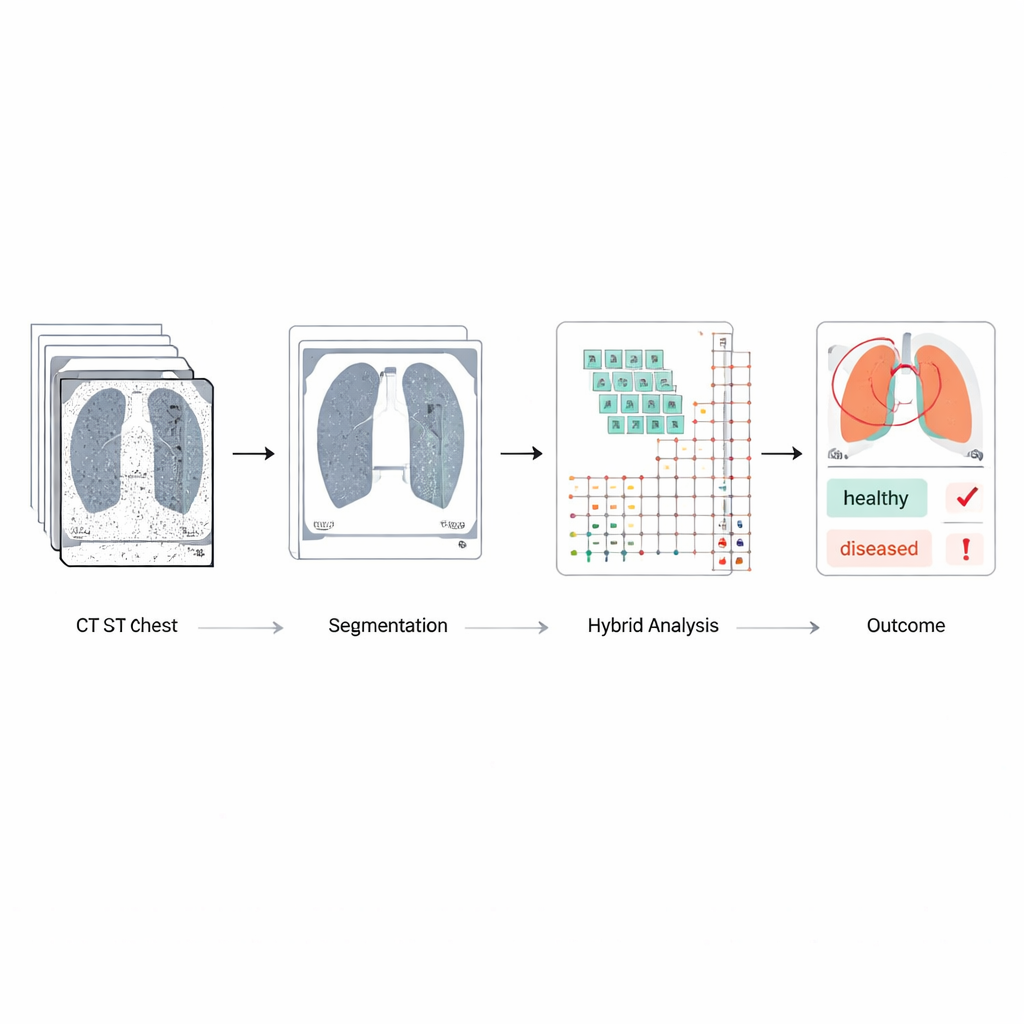

Focusing only on the lungs

Raw chest scans contain ribs, spine, heart, and other tissues that can distract an automated system. To concentrate on what matters, the team uses a modern object-detection network (YOLOv8) to outline and extract just the lung regions. They annotate lung boundaries by hand on many scans, train the system to recognize these shapes, and then let it automatically crop each image down to a lung-only view. This step cuts out irrelevant structures and reduces the risk that normal tissues or scanning artifacts will be mistaken for disease, while also making later processing more efficient.
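
The cropping step can be sketched as follows, assuming the detector has already returned one bounding box per lung as `(x1, y1, x2, y2)` pixel coordinates. The box values, the margin, and the function names are hypothetical, and no YOLOv8 call is made here; the sketch only shows one way detections might be turned into a lung-only view.

```python
def union_box(boxes):
    """Smallest (x1, y1, x2, y2) box that encloses every input box."""
    x1 = min(b[0] for b in boxes)
    y1 = min(b[1] for b in boxes)
    x2 = max(b[2] for b in boxes)
    y2 = max(b[3] for b in boxes)
    return x1, y1, x2, y2


def crop_to_lungs(image, boxes, margin=2):
    """Crop a 2D image (list of rows) to the union of the detected boxes.

    x2 and y2 are treated as exclusive. The margin pads every side and is
    clamped to the image bounds.
    """
    if not boxes:
        return image  # no detection: keep the full slice rather than guess
    h, w = len(image), len(image[0])
    x1, y1, x2, y2 = union_box(boxes)
    x1 = max(x1 - margin, 0)
    y1 = max(y1 - margin, 0)
    x2 = min(x2 + margin, w)
    y2 = min(y2 + margin, h)
    return [row[x1:x2] for row in image[y1:y2]]


# Hypothetical 48x64 slice whose pixel value encodes its own position.
slice_2d = [[y * 100 + x for x in range(64)] for y in range(48)]

# Hypothetical detections: one box per lung.
detections = [(8, 10, 28, 40), (34, 12, 56, 38)]

cropped = crop_to_lungs(slice_2d, detections, margin=2)
print(len(cropped), len(cropped[0]))
```

Taking the union of both boxes keeps the two lungs in a single crop; cropping each lung separately would be an equally plausible design, and the summary does not say which the authors chose.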

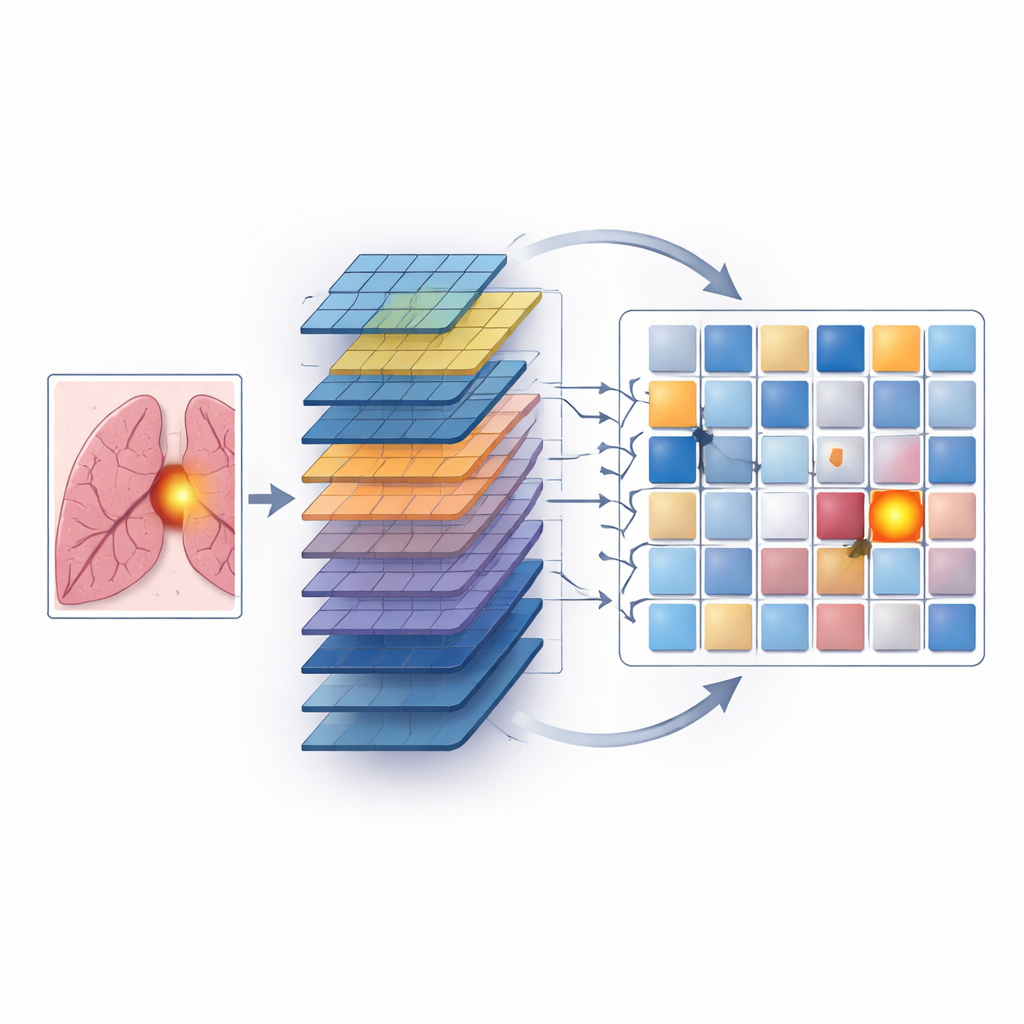

Teaching a hybrid artificial brain to spot risky nodules

The heart of the work is a hybrid model that combines two complementary ways of analyzing images. Convolutional neural networks, or CNNs, scan small neighborhoods of pixels to learn detailed patterns such as edges, textures, and the round or irregular outlines of nodules. Transformers, originally developed for language, excel at seeing long-range relationships—how distant parts of an image relate to each other. In this system, the cleaned, lung-only images are first passed through CNN layers to extract rich spatial features. These features are then broken into patches and fed into transformer blocks that learn how different regions within the lungs interact, which helps the model notice faint or scattered signs of cancer that might otherwise be overlooked.
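
The hand-off from local features to global attention can be illustrated with a toy example. The sketch below splits a small feature map into patch tokens and applies scaled dot-product self-attention, so that two distant patches with similar content attend to each other. It is a simplified illustration, not the authors' architecture: the CNN stage is omitted, the 4x4 feature map is hand-made, the attention uses identity projections (real transformer blocks learn separate query, key, and value projections), and all names are hypothetical.

```python
import math


def patchify(feature_map, patch):
    """Split a 2D feature map into non-overlapping patch x patch tokens."""
    h, w = len(feature_map), len(feature_map[0])
    if h % patch or w % patch:
        raise ValueError("feature map size must be divisible by patch size")
    tokens = []
    for top in range(0, h, patch):
        for left in range(0, w, patch):
            tokens.append([feature_map[top + dy][left + dx]
                           for dy in range(patch) for dx in range(patch)])
    return tokens


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens):
    """Scaled dot-product self-attention with Q = K = V = tokens (assumption)."""
    dim = len(tokens[0])
    scale = math.sqrt(dim)
    weights, outputs = [], []
    for q in tokens:
        scores = [sum(a * b for a, b in zip(q, k)) / scale for k in tokens]
        row = softmax(scores)
        weights.append(row)
        outputs.append([sum(wt * v[i] for wt, v in zip(row, tokens))
                        for i in range(dim)])
    return weights, outputs


# Hand-made 4x4 "feature map": the same pattern appears in the top-left
# patch and in the far-away bottom-right patch.
feature_map = [[0.0] * 4 for _ in range(4)]
feature_map[0][0] = feature_map[0][1] = 1.0
feature_map[2][2] = feature_map[2][3] = 1.0

tokens = patchify(feature_map, patch=2)   # 4 tokens of length 4
weights, outputs = self_attention(tokens)

# Token 0 (top-left) weights token 3 (bottom-right) above the empty patches.
print([round(w, 3) for w in weights[0]])
```

Each row of `weights` sums to 1 and says how strongly one patch draws on every other patch, which is the mechanism that lets a transformer relate regions a convolution's small window would never see together.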

Training, testing, and beating existing tools

The model is trained and evaluated on a large public dataset of over a thousand lung CT scans, each carefully marked by radiologists with nodule size and estimated likelihood of cancer. The authors separate patients into training, validation, and test groups so that images from the same individual never appear in more than one split, which avoids overly optimistic results. They also use data augmentation, such as small rotations and brightness shifts, to mimic real-world variability. Compared with widely used deep-learning systems, including standard CNNs, popular image networks, and vision transformers alone, the hybrid approach performs best. It correctly distinguishes cancerous from non-cancerous cases about 95% of the time, with high sensitivity (few missed cancers), high specificity (few false alarms), and strong scores on segmentation quality and overall discrimination.
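
Two ideas in this paragraph lend themselves to a short sketch: splitting by patient rather than by image, and the definitions of sensitivity and specificity. The code below is a minimal illustration under assumptions. The 70/15/15 split fractions, the patient and scan identifiers, the toy labels, and the function names are all hypothetical and are not taken from the study.

```python
import random


def split_by_patient(patient_ids, fractions=(0.7, 0.15, 0.15), seed=0):
    """Assign each unique patient to exactly one of train/val/test."""
    unique = sorted(set(patient_ids))
    random.Random(seed).shuffle(unique)
    n_train = round(len(unique) * fractions[0])
    n_val = round(len(unique) * fractions[1])
    return {
        "train": set(unique[:n_train]),
        "val": set(unique[n_train:n_train + n_val]),
        "test": set(unique[n_train + n_val:]),
    }


def screening_metrics(y_true, y_pred):
    """Accuracy, sensitivity, and specificity for binary labels (1 = cancer)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    return {
        "accuracy": (tp + tn) / len(pairs),
        # Sensitivity: share of true cancers that were flagged.
        "sensitivity": tp / (tp + fn) if tp + fn else float("nan"),
        # Specificity: share of healthy cases that were correctly cleared.
        "specificity": tn / (tn + fp) if tn + fp else float("nan"),
    }


# Hypothetical records: 20 patients with 3 scans each.
scans = [(f"P{i // 3:03d}", f"S{i:03d}") for i in range(60)]
splits = split_by_patient([pid for pid, _ in scans])

# Every scan follows its patient, so no patient straddles two splits.
train_scans = [sid for pid, sid in scans if pid in splits["train"]]

# Toy labels and predictions: 3 cancers (one missed), 7 healthy (one false alarm).
y_true = [1, 1, 1, 0, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 0, 0, 0, 0, 0, 0, 0, 1]
metrics = screening_metrics(y_true, y_pred)
print(metrics)
```

Shuffling patients instead of images is what prevents leakage: near-identical slices from one person cannot end up on both sides of the train/test boundary. In the toy labels, accuracy is 0.8 even though one of three cancers is missed, which shows why sensitivity and specificity are reported alongside it.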

Promise and remaining hurdles

Despite these gains, the authors acknowledge important challenges that must be addressed before such a system can be routinely used in clinics. The public dataset they use, while well labeled, may not reflect the full diversity of scanners, hospitals, and patient populations worldwide, and it contains fewer cancer cases than healthy ones. The hybrid model is also more complex and computationally demanding than simpler networks, which could limit deployment in smaller centers. Finally, like many deep-learning systems, its inner reasoning is opaque, so clinicians cannot easily see why a particular nodule was flagged. The authors propose future work on lighter models, multi-center testing, combining scan data with other medical information, and adding explainability tools so radiologists can better trust and interpret the system’s output.

What this means for patients

In simple terms, this study shows that carefully cleaning low-dose CT images, focusing only on the lungs, and then using a combined "local and global" artificial intelligence can find suspicious lung spots earlier and more reliably than many existing methods. While it is not yet a plug-and-play hospital tool, the approach points toward screening systems that could help overworked radiologists catch more early cancers while reducing unnecessary scares. If refined and validated in real-world settings, such hybrid models could become important teammates in the fight to detect lung cancer when it is still highly treatable.

Citation: Thakral, G., Kumar, U. & Gambhir, S. Hybrid CNN–transformer model with BM3D and YOLOv8 for early detection of lung cancer in low-dose CT scans. Sci Rep 16, 13597 (2026). https://doi.org/10.1038/s41598-026-43517-5

Keywords: lung cancer screening, low-dose CT, deep learning, medical image analysis, computer-aided diagnosis