Clear Sky Science

Explainable VGG16 transfer learning with SHAP and grad-CAM for wax moth pest and infestation detection in honeybee apiaries using imaging data

Why watching over bees matters to everyone

Honeybees quietly support much of the world’s food supply by pollinating fruits, vegetables, and nuts. Yet their hives are under attack from a small but destructive pest: the greater wax moth. These insects can hollow out combs, weaken colonies, and ultimately cut into harvests and biodiversity. The study summarized here shows how a camera and an intelligent, transparent computer system can work together to spot wax moth trouble early, giving beekeepers a practical tool to protect their hives and, indirectly, our food.

The hidden invader inside the hive

Wax moths lay their eggs in dark corners of the hive. The larvae that hatch tunnel through beeswax, pollen, and brood cells, leaving behind silk, frass, and crumbling comb. Over time, this damage can cause the entire colony to collapse and can spread disease. Traditional checks rely on a beekeeper physically opening each hive, lifting frames, and judging damage by eye. This is slow, tiring work that becomes unrealistic for large or remote apiaries. As a result, infestations are often discovered only after serious harm has already been done, with economic and ecological costs that extend far beyond a single beekeeper’s operation.

Turning hive photos into early warnings

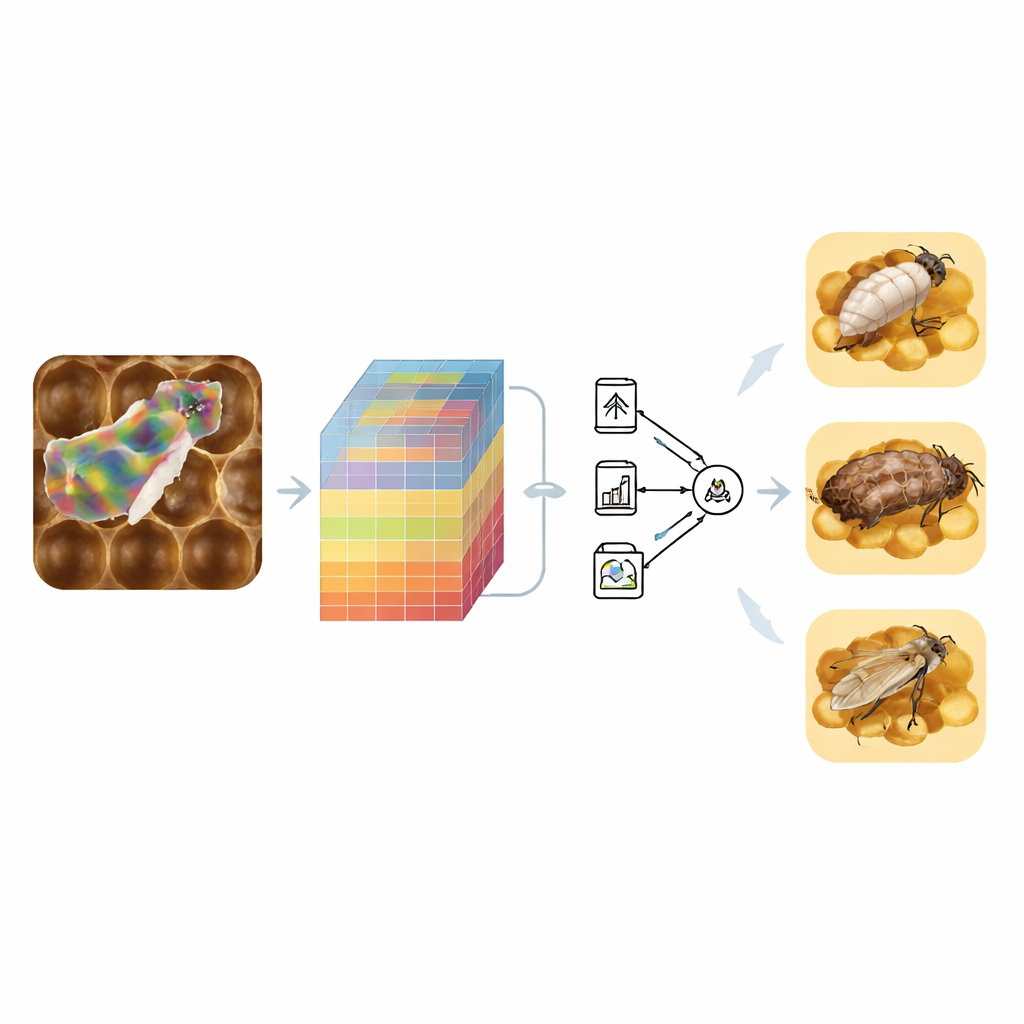

The researchers built a new monitoring system based on simple photographs of hive frames taken with a standard smartphone in working bee yards in southern Pakistan. They assembled a dataset of 3,252 high‑resolution images showing both healthy combs and those infested by wax moths at three stages: larva, pupa, and adult. Instead of forcing the model to learn everything from scratch, they used “transfer learning”: an existing, already trained image network called VGG16 pulled out detailed patterns of color and texture from each photo. These patterns were then passed to conventional machine‑learning methods, such as logistic regression, support vector machines, and tree‑based ensembles, that are easier to tune, compare, and explain.
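The division of labor described above, a frozen feature extractor feeding a small trainable classifier, can be illustrated with a minimal sketch. Everything here is an assumption made for illustration: `extract_features` is a hypothetical stand-in for VGG16 (it only computes brightness statistics), the images are synthetic toy data rather than the study's photographs, and the logistic regression is a bare-bones version written with the Python standard library.

```python
import math
import random

def extract_features(image):
    """Hypothetical stand-in for a frozen pretrained network such as VGG16.

    Takes a grayscale image (list of rows, values in [0, 1]) and returns a
    fixed-length feature vector. It has no trainable parameters, which is
    the point: only the classifier on top of it is fitted.
    """
    pixels = [p for row in image for p in row]
    mean = sum(pixels) / len(pixels)
    variance = sum((p - mean) ** 2 for p in pixels) / len(pixels)
    return [mean, variance]

def predict_proba(weights, bias, x):
    """Probability that a feature vector belongs to class 1 (infested)."""
    z = bias + sum(w * xi for w, xi in zip(weights, x))
    return 1.0 / (1.0 + math.exp(-z))

def train_logistic_regression(features, labels, lr=1.0, epochs=2000):
    """Fit weights and bias by batch gradient descent on the log loss."""
    n_features = len(features[0])
    weights, bias = [0.0] * n_features, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * n_features, 0.0
        for x, y in zip(features, labels):
            error = predict_proba(weights, bias, x) - y
            grad_w = [g + error * xi for g, xi in zip(grad_w, x)]
            grad_b += error
        weights = [w - lr * g / len(features) for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / len(features)
    return weights, bias

def synthetic_image(rng, infested, size=4):
    """Toy data, not the study's: infested frames are darker and patchier."""
    base, spread = (0.4, 0.15) if infested else (0.8, 0.03)
    return [[min(1.0, max(0.0, rng.gauss(base, spread))) for _ in range(size)]
            for _ in range(size)]

rng = random.Random(0)
labels = [i % 2 for i in range(40)]                    # 1 = infested, 0 = healthy
images = [synthetic_image(rng, bool(y)) for y in labels]
features = [extract_features(img) for img in images]   # extractor stays frozen
weights, bias = train_logistic_regression(features, labels)

predictions = [int(predict_proba(weights, bias, f) >= 0.5) for f in features]
accuracy = sum(p == y for p, y in zip(predictions, labels)) / len(labels)
print(f"training accuracy on toy data: {accuracy:.2f}")
```

In a real pipeline the stand-in extractor would be replaced by the pretrained network's output, and the hand-written classifier by a library implementation. The structure stays the same: features are computed once, and only the lightweight classifier is trained.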

A two-step checkup for each frame

The system examines each image in two tiers, mirroring how a skilled beekeeper thinks. First, it decides whether a frame is healthy or infested. If infestation is detected, a second stage determines whether the visible pest is in the larval, pupal, or adult form. To avoid bias toward the more common types of images, the team used a technique called SMOTE, which balances the training data by generating synthetic examples of the rarer classes. They also tested many alternative models and combinations of models, using cross‑validation and statistical tests to make sure that performance gains were not due to chance. The best combination for the first step, called XAI‑HoneyNet, blended two strong classifiers in a voting scheme and correctly separated healthy from infested frames in about 99 out of every 100 test images. For the second step, an ensemble dubbed XAI‑PestNet accurately distinguished among larva, pupa, and adult stages about 96 percent of the time.
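The two-step check can be sketched as a small cascade in which each tier combines several classifiers by voting. This is an illustrative sketch, not the authors' code: it assumes "soft" voting (averaging predicted probabilities), which the summary does not specify, and the four toy models and the feature names `webbing` and `comb_damage` are hypothetical.

```python
def soft_vote(probability_rows):
    """Average the class-probability dicts produced by several classifiers."""
    classes = probability_rows[0].keys()
    n = len(probability_rows)
    return {c: sum(row[c] for row in probability_rows) / n for c in classes}

def argmax_label(probabilities):
    """Return the class with the highest averaged probability."""
    return max(probabilities, key=probabilities.get)

def two_tier_predict(features, tier1_models, tier2_models):
    """Tier 1: healthy vs infested. Tier 2 runs only for infested frames."""
    tier1 = soft_vote([model(features) for model in tier1_models])
    if argmax_label(tier1) == "healthy":
        return {"status": "healthy", "stage": None}
    tier2 = soft_vote([model(features) for model in tier2_models])
    return {"status": "infested", "stage": argmax_label(tier2)}

# Hypothetical toy classifiers: each maps a feature dict to class probabilities.
def tier1_a(f):
    return {"healthy": 1.0 - f["webbing"], "infested": f["webbing"]}

def tier1_b(f):
    return {"healthy": 1.0 - f["comb_damage"], "infested": f["comb_damage"]}

def tier2_a(f):
    return {"larva": 0.6, "pupa": 0.3, "adult": 0.1}

def tier2_b(f):
    return {"larva": 0.3, "pupa": 0.5, "adult": 0.2}

clean_frame = {"webbing": 0.1, "comb_damage": 0.2}
damaged_frame = {"webbing": 0.9, "comb_damage": 0.4}

clean_result = two_tier_predict(clean_frame, [tier1_a, tier1_b], [tier2_a, tier2_b])
damaged_result = two_tier_predict(damaged_frame, [tier1_a, tier1_b], [tier2_a, tier2_b])
print(clean_result)
print(damaged_result)
```

For the damaged frame, the two first-tier models disagree (one leans "infested", the other "healthy"), and averaging their probabilities settles the decision. The second tier is skipped entirely for frames judged healthy, so life-stage labels are only ever assigned to frames already flagged as infested.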

Opening the “black box” of artificial intelligence

Many powerful image-recognition systems behave like black boxes: they give an answer but not a reason. That is a problem for beekeepers who must decide whether to remove equipment, treat hives, or leave them alone. To tackle this, the authors added explainability tools. One, known as SHAP, assigns each image feature a score that reflects how much it pushed the decision toward “healthy” or “infested.” Another, called Grad‑CAM, produces heatmaps that highlight the regions of the photo that most influenced the decision. In this study, the bright regions in these maps tended to fall on silk webbing, damaged comb, larval tunnels, or the bodies of moths themselves, rather than on irrelevant background. This gives users confidence that the model is “looking” where an experienced beekeeper would look.
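SHAP scores are based on Shapley values from game theory: each feature is credited with its average contribution to the prediction across all orders in which features could be "switched on". The sketch below computes these values exactly for a tiny model by enumerating every feature subset. It is a simplified illustration under stated assumptions: the scoring function `toy_model` and its three features are hypothetical, and absent features are replaced by a single baseline vector, whereas SHAP tools in practice average over background data and use approximations to handle many features.

```python
from itertools import combinations
from math import factorial

def shapley_values(model, x, baseline):
    """Exact Shapley values of model(x) relative to model(baseline)."""
    n = len(x)

    def value(coalition):
        # Features in the coalition take their real value, the rest the baseline.
        mixed = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return model(mixed)

    contributions = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        total = 0.0
        for size in range(n):
            # Weight of a coalition of this size in the Shapley formula.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                members = set(subset)
                total += weight * (value(members | {i}) - value(members))
        contributions.append(total)
    return contributions

def toy_model(f):
    """Hypothetical infestation score from [webbing, tunnels, brightness]."""
    webbing, tunnels, brightness = f
    return 2.0 * webbing + 1.0 * tunnels + webbing * tunnels - 0.5 * brightness

x = [1.0, 1.0, 1.0]          # the frame being explained
baseline = [0.0, 0.0, 0.0]   # reference frame with no evidence present
phi = shapley_values(toy_model, x, baseline)
print(phi)
```

The contributions always add up to the difference between the model's score for the frame and its score for the baseline. Positive values push the decision toward "infested" and negative values toward "healthy", and the interaction term between webbing and tunnels is split evenly between those two features.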

From smart pictures to smarter beekeeping

In practical terms, the work shows that a low‑cost camera and an explainable computer model can reliably flag wax moth problems early and indicate which life stage is present. Because the approach uses modular components (standard image networks and well‑known classifiers), it can be adapted to other hive pests, crops, and regions. By making its decisions understandable through visual cues, the system is more likely to be trusted and adopted by beekeepers who must act on its output. If folded into simple field devices or mobile apps, such tools could help keep colonies stronger, support pollination, and contribute to more resilient and sustainable agriculture.

Citation: Ghafoor, A., Majid, M., Amjad, M. et al. Explainable VGG16 transfer learning with SHAP and grad-CAM for wax moth pest and infestation detection in honeybee apiaries using imaging data. Sci Rep 16, 13861 (2026). https://doi.org/10.1038/s41598-026-43455-2

Keywords: honeybee health, wax moth detection, explainable AI, precision agriculture, pest monitoring