
Intelligent resource management in UAV-enabled networks using cell-free communications and intelligent reflective surfaces

Bringing Reliable Wireless Service to Hard-to-Reach Places

As our world fills up with Internet-connected sensors and gadgets—from farm soil probes to factory robots—the wireless networks that link them are straining. This paper explores a new way to keep billions of small devices online using flying relays and smart reflecting panels on buildings, all coordinated by a learning algorithm. The result is a network that can stretch coverage, speed up data transfers, and save energy at the same time.

Why Today’s Networks Fall Short

Conventional cellular networks were never designed for huge numbers of low-power devices spread across cities, countryside, and disaster zones. Signal coverage is patchy, interference between neighboring cells is common, and energy use is high. Many Internet of Things (IoT) devices sit in basements, behind thick walls, or in remote fields where signals are weak. At the same time, mobile flying relays—unmanned aerial vehicles (UAVs)—and intelligent reflecting surfaces (IRSs), which are flat panels that bounce radio waves in chosen directions, have separately shown promise in boosting wireless performance. Yet most earlier studies treated these pieces in isolation, without a unified way to coordinate them in real time.

A New Kind of Cloud of Antennas in the Sky

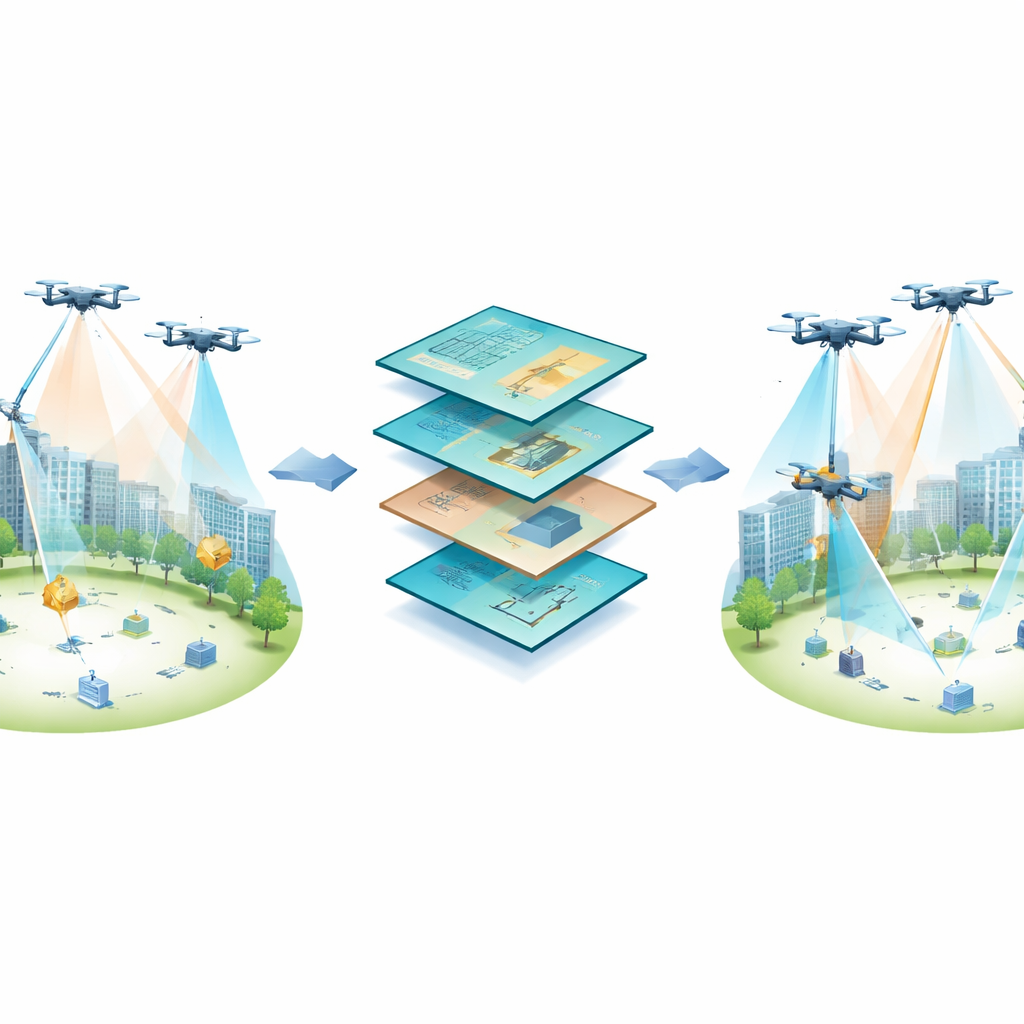

The authors propose a “cell-free” network architecture that abandons rigid cell boundaries. Instead of each user being tied to a single tower, many small access points cooperate to serve devices together. In this system, ground access points, airborne UAVs, and wall-mounted reflecting panels form a flexible web around the devices. A central base station sends radio signals that not only carry data but also recharge the UAVs wirelessly. The drones act as aerial relays, while the reflecting panels bend and focus signals into hard-to-reach corners, helping sensors that would otherwise be in dead zones.

Teaching the Network to Adapt on Its Own

Coordinating where drones fly, how strongly the base station transmits, which access point serves which device, and how each reflecting panel aims its signals is a tangled juggling act. Traditional optimization methods struggle when conditions change quickly, as they do when UAVs move or obstacles block signals. To tackle this, the authors cast the problem as a learning task. They use reinforcement learning, in which the network repeatedly tries different configurations and receives a “score” based on how well it did on three fronts: data speed, coverage, and energy use. Over time, a simple Q-learning algorithm learns which choices tend to give better overall results and gradually refines a policy for adjusting power, flight paths, and reflection directions.
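To make the "try, score, refine" loop concrete, here is a minimal sketch of tabular Q-learning on a toy problem. It is not the authors' implementation: the single control knob (a transmit power level), the power values, the scoring weights, and the rate and coverage formulas are all assumptions chosen for illustration. The only part taken from the description above is the idea of a score that rewards data speed and coverage while penalizing energy use.

```python
import math
import random

# All values below are illustrative assumptions, not taken from the paper.
POWER_LEVELS = [0.5, 1.0, 1.5, 2.0, 2.5]  # hypothetical transmit powers (watts)
ACTIONS = [-1, 0, 1]                       # lower, hold, or raise the power level
WEIGHTS = (1.0, 1.0, 1.0)                  # assumed weights: rate, coverage, energy


def reward(level):
    """Toy score: reward data rate and coverage, penalize energy use."""
    p = POWER_LEVELS[level]
    rate = math.log2(1.0 + 4.0 * p)   # Shannon-style rate with a made-up channel gain
    coverage = min(1.0, p / 1.5)      # toy fraction of devices meeting the minimum rate
    energy = p                        # energy cost grows with transmit power
    w_rate, w_cov, w_energy = WEIGHTS
    return w_rate * rate + w_cov * coverage - w_energy * energy


def step(level, action_idx):
    """Apply an action, clamp to the valid range, and return (next_level, score)."""
    nxt = min(len(POWER_LEVELS) - 1, max(0, level + ACTIONS[action_idx]))
    return nxt, reward(nxt)


def train(episodes=2000, steps=20, alpha=0.2, gamma=0.5, epsilon=0.2, seed=0):
    """Tabular Q-learning with epsilon-greedy exploration."""
    rng = random.Random(seed)
    q = [[0.0] * len(ACTIONS) for _ in POWER_LEVELS]
    for _ in range(episodes):
        s = rng.randrange(len(POWER_LEVELS))
        for _ in range(steps):
            if rng.random() < epsilon:
                a = rng.randrange(len(ACTIONS))                       # explore
            else:
                a = max(range(len(ACTIONS)), key=lambda i: q[s][i])   # exploit
            s2, r = step(s, a)
            # Standard Q-learning update toward the bootstrapped target.
            q[s][a] += alpha * (r + gamma * max(q[s2]) - q[s][a])
            s = s2
    return q


def greedy_level(q, start=0, steps=10):
    """Follow the learned greedy policy and return the level it settles on."""
    s = start
    for _ in range(steps):
        a = max(range(len(ACTIONS)), key=lambda i: q[s][i])
        s, _ = step(s, a)
    return s


if __name__ == "__main__":
    q_table = train()
    print("Learned power level:", POWER_LEVELS[greedy_level(q_table)], "W")
```

In this toy setting the learned policy settles on a middle power level: raising power further adds a little data rate but costs more energy than it is worth under the assumed weights. The system described in the article makes the same kind of trade-off across many more knobs at once, including drone positions and panel reflection settings.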

How the System Performs in Simulated Tests

The team built detailed computer simulations of a 500-by-500-meter area with multiple drones, reflecting surfaces, access points, and dozens of IoT devices. They included realistic wireless effects such as partial line-of-sight, random fading, and imperfect knowledge of the radio channel, as well as limits on drone altitude, battery energy, and total power. They then compared their learning-based scheme with several established benchmarks: two classical optimization methods and two popular deep reinforcement learning approaches. Across a wide range of scenarios, the proposed method consistently delivered higher total data throughput, covered more devices at the required minimum data rate, and used less energy. For example, compared with the best competing approach, it increased total throughput by about 15%, raised coverage by roughly 6%, and lowered energy consumption by about 5%.
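The two headline metrics, total throughput and coverage, are simple to compute from per-device data rates. The sketch below assumes that coverage means the fraction of devices at or above the minimum data rate, which matches the wording above. The function names and the sample numbers are hypothetical and are not results from the paper.

```python
def summarize(rates_mbps, min_rate_mbps):
    """Return (total throughput, fraction of devices at or above the minimum rate)."""
    total = sum(rates_mbps)
    covered = sum(1 for r in rates_mbps if r >= min_rate_mbps)
    return total, covered / len(rates_mbps)


def relative_gain(new, baseline):
    """Percentage improvement of `new` over `baseline`."""
    return 100.0 * (new - baseline) / baseline


if __name__ == "__main__":
    # Hypothetical per-device rates in Mbit/s, for illustration only.
    total, coverage = summarize([2.0, 0.5, 1.5, 3.0], min_rate_mbps=1.0)
    print(total, coverage)
    # A throughput of 115 against a baseline of 100 is a 15% gain.
    print(relative_gain(115.0, 100.0))
```

A "15% higher throughput" claim is the output of `relative_gain` applied to the summed rates of two schemes run under the same scenario. For energy, where lower is better, the same formula would give a negative number.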

Working Together: Drones and Smart Surfaces

The simulations also reveal how the different pieces of the system complement each other. When the number of drones is small, the learning algorithm leans heavily on the reflecting panels, adjusting their behavior to steer signals into shadowed areas without making the UAVs move more than necessary. As more drones become available, the learned policy begins to reposition them to open clearer paths and shorten links, while the reflecting panels fine-tune the signal paths. This division of labor allows the network to reach near-optimal performance even when hardware resources are limited, which is crucial in cost-sensitive or tightly regulated environments.

What This Means for Future Connected Worlds

In plain terms, the study shows that letting a network “learn by doing” can make airborne relays and smart walls work together more efficiently than the classical optimization and deep reinforcement learning baselines it was tested against. The combination of drones, distributed access points, and intelligent reflectors, guided by reinforcement learning, offers a path to robust, energy-aware connectivity for dense cities, remote rural areas, and disaster zones alike. While the work is based on simulations and still relies on simplified channel models, it points toward future 6G-style networks in which the very environment (buildings, drones, and infrastructure) acts as a programmable fabric that intelligently bends radio waves to keep everything connected.

Citation: Wu, H., Gu, F., Lu, H. et al. Intelligent resource management in UAV-enabled networks using cell-free communications and intelligent reflective surfaces. Sci Rep 16, 12900 (2026). https://doi.org/10.1038/s41598-026-43358-2

Keywords: UAV wireless networks, intelligent reflecting surfaces, cell-free communications, reinforcement learning, Internet of Things