Clear Sky Science

Spinal disease image segmentation technology integrating U-ResNet and shape-aware attention

Why Spine Scans Need Smarter Tools

Back pain is one of the most common reasons people visit a doctor, and modern scanners like MRI and CT can reveal the tiny bones and discs that make up the spine in remarkable detail. But turning those gray images into clear outlines of which part is bone, which part is disc, and where disease is hiding is still often done by hand, slice by slice. This paper introduces a new artificial intelligence system that can automatically trace spinal structures and common problems with greater accuracy and speed, helping radiologists and surgeons make better decisions while reducing workload.

The Challenge of Reading the Spine

The spine is a complex column of many small parts: stacked vertebrae, soft discs in between, and delicate curves that vary from the neck to the lower back. Diseases such as slipped discs, compression fractures, and scoliosis can dramatically distort this structure. Traditional computer methods struggle with these variations, and even many recent deep-learning models make mistakes at the borders between bones and discs or fail when the anatomy looks very abnormal. Manual outlining by experts is slow, inconsistent, and difficult to scale in busy hospitals, especially as more young office workers and students develop back problems.

A Tailored Network for Spinal Images

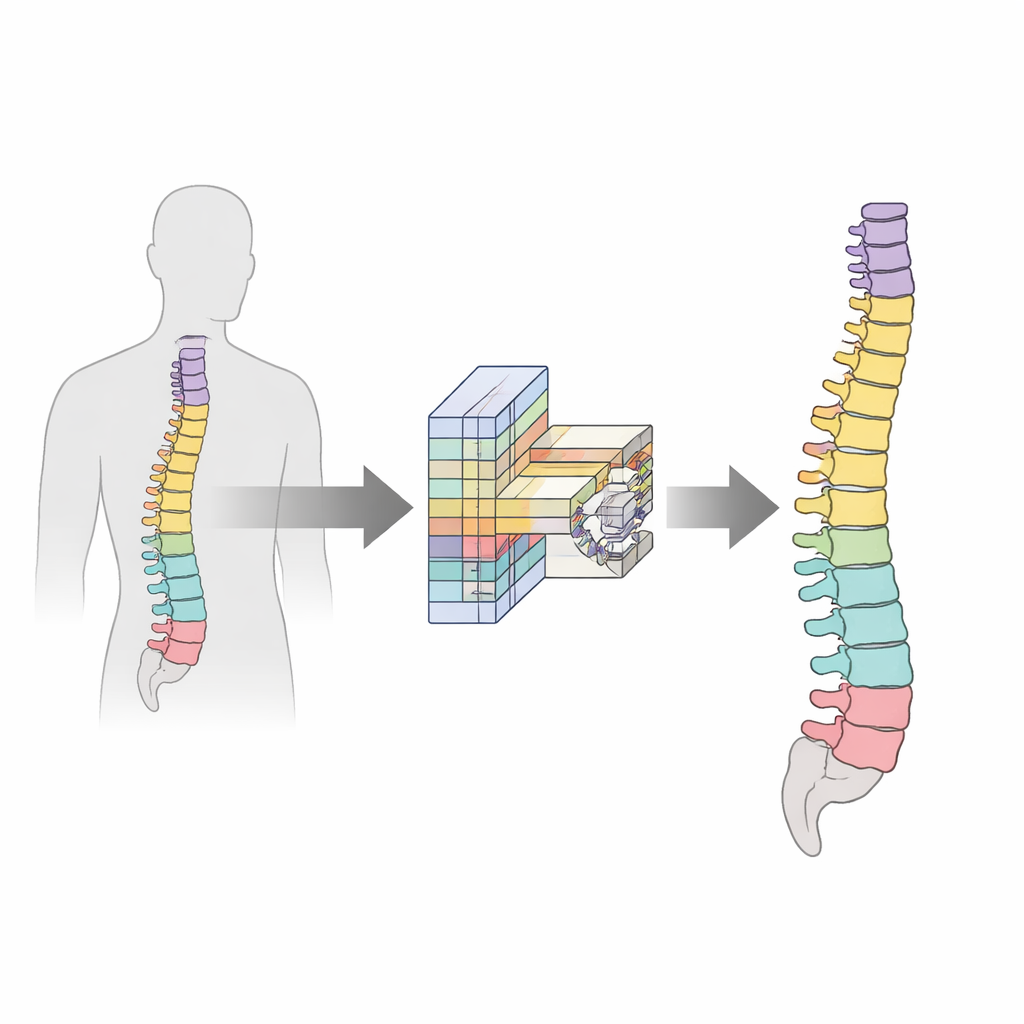

To address these issues, the authors design a spine-specific deep-learning model built on an improved version of a popular medical imaging network called U-Net. Their customized backbone, named U-ResNet, is shaped like a U: one side gradually compresses the image to capture overall context, while the other side expands it again to produce a detailed map of each structure. The new design balances depth and efficiency so it can work on high-resolution scans without requiring supercomputers. It also fuses features at multiple scales, allowing the model to pay attention both to large vertebral bodies and to smaller, thinner discs, and it normalizes information so the same network can handle both MRI and CT images.
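The U-shaped flow described above can be illustrated with a toy sketch. This is not the authors' U-ResNet: it works on a one-dimensional list instead of an image, uses fixed arithmetic instead of learned convolutions, and every name in it (`residual_block`, `downsample`, `upsample`, `toy_u_pass`) is hypothetical. It only shows the pattern of compressing, expanding, and reusing fine detail through skip connections.

```python
from typing import List

Signal = List[float]


def residual_block(x: Signal, scale: float = 0.5) -> Signal:
    """Toy residual block: output = input + scale * f(input).

    Here f is a fixed 3-point smoothing filter; a real network would learn it.
    """
    n = len(x)
    smoothed = [
        (x[max(i - 1, 0)] + x[i] + x[min(i + 1, n - 1)]) / 3.0 for i in range(n)
    ]
    return [xi + scale * si for xi, si in zip(x, smoothed)]


def downsample(x: Signal) -> Signal:
    """Encoder step: halve the resolution by averaging neighbouring pairs."""
    return [(x[i] + x[i + 1]) / 2.0 for i in range(0, len(x) - 1, 2)]


def upsample(x: Signal) -> Signal:
    """Decoder step: double the resolution by repeating each value."""
    return [v for v in x for _ in range(2)]


def toy_u_pass(x: Signal, depth: int = 2) -> Signal:
    """Run one U-shaped pass. len(x) must be divisible by 2 ** depth."""
    if len(x) % (2 ** depth) != 0:
        raise ValueError("input length must be divisible by 2 ** depth")
    skips = []
    for _ in range(depth):
        x = residual_block(x)
        skips.append(x)      # remember fine detail for the decoder
        x = downsample(x)    # compress to capture wider context
    x = residual_block(x)    # bottleneck: coarsest, most global view
    for skip in reversed(skips):
        x = upsample(x)
        # Fuse coarse context with fine detail. Addition keeps the toy simple;
        # the original U-Net concatenates feature channels instead.
        x = [a + b for a, b in zip(x, skip)]
        x = residual_block(x)
    return x


if __name__ == "__main__":
    edge = [0.0] * 4 + [1.0] * 4   # a step edge, like a bone/disc border
    print(toy_u_pass(edge))
```

The output has the same length as the input, which mirrors how a segmentation network returns one label map at the resolution of the original scan.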

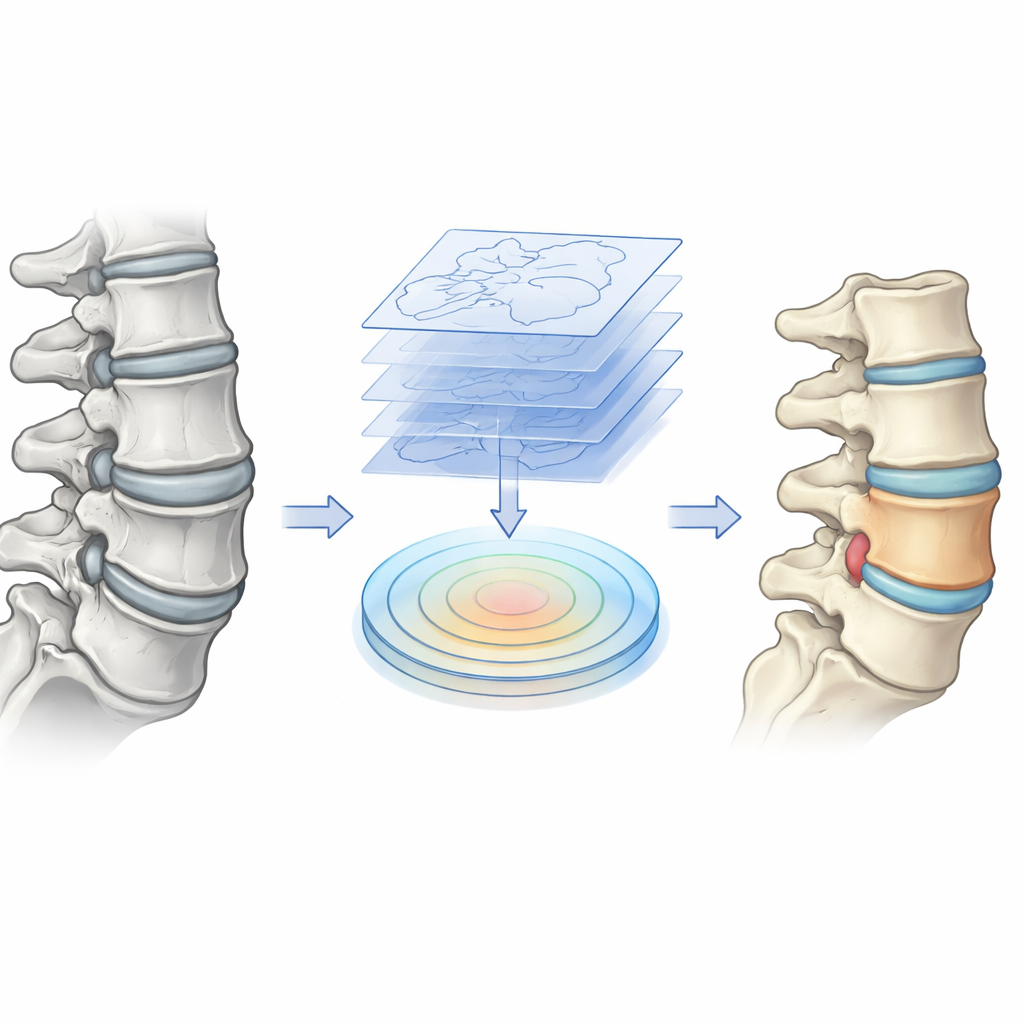

Teaching the Model to Respect Shape

A key innovation is a "shape-aware attention" module that explicitly teaches the network what a healthy spine usually looks like and how disease can bend or break those patterns. Before images reach this module, a preprocessing step extracts contour information—essentially outlines of bones and discs—from the scans. Inside the module, the network compares these shape hints with what it has learned from the image itself, strengthening signals where the two agree and adapting when disease causes deformation. This lets the system focus on true spinal structures, even when they are compressed, bulging, or curved, while suppressing distracting background tissues such as muscle and fat.
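One simple way to picture "strengthening signals where the two agree" is a gate that scales each image feature by a weight computed from both the feature and a contour hint. The sketch below is an assumption made for illustration, not the paper's module: the function name `shape_aware_gate`, the weights `w_feat` and `w_shape`, and the `bias` are all hypothetical, and a real attention module would learn its parameters from data.

```python
import math
from typing import List

Grid = List[List[float]]


def sigmoid(z: float) -> float:
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def shape_aware_gate(
    features: Grid,
    contour_prior: Grid,
    w_feat: float = 1.0,    # hypothetical weight on the image feature
    w_shape: float = 1.0,   # hypothetical weight on the contour hint
    bias: float = -1.0,     # hypothetical offset; lowers the default gate
) -> Grid:
    """Scale each feature by an attention weight in (0, 1).

    The weight grows when both the image feature and the contour hint
    are high, so regions where they agree are suppressed the least.
    """
    gated = []
    for feat_row, prior_row in zip(features, contour_prior):
        row = []
        for f, c in zip(feat_row, prior_row):
            attention = sigmoid(w_feat * f + w_shape * c + bias)
            row.append(f * attention)
        gated.append(row)
    return gated


if __name__ == "__main__":
    features = [[1.0, 1.0, 0.0]]   # two equally strong responses, one empty
    contours = [[1.0, 0.0, 0.0]]   # only the first lies on an outline
    print(shape_aware_gate(features, contours))
```

In the usage example, the first two positions have the same raw feature value, but the one supported by a contour hint keeps more of its strength after gating.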

Balancing Whole Regions and Sharp Edges

Another core contribution is a training strategy that balances two competing goals: capturing the full extent of each structure and drawing its edges sharply. The authors design a combined loss function—essentially a coaching rule—that tells the model how to improve. One part emphasizes getting the entire region of a vertebra or disc correct, which is vital for measuring volumes and degeneration. Another part focuses on the accuracy of boundaries, which matters when planning surgery or tracking the progression of disease. The model automatically adjusts how much it cares about each part depending on the size and clarity of the structure, and it adds a volume constraint so that numerical measurements match what doctors would expect in practice.
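This summary does not give the loss formula, so the expression below is only a generic sketch of how such a combined objective is often written. All symbols are assumptions introduced here: $\lambda$ is a weight between 0 and 1 that shifts emphasis between region and boundary terms, $\mu$ scales the volume constraint, and $V_{\text{pred}}$ and $V_{\text{ref}}$ are the predicted and reference volumes of a structure.

$$
\mathcal{L} = \lambda\,\mathcal{L}_{\text{region}} + (1-\lambda)\,\mathcal{L}_{\text{boundary}} + \mu\,\mathcal{L}_{\text{volume}},
\qquad
\mathcal{L}_{\text{volume}} = \frac{\lvert V_{\text{pred}} - V_{\text{ref}} \rvert}{V_{\text{ref}}}
$$

Under this reading, the automatic adjustment described above would correspond to choosing $\lambda$ per structure, for example leaning toward the boundary term for small or thin structures such as discs.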

Putting the System to the Test

The researchers evaluate their approach on two sizable datasets: lumbar spine MRI scans, rich in soft-tissue detail, and a whole-spine CT collection that spans the neck, chest, and lower back across a range of conditions, including scoliosis and compression fractures. Compared with nine state-of-the-art methods, their model achieves the highest scores in nearly every category, from overall segmentation quality and boundary precision to grading how worn down an intervertebral disc has become. It does this while using fewer computations and parameters than many rivals, making it better suited to run on standard hospital hardware or even lower-power devices.
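The summary does not name the metrics used. A common measure of overall segmentation quality is the Dice coefficient, which scores the overlap between a predicted mask and an expert's reference mask from 0 (no overlap) to 1 (identical). The sketch below assumes masks flattened into lists of 0s and 1s; the function name `dice_score` is hypothetical.

```python
from typing import Sequence


def dice_score(pred: Sequence[int], ref: Sequence[int]) -> float:
    """Dice coefficient: 2 * |A ∩ B| / (|A| + |B|) for two binary masks."""
    if len(pred) != len(ref):
        raise ValueError("masks must have the same number of pixels")
    intersection = sum(1 for p, r in zip(pred, ref) if p and r)
    total = sum(1 for p in pred if p) + sum(1 for r in ref if r)
    if total == 0:
        # Both masks are empty: treat this as perfect agreement.
        return 1.0
    return 2.0 * intersection / total


if __name__ == "__main__":
    predicted = [1, 1, 0, 0]   # the model marks two pixels as disc
    reference = [1, 0, 0, 0]   # the expert marks only one
    print(round(dice_score(predicted, reference), 3))  # prints 0.667
```

Because Dice rewards overlap of whole regions, it is usually reported alongside a separate boundary measure when edge accuracy matters.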

What This Means for Patients and Clinicians

In simple terms, this work shows that teaching AI to understand both the shapes and the boundaries of spinal structures can make automated readings of spine scans more reliable and clinically useful. The model not only traces vertebrae and discs more accurately, including those that are small or badly deformed, but also runs fast enough to fit into real-world workflows. It still needs to be tested on rarer spinal diseases and across more hospitals, but it is a promising step toward routine, high-precision computer assistance in diagnosing back problems, planning surgery, and monitoring recovery.

Citation: Zhao, D., Qin, R., Chai, Z. et al. Spinal disease image segmentation technology integrating U-ResNet and shape-aware attention. Sci Rep 16, 12465 (2026). https://doi.org/10.1038/s41598-026-42870-9

Keywords: spinal imaging, medical image segmentation, deep learning, MRI and CT spine, back pain diagnosis