Clear Sky Science

Evaluating AI models for food and alcohol advertisement classification against human benchmarks

Why Tracking Online Ads Matters

Every day, people scroll past countless ads for food and alcohol on social media, often without noticing how strongly these messages can shape what we eat and drink. Health agencies and researchers want to keep tabs on how heavily unhealthy products are promoted, especially to children and teenagers, but manually checking thousands of ads is slow and expensive. This study asks a timely question: can modern artificial intelligence systems do this monitoring work as reliably as people, and if so, for which kinds of ad features can we already trust them?

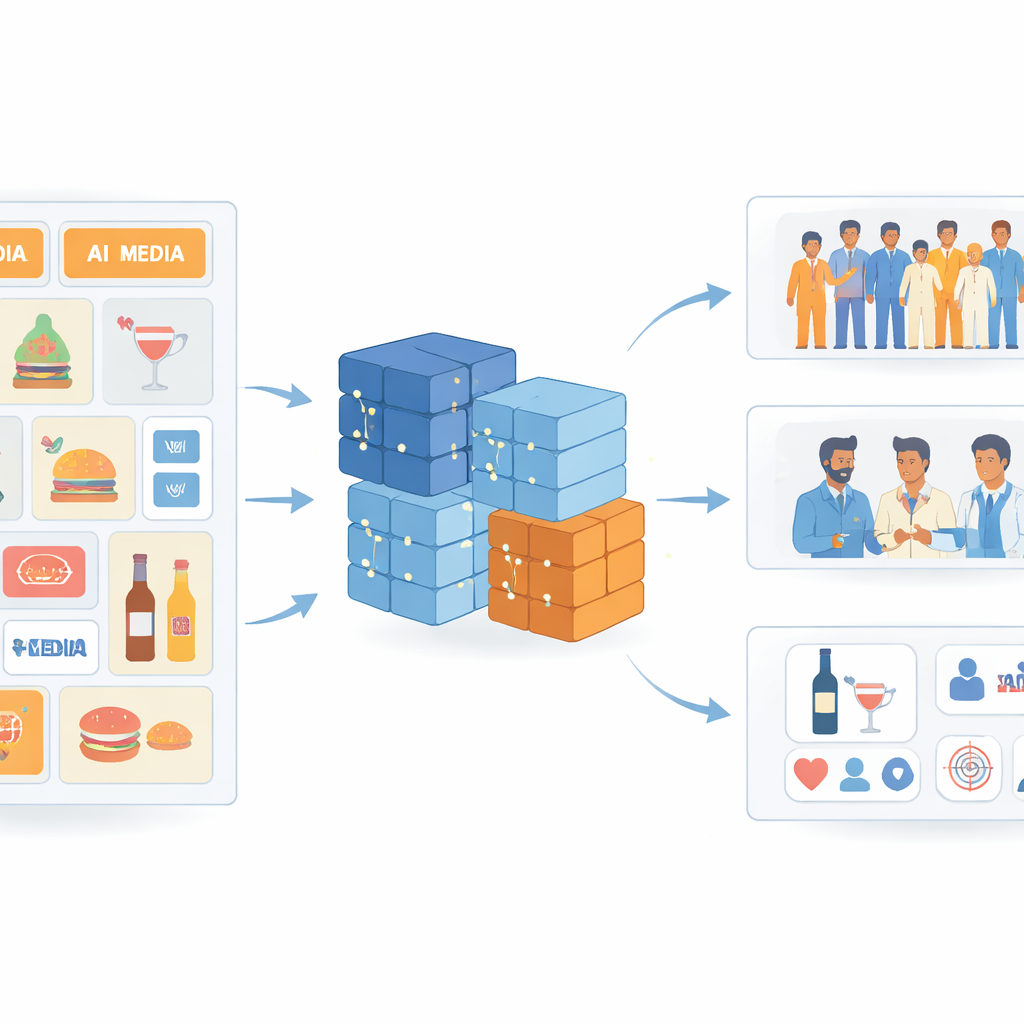

How the Study Looked at Real-World Ads

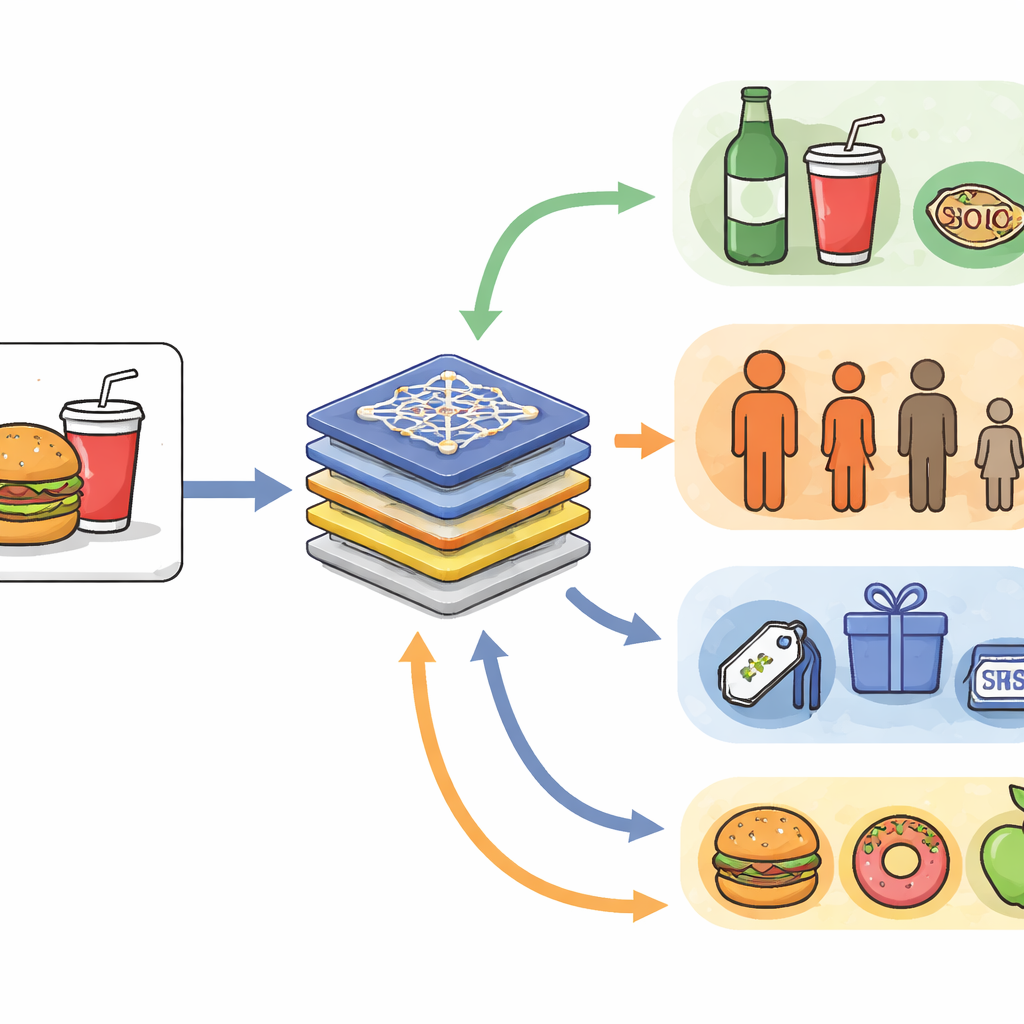

The researchers gathered 1,000 Facebook ads from 77 major Belgian food, drink, and alcohol brands, including both the images and their captions. Around 600 crowd workers drawn from the general public, three trained dieticians, and four advanced AI systems all reviewed the same ads. For each ad, they answered questions such as whether alcohol was present, who the ad seemed to target (children, adolescents, or adults), what type of advertiser it came from, and which sales tactics or food categories appeared. Some questions had only one possible answer, such as a yes–no decision on alcohol. Others allowed multiple answers, such as several different marketing tactics or several food types in the same ad. This design let the team compare AI systems, crowd workers, and experts head-to-head.

Where AI Matches Human Judgment

For simple, single-answer questions, the AI systems, especially GPT-4o and Qwen, performed remarkably well. When deciding whether an ad contained alcohol, agreement between these models and the dieticians exceeded 90 percent and was nearly indistinguishable from the agreement among the dieticians themselves. For classifying who the ad mainly targeted and what type of advertiser it came from, the models again reached agreement levels that fell within the natural variation seen between different human coders. In other words, for clear-cut features such as “alcohol or not” and straightforward audience or advertiser type, the best AI systems already operate at roughly human level.
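The phrase “within the natural variation seen between different human coders” can be made concrete with a small sketch. It assumes plain pairwise percent agreement (the share of ads on which two coders give the same answer), which may not be the statistic the authors actually used, and a hypothetical rule that calls a model human-level when its average agreement with the experts falls inside the range of expert-to-expert agreement. The coder names and the ten yes–no alcohol labels are invented for illustration, not data from the study.

```python
from itertools import combinations


def percent_agreement(a, b):
    """Share of ads on which two coders gave the same single answer."""
    if len(a) != len(b) or not a:
        raise ValueError("label lists must be non-empty and equal in length")
    return sum(x == y for x, y in zip(a, b)) / len(a)


def human_range(coders):
    """Lowest and highest pairwise agreement among the human experts."""
    scores = [percent_agreement(coders[p], coders[q])
              for p, q in combinations(coders, 2)]
    return min(scores), max(scores)


def within_human_range(model_labels, coders):
    """Hypothetical rule: mean model-to-expert agreement lies inside the expert range."""
    low, high = human_range(coders)
    mean_ai = sum(percent_agreement(model_labels, labels)
                  for labels in coders.values()) / len(coders)
    return low <= mean_ai <= high, mean_ai, (low, high)


# Invented "contains alcohol?" labels for ten ads (1 = yes, 0 = no).
dieticians = {
    "dietician_A": [1, 0, 0, 1, 1, 0, 0, 0, 1, 0],
    "dietician_B": [1, 0, 0, 1, 1, 0, 0, 0, 1, 1],
    "dietician_C": [1, 0, 0, 1, 0, 0, 0, 0, 1, 0],
}
model = [1, 0, 0, 1, 1, 0, 1, 0, 1, 0]  # invented output of one AI model

ok, mean_ai, (low, high) = within_human_range(model, dieticians)
print(f"expert range {low:.2f}-{high:.2f}, model mean {mean_ai:.2f}, within: {ok}")
```

Comparing against the spread of expert pairs, rather than against a single “gold” coder, reflects the article's framing: a model counts as human-level if it disagrees with the experts no more than they disagree with each other.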

Where Things Get Messy and Disagreeable

Performance dropped for more complicated, multi-answer questions. When coders had to identify every premium offer present (discounts, contests, loyalty schemes), marketing strategies (events, characters, endorsements), or detailed food categories (such as snacks, ready-made meals, or dairy), agreement was noticeably lower for everyone, humans and AIs alike. Even the dieticians, who are nutrition specialists, often disagreed with one another, especially on abstract marketing tactics. For some marketing-strategy labels, pairwise agreement between dieticians dropped to extremely low levels, which shows that the task itself is hard and partly subjective. In this setting, AI did not clearly lag behind humans; instead, it behaved much like an additional, somewhat noisy human rater.
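Multi-answer questions need a looser notion of agreement, because two coders can overlap partly rather than match or mismatch outright. One common option, assumed here rather than taken from the paper, is the Jaccard index of the two coders' label sets for each ad, averaged over ads; a stricter option is exact set matching. The four ads and their marketing-strategy labels below are invented.

```python
def jaccard(a, b):
    """Overlap of two label sets, |A & B| / |A | B|.

    Two empty sets (neither coder chose any label) count as full agreement.
    """
    a, b = set(a), set(b)
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def _check(coder_1, coder_2):
    if len(coder_1) != len(coder_2) or not coder_1:
        raise ValueError("annotation lists must be non-empty and equal in length")


def mean_jaccard(coder_1, coder_2):
    """Average per-ad Jaccard overlap between two coders."""
    _check(coder_1, coder_2)
    return sum(jaccard(x, y) for x, y in zip(coder_1, coder_2)) / len(coder_1)


def exact_match(coder_1, coder_2):
    """Share of ads on which both coders chose exactly the same label set."""
    _check(coder_1, coder_2)
    return sum(set(x) == set(y) for x, y in zip(coder_1, coder_2)) / len(coder_1)


# Invented marketing-strategy labels for four ads.
coder_1 = [{"event"}, {"character", "endorsement"}, set(), {"event", "endorsement"}]
coder_2 = [{"event"}, {"character"}, {"event"}, {"endorsement"}]

print("exact match:", exact_match(coder_1, coder_2))
print("mean Jaccard:", mean_jaccard(coder_1, coder_2))
```

Exact matching credits only the first ad, while the Jaccard average also gives partial credit for overlapping choices. Under either measure, each extra or missing label pulls the score down, which helps explain why agreement on multi-answer questions tends to be lower than on yes–no questions.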

Hidden Biases in How AI Sees Ads

Looking beyond overall scores, the authors examined whether the models systematically over- or under-detected specific labels. Across questions, all the AI models were reluctant to choose options meaning “none” or “not applicable,” tending instead to assign at least one concrete feature. This creates a risk of overstating how often special offers or persuasive tactics are present. Some models, such as Gemma and Qwen, showed stronger biases than others; for instance, they frequently flagged events and ready-made meals even when human coders did not. GPT-4o generally showed milder, more conservative patterns but still had blind spots, for example with discount offers and with celebrity or charity endorsements. These systematic quirks mean that relying on a single AI system could skew estimates of how much people are exposed to particular marketing tactics or food types.
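One simple way to surface over- and under-detection of the kind described above is to compare, label by label, how often a model assigns each label with how often a human reference does. The sketch assumes that difference in prevalence as the bias measure (the paper's own analysis may use a different one) and uses invented annotations in which the model avoids “none” and over-flags events.

```python
from collections import Counter


def label_rates(annotations):
    """Fraction of ads on which each label was assigned (counted once per ad)."""
    counts = Counter(label for labels in annotations for label in set(labels))
    return {label: c / len(annotations) for label, c in counts.items()}


def detection_bias(model_ann, human_ann):
    """Model rate minus human rate per label: > 0 over-detects, < 0 under-detects."""
    if len(model_ann) != len(human_ann) or not model_ann:
        raise ValueError("annotation lists must be non-empty and equal in length")
    m, h = label_rates(model_ann), label_rates(human_ann)
    return {label: m.get(label, 0.0) - h.get(label, 0.0)
            for label in sorted(set(m) | set(h))}


# Invented marketing-strategy annotations for four ads.
human = [{"none"}, {"character"}, {"none"}, {"event"}]
model = [{"event"}, {"character"}, {"event"}, {"event"}]

for label, diff in detection_bias(model, human).items():
    print(f"{label:>10}: {diff:+.2f}")
```

In this toy case, the model's avoidance of “none” shows up as a negative score for that option and a matching positive score for “event,” the same pattern that could inflate exposure estimates if one model's labels were used unchecked.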

Guidelines for Using AI in the Real World

To translate their results into practice, the authors propose a three-tier strategy. The first tier covers relatively simple single-answer tasks, such as detecting alcohol, identifying the basic ad type, or determining the main target group; these are ready for large-scale automation, with AI taking over much of the manual work after a small local validation check. The second tier covers more complex, multi-answer questions about offers, strategies, and detailed food categories. Here, AI can serve as a helpful assistant that pre-screens ads, suggests labels, or guides human reviewers, but human oversight and clearer label definitions remain crucial. The third tier includes more intricate or untested areas, such as other harmful substances or fine-grained nutrition details, where AI outputs should for now be treated as exploratory rather than reliable.

What This Means for Consumers and Policy

In plain terms, the study shows that today’s AI can already help public health agencies and researchers monitor straightforward aspects of food and alcohol advertising at the scale of modern social media. However, when it comes to subtle sales tactics and complex food categories, humans and machines alike still struggle to agree, and AI models carry recognizable biases. The authors conclude that carefully combining AI with human expertise, using AI where it is strongest and humans where nuance and interpretation matter most, offers the most promising path toward fair and effective monitoring of how unhealthy products are promoted online.

Citation: Gitu, PA., Cerina, R., Grigoriev, A. et al. Evaluating AI models for food and alcohol advertisement classification against human benchmarks. Sci Rep 16, 13058 (2026). https://doi.org/10.1038/s41598-026-42426-x

Keywords: food advertising, alcohol marketing, artificial intelligence, social media, public health policy