Clear Sky Science

Inspection of pollination transfer and success in coffee flowering detection using intersection over union based cascade RCNN in a vision environment

How Coffee Flowers Shape Your Morning Brew

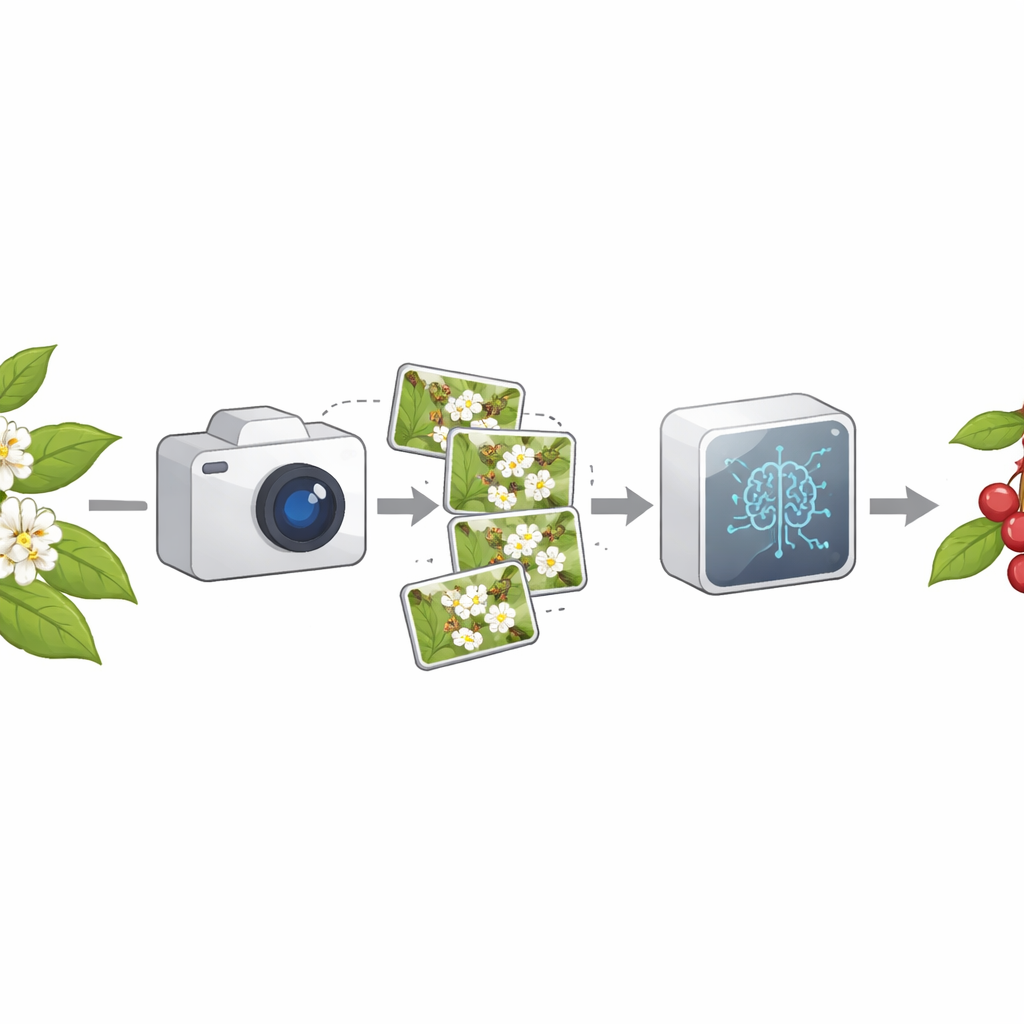

Every cup of coffee begins as a brief burst of white blossoms on a tropical shrub. These flowers open for only a short time, and whether or not they receive enough pollen determines how many ripe red cherries – and ultimately beans – a plant will produce. This study shows how researchers are using cameras and artificial intelligence to watch coffee flowers in fine detail, measuring when and how well pollination happens so farmers can safeguard yields in a changing climate.

Watching Flowers Instead of Guessing

Traditional pollination studies often relied on people counting pollen grains or visiting flowers in the field, an approach that is slow, subjective, and liable to disturb the very flowers being observed. Coffee flowers change quickly from tight buds to fully open blooms and then fade, so these methods miss much of the action. The authors argue that farmers and scientists need a way to monitor pollination automatically, over many plants and in real time, to understand how weather, pests, and landscape changes affect coffee productivity. Vision-based systems, using fixed or mobile cameras, can provide continuous, high‑resolution images without touching the plants, but they must be smart enough to pick out tiny structures like pollen grains against a noisy outdoor background.

Teaching Computers to See Coffee Flowers

The team built a machine vision pipeline they call IoU‑AI, tailored specifically to coffee flowers. They collected more than a thousand high‑resolution images from plantations in Coorg, India, spanning the full journey from bud formation to full bloom, pollen transfer, and successful fruit set. Experts in plant biology carefully drew boxes around each important floral part – the anthers that release pollen, the stigma that receives it, and the petals and surrounding structures – creating a detailed teaching set for the computer. These labeled images were used to train a deep‑learning model known as a cascade R‑CNN, a type of object detector that proposes regions of interest and then refines them in several stages to reduce both missed flowers and spurious detections.

Measuring Contact Between Pollen and Stigma

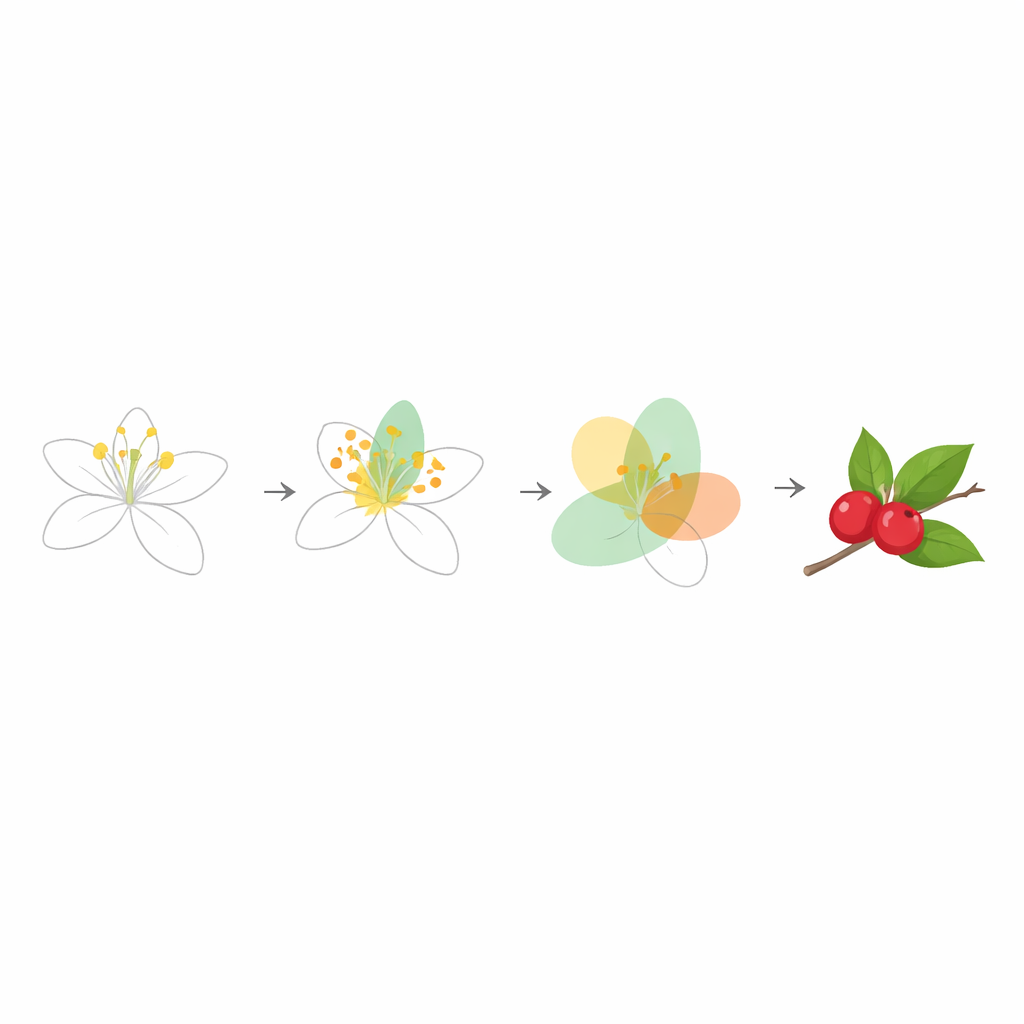

What makes IoU‑AI distinctive is that it does not stop at simply finding flowers; it also evaluates how well pollination is likely to succeed. The system uses a metric called "intersection over union" – originally designed to judge how well a computer’s predicted box matches a human‑drawn box – and reinterprets it as a biological measure. One region marks where pollen grains are detected, and another marks the receptive surface of the stigma. Their overlapping area, relative to the combined area of both, serves as a score of how much pollen actually touches the right target. By tuning this overlap threshold, the model balances the risk of counting noise or dust as pollen against the risk of overlooking genuine pollen–stigma contact events.
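To make the overlap score concrete, here is a minimal Python sketch of intersection over union for two axis-aligned boxes. It is an illustration of the general metric, not the authors' code: the `Box` type, the pixel coordinates, and the `CONTACT_THRESHOLD` value of 0.1 are all hypothetical, since the summary does not state the threshold the study used.

```python
from typing import NamedTuple


class Box(NamedTuple):
    """Axis-aligned box in pixel coordinates (hypothetical layout)."""
    x1: float
    y1: float
    x2: float
    y2: float


def area(b: Box) -> float:
    # Width times height, clamped so malformed boxes count as empty.
    return max(0.0, b.x2 - b.x1) * max(0.0, b.y2 - b.y1)


def iou(a: Box, b: Box) -> float:
    # Corners of the rectangle shared by both boxes (may be empty).
    ix1, iy1 = max(a.x1, b.x1), max(a.y1, b.y1)
    ix2, iy2 = min(a.x2, b.x2), min(a.y2, b.y2)
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = area(a) + area(b) - inter
    return inter / union if union > 0 else 0.0


# Illustrative regions only: one for detected pollen, one for the stigma.
pollen_region = Box(10, 10, 30, 30)
stigma_region = Box(20, 20, 40, 40)

CONTACT_THRESHOLD = 0.1  # assumed value, chosen only for this example

score = iou(pollen_region, stigma_region)
is_contact = score >= CONTACT_THRESHOLD
print(f"overlap score = {score:.3f}, counted as contact: {is_contact}")
```

In this toy case the shared area is 100 square pixels and the combined area is 700, so the score is about 0.143. Raising the threshold above that value would reject this pair as a contact event; lowering it would accept pairs with even less overlap.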

From Bud to Fruit: Tracking Pollination Success

Using this overlap‑based approach, the researchers could follow individual buds as they opened into flowers, received pollen, and progressed toward successful pollination. They report that, at an intermediate overlap threshold, the system achieves a strong blend of precision and sensitivity (recall) when judging flowering, pollen transfer, and pollen success. Compared with widely used detectors such as SSD and YOLO, their cascade R‑CNN combined with IoU‑based filtering proved better at spotting delicate features like pollen grains and the narrow stigma surface. Overall flower detection accuracy ranged from roughly the mid‑90s to the mid‑80s in percentage terms, depending on the flowering stage, with similarly high scores for recall and precision, indicating that the system is both reliable and consistent under realistic field conditions.
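The trade-off behind an "intermediate" threshold can be sketched with a few lines of Python. The overlap scores and ground-truth labels below are invented purely for illustration and are not results from the study; `precision_recall` is a hypothetical helper, not part of the authors' pipeline.

```python
def precision_recall(scores, labels, threshold):
    """Precision and recall when scores >= threshold are called 'contact'."""
    pairs = list(zip(scores, labels))
    tp = sum(1 for s, y in pairs if s >= threshold and y)      # true contacts found
    fp = sum(1 for s, y in pairs if s >= threshold and not y)  # noise counted as contact
    fn = sum(1 for s, y in pairs if s < threshold and y)       # true contacts missed
    precision = tp / (tp + fp) if (tp + fp) else 1.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


# Made-up overlap scores and whether each was a genuine contact event.
toy_scores = [0.9, 0.7, 0.6, 0.4, 0.3, 0.1]
toy_labels = [True, True, False, True, False, False]

for threshold in (0.2, 0.5, 0.8):
    p, r = precision_recall(toy_scores, toy_labels, threshold)
    print(f"threshold={threshold:.1f}  precision={p:.2f}  recall={r:.2f}")
```

With these toy numbers, a low threshold catches every genuine contact but admits more false alarms, a high threshold does the opposite, and a middle value balances the two, which is the kind of compromise the paragraph above describes.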

Why This Matters for Coffee and Beyond

For non‑specialists, the take‑home message is that this work turns a general computer‑vision tool into a kind of automated pollination inspector. Instead of just counting how many grains of pollen appear in an image, IoU‑AI pays attention to where they land relative to the flower’s receptive surface, which is what really determines whether a cherry will form. In practical terms, such a system could help growers spot fields or varieties with poor pollen contact early in the season, guide targeted measures like supplemental pollination, and evaluate how weather extremes or pollinator declines are affecting crop health. The same strategy – combining detailed imaging with overlap‑based measures of contact between biological structures – could be adapted to many other flowering crops, making smarter, data‑driven agriculture a realistic goal.

Citation: Sivasubramanian, S., Thanarajan, T., Selvanarayanan, R. et al. Inspection of pollination transfer and success in coffee flowering detection using intersection over union based cascade RCNN in a vision environment. Sci Rep 16, 11554 (2026). https://doi.org/10.1038/s41598-026-42347-9

Keywords: coffee pollination, computer vision in agriculture, deep learning, flowering phenology, crop yield monitoring