Clear Sky Science

Threshold-based artefact correction methods influence heart rate variability measurements in individuals with type 2 diabetes mellitus

Why tiny changes in data cleaning matter

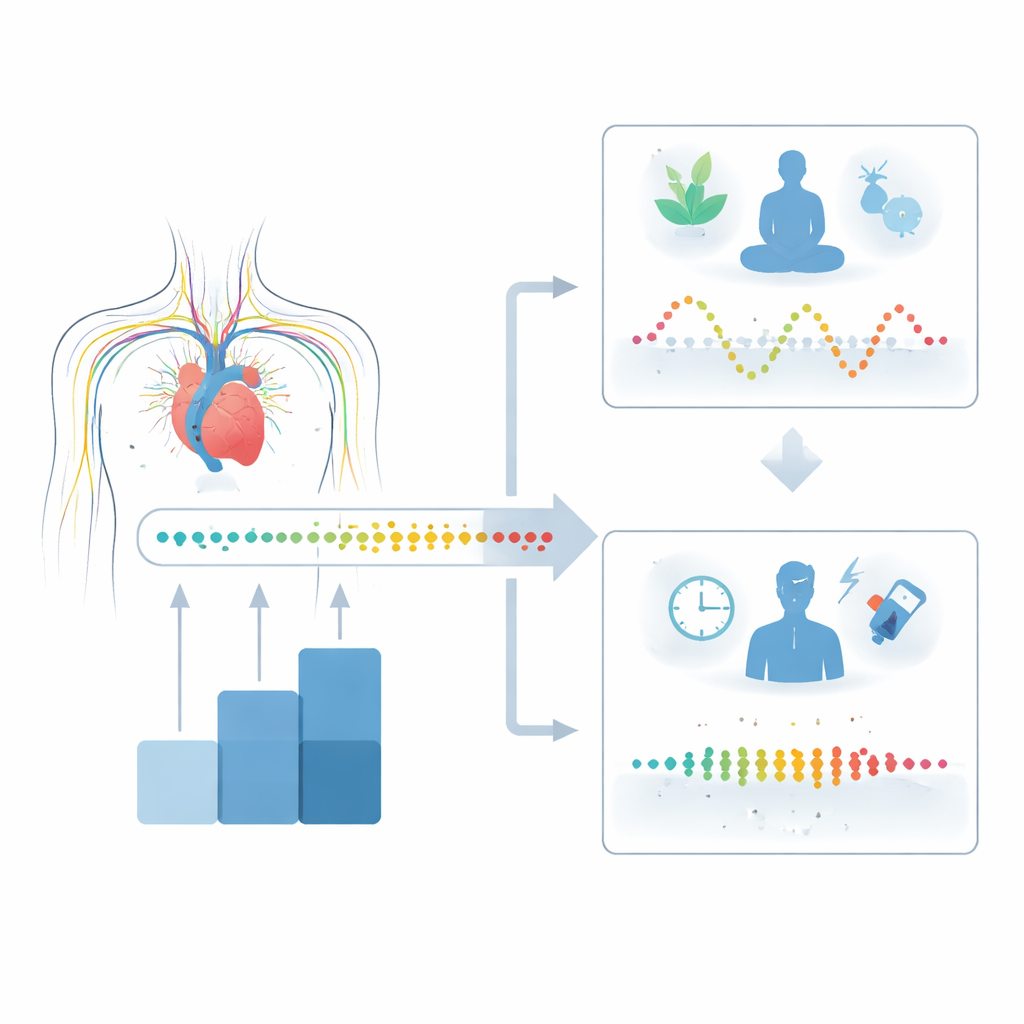

Doctors increasingly use subtle variations in heartbeat timing, known as heart rate variability, to gauge how well the nervous system is controlling the heart. This is especially important in people with type 2 diabetes, who face a higher risk of silent heart damage. But those heartbeat recordings are never perfectly clean: devices miss beats, people move, and electrical noise sneaks in. This study shows that the way we "clean up" those recordings on a computer can itself change the results in a meaningful way—and may even mislead doctors and researchers if they are not careful.

Watching the heart’s rhythm in diabetes

Type 2 diabetes affects hundreds of millions of people worldwide and is closely tied to heart and blood vessel disease. Long before chest pain or fainting spells appear, the nerves that control the heart may start to fail, a problem called cardiac autonomic neuropathy. Heart rate variability (HRV) is a simple, noninvasive way to spot early signs of this nerve damage by measuring how much the time between heartbeats naturally speeds up and slows down over a few minutes. In people with diabetes, this variation is often reduced, signaling that the heart is losing some of its flexible response to stress and rest.
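To make "how much the time between heartbeats varies" concrete, here is a minimal Python sketch of two widely used HRV indices, SDNN and RMSSD. The summary above does not say which indices the study reported, so these two are offered only as familiar examples, and the interval values are invented for illustration rather than taken from the study.

```python
import math
import statistics


def sdnn(rr_ms):
    """Standard deviation of the R-R intervals (ms): overall variability."""
    return statistics.stdev(rr_ms)


def rmssd(rr_ms):
    """Root mean square of successive differences (ms): beat-to-beat variability."""
    diffs = [b - a for a, b in zip(rr_ms, rr_ms[1:])]
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))


# Hypothetical R-R intervals in milliseconds (illustrative values, not study data).
flexible = [810, 850, 790, 870, 800, 860, 780, 840]  # rhythm that speeds up and slows down
rigid = [820, 824, 818, 822, 819, 823, 821, 820]     # rhythm that barely changes

for label, series in (("flexible", flexible), ("rigid", rigid)):
    print(f"{label}: SDNN={sdnn(series):.1f} ms, RMSSD={rmssd(series):.1f} ms")
```

The "rigid" series yields smaller values on both indices, which is the kind of reduced variability the paragraph above describes.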

From raw beats to computer-ready data

In this study, 52 adults with type 2 diabetes visited a research center, where their heartbeat was recorded for several minutes while they rested quietly on their backs in a temperature-controlled room. The team used a chest strap heart monitor to collect the raw series of R–R intervals, the short time gaps between consecutive heartbeats. These recordings were then analyzed with Kubios, a popular HRV software program that offers several built-in "filters" to detect and correct suspicious beats. The filters range from no correction at all to a very strict setting that aggressively flags and replaces any interval that looks unusual compared with its neighbors.
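The idea of a threshold filter can be sketched in a few lines of Python. This is a simplified illustration, not the software's actual implementation: each interval is compared with the median of its neighbors and flagged if it differs by more than a set threshold. The level names and threshold values in `THRESHOLDS_S`, the five-beat window, and the linear interpolation used to replace flagged beats are all assumptions made for this sketch and should be checked against the Kubios documentation.

```python
import statistics

# Assumed threshold (in seconds) for each filter level; illustrative only.
THRESHOLDS_S = {
    "very low": 0.45,
    "low": 0.35,
    "medium": 0.25,
    "strong": 0.15,
    "very strong": 0.05,
}


def local_median(rr_s, i, window=5):
    """Median of the intervals in a window centred on index i (shorter at the edges)."""
    half = window // 2
    return statistics.median(rr_s[max(0, i - half): i + half + 1])


def correct_rr(rr_s, threshold_s, window=5):
    """Flag intervals far from their local median and replace them.

    Replacement is linear interpolation between the nearest unflagged
    neighbours, a simplification chosen for brevity.
    Returns (corrected_series, flags).
    """
    flags = [abs(x - local_median(rr_s, i, window)) > threshold_s
             for i, x in enumerate(rr_s)]
    good = [i for i, flagged in enumerate(flags) if not flagged]
    corrected = list(rr_s)
    if not good:  # nothing trustworthy to interpolate from
        return corrected, flags
    for i, flagged in enumerate(flags):
        if not flagged:
            continue
        left = max((g for g in good if g < i), default=None)
        right = min((g for g in good if g > i), default=None)
        if left is None:
            corrected[i] = rr_s[right]
        elif right is None:
            corrected[i] = rr_s[left]
        else:
            weight = (i - left) / (right - left)
            corrected[i] = rr_s[left] + weight * (rr_s[right] - rr_s[left])
    return corrected, flags


# Hypothetical recording (seconds) with one missed beat, seen as a doubled interval.
rr_example = [0.80, 0.82, 0.81, 1.62, 0.79, 0.83, 0.80]
corrected, flags = correct_rr(rr_example, THRESHOLDS_S["medium"])
print(flags)
print([round(x, 3) for x in corrected])
```

In this toy recording only the doubled interval is flagged and replaced, which is the behavior one hopes for from a gentle setting applied to a clean signal.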

Turning up the filter changes the story

To see how much these filters matter, the researchers took the same five-minute, high-quality segment of data from each person and re-ran the analysis using every filter level in turn. At the gentlest settings, almost no beats were altered, and HRV results stayed essentially the same. But under the most restrictive filter, nearly one in ten beats were changed on average, and in some people, up to half of the beats were corrected by the software. This heavy-handed cleaning shifted multiple HRV measures across the board—those that reflect overall variability, the balance between "fight-or-flight" and "rest-and-digest" activity, and more complex, nonlinear patterns in the heartbeat signal.
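The same kind of comparison can be tried on a toy signal. The sketch below runs one synthetic, artifact-free series through every filter level and reports the share of beats changed and the resulting RMSSD. Everything in it is an assumption made for illustration: the threshold values, the five-beat median window, the choice to replace flagged beats with the local median, and the sine-wave "recording", which stands in for a healthy rhythm with large natural swings.

```python
import math
import statistics

# Assumed threshold (in seconds) for each filter level; None means no correction.
LEVELS = {
    "none": None,
    "very low": 0.45,
    "low": 0.35,
    "medium": 0.25,
    "strong": 0.15,
    "very strong": 0.05,
}


def local_median(rr_s, i, window=5):
    """Median of the intervals in a window centred on index i (shorter at the edges)."""
    half = window // 2
    return statistics.median(rr_s[max(0, i - half): i + half + 1])


def apply_filter(rr_s, threshold_s):
    """Return (corrected series, fraction of beats changed).

    Flagged beats are replaced by their local median, a simplification.
    """
    if threshold_s is None:
        return list(rr_s), 0.0
    medians = [local_median(rr_s, i) for i in range(len(rr_s))]
    flags = [abs(x - m) > threshold_s for x, m in zip(rr_s, medians)]
    corrected = [m if f else x for x, m, f in zip(rr_s, medians, flags)]
    return corrected, sum(flags) / len(rr_s)


def rmssd_ms(rr_s):
    """Root mean square of successive differences, in milliseconds."""
    diffs = [(b - a) * 1000.0 for a, b in zip(rr_s, rr_s[1:])]
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))


# Synthetic, artifact-free series (seconds): genuine variability only, no noise.
rr = [0.80 + 0.15 * math.sin(0.9 * i) for i in range(60)]

results = {}
for name, threshold in LEVELS.items():
    corrected, fraction = apply_filter(rr, threshold)
    results[name] = (fraction, rmssd_ms(corrected))
    print(f"{name:>11}: {fraction:6.1%} of beats changed, RMSSD = {results[name][1]:.1f} ms")
```

On this series the gentler levels leave every beat alone, while the strictest level starts rewriting beats even though nothing in the signal is an artifact. That is the toy-scale version of the over-correction the study reports.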

Why over-cleaning can mislead

These changes are not just technical details. HRV values are used to judge whether a person’s heart is under too much stress, to estimate how much the calming branch of the nervous system is working, and even to predict future heart events. If a filter quietly reshapes the underlying signal—smoothing out genuine irregularities along with real noise—it can make the heart look healthier or sicker than it truly is. In this group with type 2 diabetes, the strictest filter made indices linked to both calming and stress responses look markedly different, even though the actual heartbeat recordings were already carefully selected to be stable and of good quality.

What this means for patients and studies

The authors conclude that very aggressive cleaning of heartbeat data can distort HRV results in people with type 2 diabetes, leading to overestimation or underestimation of nerve-related heart problems. They do not claim there is a single perfect filter, but they warn against using the strictest option by default. Instead, they argue that researchers and clinicians should favor minimal or gentle correction when the original signal is good, clearly report their settings, and work toward shared standards. For patients, the message is indirect but important: the numbers you see on a test report depend not only on your heart, but also on how the data are processed—and consistent, well-chosen methods are key to making those numbers truly trustworthy.

Citation: Bassi-Dibai, D., Santos-de-Araújo, A.D., Rocha, D.S. et al. Threshold-based artefact correction methods influence heart rate variability measurements in individuals with type 2 diabetes mellitus. Sci Rep 16, 11341 (2026). https://doi.org/10.1038/s41598-026-42255-y

Keywords: type 2 diabetes, heart rate variability, cardiac autonomic neuropathy, data preprocessing, Kubios filters