Clear Sky Science

A low-latency deep learning framework for volcanic ash cloud nowcasting using geostationary satellite imagery

Why fast views of drifting ash clouds matter

When a volcano erupts or a nuclear plant releases radioactive dust, dangerous particles can be swept into the air and carried hundreds or even thousands of kilometers by the wind. These clouds threaten aircraft, power grids, and human health, and officials have only minutes to decide whether to reroute flights or issue evacuation advice. This study presents a way to turn rapid-fire satellite images into equally rapid short-term forecasts of where such clouds are heading, using an artificial intelligence model that runs on a compact, low-cost computer.

Watching the sky from a fixed vantage point

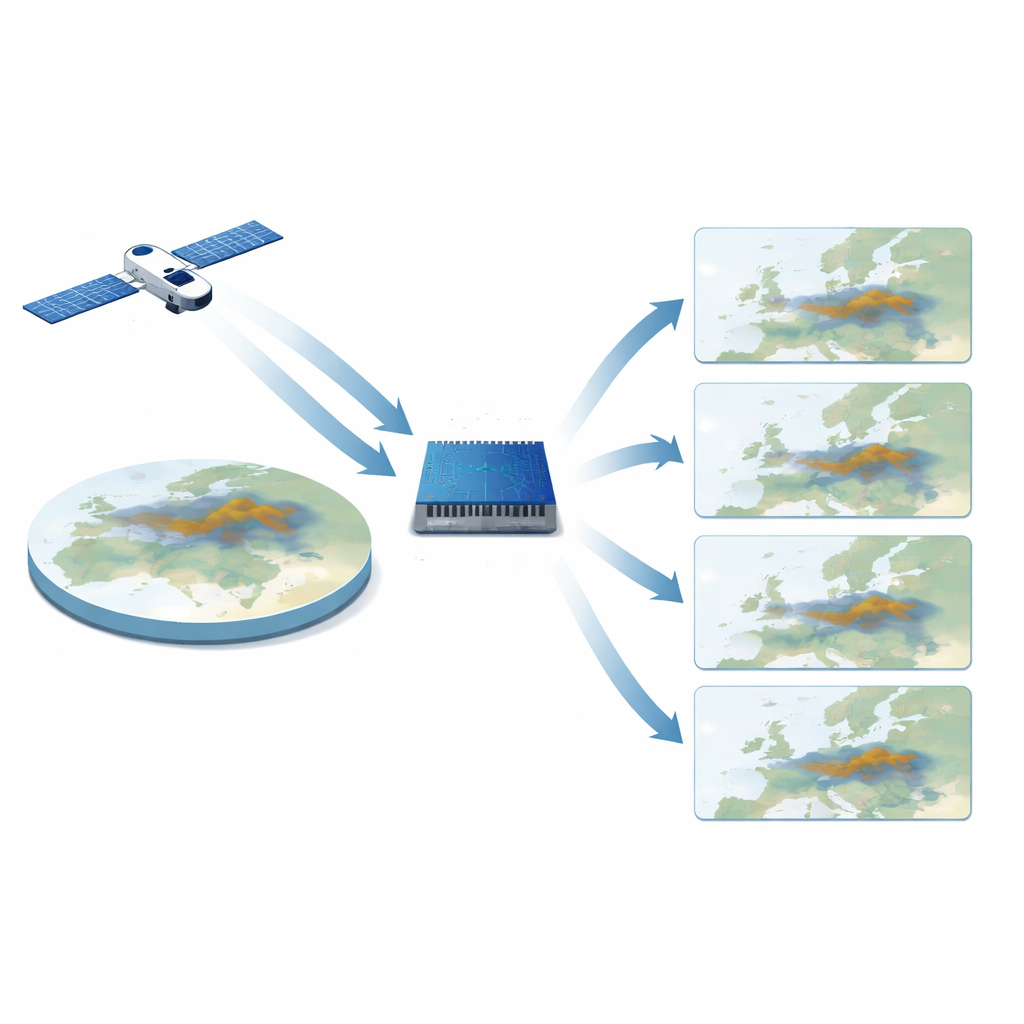

The work begins with Europe-wide pictures taken every 15 minutes by a weather satellite in geostationary orbit, which continuously watches the same portion of the globe. The researchers used a particular color composite of these images that makes volcanic ash clouds stand out against the background. Over four and a half years, they collected more than 168,000 of these images, each covering most of Europe at a resolution of a few kilometers. Together, this archive captures many different weather situations and ash events, giving the AI model plenty of examples of how real ash clouds move and thin out over time.
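
One way to picture how such an archive becomes training examples is a sliding window over the time-ordered scans. The sketch below is illustrative, not the authors' pipeline: it assumes the four-frames-in, one-frame-out setup this summary describes for the model, and the helper `make_samples` and the dates are hypothetical. It keeps a window only when all five frames are exactly 15 minutes apart, so a missing scan never produces a misleading example.

```python
from datetime import datetime, timedelta

SCAN_INTERVAL = timedelta(minutes=15)  # satellite repeat cycle stated in the summary
N_INPUT = 4                            # frames the model sees per sample (assumed setup)


def make_samples(timestamps):
    """Return (input_times, target_time) pairs from a sorted list of scan times.

    Hypothetical helper: a window is kept only if its five frames
    (four inputs plus the target) are spaced exactly 15 minutes apart.
    """
    samples = []
    window = N_INPUT + 1
    for i in range(len(timestamps) - window + 1):
        chunk = timestamps[i:i + window]
        steps = [b - a for a, b in zip(chunk, chunk[1:])]
        if all(s == SCAN_INTERVAL for s in steps):
            samples.append((chunk[:N_INPUT], chunk[N_INPUT]))
    return samples


# Illustrative dates: six consecutive scans, then a gap of two missing scans
t0 = datetime(2021, 1, 1, 0, 0)
times = [t0 + k * SCAN_INTERVAL for k in range(6)]
times.append(t0 + 8 * SCAN_INTERVAL)
pairs = make_samples(times)
print(len(pairs))  # 2
```

The window that straddles the gap is discarded, which is why seven timestamps yield only two samples here.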

A learning machine that thinks in space and time

Instead of relying on traditional physics-based weather and dispersion models, which can be slow and demand heavy computing power and specialized weather data, the team built a deep learning system that learns patterns directly from the satellite pictures. At its core is a neural network design that is especially good at handling sequences of images. It takes four consecutive satellite frames, spanning about 45 minutes of recent history, and predicts what the scene will look like 15 minutes later. By feeding each fresh prediction back in as the newest input, the model can step forward eight times to cover the next two hours, producing a “movie” of the near future in quarter-hour increments.
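
The feed-back-the-prediction loop can be sketched in a few lines. This is a minimal illustration under stated assumptions: `predict_next` is a hypothetical stand-in for the trained network (the real model is not reproduced here), and frames can be any objects it accepts. A fixed-length queue keeps the four most recent frames, so each new prediction pushes the oldest frame out.

```python
from collections import deque

FRAME_STEP_MIN = 15  # minutes between satellite scans
N_INPUT = 4          # frames of history fed to the model
N_STEPS = 8          # 8 x 15 min = 2-hour horizon


def rollout(history, predict_next, n_steps=N_STEPS):
    """Autoregressive forecast: each prediction becomes the newest input.

    `history` holds the latest N_INPUT frames, oldest first.
    `predict_next` (hypothetical) maps a list of N_INPUT frames to the next frame.
    Returns a list of (lead_time_minutes, frame) pairs.
    """
    if len(history) != N_INPUT:
        raise ValueError(f"expected {N_INPUT} frames, got {len(history)}")
    window = deque(history, maxlen=N_INPUT)  # oldest frame drops out automatically
    forecasts = []
    for step in range(1, n_steps + 1):
        nxt = predict_next(list(window))
        forecasts.append((step * FRAME_STEP_MIN, nxt))
        window.append(nxt)
    return forecasts


def linear_extrapolate(frames):
    # Toy stand-in model: continue the most recent change
    return frames[-1] + (frames[-1] - frames[-2])


out = rollout([0.0, 1.0, 2.0, 3.0], linear_extrapolate)
print(out[-1])  # (120, 11.0)
```

Because later steps are computed from earlier predictions rather than fresh observations, any error in one step is carried into the next, which is one reason autoregressive forecasts tend to lose quality as lead time grows.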

Forecasts fast enough for emergency decisions

Because time is critical during an unfolding crisis, the entire pipeline was engineered to run on an energy-efficient edge device, an NVIDIA Jetson AGX Orin, rather than on a large computing cluster. Downloading four new satellite images and running the AI model takes under five seconds, well below the 15-minute gap between satellite scans. Tests on unseen volcanic ash events showed that the predicted frames remained visually close to the real satellite images, closely preserving the shape and location of ash clouds at the first forecast step, with quality degrading gradually at longer lead times. In other words, the system can keep pace with the sky and provide continually updated short-range outlooks on modest hardware.
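
To make the timing claim concrete, the sketch below wraps one forecast cycle in a wall-clock timer and expresses the result as a fraction of the 15-minute scan interval. The functions `fetch_frames` and `run_model` are hypothetical placeholders for the download and inference steps; only the 15-minute cadence and the roughly five-second figure come from the summary.

```python
import time

SCAN_INTERVAL_S = 15 * 60  # a new satellite image arrives every 15 minutes


def timed_cycle(fetch_frames, run_model):
    """Run one nowcast cycle and report how much of the scan interval it used.

    `fetch_frames` and `run_model` are hypothetical stand-ins for
    downloading the latest frames and running inference on them.
    """
    start = time.perf_counter()
    frames = fetch_frames()
    forecast = run_model(frames)
    elapsed = time.perf_counter() - start
    return forecast, elapsed, elapsed / SCAN_INTERVAL_S


# Dummy steps, just to exercise the timer
forecast, elapsed, fraction = timed_cycle(lambda: [0, 1, 2, 3], lambda f: f[-1])

# At the reported ~5 s per cycle, the pipeline uses about 0.6% of each 15-minute window
print(f"{5 / SCAN_INTERVAL_S:.2%}")  # 0.56%
```

With that much headroom, a cycle can finish long before the next scan arrives, leaving time for retries if a download is slow.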

Imagining what-if plumes in real time

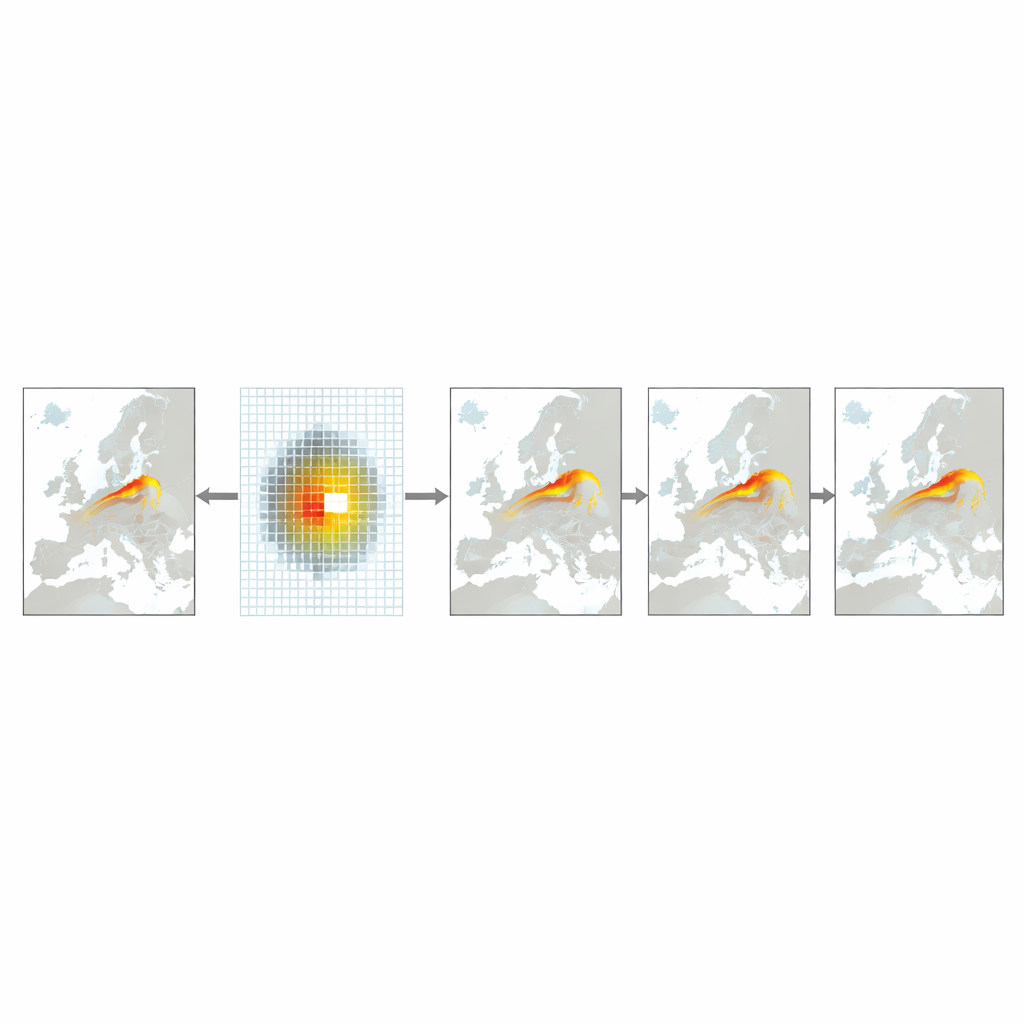

Beyond real eruptions, authorities also need tools to explore hypothetical situations: what if a reactor released material over a major city, or several hazardous sites were affected at once? To support this, the authors created a simple “event injection” method. They draw synthetic plumes directly onto the latest satellite image as colored patches of different sizes, guided by rough size estimates taken from nuclear-blast studies. These artificial clouds are then treated just like real ones by the AI model, which moves and deforms them according to the winds implied by the recent image sequence. The team demonstrates scenarios over Berlin, Paris, the Iberian Peninsula, and all of Europe at once, showing how multiple plumes would spread and overlap within the first two hours.
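
The event-injection idea amounts to painting filled shapes onto a copy of the newest frame. The sketch below is a hedged illustration, not the authors' code: it assumes circular patches, an RGB image stored as nested lists, and a hypothetical grid spacing of 3 km per pixel (the summary only says "a few kilometers"); the function name `inject_plumes` and the example positions, sizes, and colors are likewise hypothetical.

```python
KM_PER_PIXEL = 3.0  # assumed grid spacing; the summary only says "a few kilometers"


def inject_plumes(image, plumes, km_per_pixel=KM_PER_PIXEL):
    """Paint synthetic plumes as filled discs onto a copy of an RGB image.

    `image` is a list of rows, each a list of (r, g, b) tuples.
    `plumes` is a list of (row, col, radius_km, color) entries; the
    locations and sizes here are illustrative, not the authors' values.
    """
    out = [row[:] for row in image]  # leave the original frame untouched
    height, width = len(out), len(out[0])
    for r0, c0, radius_km, color in plumes:
        rad = max(1, round(radius_km / km_per_pixel))  # plume radius in pixels
        for r in range(max(0, r0 - rad), min(height, r0 + rad + 1)):
            for c in range(max(0, c0 - rad), min(width, c0 + rad + 1)):
                if (r - r0) ** 2 + (c - c0) ** 2 <= rad ** 2:
                    out[r][c] = color
    return out


# Two hypothetical plumes of different sizes on a blank 20 x 20 frame
RED = (255, 0, 0)
blank = [[(0, 0, 0)] * 20 for _ in range(20)]
scene = inject_plumes(blank, [(5, 5, 6.0, RED), (15, 15, 3.0, RED)])
print(sum(px == RED for row in scene for px in row))  # 18
```

The 6 km plume becomes a disc of radius 2 pixels (13 pixels) and the 3 km plume a disc of radius 1 pixel (5 pixels). In a setup like the one described, the edited frame would then replace the latest observation in the model's input sequence, so the model moves the painted patches just as it would real clouds.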

How this tool fits into the bigger safety picture

The authors emphasize that their injected scenarios are not precise simulations of radioactive fallout: the system does not track chemical transformations, rainfall washout, or fine details of city-level airflow. Instead, it offers quick, visually intuitive sketches of how a dangerous cloud might drift, grounded in real current weather as seen from space. Traditional physics-based models remain essential for detailed dose estimates and long-term evolution, but they often take longer to initialize and run. This new framework is designed as a fast, low-cost first-look tool that can run at the edge of the network, giving decision-makers an early sense of direction and spread while more complex modeling and measurements catch up.

Citation: Alves, D., Radeta, M., Mendonça, F. et al. A low-latency deep learning framework for volcanic ash cloud nowcasting using geostationary satellite imagery. Sci Rep 16, 14498 (2026). https://doi.org/10.1038/s41598-026-42230-7

Keywords: volcanic ash, satellite nowcasting, deep learning, hazard dispersion, emergency response