Clear Sky Science

Decoding real-world visual scenes from alpha and gamma band flicker evoked oscillations in human EEG

Seeing the World Through Brain Waves

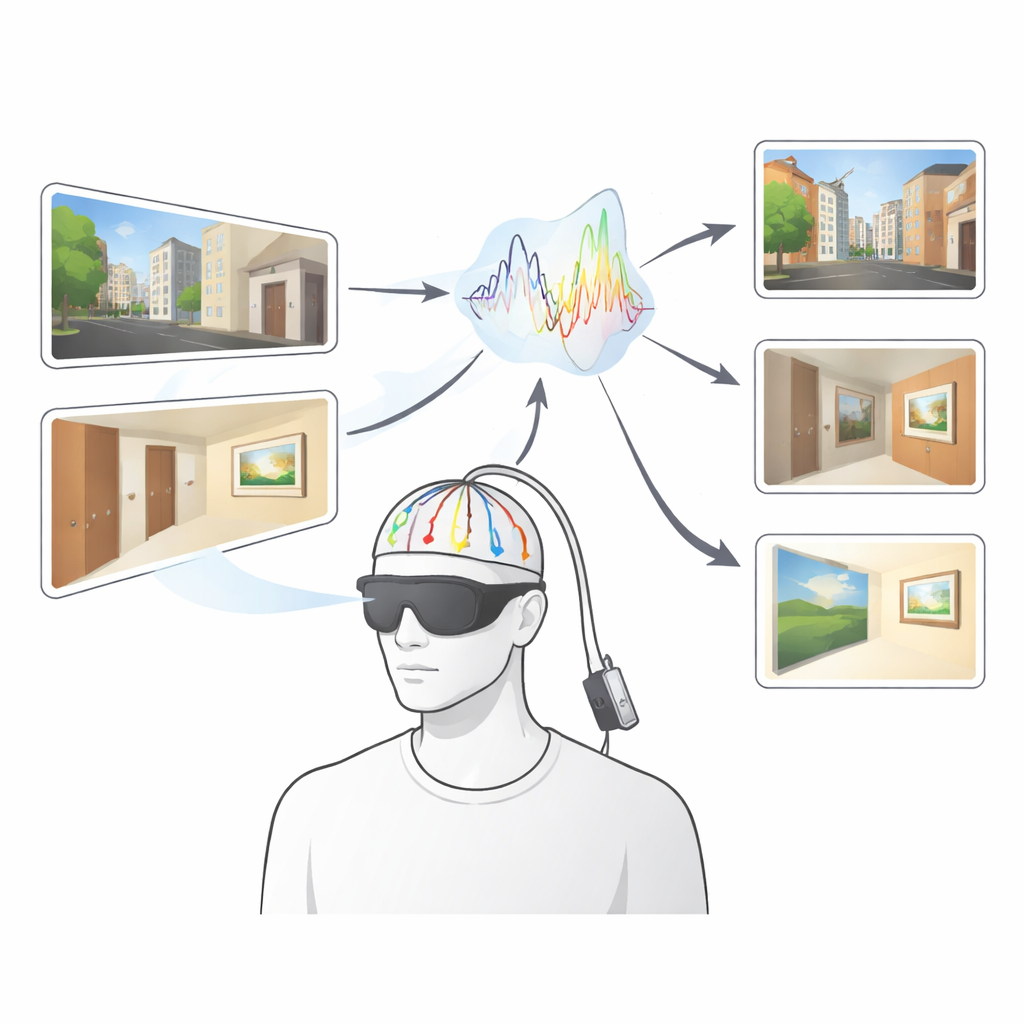

Imagine being able to tell what someone is looking at, whether a city skyline, a hallway, or a blank wall, just by reading their brain waves. This study shows that such “mind-reading” is no longer science fiction, at least for a small set of real-world scenes. Using flickering liquid-crystal glasses and a lightweight brain-recording cap, the researchers decoded which scene people were viewing with high accuracy, and could still do so above chance from less than a second of data. The work opens doors for more natural, mobile brain research and hints at how the brain’s fast rhythms help us make sense of the complex world around us.

Brain Flicker in Real-Life Settings

Most brain studies of vision still rely on people staring at small, controlled images on a computer screen in a lab. Here, volunteers instead stood in real locations: looking far out of a window, down a hallway, across a large room, or at a nearby wall with or without a painting. They wore liquid-crystal glasses that rapidly flickered between clear and slightly darkened, and a small set of electrodes on the back of the head recorded their brain activity. The flicker rhythm acts like a metronome for the visual system, causing the brain’s electrical activity to pulse in step. These steady pulses—called steady-state visually evoked potentials—form a distinctive waveform for each combination of person and scene.

Each Scene Leaves a Unique Brain Signature

To test whether scenes could be identified from these waveforms, the researchers compared the shapes of the flicker-driven brain signals from different locations. Rather than focusing only on how strong the signal was, they looked at the fine-grained pattern over time: its ups, downs, and subtle bends. For each scene, they checked whether its waveform on one trial was more similar to its own waveform on another trial than to the waveform of any other scene. Across six different places, decoding was strikingly accurate: on average, over 90% of scenes were correctly identified from single electrodes near the back of the head, and some participants reached perfect decoding. Crucially, these patterns were stable for the same person even across days, despite changes in lighting or weather, yet clearly different between people, making it possible to identify not only the scene but also whose brain produced the signal.
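The matching step described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the authors' analysis code: Pearson correlation stands in for the similarity measure (the summary does not name one), the waveforms are synthetic sine mixtures rather than EEG, and the scene names, phases, and helper functions (`pearson`, `decode_scenes`, `synthetic_waveform`) are hypothetical.

```python
import math
import random


def pearson(a, b):
    """Pearson correlation between two equal-length sequences."""
    n = len(a)
    mean_a = sum(a) / n
    mean_b = sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)


def decode_scenes(templates, tests):
    """Map each true scene label to the template most similar to its test waveform."""
    return {
        true_label: max(templates, key=lambda name: pearson(templates[name], wave))
        for true_label, wave in tests.items()
    }


def synthetic_waveform(phase, harmonic_gain, rng, n_samples=100, noise=0.1):
    """One flicker cycle: a fundamental, a second harmonic, and Gaussian noise.

    Purely synthetic stand-in for a flicker-evoked waveform (assumption).
    """
    return [
        math.sin(2 * math.pi * i / n_samples + phase)
        + harmonic_gain * math.sin(4 * math.pi * i / n_samples)
        + rng.gauss(0, noise)
        for i in range(n_samples)
    ]


rng = random.Random(0)
# Hypothetical scenes, each with its own (phase, harmonic gain) "signature".
scene_params = {"window": (0.0, 0.8), "hallway": (1.0, 0.3), "wall": (2.0, 0.5)}

# Two independent noisy "trials" per scene: one as template, one to decode.
templates = {name: synthetic_waveform(p, g, rng) for name, (p, g) in scene_params.items()}
tests = {name: synthetic_waveform(p, g, rng) for name, (p, g) in scene_params.items()}

decoded = decode_scenes(templates, tests)
accuracy = sum(decoded[name] == name for name in decoded) / len(decoded)
print(decoded, accuracy)
```

A scene counts as correctly decoded when its test waveform correlates best with its own template, which mirrors the trial-against-trial comparison described in the text.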

Fast and Reliable Reading of Brain Activity

The team then asked how little data they could use and still succeed. Starting from 30 seconds of flicker per scene, they gradually shortened the time window. Decoding stayed above chance with less than a second of data and remained reliable down to about 300 milliseconds—just three flickers at 10 cycles per second. They also checked common sources of noise: eye blinks, small head movements, and electrical “hum” from power lines. Removing these artifacts made almost no difference, showing that the signal is robust enough for use outside tightly controlled lab environments. Interestingly, when the researchers tried a simpler approach based only on the signal’s overall size, decoding dropped sharply, confirming that the detailed waveform shape carries far richer information than a single amplitude measure.
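To see why waveform shape can carry more information than overall size, consider a toy case in Python. The sketch below is an assumption-laden illustration, not the study's method: peak-to-peak amplitude stands in for the unspecified "overall size" measure, and the two scenes are idealized sine waves that differ only in timing.

```python
import math

N_SAMPLES = 100

# Two hypothetical scenes: identical size, but shifted by a quarter cycle.
scene_a = [math.sin(2 * math.pi * i / N_SAMPLES) for i in range(N_SAMPLES)]
scene_b = [math.sin(2 * math.pi * i / N_SAMPLES + math.pi / 2) for i in range(N_SAMPLES)]


def peak_to_peak(wave):
    """A single-number size measure: highest point minus lowest point."""
    return max(wave) - min(wave)


def pearson(a, b):
    """Pearson correlation, used here as a shape-similarity measure."""
    n = len(a)
    mean_a = sum(a) / n
    mean_b = sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)


amplitude_gap = abs(peak_to_peak(scene_a) - peak_to_peak(scene_b))
shape_similarity = pearson(scene_a, scene_b)

# The size measure cannot separate the scenes; the shape measure can.
print(f"amplitude gap: {amplitude_gap:.6f}")
print(f"shape similarity: {shape_similarity:.6f}")
```

In this toy case the two waveforms have the same peak-to-peak size, so an amplitude-only decoder cannot tell them apart, while their shapes are essentially uncorrelated and easy to separate.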

Why Fast Brain Rhythms Matter

A key question was which ranges of brain rhythm are most informative. In one experiment, all scenes were viewed with a 10-cycles-per-second flicker, and the researchers mathematically isolated different multiples of that rhythm, from slower, smoother waves to very rapid ripples. In a second experiment, they directly compared slow (1 cycle per second), medium (10 cycles per second), and very fast (40 cycles per second) flicker. In every case, the most informative signals came from a broad mix of frequencies, but the strongest single band was consistently around 40 cycles per second, a range often linked to detailed visual processing. By contrast, the brain’s natural resting rhythms without flicker carried much less information about which scene was being viewed. This suggests that driving the visual system with flicker can reveal how a broad orchestra of brain rhythms, especially the fast ones, helps encode complex natural environments.
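One common way to isolate multiples (harmonics) of a flicker rhythm is to measure the signal's strength at each multiple with a Fourier transform. The Python sketch below assumes that approach for illustration only; the summary does not say which method the researchers used. The 1000-samples-per-second rate, the one-second synthetic signal, and the `amplitude_at` helper are all hypothetical.

```python
import cmath
import math


def amplitude_at(signal, freq_hz, sample_rate_hz):
    """Amplitude of one frequency component via a single-bin discrete Fourier transform."""
    n = len(signal)
    total = sum(
        x * cmath.exp(-2j * math.pi * freq_hz * i / sample_rate_hz)
        for i, x in enumerate(signal)
    )
    return 2 * abs(total) / n


sample_rate_hz = 1000  # assumed sampling rate
flicker_hz = 10        # flicker rate used in the first experiment
times = [i / sample_rate_hz for i in range(sample_rate_hz)]  # one second of samples

# Synthetic response: a 10-per-second wave plus a smaller 40-per-second ripple.
signal = [
    math.sin(2 * math.pi * flicker_hz * t)
    + 0.5 * math.sin(2 * math.pi * 4 * flicker_hz * t)
    for t in times
]

# Strength of the response at 10, 20, 30 and 40 cycles per second.
harmonic_amplitudes = {
    k * flicker_hz: amplitude_at(signal, k * flicker_hz, sample_rate_hz)
    for k in range(1, 5)
}
for freq, amp in harmonic_amplitudes.items():
    print(f"{freq} cycles per second: amplitude {amp:.3f}")
```

Only the two multiples that were built into the synthetic signal show non-negligible amplitude, which is the sense in which each multiple of the flicker rhythm can be examined separately.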

What This Means for Everyday Brain Tech

For non-specialists, the take-home message is that our brains leave a rich, scene-specific electrical fingerprint when we look at the world, and this fingerprint can be read quickly and reliably using only a few sensors and simple equipment. Because the method works while people are standing and looking at real surroundings, it brings brain research closer to everyday life, from wearable devices that monitor how we interact with our environment to fast, portable tools for studying perception outside the lab. The study also offers strong evidence that a wide range of brain rhythms—especially fast, 40-cycle-per-second activity—plays a central role in how we see and understand real-world scenes.

Citation: Dowsett, J., Muñoz, I.M. & Taylor, P. Decoding real-world visual scenes from alpha and gamma band flicker evoked oscillations in human EEG. Sci Rep 16, 13221 (2026). https://doi.org/10.1038/s41598-026-42197-5

Keywords: brain decoding, visual perception, EEG, gamma oscillations, real-world scenes