Clear Sky Science

Interpretable machine learning with SHAP predicts honest behavior from personality traits and physiological data

Why this research matters

We like to believe we are honest people, yet small temptations—padding an expense report, exaggerating a grade, or quietly taking extra change—are everywhere. This study asks a striking question: can brief acts of dishonesty be predicted in advance from who we are and how our bodies react in the moment? By blending personality tests, wearable sensors, and modern computer algorithms, the researchers explore whether tiny clues in our traits and physiology can forecast when we are more likely to bend the truth.

How the study was set up

The team recruited 58 university students who first completed a standard personality questionnaire called HEXACO, which measures six broad tendencies such as being outgoing, organized, curious, or modest. Next, participants were wired to simple sensors: a chest strap for heart activity, and finger sensors for skin sweat and pulse changes. These devices captured subtle shifts in heart rate patterns, electrical activity in the skin, and the strength of blood pulses in the finger—signals that often change when people feel tense or conflicted.

A game that invites small lies

To observe real choices, the researchers used a computerized coin-tossing game that quietly encouraged cheating. On each of 100 trials, players guessed heads or tails, saw the true outcome, and then reported whether their guess had been correct, earning a small cash reward for every claimed “win.” Because the computer secretly controlled the number of real wins and losses, the scientists could see exactly when a participant falsely claimed to have guessed correctly. Each trial was labeled as honest or dishonest based on whether the report matched the actual outcome, giving the team a clean trial-by-trial record of tiny moral decisions, up to 100 per participant.
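The labeling rule described above can be sketched in a few lines of Python. This is only an illustration: the `Trial` record, its field names, and the three example trials are hypothetical and are not taken from the study's materials.

```python
from dataclasses import dataclass


@dataclass
class Trial:
    """One coin-toss trial (hypothetical record layout, for illustration only)."""
    guess: str          # the player's guess: "heads" or "tails"
    outcome: str        # the true coin outcome shown to the player
    reported_win: bool  # whether the player claimed the guess was correct


def label_trial(trial: Trial) -> str:
    """Label a trial 'honest' if the report matches what actually happened."""
    actually_won = trial.guess == trial.outcome
    return "honest" if trial.reported_win == actually_won else "dishonest"


# Made-up example trials, not study data.
trials = [
    Trial("heads", "heads", True),   # real win, reported as a win
    Trial("heads", "tails", True),   # real loss, claimed as a win
    Trial("tails", "heads", False),  # real loss, reported as a loss
]
labels = [label_trial(t) for t in trials]
print(labels)  # ['honest', 'dishonest', 'honest']
```

Only the second trial is labeled dishonest, because it is the only one where the report and the real result disagree.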

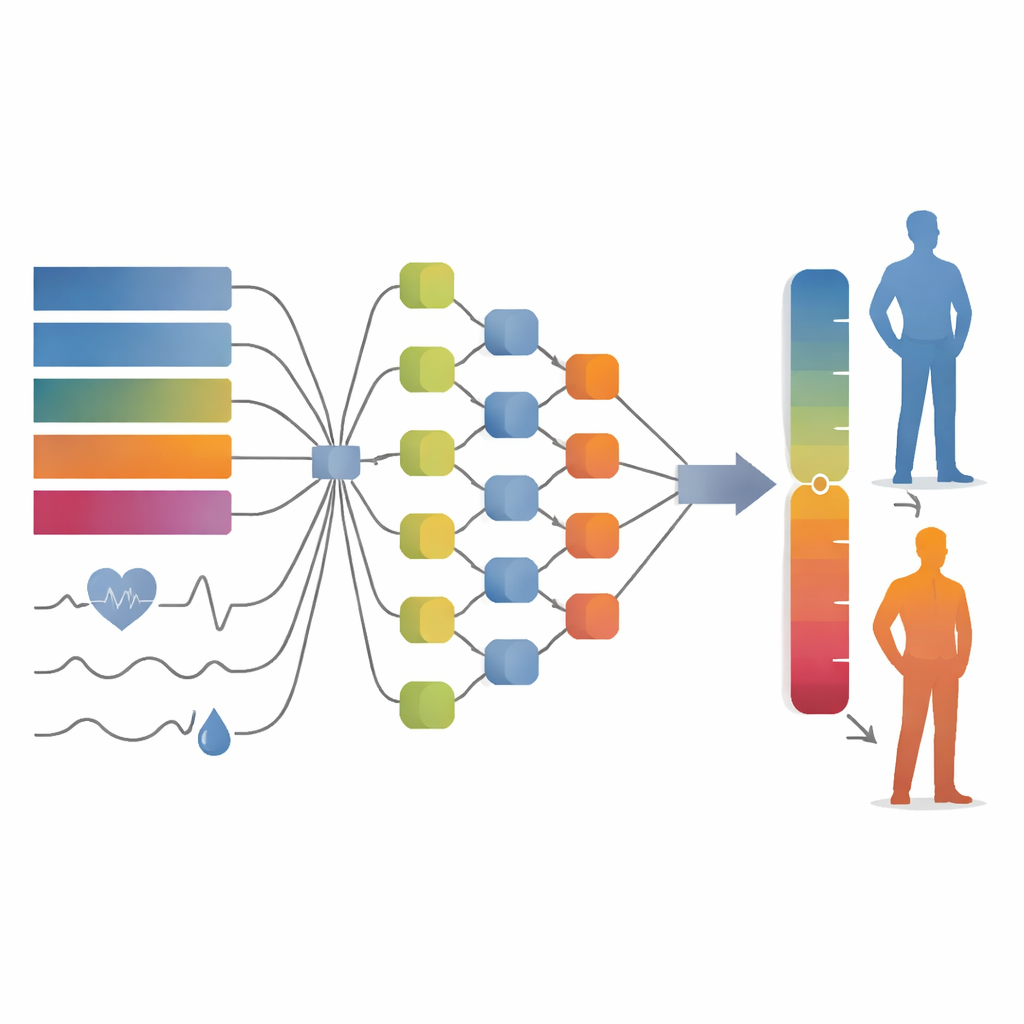

Teaching computers to read the clues

Armed with scores from the personality test and bodily readings recorded during the game, the researchers trained eight different machine-learning models, computer systems that look for complex patterns in data. They built three kinds of predictors: one using only personality traits, one using only physiological signals, and one combining both. To keep the results fair, they split the data into training and test sets and used cross-validation, a standard method that checks how well a model generalizes beyond the data it was taught on. The main yardstick was a statistic called AUC, which reflects how well the model distinguishes dishonest from honest trials across many thresholds.
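One way to make AUC concrete: it equals the probability that a randomly chosen dishonest trial receives a higher model score than a randomly chosen honest one, with ties counted as half. The sketch below computes it directly from that definition. The function name `auc` and the example labels and scores are made up for illustration and are not the study's data or code.

```python
def auc(labels, scores):
    """Pairwise AUC: fraction of (positive, negative) pairs ranked correctly.

    labels: 1 for a dishonest trial, 0 for an honest one.
    scores: the model's predicted score for each trial (higher = more suspect).
    """
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("need at least one positive and one negative example")

    correct = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                correct += 1.0   # dishonest trial ranked above honest trial
            elif p == n:
                correct += 0.5   # a tie counts as half
    return correct / (len(positives) * len(negatives))


# Invented example: six trials with hypothetical model scores.
y_true = [1, 0, 1, 0, 0, 1]
y_score = [0.9, 0.2, 0.6, 0.7, 0.1, 0.8]
print(round(auc(y_true, y_score), 3))  # 0.889
```

A value of 0.5 corresponds to guessing and 1.0 to perfect separation, which is the scale on which the reported scores should be read.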

What turned out to matter most

Among all models, the AdaBoost algorithm trained on personality traits alone performed best, reaching a strong AUC of about 0.84. This means that stable traits alone were surprisingly good at telling which trials would be dishonest. When the researchers used only physiological indicators, or combined traits and physiology, performance was slightly lower, though still respectable. To peek inside these “black box” models, they used an interpretability method called SHAP, which assigns each feature a contribution score for each prediction. For personality, higher Openness to Experience and higher Extraversion showed the strongest links to predicted dishonesty, and lower Conscientiousness also nudged predictions toward cheating. In contrast, the Honesty–Humility trait, often highlighted in previous work, played only a minor role in this specific, low-stakes setting.
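SHAP scores are based on Shapley values, which split a prediction among the features by averaging each feature's effect over every possible combination of the others. The sketch below computes exact Shapley values by brute force for a tiny toy model. It is not the SHAP library and not the study's AdaBoost model: the function `toy_model`, its weights, and the example person are invented for illustration, with signs chosen only to mirror the directions reported above.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, x, baseline):
    """Exact Shapley values by enumerating every subset of the other features.

    Features outside a subset are set to their baseline value.
    """
    n = len(x)

    def value(subset):
        mixed = [x[i] if i in subset else baseline[i] for i in range(n)]
        return predict(mixed)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for k in range(n):  # subset sizes 0 .. n-1
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for subset in combinations(others, k):
                without_i = set(subset)
                phi[i] += weight * (value(without_i | {i}) - value(without_i))
    return phi


def toy_model(features):
    """Hypothetical dishonesty score; the weights are made up, not fitted."""
    openness, extraversion, conscientiousness = features
    return 0.5 * openness + 0.3 * extraversion - 0.4 * conscientiousness


person = [1.0, 1.0, -1.0]   # invented standardized trait scores
average = [0.0, 0.0, 0.0]   # baseline: an average person
phi = shapley_values(toy_model, person, average)
print([round(v, 3) for v in phi])
```

The contributions always add up to the difference between this person's prediction and the baseline prediction, which is what lets each score be read as that feature's share of the result.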

What the body reveals during temptation

Physiological signals also carried clues, though not as powerfully as traits. Patterns in very-low-frequency heart-rate variability emerged as a key bodily predictor: higher values were associated with a greater likelihood of being flagged as dishonest. Another important signal was finger pulse amplitude, which tends to drop when blood vessels tighten under arousal or stress; lower pulse amplitude was linked to more predicted dishonesty. Measures of skin conductance, reflecting tiny changes in sweat, also contributed but to a lesser extent. The authors suggest that even minor cheating in a simple game can provoke subtle bodily reactions tied to tension, conflict, or excitement, though these signals may blur together when averaged across a long task.

Limits, open questions, and real-world relevance

While the models worked well for this laboratory game, the authors stress several caveats. The study involved a relatively small group of young adults from one cultural context, so results may not generalize to older, more diverse populations or to high-stakes situations where reputations and relationships are on the line. Physiological measures were averaged over the entire session rather than tied to individual trials, which may have weakened their predictive power. And because many personality traits are interconnected, the importance assigned to any single trait should be interpreted cautiously. Still, the work offers an early blueprint for combining questionnaires, biosignals, and interpretable algorithms to understand how personal makeup and bodily states shape everyday honesty.

What this means for everyday honesty

In plain terms, this research suggests that quick, low-cost cheating is not random: it reflects a mix of enduring tendencies (like being curious, outgoing, or disciplined) and fleeting bodily stirrings that register when we face temptation. The study shows that computers can learn to read these patterns well enough to predict, clearly better than chance, which trials will involve fudging the truth, and that interpretability tools can show which traits and signals drive those predictions. Although far from a tool for judging individuals in real life, the approach points toward more nuanced ways of studying honesty, and may eventually help institutions design fairer environments and safeguards that reduce the pull of small but costly lies.

Citation: Meng, Y., Chen, Y., Zhang, Z. et al. Interpretable machine learning with SHAP predicts honest behavior from personality traits and physiological data. Sci Rep 16, 13457 (2026). https://doi.org/10.1038/s41598-026-41677-y

Keywords: dishonest behavior, personality traits, physiological signals, machine learning, moral decision-making