Clear Sky Science

An image recognition algorithm for fine-grained high-frequency workpieces based on a multi-branch network architecture

Smarter eyes for factory parts

Modern factories depend on cameras and computers to sort thousands of nearly identical metal parts at high speed. When those parts differ only in tiny surface details, even advanced image-recognition software can get confused, leading to mis-sorted items, production delays, and added costs. This study presents a new way for machines to "see" and tell apart such look‑alike components, promising more reliable, flexible, and efficient automated manufacturing.

Why similar parts are hard to tell apart

In many production lines, so‑called high‑frequency workpieces—flat metal parts made in large quantities—must be classified into dozens of categories. The challenge is that parts within the same category can show complicated surface textures, while parts from different categories may look almost the same from above. Lighting changes and variations in how a part is positioned in front of the camera make the problem even harder. This kind of task falls into what computer scientists call fine‑grained recognition: not just telling a car from a person, but telling one very similar part from another based on subtle clues.

A two-track way of looking at each part

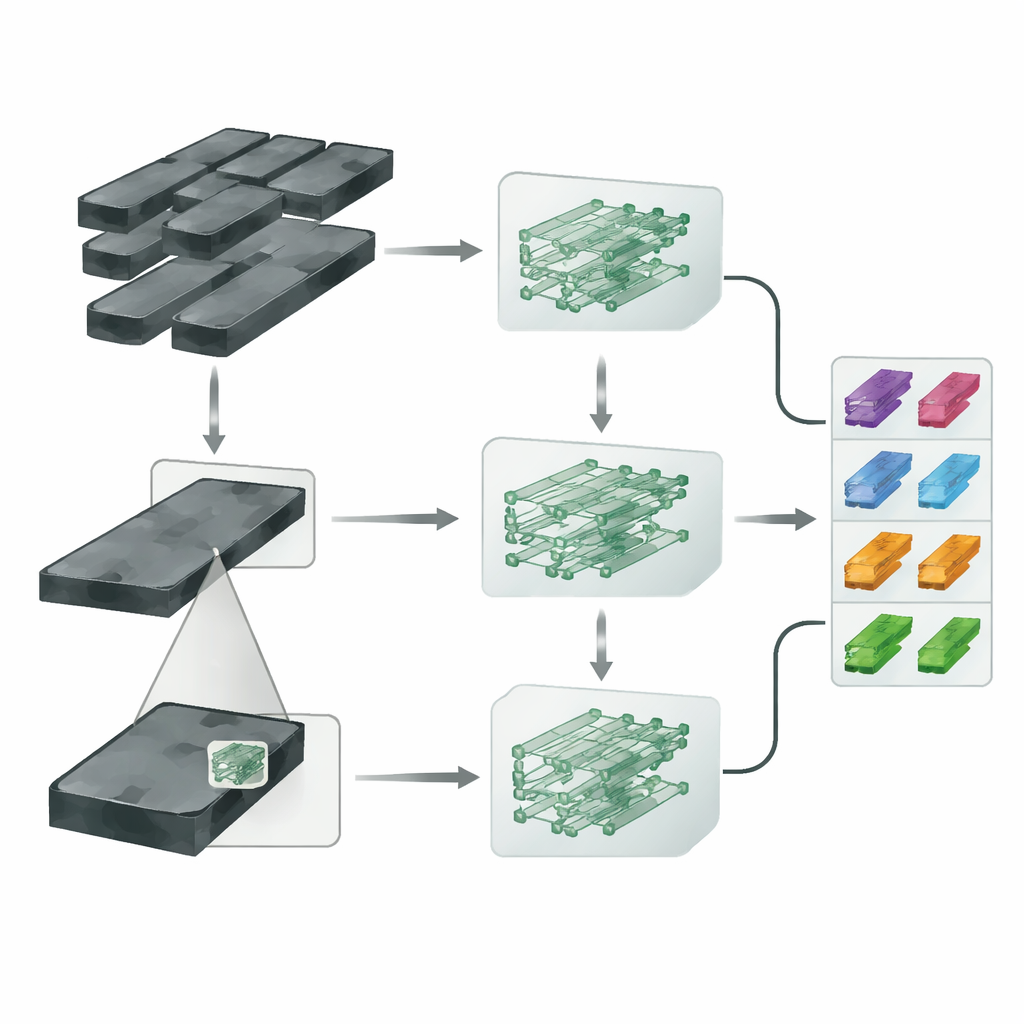

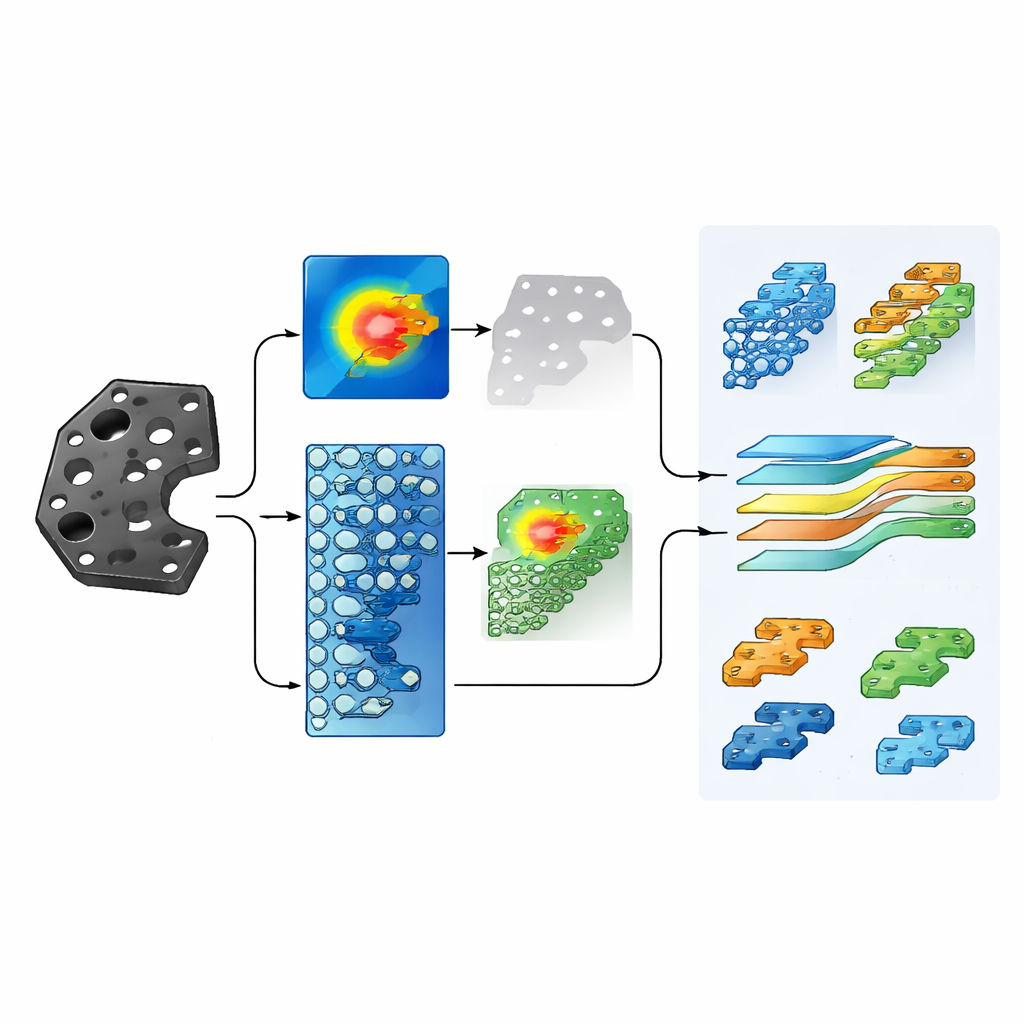

The researchers build on a compact neural network known as EfficientNet‑B0 and turn it into a multi‑branch system they call MBEN. Instead of feeding the network only the whole image of a part, they first let the model roughly figure out which area of the image carries the most distinctive information. A special weakly supervised region‑detection module creates a kind of heat map that lights up likely key zones, then crops out a smaller image patch around this area. The full image travels through one branch of the network (the global branch), while the cropped close‑up travels through another (the local branch). This design lets the system learn both the overall appearance and the tiny, localized differences that separate one part type from another.
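To make the "heat map, then crop" step concrete, here is a minimal sketch in plain Python. It assumes the heat map is already available as a small grid of activation values and simply boxes in every cell that reaches a chosen fraction of the peak. The function names `key_region_box` and `crop`, and the `threshold_ratio` parameter, are illustrative assumptions; the study's actual region-detection module is a learned, weakly supervised component and is not reproduced here.

```python
def key_region_box(heatmap, threshold_ratio=0.5):
    """Bounding box (top, left, bottom, right) of the strongly activated cells.

    A cell counts as "strong" when its value is at least
    threshold_ratio * peak. The threshold rule is an assumption
    made for illustration, not a detail taken from the study.
    """
    peak = max(max(row) for row in heatmap)
    cutoff = threshold_ratio * peak
    width = len(heatmap[0])
    rows = [r for r, row in enumerate(heatmap) if any(v >= cutoff for v in row)]
    cols = [c for c in range(width) if any(row[c] >= cutoff for row in heatmap)]
    # bottom and right are exclusive, so they can be used directly in slices
    return rows[0], cols[0], rows[-1] + 1, cols[-1] + 1


def crop(image, box):
    """Cut the boxed patch out of an image stored as a list of rows."""
    top, left, bottom, right = box
    return [row[left:right] for row in image[top:bottom]]


# Toy 4x4 example: the hot spot sits in the middle of the grid.
heatmap = [
    [0.0, 0.1, 0.0, 0.0],
    [0.1, 0.9, 0.8, 0.0],
    [0.0, 0.7, 1.0, 0.1],
    [0.0, 0.0, 0.1, 0.0],
]
image = [[r * 4 + c for c in range(4)] for r in range(4)]
box = key_region_box(heatmap)
patch = crop(image, box)
```

In a two-branch setup like the one described, `image` would go to the global branch and `patch` (resized as needed) to the local branch.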

Teaching the model what really matters

Simply providing two views is not enough; the network also has to be taught to focus on the right distinctions. To do this, the authors design a loss‑augmentation module—rules that guide how the network adjusts itself during training. One part of this module makes the system pay extra attention to categories it currently finds confusing, so it does not become overconfident on easy cases and neglect difficult ones. Another part encourages images of the same type of workpiece to end up close together in the network’s internal representation, while pushing different types farther apart. Together, these mechanisms shape a clearer internal map of the part categories, improving the chances that new, unseen images will be classified correctly.
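The summary above does not name the exact formulas, so the following is only a sketch of two well-known loss terms that behave the way the text describes. The first is a focal-style cross-entropy, which shrinks the penalty on examples the model already classifies confidently. The second is a contrastive-style distance term, which pulls same-class features together and pushes different-class features at least `margin` apart. The function names, `gamma`, and `margin` are assumptions chosen for illustration and may differ from what the authors used.

```python
import math


def log_softmax_at(logits, label):
    """Log-probability of `label` under a numerically stable softmax."""
    m = max(logits)
    log_total = math.log(sum(math.exp(z - m) for z in logits))
    return (logits[label] - m) - log_total


def focal_style_loss(logits, label, gamma=2.0):
    """Cross-entropy scaled by (1 - p)^gamma.

    Confident, correct predictions (p near 1) contribute very little,
    so training effort shifts toward confusing examples.
    With gamma = 0 this reduces to ordinary cross-entropy.
    """
    log_p = log_softmax_at(logits, label)
    p = math.exp(log_p)
    return -((1.0 - p) ** gamma) * log_p


def pair_distance_loss(feat_a, feat_b, same_class, margin=1.0):
    """Pull same-class features together; push others at least `margin` apart."""
    dist = math.sqrt(sum((a - b) ** 2 for a, b in zip(feat_a, feat_b)))
    if same_class:
        return dist ** 2
    return max(0.0, margin - dist) ** 2


easy = [5.0, 0.0, 0.0]   # model is already confident about class 0
hard = [0.2, 0.1, 0.0]   # model is unsure between the classes
easy_loss = focal_style_loss(easy, 0)
hard_loss = focal_style_loss(hard, 0)
```

Here `hard_loss` comes out far larger than `easy_loss`, which is the "pay extra attention to confusing categories" effect in miniature.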

Blending the big picture with the close-up

After the global and local branches each produce their own predictions, a branch‑fusion module combines them into a final decision. The researchers tune how much each branch should contribute, finding that giving slightly more weight to the global image but still relying strongly on the close‑up region works best. They test their method on a custom dataset of 20 kinds of high‑frequency workpieces photographed under realistic factory lighting, with thousands of images expanded through data‑augmentation tricks such as rotations and random crops. The MBEN system reaches 98.75% accuracy—several percentage points better than a range of existing fine‑grained recognition methods—while using relatively modest computing resources.
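A simple way to picture the fusion step is a weighted average of the two branches' class probabilities. The sketch below assumes that form of fusion and uses a hypothetical `global_weight` of 0.6 to reflect "slightly more weight to the global image"; the summary does not state the actual weights or whether the authors combine probabilities or raw scores.

```python
def fuse_predictions(global_probs, local_probs, global_weight=0.6):
    """Blend two per-class probability lists and pick the winning class.

    global_weight = 0.6 is a placeholder value for illustration only.
    Returns (fused_probabilities, predicted_class_index).
    """
    if not 0.0 <= global_weight <= 1.0:
        raise ValueError("global_weight must lie between 0 and 1")
    if len(global_probs) != len(local_probs):
        raise ValueError("both branches must score the same classes")
    local_weight = 1.0 - global_weight
    fused = [
        global_weight * g + local_weight * l
        for g, l in zip(global_probs, local_probs)
    ]
    predicted = max(range(len(fused)), key=fused.__getitem__)
    return fused, predicted


# The whole-image view leans toward class 0, the close-up toward class 1.
global_probs = [0.5, 0.4, 0.1]
local_probs = [0.2, 0.7, 0.1]
fused, predicted = fuse_predictions(global_probs, local_probs)
```

In this toy case the close-up evidence is strong enough to overturn the global branch, so the fused decision is class 1 even though the global view carries the larger weight.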

What this means for real-world production

The study shows that combining whole‑image context, automatically discovered detail patches, and carefully designed training rules can make machine vision much more reliable for hard industrial tasks. For manufacturers, such improvements could translate into fewer sorting errors, less manual inspection, and greater flexibility when switching between many similar product types. Although the work does not yet tackle imbalanced real‑world data, where some part types are far rarer than others, the results suggest that smarter, more selective digital "eyes" can keep pace with increasingly precise and varied production lines.

Citation: Deng, J., Sun, C., Lin, J. et al. An image recognition algorithm for fine-grained high-frequency workpieces based on a multi-branch network architecture. Sci Rep 16, 11067 (2026). https://doi.org/10.1038/s41598-026-41639-4

Keywords: industrial image recognition, fine-grained classification, automated quality control, computer vision in manufacturing, neural networks