Clear Sky Science

A cascaded group attention mechanism-based object detection algorithm for construction and demolition waste

Why Smarter Waste Sorting Matters

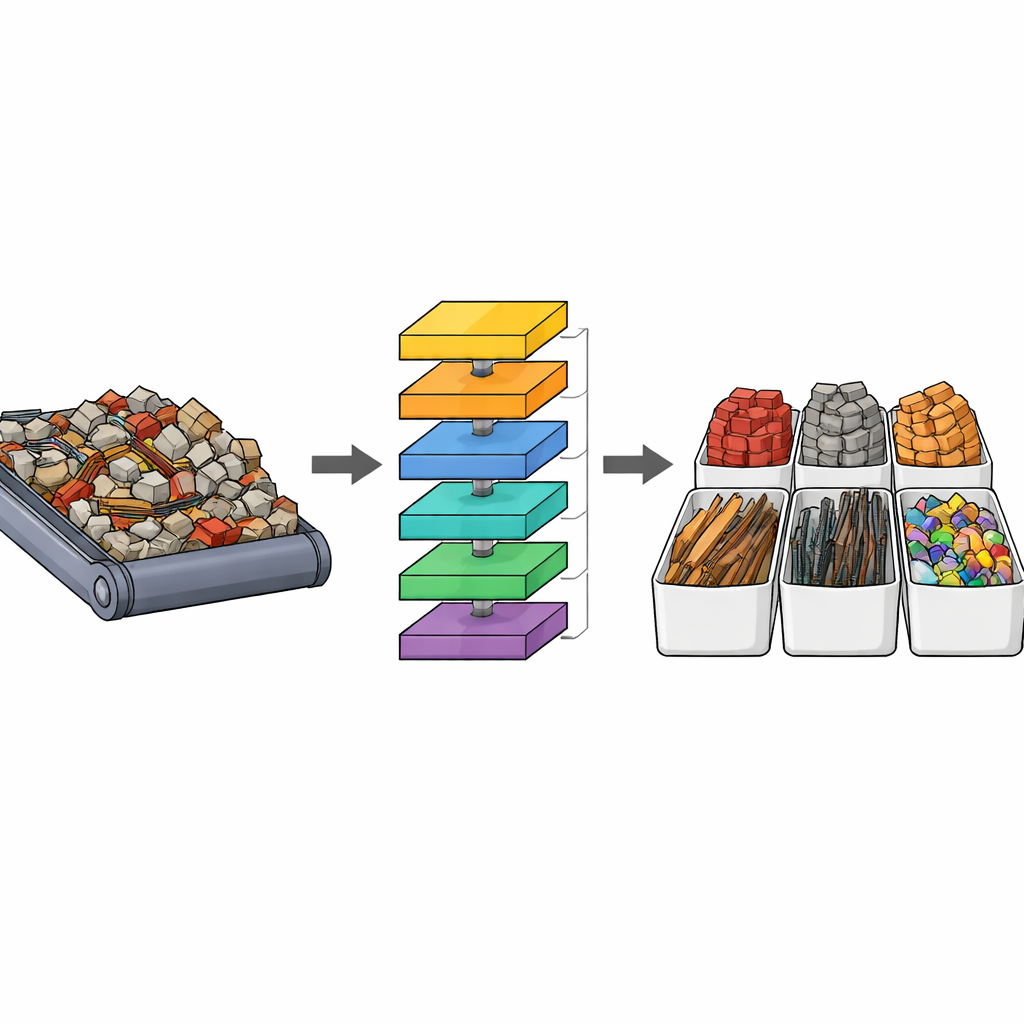

Every time a building goes up or comes down, mountains of rubble are created—concrete chunks, broken bricks, tiles, wood, metal, and plastic. This construction and demolition waste now accounts for around 40% of the trash in many cities. Hidden in that rubble are valuable materials that could be recycled into new building products, but today much of the sorting is still done by hand, which is slow, costly, and dangerous. This paper presents a new computer-vision system that can automatically spot and classify different types of construction waste in real time, even when pieces are small, overlapping, or look very similar to one another.

The Challenge of Seeing Order in a Pile of Rubble

Sorting mixed construction debris is surprisingly hard for machines. Pieces of concrete and ceramic tile, for example, often share similar colors and textures, making them easy to confuse. In real-world scenes, large fragments sit right next to tiny chips, many objects are partially hidden, and lighting or camera angle can change how materials appear. Earlier artificial-intelligence systems for this task lacked accuracy, struggled with very small items, or required more computing power than is realistic on sorting lines and mobile equipment. The authors focus on improving a popular family of fast object-detection models, known as YOLO, to better handle these messy, cluttered scenes without slowing down.

A New Way for the Network to Pay Attention

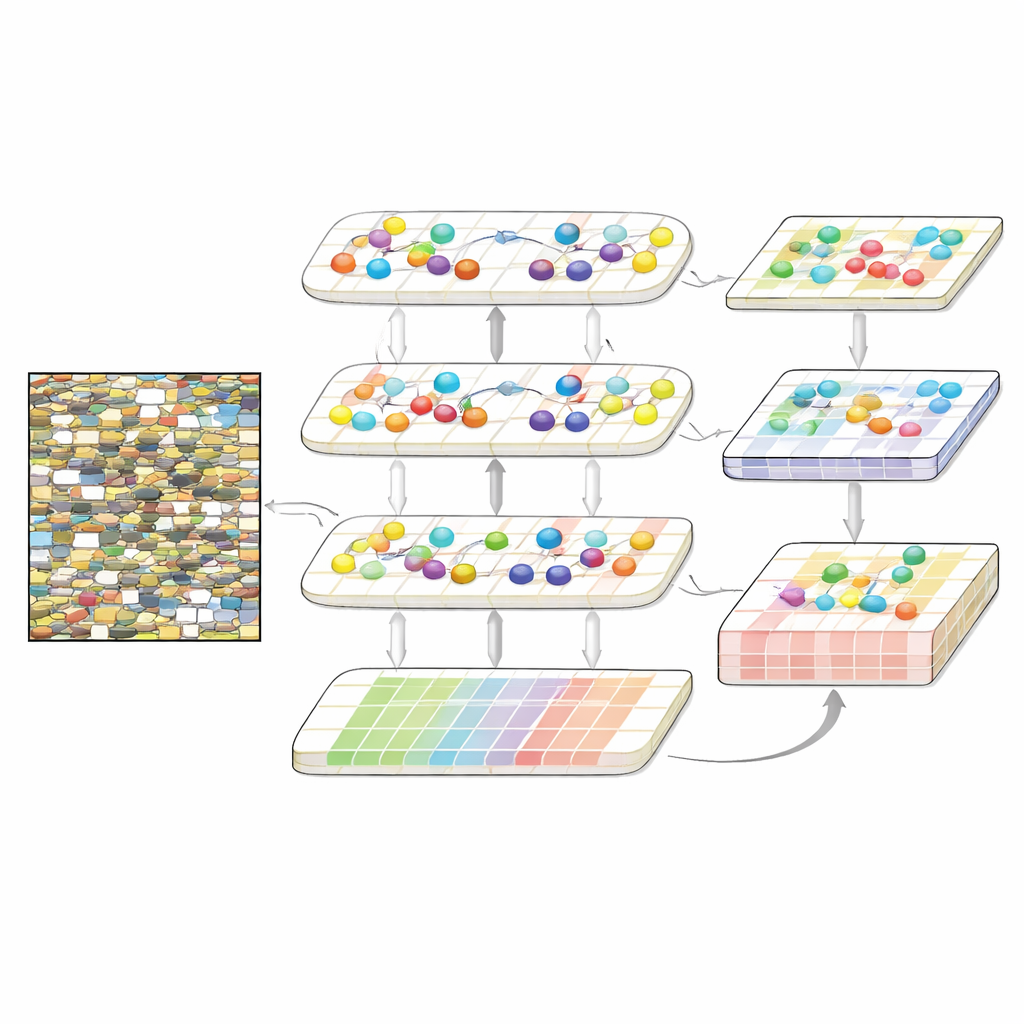

The heart of the new method is a redesigned “backbone” that processes images in stages, inspired by transformer models used in language and vision. Instead of treating the image only in small local patches, the network learns how distant regions relate to each other, which helps when objects overlap or blend into the background. To do this efficiently, the authors introduce a cascaded group attention mechanism. They split the internal representation of the image into groups, let each group focus on patterns within itself, and then gradually pass information from one group to the next. This “local focus first, global refinement later” scheme allows the model to emphasize subtle differences between, say, concrete and ceramics, while keeping memory and computation low enough for real-time use.
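The "split into groups, then pass information along" idea can be illustrated with a small NumPy sketch. This is not the authors' implementation: the function name `cascaded_group_attention`, the flat token layout, and the random matrices standing in for learned weights are all assumptions made for illustration, and a real network would add learned projections and normalization around this step.

```python
import numpy as np

def softmax(x, axis=-1):
    # Numerically stable softmax.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def cascaded_group_attention(tokens, num_groups, rng):
    """Toy cascaded group attention.

    tokens: array of shape (n_tokens, channels), e.g. flattened image patches.
    Returns an array of the same shape.
    """
    n, c = tokens.shape
    assert c % num_groups == 0, "channels must divide evenly into groups"
    d = c // num_groups
    groups = np.split(tokens, num_groups, axis=1)  # each group is (n, d)

    outputs = []
    carry = np.zeros((n, d))  # nothing to pass into the first group
    for g in groups:
        # Cascade: the previous group's output refines this group's input.
        x = g + carry
        # Random projections stand in for learned weights (assumption).
        wq, wk, wv = (rng.standard_normal((d, d)) / np.sqrt(d) for _ in range(3))
        q, k, v = x @ wq, x @ wk, x @ wv
        # Self-attention restricted to this group's channels.
        attn = softmax(q @ k.T / np.sqrt(d), axis=-1)  # (n, n)
        carry = attn @ v
        outputs.append(carry)
    return np.concatenate(outputs, axis=1)

rng = np.random.default_rng(0)
tokens = rng.standard_normal((16, 32))  # 16 patches, 32 channels
out = cascaded_group_attention(tokens, num_groups=4, rng=np.random.default_rng(1))
print(out.shape)
```

Because each group attends over only a slice of the channels, the projection matrices are much smaller than in full attention, while the cascade still lets later groups see what earlier groups found.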

Looking at Waste at Several Scales at Once

Beyond recognizing material types, the system must also find objects of very different sizes, from tiny shards to large beams. The model therefore uses multiple layers that each operate at a different image resolution. A dedicated interaction module lets information flow both from coarse, big-picture layers down to fine, detailed ones and back again. Coarse layers contribute overall context (where piles are, how objects cluster), while fine layers contribute sharp edges and textures. A spatial attention component then highlights the most informative regions at each scale and suppresses distracting background. Finally, separate detection branches at each resolution predict where objects are and what material each one is made of, with a training setup that encourages precise box placement and a balanced trade-off between finding many objects and avoiding false alarms.
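A rough sketch of this two-way flow between scales, again in NumPy, may help make it concrete. Everything here is a simplification chosen for illustration: the helper names (`upsample2x`, `downsample2x`, `spatial_attention`, `fuse_scales`) are hypothetical, fusion is plain addition, and the attention mask is a fixed sigmoid rather than the learned module the paper would use.

```python
import numpy as np

def upsample2x(x):
    # (C, H, W) -> (C, 2H, 2W) by nearest-neighbour repetition.
    return x.repeat(2, axis=1).repeat(2, axis=2)

def downsample2x(x):
    # (C, H, W) -> (C, H/2, W/2) by 2x2 average pooling.
    c, h, w = x.shape
    return x.reshape(c, h // 2, 2, w // 2, 2).mean(axis=(2, 4))

def spatial_attention(x):
    # Build one (H, W) mask from channel-wise average and max,
    # then scale every channel by it. Values lie in (0, 1).
    score = x.mean(axis=0) + x.max(axis=0)
    mask = 1.0 / (1.0 + np.exp(-score))
    return x * mask

def fuse_scales(fine, mid, coarse):
    # Top-down pass: coarse context flows into finer maps.
    mid_td = mid + upsample2x(coarse)
    fine_td = fine + upsample2x(mid_td)
    # Bottom-up pass: fine detail flows back into coarser maps.
    mid_out = mid_td + downsample2x(fine_td)
    coarse_out = coarse + downsample2x(mid_out)
    # Highlight informative regions at every scale.
    return [spatial_attention(f) for f in (fine_td, mid_out, coarse_out)]

rng = np.random.default_rng(0)
fine = rng.standard_normal((8, 32, 32))    # high resolution: small shards
mid = rng.standard_normal((8, 16, 16))
coarse = rng.standard_normal((8, 8, 8))    # low resolution: large beams, piles
fused = fuse_scales(fine, mid, coarse)
print([f.shape for f in fused])
```

Each fused map keeps its own resolution, so a separate detection branch can be attached to each one.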

Putting the System to the Test

To evaluate their approach, the researchers used two public datasets of construction and demolition waste. One, called BTC, contains images of bricks, tiles, and concrete; the other, SWP, focuses on steel, wood, and plastics and includes thousands of high-resolution images. The team compared their method with several existing YOLO variants that had been adapted for this task. Their system achieved markedly higher detection scores on both datasets, especially on the tougher measure that judges how precisely predicted boxes line up with the true object outlines. It was particularly strong at maintaining very high recall (missing almost no objects) while keeping the overall computational load modest, competitive with or lower than that of many rival models.
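Detection scores of this kind are usually built on intersection over union (IoU), the overlap between a predicted box and the true box divided by their combined area. The sketch below assumes that convention; the summary does not name the exact metrics, and the function names and the greedy matching rule are illustrative choices rather than the paper's evaluation code.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0

def precision_recall(preds, truths, thr):
    """Greedy one-to-one matching of predictions to true boxes.

    preds are assumed to be sorted by confidence, highest first.
    A prediction counts as correct if its best unmatched true box
    overlaps it with IoU >= thr.
    """
    matched = set()
    true_pos = 0
    for p in preds:
        best_j, best_iou = -1, 0.0
        for j, t in enumerate(truths):
            if j in matched:
                continue
            v = iou(p, t)
            if v > best_iou:
                best_j, best_iou = j, v
        if best_j >= 0 and best_iou >= thr:
            matched.add(best_j)
            true_pos += 1
    precision = true_pos / len(preds) if preds else 0.0
    recall = true_pos / len(truths) if truths else 0.0
    return precision, recall

truths = [(0, 0, 10, 10), (20, 20, 30, 30)]
preds = [(0, 0, 10, 10), (22, 20, 32, 30)]  # second box is shifted by 2 units

print(precision_recall(preds, truths, thr=0.5))   # lenient threshold
print(precision_recall(preds, truths, thr=0.75))  # strict threshold
```

The shifted box overlaps its target with an IoU of about 0.67, so it counts as a hit at the lenient threshold but as a miss at the strict one. A "tougher measure" in this sense rewards models whose boxes fit tightly.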

What This Means for Real-World Recycling

For non-specialists, the key takeaway is that the authors have built a smarter “eye” for sorting construction debris, one that can pick out and distinguish recyclable materials in busy, chaotic scenes better than previous tools. By combining efficient attention mechanisms with multi-scale processing, the system spots small and overlapping pieces more accurately, while still running fast enough to be practical on industrial hardware. Some confusion between waste and background remains, but overall performance is strong and stable across different datasets. In the long run, such advances could help recycling facilities recover more valuable material with less manual labor, reduce landfill use, and make the construction industry cleaner and more resource-efficient.

Citation: Jiang, Z., Yang, Y., Hu, J. et al. A cascaded group attention mechanism-based object detection algorithm for construction and demolition waste. Sci Rep 16, 11798 (2026). https://doi.org/10.1038/s41598-026-41557-5

Keywords: construction waste detection, deep learning vision, automated recycling, object detection, attention mechanisms