Clear Sky Science · en

Predicting concrete compressive strength using optimized deep learning and large language models

Why this matters for safer, greener buildings

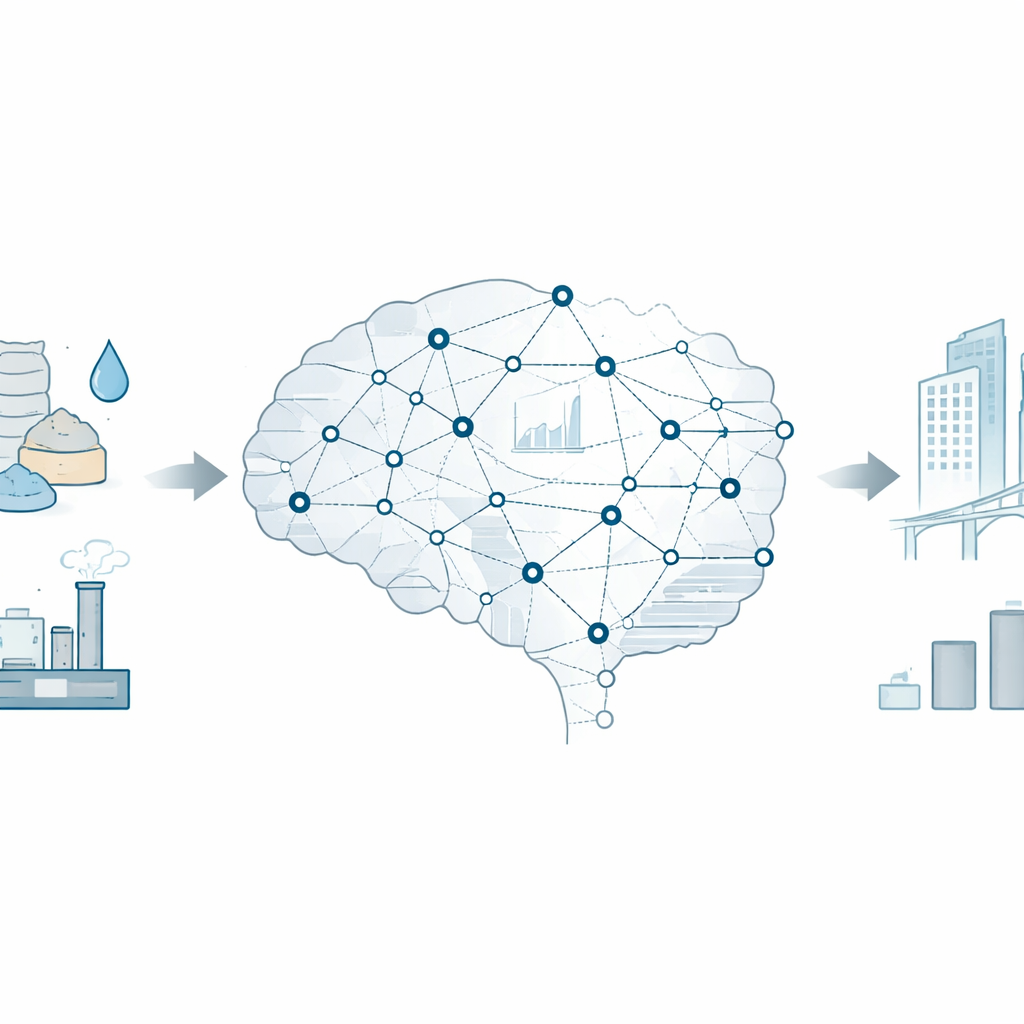

Concrete holds up our homes, bridges, and tunnels, but testing how strong it is usually means waiting weeks for lab results. This study explores how advanced artificial intelligence can predict concrete strength quickly and accurately from its recipe alone. Faster, more reliable predictions could help engineers design safer structures, cut wasteful trial-and-error in the lab, and make it easier to use low‑carbon ingredients like industrial by‑products.

From messy lab data to clean digital ingredients

The researchers start with a large public dataset of concrete mixes. Each entry lists how much cement, slag, fly ash, water, chemical additives, sand, and gravel went into the mix and how long it cured, along with the final measured strength. Real‑world data of this kind is often inconsistent: measurements can be missing, units can differ, and odd outliers can slip through. To tackle this, the team uses large language models (general‑purpose AI systems usually used for text) to help clean and restructure the data. These models guide tasks such as checking ranges, reconciling naming and units, creating meaningful derived quantities like the water‑to‑binder ratio, and handling outliers. The goal is to transform a noisy spreadsheet into a coherent, physics‑aware description of each mix that learning algorithms can digest.
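
To make the cleaning steps concrete, here is a minimal Python sketch of three of them: a derived water‑to‑binder ratio, a range check, and a simple outlier flag. It is an illustration only. The field names, the choice of cement, slag, and fly ash as the binder, and the use of Tukey's interquartile rule for outliers are assumptions, not details taken from the study, and the language-model guidance itself is not shown.

```python
from statistics import quantiles

# Hypothetical field names (amounts in kg per cubic metre); the dataset's real
# schema is not given in the text.
BINDER_FIELDS = ("cement", "slag", "fly_ash")


def water_to_binder(mix):
    """Return water mass divided by total binder mass for one mix record."""
    binder = sum(mix[name] for name in BINDER_FIELDS)
    if binder <= 0:
        raise ValueError("total binder content must be positive")
    return mix["water"] / binder


def within_limits(mix, limits):
    """Check that every listed field lies inside its (low, high) range."""
    return all(low <= mix[name] <= high for name, (low, high) in limits.items())


def flag_outliers(values, k=1.5):
    """Mark values outside [Q1 - k*IQR, Q3 + k*IQR] (Tukey's rule, assumed here)."""
    q1, _, q3 = quantiles(values, n=4)
    spread = q3 - q1
    low, high = q1 - k * spread, q3 + k * spread
    return [not (low <= v <= high) for v in values]


# Made-up example record, not a row from the study's dataset.
mix = {"cement": 300.0, "slag": 100.0, "fly_ash": 0.0, "water": 200.0}
print(water_to_binder(mix))  # 200 / 400 = 0.5
```

A flagged value would still need a human or model decision on whether to drop, cap, or keep it; the sketch only marks candidates.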

Teaching a network to see both mixture and time

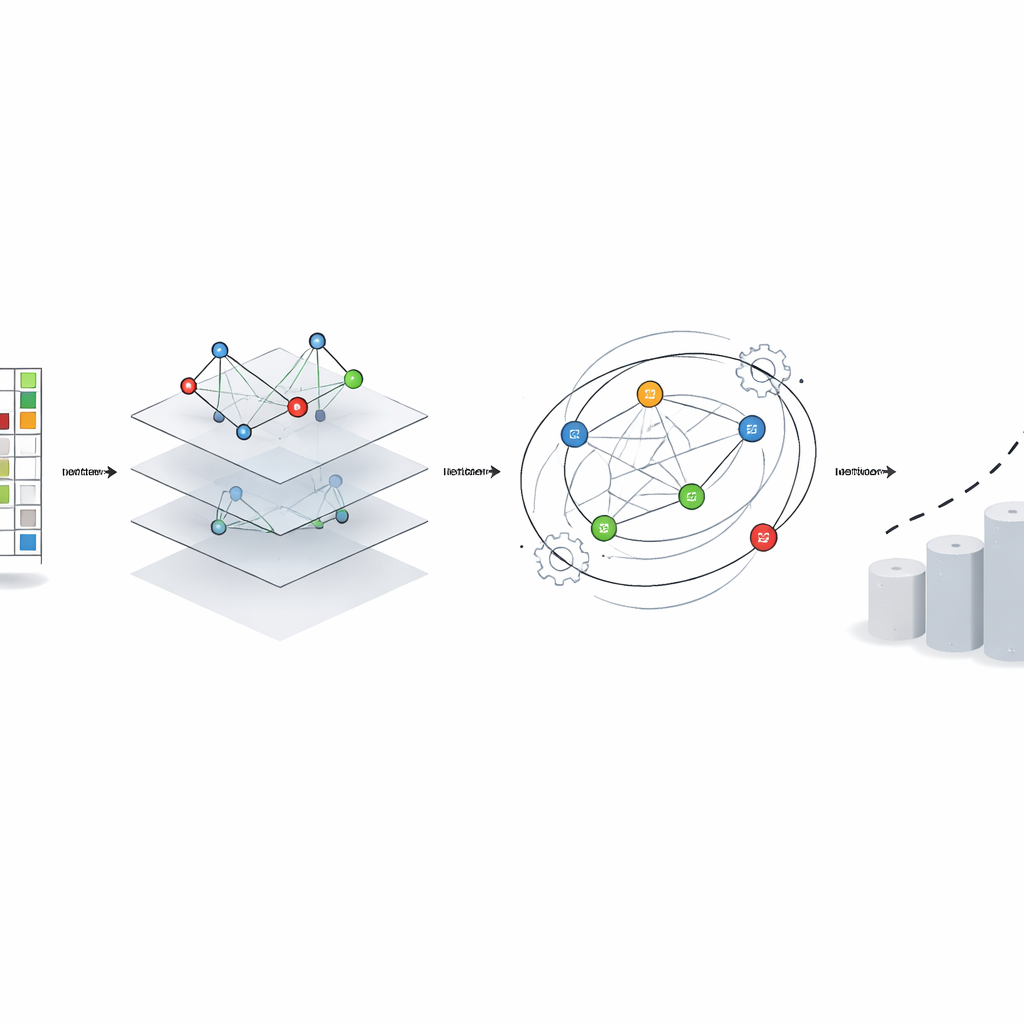

Concrete strength is not just about what goes in the mixer; it is also about how strength grows over days and weeks as the material cures. Many past prediction tools either focused on the ingredients or on time trends, but rarely both at once. This work uses a spatio‑temporal graph convolutional network, a type of model that treats each ingredient (such as cement, water, slag, or additives) as a node in a network, with connections that reflect how strongly they interact. At the same time, it looks along the time axis to learn how these combinations gain strength as they age. In effect, the model learns patterns like “high cement plus low water typically leads to faster, higher strength,” while also capturing subtler roles for slag, ash, and chemical agents across different curing periods.
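
The idea of mixing information across ingredients and then across time can be sketched in a few lines of Python. This is a toy version under stated assumptions: the adjacency weights and the temporal kernel are fixed by hand rather than learned, each node carries a single number, there are no nonlinearities, and the row normalization is a simpler stand-in for the normalizations used in published graph convolution layers. How the study builds its time axis is not described here, so the sketch simply assumes a sequence of node snapshots at successive curing ages.

```python
def normalize_adjacency(adj):
    """Add self-loops, then scale each row so its weights sum to 1."""
    n = len(adj)
    with_loops = [
        [adj[i][j] + (1.0 if i == j else 0.0) for j in range(n)] for i in range(n)
    ]
    return [[v / sum(row) for v in row] for row in with_loops]


def graph_step(a_hat, x):
    """Spatial step: each node becomes a weighted average of itself and its neighbours."""
    n = len(x)
    return [sum(a_hat[i][j] * x[j] for j in range(n)) for i in range(n)]


def temporal_step(series, kernel):
    """Temporal step: slide a 1D kernel along the time axis, separately per node."""
    k = len(kernel)
    n_nodes = len(series[0])
    return [
        [sum(kernel[d] * series[t + d][i] for d in range(k)) for i in range(n_nodes)]
        for t in range(len(series) - k + 1)
    ]


def st_block(adj, series, kernel):
    """One spatial pass per time step, followed by one temporal pass."""
    a_hat = normalize_adjacency(adj)
    mixed = [graph_step(a_hat, x) for x in series]
    return temporal_step(mixed, kernel)


# Two hypothetical nodes (say, cement and water) observed at three curing ages.
adjacency = [[0.0, 1.0], [1.0, 0.0]]
snapshots = [[1.0, 3.0], [2.0, 4.0], [3.0, 5.0]]
print(st_block(adjacency, snapshots, kernel=[0.5, 0.5]))
# [[2.5, 2.5], [3.5, 3.5]]
```

A trained network would replace the hand-set weights with learned ones and stack several such blocks before a final layer that outputs the predicted strength.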

Letting a “human‑like” optimizer tune the AI

Even powerful neural networks can underperform if their internal settings—such as learning rate, number of layers, or filter sizes—are chosen poorly. Instead of hand‑tuning these, the authors use a new optimization method called the iHow Optimization Algorithm. Inspired by how people learn, it alternates between broad exploration of many possible designs and focused refinement of the most promising ones, while keeping a kind of internal memory of what has worked so far. This optimizer searches the space of network designs and training settings for the graph‑based model, aiming to minimize prediction errors while avoiding overfitting. The same family of optimization ideas is also used for feature selection, trimming the input list down to the most informative quantities and keeping the model compact and interpretable.
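
The explore-then-refine pattern described above can be illustrated with a short Python sketch. This is not the iHow Optimization Algorithm, whose update rules are not given here. It is a generic random search that sometimes samples a fresh candidate (exploration), otherwise perturbs the best candidate found so far (refinement), and remembers the best result. The function name, the parameters, and the toy objective are all hypothetical.

```python
import random


def explore_refine_search(objective, bounds, iters=200, explore_prob=0.3,
                          step=0.1, seed=0):
    """Minimize `objective` over box `bounds` with a simple explore/refine loop."""
    rng = random.Random(seed)

    def sample():
        # Exploration: draw a fresh candidate uniformly from the whole box.
        return [rng.uniform(low, high) for low, high in bounds]

    best = sample()
    best_score = objective(best)  # "memory" of the best design seen so far
    for _ in range(iters):
        if rng.random() < explore_prob:
            candidate = sample()
        else:
            # Refinement: small Gaussian nudge around the best-so-far, clipped to bounds.
            candidate = [
                min(high, max(low, x + rng.gauss(0.0, step * (high - low))))
                for x, (low, high) in zip(best, bounds)
            ]
        score = objective(candidate)
        if score < best_score:
            best, best_score = candidate, score
    return best, best_score


def toy_validation_error(params):
    """Stand-in for a validation error; a real run would train and score a model."""
    log_lr, n_layers = params
    return (log_lr + 3.0) ** 2 + (n_layers - 4.0) ** 2


# Hypothetical search space: log10(learning rate) and a (continuous) layer count.
space = [(-5.0, -1.0), (1.0, 8.0)]
best_params, best_error = explore_refine_search(toy_validation_error, space)
print(best_params, best_error)
```

In a real tuning run each call to the objective would train the network and return its validation error, so the number of iterations is limited by training cost rather than by the search loop itself.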

How well does it work compared with other AI tools?

The team runs extensive comparisons against a wide range of alternatives: standard deep networks, vision‑style transformers, variational autoencoders, physics‑informed networks, and several different metaheuristic optimizers such as particle swarm and genetic algorithms. They also test four distinct preprocessing pipelines driven by different language models, showing that better cleaning and feature crafting noticeably improve downstream accuracy. Across all these tests, the combination of iHow optimization with the spatio‑temporal graph network provides the lowest prediction errors and the highest agreement with measured strengths. Statistical checks, including analysis of variance and non‑parametric rank tests, confirm that these improvements are unlikely to be due to chance.
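
For readers curious about what an analysis-of-variance check computes, the following Python sketch calculates the one-way ANOVA F statistic by hand. The numbers are synthetic and chosen for easy arithmetic; they are not results from the study, and the grouping (one list of error scores per model) is an assumption about how such a comparison might be laid out. The sketch does not cover the non-parametric rank tests.

```python
def one_way_anova_f(groups):
    """Return the F statistic: between-group variance over within-group variance."""
    n_total = sum(len(g) for g in groups)
    grand_mean = sum(sum(g) for g in groups) / n_total
    means = [sum(g) / len(g) for g in groups]

    # Variation of the group means around the grand mean, weighted by group size.
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, means))
    # Variation of individual scores around their own group mean.
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, means) for x in g)

    df_between = len(groups) - 1
    df_within = n_total - len(groups)
    return (ss_between / df_between) / (ss_within / df_within)


# Synthetic error scores for three hypothetical models over three runs each.
errors_by_model = [[1.0, 2.0, 3.0], [2.0, 3.0, 4.0], [5.0, 6.0, 7.0]]
print(one_way_anova_f(errors_by_model))  # approximately 13.0
```

A large F value means the models differ from one another by more than their run-to-run scatter. Turning F into a p-value needs the F distribution, which a statistics library such as SciPy (`scipy.stats.f_oneway`) provides.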

What this means for future construction practice

In plain terms, the study shows that a carefully tuned, graph‑based AI model, fed with well‑cleaned data, predicted concrete strength more reliably than the alternative models it was compared against. It captures how ingredients and curing time interact, and it uses a “learning to learn” optimizer to fine‑tune itself. While the authors stress that the method still needs to be tested on more diverse, real‑world datasets before being embedded in building codes or plant control systems, it already points to a future in which engineers can explore greener mix designs on a computer, with confidence that the predicted strengths will closely match what is later seen in the lab or onsite.

Citation: Zaman, S., Eid, M.M., Mattar, E.A. et al. Predicting concrete compressive strength using optimized deep learning and large language models. Sci Rep 16, 11076 (2026). https://doi.org/10.1038/s41598-026-41072-7

Keywords: concrete strength prediction, deep learning in construction, graph neural networks, optimization algorithms, sustainable concrete materials