Clear Sky Science

Deep residual and hybrid CNN models for confidence-aware real-world waste classification for sustainable waste management

Why Smarter Sorting of Trash Matters

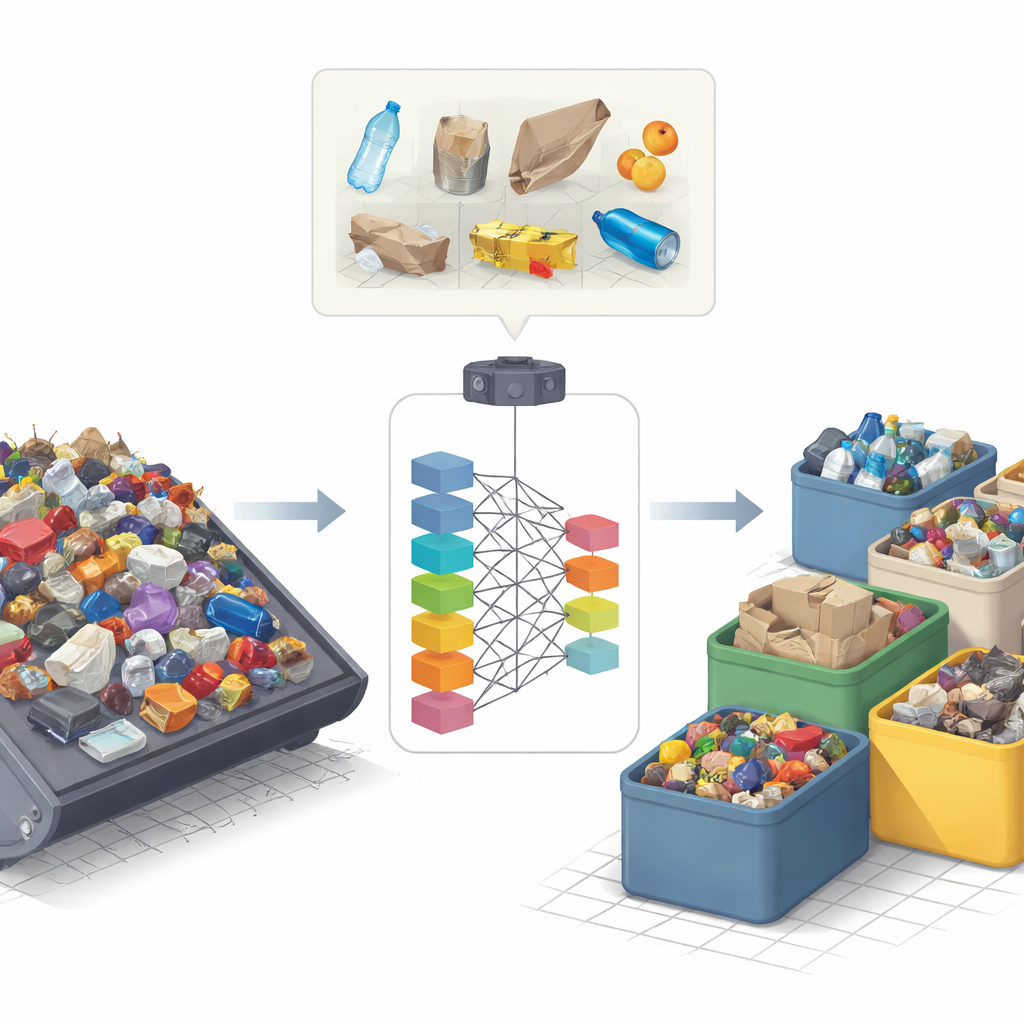

Modern life produces mountains of garbage, and much of it ends up in the wrong place. When recyclables are buried in landfills or food scraps are mixed with metals and plastics, we lose valuable resources and create pollution and greenhouse gases. This study explores how advanced image-based artificial intelligence can automatically recognize different kinds of waste in the messy conditions of a real landfill, with the goal of making recycling faster, safer, and far more reliable than manual sorting alone.

From Real Landfills, Not Clean Lab Photos

Most past research on automated waste sorting has relied on clean, carefully staged pictures: a single bottle centered on a plain background, or neatly arranged piles of paper and glass. In contrast, the authors work with the RealWaste dataset, a collection of thousands of color photos taken at an actual waste and recycling facility in Australia. Each image may contain distorted, overlapping, or dirty items lying on rough concrete: cardboard tubes, food scraps, broken glass, crumpled paper, bits of metal, plastic containers, and textile pieces. These images are grouped into nine broad categories that match how facilities actually sort waste at the first stage, such as paper, plastic, metal, food organics, and vegetation. This focus on authentic scenes makes the resulting system much more relevant to real-world operations.

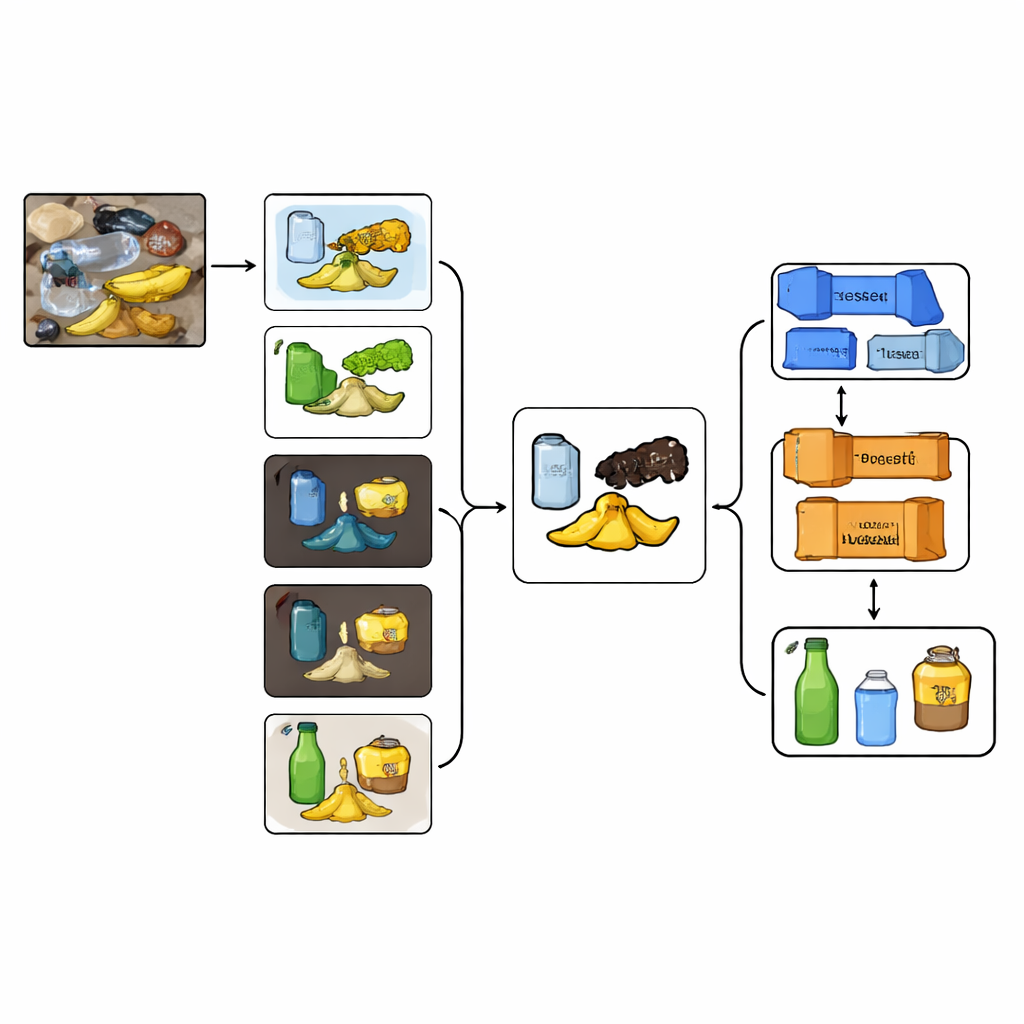

Cleaning Up the Picture Before Making a Decision

Because the raw images are so cluttered, the team first "cleans" them using a combination of image segmentation techniques. Instead of relying on a single method, they apply four separate segmentation methods to split foreground objects from the background. Each method is tuned to different visual cues, such as contrast, lighting, or color groupings. The results are merged so that only regions agreed upon by several methods are kept as likely waste items. A further step then teases apart objects that are touching or stacked. This produces a refined mask that highlights only the trash while muting the confusing textures of the floor and surroundings. The original image is then filtered through this mask, so the neural networks see mostly the waste itself rather than the noise around it.
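The "keep only what several methods agree on" step can be pictured as a per-pixel vote. This is a minimal sketch, assuming each of the four segmentation methods has already produced a binary mask of the same size. The names `fuse_masks` and `apply_mask`, the `min_votes` threshold, and the toy masks are hypothetical illustrations, not the authors' code. The extra step that separates touching objects is left out.

```python
from typing import List, Sequence

Mask = List[List[int]]          # 1 = likely waste (foreground), 0 = background
Image = List[List[List[int]]]   # height x width x color channels


def fuse_masks(masks: Sequence[Mask], min_votes: int) -> Mask:
    """Keep a pixel as foreground only if at least `min_votes` masks mark it.

    `min_votes` is a hypothetical parameter; the summary only says
    "agreed upon by several methods".
    """
    if not masks:
        raise ValueError("need at least one mask")
    height, width = len(masks[0]), len(masks[0][0])
    return [
        [1 if sum(m[r][c] for m in masks) >= min_votes else 0 for c in range(width)]
        for r in range(height)
    ]


def apply_mask(image: Image, mask: Mask) -> Image:
    """Black out background pixels so the classifier sees mostly the waste itself."""
    return [
        [list(pixel) if mask[r][c] else [0] * len(pixel) for c, pixel in enumerate(row)]
        for r, row in enumerate(image)
    ]


if __name__ == "__main__":
    # Four toy 2x3 masks standing in for the four (unnamed) segmentation methods.
    toy_masks = [
        [[1, 1, 0], [0, 1, 0]],
        [[1, 0, 0], [0, 1, 1]],
        [[1, 1, 0], [0, 1, 0]],
        [[0, 1, 0], [1, 1, 0]],
    ]
    print(fuse_masks(toy_masks, min_votes=3))  # [[1, 1, 0], [0, 1, 0]]
```

Raising `min_votes` makes the mask stricter. It drops more background clutter but also risks trimming faint or thin waste items.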

Deep Networks and Hybrid Models Learn to See Trash

On top of this preprocessing, the researchers fine-tune a wide range of modern image-recognition networks known as convolutional neural networks (CNNs). These include popular designs such as Inception, DenseNet, VGG, EfficientNet, MobileNet, and several versions of ResNet. Among them, a very deep model called ResNet101 stands out, reaching almost 99% accuracy and an equally high F1 score on the RealWaste data. The F1 score balances precision (how many items assigned to a class truly belong there) against recall (how many items of that class are actually found). To push further, the authors build "hybrid" models that merge the internal feature maps of two different networks. One example combines ResNet101’s strong handling of texture and structure with InceptionV3’s ability to look at objects at multiple scales. These hybrids prove especially helpful for tricky categories like textiles and miscellaneous trash, where items may be wrinkled, torn, or partially hidden.
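Merging two networks' features can be illustrated in a few lines. This minimal sketch assumes the fusion works by global-average-pooling each network's final feature maps, concatenating the two vectors, and feeding the result to a linear classifier. The summary does not say exactly how the maps are merged, so the pooling-plus-concatenation choice, the function names, and the toy numbers are all hypothetical.

```python
from typing import List, Sequence

FeatureMap = List[List[List[float]]]  # channels x height x width


def global_avg_pool(fmap: FeatureMap) -> List[float]:
    """Average each channel over its spatial grid, giving one number per channel."""
    return [
        sum(sum(row) for row in channel) / (len(channel) * len(channel[0]))
        for channel in fmap
    ]


def fuse_features(fmap_a: FeatureMap, fmap_b: FeatureMap) -> List[float]:
    """Concatenate pooled descriptors from two backbones (e.g. ResNet101 and InceptionV3).

    Pooling first means the two maps may have different spatial sizes.
    """
    return global_avg_pool(fmap_a) + global_avg_pool(fmap_b)


def linear_logits(
    features: Sequence[float],
    weights: Sequence[Sequence[float]],  # one weight row per waste class
    bias: Sequence[float],
) -> List[float]:
    """Score each class with a single linear layer on the fused feature vector."""
    if any(len(row) != len(features) for row in weights):
        raise ValueError("each weight row must match the fused feature length")
    return [sum(w * x for w, x in zip(row, features)) + b for row, b in zip(weights, bias)]


if __name__ == "__main__":
    resnet_like = [[[1.0, 3.0], [5.0, 7.0]]]   # toy: 1 channel on a 2x2 grid
    inception_like = [[[2.0]], [[6.0]]]        # toy: 2 channels on a 1x1 grid
    print(fuse_features(resnet_like, inception_like))  # [4.0, 2.0, 6.0]
```

Pooling before concatenating avoids a practical mismatch. At their usual input sizes, ResNet101's last stage produces a 7×7 grid and InceptionV3's an 8×8 grid (both with 2048 channels), so the raw maps cannot be stacked directly without resizing.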

Checking Not Just What the Model Predicts, but How Sure It Is

Beyond raw accuracy, the study asks a crucial question for any system that might run in a factory or city sorting center: how confident is the model about each decision? For every prediction, the network produces a confidence score between 0 and 1, indicating how strongly it believes an item belongs to a given class. The authors analyze the spread of these scores across thousands of test images. For visually distinct categories such as vegetation, plastic containers, and food organics, both the best single model and the best hybrid model usually predict with very high confidence, often above 0.95. Categories that are easier to confuse with one another show a broader spread of scores, signaling where extra human checks or better training data might be needed. The authors also show that adding the segmentation step before classification measurably improves all key performance measures, from accuracy to F1 score.
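In CNN classifiers, a confidence score like this is usually the largest softmax probability over the class scores. This sketch assumes that is the case here. It also uses a hypothetical review threshold of 0.95. That figure echoes the typical confidence reported for easy classes and is not a cut-off the authors prescribe. `predict_with_confidence`, `confidence_spread`, and the example class names and scores are illustrative only.

```python
import math
import statistics
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple


def softmax(logits: Sequence[float]) -> List[float]:
    """Turn raw class scores into probabilities that sum to 1."""
    m = max(logits)  # subtract the max so math.exp cannot overflow
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def predict_with_confidence(
    logits: Sequence[float],
    class_names: Sequence[str],
    review_threshold: float = 0.95,  # hypothetical cut-off, not from the paper
) -> Tuple[str, float, bool]:
    """Return (label, confidence, needs_human_review) for one image."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    confidence = probs[best]
    return class_names[best], confidence, confidence < review_threshold


def confidence_spread(
    predictions: Sequence[Tuple[str, float]],
) -> Dict[str, Tuple[float, float, float]]:
    """Per predicted class, report (min, median, max) confidence.

    A wide range flags classes where the model is often unsure.
    """
    by_class: Dict[str, List[float]] = defaultdict(list)
    for label, conf in predictions:
        by_class[label].append(conf)
    return {
        label: (min(cs), statistics.median(cs), max(cs))
        for label, cs in by_class.items()
    }
```

Lowering `review_threshold` sends fewer items to a human checker but lets more uncertain calls through automatically. Where to set it is an operational trade-off, not something the model decides.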

Toward More Reliable and Sustainable Waste Systems

In simple terms, the paper demonstrates that a carefully designed pipeline of image cleanup, deep learning, and hybrid models can recognize real-world trash with remarkable reliability, even when items are dirty, overlapping, or oddly shaped. ResNet101 emerges as a powerful backbone, while hybrid models offer added strength for the hardest-to-spot materials. By attaching a meaningful confidence score to each decision, the system not only sorts waste but also signals when it might be unsure, paving the way for safer automation. Further work is still needed to shrink the models for small devices and to test them in full-scale, real-time facilities. Even so, this research lays a strong foundation for intelligent waste sorting that can help cities recycle more, send less to landfills, and reduce the environmental burden of our everyday trash.

Citation: Kumar, Y., Bhardwaj, P., Malhotra, S. et al. Deep residual and hybrid CNN models for confidence-aware real-world waste classification for sustainable waste management. Sci Rep 16, 10424 (2026). https://doi.org/10.1038/s41598-026-41001-8

Keywords: waste classification, deep learning, computer vision, recycling systems, sustainable waste management