Cattle lameness detection using depth image and deep learning

Why sore feet in cows matter to all of us

Cows that walk in pain may sound like a niche farm problem, but lameness in dairy herds quietly drives up the cost of milk, shortens animals’ lives, and raises serious welfare concerns. Traditionally, spotting a limping cow has relied on a keen human eye and a lot of time in the barn—something many busy farms cannot provide every day. This paper describes a camera‑based system that watches cows automatically as they leave the milking parlor, using depth images and artificial intelligence to flag early signs of trouble around the clock.

From crowded barns to constant gentle watching

Lameness affects roughly one in five dairy cows worldwide and can reach far higher levels in some herds. It cuts milk yield, harms fertility, and can force farmers to cull animals early, costing hundreds of dollars per cow each year. Yet the science of lameness is far ahead of what actually happens on most farms: farmers often underestimate how many cows are sore, and expert scoring is time‑consuming and subjective. Earlier generations of technology tried to help by putting force plates in walkways or attaching motion sensors to animals, but these solutions can be expensive, intrusive, and hard to scale. The authors instead turn to “precision livestock farming” with a non‑contact, overhead depth camera that sees the three‑dimensional shape of each cow as it walks naturally along a lane.

How a ceiling‑mounted eye reads a cow’s back

On two commercial dairy farms in Japan, a single time‑of‑flight depth camera was mounted above the narrow passage cows use when returning from milking. As each animal walks underneath, the camera records a top‑down depth image: essentially a height map showing the contour of the cow’s back. The system first isolates each cow from the background using an “instance segmentation” model, which outlines every animal in the frame separately. After testing several options, the researchers found that a modern YOLO‑based model combined very high accuracy with fast processing, correctly detecting and outlining cows in more than 99% of frames while handling tens of images per second, which is fast enough for real‑time use in a busy barn.
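To make the "height map" idea concrete, here is a minimal sketch of how a top-down depth frame could be turned into heights above the floor. It is an illustration, not the authors' code: the function name `depth_to_height`, the millimetre units, the known camera mounting height, and the convention that a depth of 0 means "no valid reading" are all assumptions.

```python
import numpy as np

def depth_to_height(depth_mm, camera_height_mm):
    """Convert a top-down depth frame (distance from camera) to height above the floor.

    Both the units (mm) and the known mounting height are assumptions of this sketch.
    """
    depth = np.asarray(depth_mm, dtype=np.float32)
    # A pixel close to the camera is high above the floor, so subtract from the mount height.
    height = camera_height_mm - depth
    # Assumed convention: the sensor reports 0 where it got no valid return.
    height[depth <= 0] = np.nan
    # Sensor noise can push floor pixels slightly below zero; clip them to the floor.
    return np.clip(height, 0.0, None)

# Tiny 2x2 "frame": floor, a point on a cow's back, a missing pixel, and noisy floor.
frame = np.array([[3000, 1500],
                  [0,    3010]])
heights = depth_to_height(frame, camera_height_mm=3000)
```

In this toy frame the second pixel sits 1500 mm above the floor, the missing pixel becomes NaN, and the noisy floor pixel is clipped to 0.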

Following each cow and turning posture into a pain signal

Detecting cows is only the beginning; the system also has to keep track of who is who as multiple animals flow through the lane. To do this, the authors designed a series of custom multi‑object tracking algorithms that progressively improved how well the system maintains each cow’s identity over time. The final version uses not only overlap between boxes but also how long a cow has been tracked and whether it is moving left‑to‑right or right‑to‑left. This simple but clever use of direction almost eliminated identity mix‑ups, reaching about 99.9% tracking accuracy on unseen days and on both farms.
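The three cues named above (box overlap, track age, and walking direction) can be combined in many ways. The sketch below shows one simple, hypothetical combination: older tracks pick first, overlap is measured as intersection-over-union (IoU), and a match is rejected if it would reverse a cow's established direction. The `Track` class, `update_tracks`, the greedy matching, and the 0.3 overlap threshold are illustrative assumptions, not the authors' algorithm.

```python
from dataclasses import dataclass

@dataclass
class Track:
    track_id: int
    box: tuple          # (x1, y1, x2, y2) in pixels
    age: int = 1        # number of frames this cow has been followed
    direction: int = 0  # +1 left-to-right, -1 right-to-left, 0 not yet known

def iou(a, b):
    """Intersection-over-union of two axis-aligned boxes."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0

def center_x(box):
    return (box[0] + box[2]) / 2.0

def update_tracks(tracks, detections, next_id, iou_min=0.3):
    """Greedy one-frame update (hypothetical scheme); returns (tracks, next_id).

    Unmatched tracks are simply kept; a real system would also retire stale ones.
    """
    unmatched = list(detections)
    # Longer-lived tracks are trusted more, so they choose their detection first.
    for t in sorted(tracks, key=lambda tr: -tr.age):
        best, best_iou = None, iou_min
        for d in unmatched:
            dx = center_x(d) - center_x(t.box)
            step = (dx > 0) - (dx < 0)
            # Direction gate: skip a detection that would make this cow walk backwards.
            if t.direction != 0 and step != 0 and step != t.direction:
                continue
            score = iou(t.box, d)
            if score >= best_iou:
                best, best_iou = d, score
        if best is not None:
            dx = center_x(best) - center_x(t.box)
            if dx != 0:
                t.direction = 1 if dx > 0 else -1
            t.box = best
            t.age += 1
            unmatched.remove(best)
    # Any detection left over starts a new track.
    for d in unmatched:
        tracks.append(Track(next_id, d))
        next_id += 1
    return tracks, next_id

# Two cows passing each other in opposite directions with overlapping boxes.
a = Track(0, (100, 0, 200, 50), age=5, direction=1)
b = Track(1, (120, 0, 220, 50), age=5, direction=-1)
tracks, next_id = update_tracks([a, b], [(90, 0, 190, 50), (130, 0, 230, 50)], next_id=2)
```

In this example, overlap alone would pair track `a` with the box at x = 90 to 190, because that box overlaps it most. That box belongs to the cow walking the other way, and the direction gate rules it out, so each track keeps its own animal.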

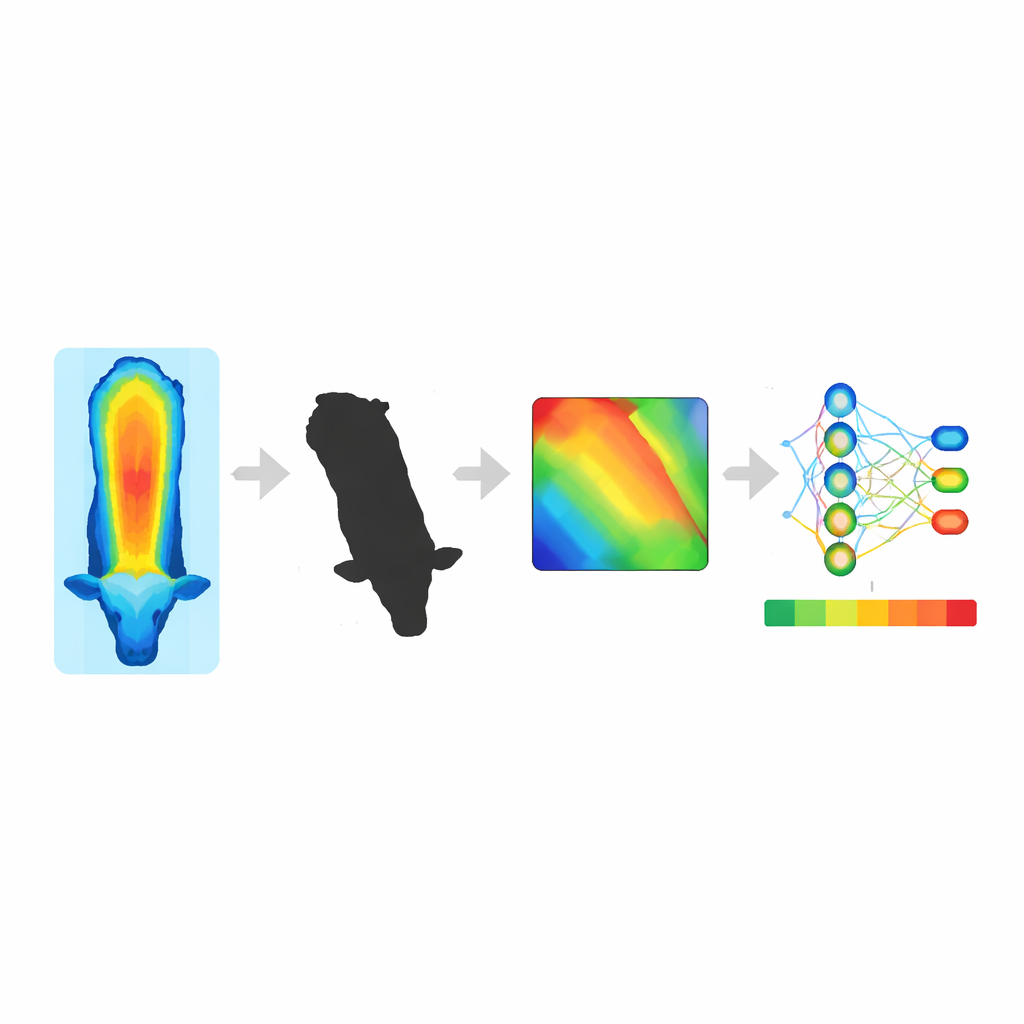

Teaching the computer what “stiff and sore” looks like

The heart of lameness detection lies in the shape and motion of the cow’s back. Pain in the feet or legs often leads to a more arched or uneven spine as the animal tries to relieve pressure. Instead of hand‑picking a few geometric measurements, the team converts the depth image of each cow into a normalized height map of the back, then feeds short sequences of these maps into a deep‑learning model. A modern image network (EfficientNet) learns spatial patterns in each frame, while a Long Short‑Term Memory (LSTM) network captures how posture evolves over several steps. Using expert labels on a four‑point scale (from sound to severely lame), the best configuration, EfficientNet‑B7 plus LSTM on five‑frame sequences, classified lameness scores on unseen cows with accuracy and F1‑score both at about 96%, despite real‑world challenges like class imbalance and varied walking speeds.
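The preprocessing described here can be sketched in a few lines. The article does not say how the height map is normalized, so the min-max scaling below is an assumption. So are the helper names `normalize_back` and `sliding_sequences` and the use of overlapping clips with a stride of one frame. Only the five-frame clip length comes from the study.

```python
import numpy as np

def normalize_back(height, mask):
    """Scale heights inside the cow mask to [0, 1]; background pixels become 0.

    Min-max scaling is an assumed choice; the source only says "normalized".
    """
    height = np.asarray(height, dtype=np.float32)
    mask = np.asarray(mask, dtype=bool)
    out = np.zeros_like(height)
    if not mask.any():
        return out
    vals = height[mask]
    lo, hi = float(vals.min()), float(vals.max())
    if hi > lo:
        out[mask] = (vals - lo) / (hi - lo)
    return out

def sliding_sequences(frames, length=5, stride=1):
    """Stack consecutive frames into overlapping clips of shape (length, H, W)."""
    frames = [np.asarray(f) for f in frames]
    return [np.stack(frames[i:i + length])
            for i in range(0, len(frames) - length + 1, stride)]

# Toy 2x2 height map (mm) with a mask covering three "cow" pixels.
height = np.array([[0, 1200],
                   [1300, 1400]])
mask = np.array([[0, 1],
                 [1, 1]])
norm = normalize_back(height, mask)

# Seven tracked frames of one cow yield three overlapping five-frame clips.
clips = sliding_sequences([norm] * 7)
```

Each clip would then go to the sequence model, with the image network reading each frame and the LSTM reading the frames in order. Scaling every cow to the same range keeps the model focused on the shape of the back, not on how tall the animal is.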

What this means for cows, farmers, and your glass of milk

Put together, the depth camera, detection, tracking, and gait‑scoring model form an end‑to‑end, 24/7 monitoring system that can watch every cow that passes beneath it, without halters, tags, or special flooring. The authors argue that such an objective and continuous tool can help bridge the long‑standing gap between what researchers know about lameness and what is routinely practiced on farms. By flagging at‑risk cows earlier and more consistently than the human eye alone, this technology could reduce pain, cut treatment and culling costs, and support more humane, sustainable milk production. While wider testing on more farms is still needed, the study shows that a single overhead depth camera, paired with carefully designed deep learning, can turn a difficult manual chore into an automated safeguard for animal welfare.

Citation: Tun, S.C., Tin, P., Aikawa, M. et al. Cattle lameness detection using depth image and deep learning. Sci Rep 16, 13456 (2026). https://doi.org/10.1038/s41598-026-40780-4

Keywords: cattle lameness, depth imaging, precision livestock farming, computer vision, animal welfare monitoring