Clear Sky Science

An adaptive multi-scale spatio-temporal graph network for robust MOOC dropout prediction

Why online course dropout matters

Massive Open Online Courses, or MOOCs, promise high-quality education to anyone with an internet connection. Yet most people who sign up never finish: in many courses, more than four out of five learners quietly disappear before the end. This paper tackles the practical question behind those numbers: can we reliably identify students at risk of dropping out early enough for teachers or platforms to intervene, especially in messy, real-world courses where each learner studies on their own schedule?

Learning from a web of learners and lessons

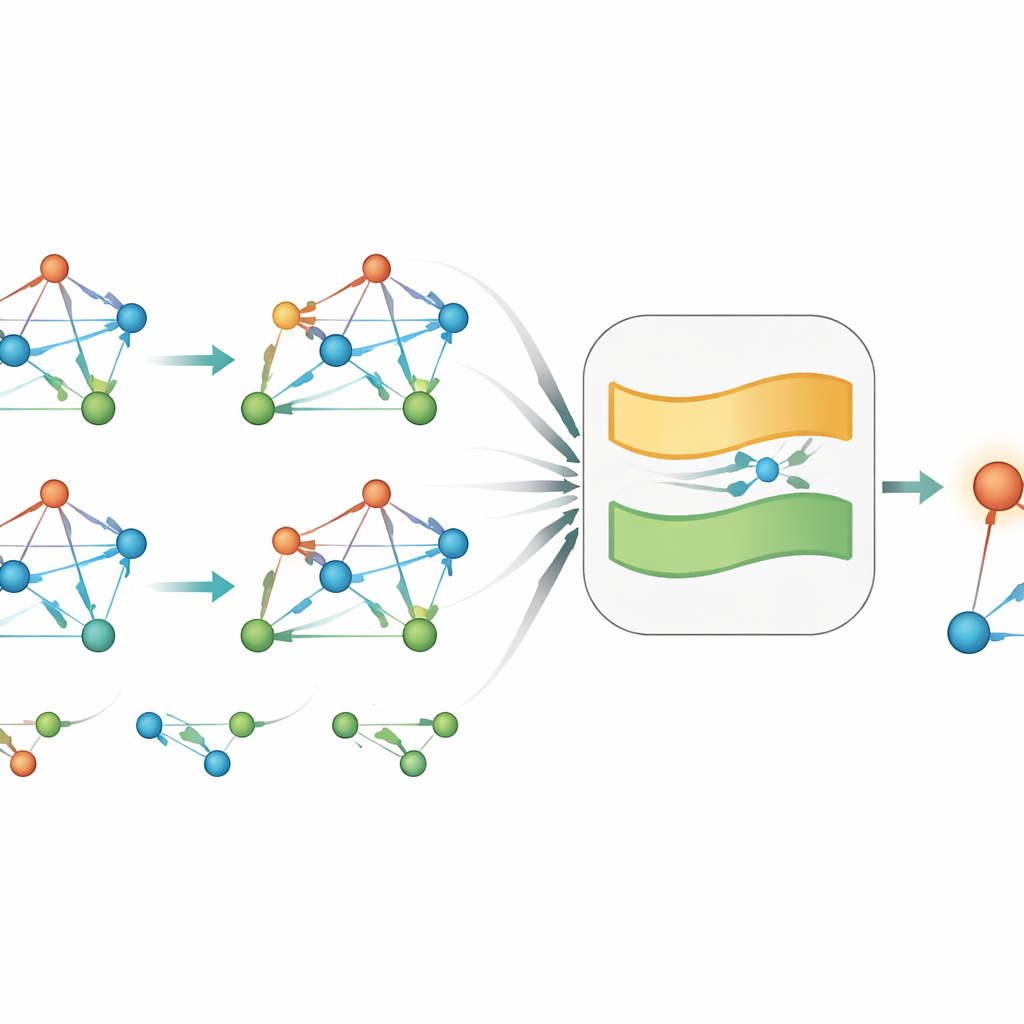

Instead of treating each student as an isolated row in a spreadsheet, the authors view a MOOC as a living network. The network contains nodes for students, course units, and whole courses. These nodes are linked by learning activity, such as watching videos, attempting quizzes, and taking part in forums, and by enrolment, since many students take several courses. The structure changes over time as learners engage with, or drift away from, different parts of the course. By modeling this evolving web, the system can capture not only how much a student does, but also how their behavior relates to specific content, peer activity, and course design.
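One way to picture a single time slice of this network is as a typed, weighted adjacency map built from an activity log. The sketch below is a minimal illustration, not the paper's construction: the event format, the node types, and the edge weights (interaction counts for student-unit edges, 1.0 for unit-course membership and student-course enrolment) are assumptions, and `build_snapshot` and `EVENTS` are hypothetical names.

```python
from collections import defaultdict

# Hypothetical activity log: (time_step, student, course, unit, action).
# The schema and action names are illustrative assumptions.
EVENTS = [
    (0, "s1", "c1", "c1/u1", "video"),
    (0, "s1", "c1", "c1/u1", "quiz"),
    (0, "s2", "c1", "c1/u1", "video"),
    (1, "s1", "c1", "c1/u2", "forum"),
    (1, "s2", "c2", "c2/u1", "video"),
]


def build_snapshot(events, t):
    """Return {node: {neighbour: weight}} for the interaction graph at step t.

    Nodes are typed tuples such as ("student", "s1"). Student-unit edges are
    weighted by how many interactions occurred; unit-course (membership) and
    student-course (enrolment) edges get weight 1.0.
    """
    counts = defaultdict(int)   # (student, unit) -> number of interactions
    memberships = set()         # structural edges: unit-course, student-course
    for step, student, course, unit, _action in events:
        if step != t:
            continue
        s, u, c = ("student", student), ("unit", unit), ("course", course)
        counts[(s, u)] += 1
        memberships.add((u, c))
        memberships.add((s, c))

    adj = defaultdict(dict)
    for (a, b), n in counts.items():
        adj[a][b] = adj[b][a] = float(n)   # undirected: store both directions
    for a, b in memberships:
        adj[a][b] = adj[b][a] = 1.0
    return {node: dict(nbrs) for node, nbrs in adj.items()}


snapshot_0 = build_snapshot(EVENTS, 0)
# Student s1 interacted twice with unit c1/u1 at step 0.
print(snapshot_0[("student", "s1")][("unit", "c1/u1")])  # 2.0
```

Peers are not linked directly here: two students become related through the units and courses they share, which is the kind of two-hop relationship a graph model can exploit.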

Short bursts versus long-term habits

Many existing prediction systems rely on step-by-step memory: they update their view of a student’s state using only the most recent time slice of activity. The authors argue that this is too simple for real learning behavior, which often reflects a tug-of-war between sudden shocks and long-term trends. A student might briefly surge in activity while trying to catch up, or temporarily slow down during a busy week, without that blip defining their overall path. The key is to tell when to trust the latest change and when to rely on deeper history.
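To make the contrast concrete, here is the generic first-order update shared by step-by-step models, next to a two-scale variant that also conditions on an older state. The symbols are illustrative and not taken from the paper: $x_t$ is the activity observed at step $t$, $h_t$ the learner's hidden state, $f$ a learned update function, and $k > 1$ a hypothetical look-back distance.

```latex
% First-order (single-step) memory: only the latest state feeds the update
h_t = f\left(x_t,\; h_{t-1}\right)

% Two-scale memory: a recent state and an older one are both available
h_t = f\left(x_t,\; h_{t-1},\; h_{t-k}\right), \qquad k > 1
```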

A network that remembers on two time scales

To address this, the paper introduces MST-GCN, a “multi-scale” graph network that looks at both recent behavior and slightly older patterns at the same time. At each step, the model builds a snapshot of the interaction network and uses a specialized graph module to summarize the current learning context around every student: which materials they touched, which peers they resemble, and how challenging the course elements appear to be. Then a novel “adaptive gate” mixes two memories: one that captures immediate momentum and another that reflects more stable engagement from earlier. Crucially, how much weight each memory receives depends on the current context in the graph, not on a fixed rule.
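The step described above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: the adjacency normalisation is the standard symmetric one used in graph convolutional networks, the gate is an ordinary per-dimension sigmoid over the graph context and both memories, the "older" memory is taken to be a state from an earlier step (for example two steps back), and the names `gated_step`, `W_g`, `W_z`, `b_z`, and `W_h` are hypothetical.

```python
import numpy as np


def normalized_adjacency(adj):
    """Symmetric GCN normalisation: D^-1/2 (A + I) D^-1/2."""
    a_hat = adj + np.eye(adj.shape[0])            # add self-loops
    d_inv_sqrt = 1.0 / np.sqrt(a_hat.sum(axis=1))
    return a_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def gated_step(adj, x_t, h_recent, h_older, params):
    """One hypothetical gated graph step.

    adj      : (n, n) adjacency of the snapshot at step t
    x_t      : (n, f) node features at step t
    h_recent : (n, d) short-term memory (e.g. the state from step t-1)
    h_older  : (n, d) longer-term memory (e.g. a state from an earlier step)
    """
    # 1) Graph convolution: each node's context mixes its own and its neighbours' features.
    context = np.tanh(normalized_adjacency(adj) @ x_t @ params["W_g"])
    # 2) Context-dependent gate in (0, 1), computed per node and per hidden unit.
    stacked = np.concatenate([context, h_recent, h_older], axis=1)
    gate = sigmoid(stacked @ params["W_z"] + params["b_z"])
    # 3) Blend the two memories, then fold in the current graph context.
    memory = gate * h_recent + (1.0 - gate) * h_older
    h_new = np.tanh(context + memory @ params["W_h"])
    return h_new, gate


rng = np.random.default_rng(0)
n_nodes, n_feats, hidden = 4, 3, 5
params = {
    "W_g": rng.normal(scale=0.1, size=(n_feats, hidden)),
    "W_z": rng.normal(scale=0.1, size=(3 * hidden, hidden)),
    "b_z": np.zeros(hidden),
    "W_h": rng.normal(scale=0.1, size=(hidden, hidden)),
}
# A tiny 4-node ring standing in for one interaction snapshot.
adj = np.array([[0, 1, 0, 1], [1, 0, 1, 0], [0, 1, 0, 1], [1, 0, 1, 0]], dtype=float)
x_t = rng.normal(size=(n_nodes, n_feats))
h_recent = rng.normal(size=(n_nodes, hidden))
h_older = rng.normal(size=(n_nodes, hidden))
h_new, gate = gated_step(adj, x_t, h_recent, h_older, params)
```

Because the gate sees the graph context as well as the two memories, two students with identical recent histories can receive different mixing weights if the activity around them in the course differs, which is the sense in which the weighting is context-dependent rather than fixed.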

Putting the model to the test

The authors test MST-GCN on two large real-world datasets. One, from the KDD Cup 2015 competition, contains only tightly scheduled, instructor-paced courses. The other, from the XuetangX platform, includes hundreds of courses, both scheduled and self-paced, in which students can start and progress at irregular times. In both settings, dropouts vastly outnumber completers, making prediction particularly challenging. Compared with classic machine-learning methods, sequence models such as LSTMs, transformer-based approaches, and earlier graph-based systems, MST-GCN consistently scores higher at distinguishing likely dropouts from likely completers. The gains are modest but clear in regular, weekly courses and more pronounced in flexible, self-paced ones, where behavior is noisier and timing less predictable.
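Class imbalance is why raw accuracy can mislead here. The summary does not say which metrics the authors report; the sketch below uses ROC AUC only as a common example of a score that measures how well a model ranks dropouts above completers. The 8-to-2 split and all scores are made-up numbers chosen to echo the "four out of five" dropout rate, and `accuracy` and `roc_auc` are hypothetical helper names.

```python
def accuracy(labels, preds):
    """Fraction of predictions that match the labels."""
    return sum(y == p for y, p in zip(labels, preds)) / len(labels)


def roc_auc(labels, scores):
    """Area under the ROC curve via its pairwise definition: the probability that a
    randomly chosen dropout (label 1) scores higher than a randomly chosen
    completer (label 0), counting ties as one half. O(P * N), fine for a toy."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum(1.0 if p > q else 0.5 if p == q else 0.0 for p in pos for q in neg)
    return wins / (len(pos) * len(neg))


# Made-up cohort: 8 dropouts (1) and 2 completers (0).
labels = [1] * 8 + [0] * 2

# A useless model that flags everyone as a dropout with the same score.
always_dropout = [1] * 10
constant_scores = [0.9] * 10

# A model whose scores rank almost every dropout above every completer.
ranked_scores = [0.9, 0.8, 0.85, 0.7, 0.95, 0.6, 0.75, 0.65, 0.3, 0.62]

print(accuracy(labels, always_dropout))   # 0.8
print(roc_auc(labels, constant_scores))   # 0.5
print(roc_auc(labels, ranked_scores))     # 0.9375
```

The trivial model looks respectable on accuracy (80%) yet cannot tell learners apart at all (AUC 0.5), which is why ranking-based scores are a more informative yardstick when dropouts dominate.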

Peeking inside the black box

Beyond raw accuracy, the authors examine how the adaptive gate behaves. When a student shows a sharp, sustained rise in activity, the gate leans toward recent behavior, effectively "listening" to the new momentum. When current signals are weak or erratic, the gate falls back on long-term patterns, avoiding overreaction to a one-off spike or dip. Visualizations of the model's learned student representations show dropouts and completers forming separate clusters, suggesting that the network has learned meaningful notions of engagement. Case studies further illustrate that the model resists being fooled by last-minute cramming while still rewarding genuine, sustained improvement.
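The gate behavior described above can be mimicked with a hand-written rule, purely to make the intuition concrete. This is not the learned gate from the paper: `toy_gate` and `blended_engagement` are hypothetical, the rule (trust the recent window in proportion to how consistently it rises, discounted by how volatile it is) is an assumption chosen for illustration, and the two series are invented weekly activity counts.

```python
from statistics import mean, pstdev


def toy_gate(activity, window=3):
    """Hand-made stand-in for a learned gate, returning a weight in [0, 1].

    Assumes non-negative activity counts. The weight grows with the share of
    rising steps in the recent window and shrinks with the window's volatility
    (coefficient of variation).
    """
    if window < 2 or len(activity) < window:
        raise ValueError("need a window of at least 2 and enough history")
    recent = activity[-window:]
    diffs = [b - a for a, b in zip(recent, recent[1:])]
    steady_rise = sum(d > 0 for d in diffs) / len(diffs)
    volatility = pstdev(recent) / (mean(recent) + 1e-9)
    return steady_rise / (1.0 + volatility)


def blended_engagement(activity, window=3):
    """Mix short-term and long-term average activity using the toy gate."""
    w = toy_gate(activity, window)
    short_term = mean(activity[-window:])
    history = activity[:-window]
    long_term = mean(history) if history else short_term
    return w * short_term + (1.0 - w) * long_term, w


sustained = [1, 1, 1, 2, 3, 4]   # steady catch-up over several weeks
cramming = [1, 1, 1, 0, 0, 6]    # quiet, then a one-off burst

for name, series in [("sustained", sustained), ("cramming", cramming)]:
    score, w = blended_engagement(series)
    print(f"{name}: gate={w:.2f}, blended engagement={score:.2f}")
```

The steady climb earns a high gate, so the blend leans on recent activity; the single late spike earns a low gate, so the blend stays close to the learner's longer history. In MST-GCN, by contrast, this weighting depends on the current graph context rather than on a fixed rule like this one.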

What this means for online learners and educators

For a general audience, the takeaway is that dropout is not just about how much a learner does right now, but about how current behavior fits within a longer story. By combining a rich view of the course network with a flexible notion of memory, MST-GCN offers a more stable and interpretable way to forecast who is likely to leave and who is on track to finish. While the model does not yet provide fine-grained explanations that pinpoint specific problem videos or assignments, its design moves online education closer to early warning systems that are both reliable and aligned with how real learners change over time.

Citation: Duan, Y., Chen, X. An adaptive multi-scale spatio-temporal graph network for robust MOOC dropout prediction. Sci Rep 16, 10966 (2026). https://doi.org/10.1038/s41598-026-40502-w

Keywords: MOOC dropout prediction, educational data mining, graph neural networks, student engagement, early warning systems